What Is LLMOps and Why It Matters

Riten Debnath

04 Apr, 2026

The world is currently fixated on the magic of Artificial Intelligence. We marvel at chatbots that write poetry, image generators that create art from thin air, and coding assistants that build software in seconds. But behind the curtain of every viral AI application lies a massive, complex, and often ignored city of digital machinery. If AI is the high-performance sports car, this hidden infrastructure is the refinery, the highway system, and the GPS combined. Without it, the AI Boom would be little more than a collection of impressive but unusable prototypes.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. Defining LLMOps: The Industrial Revolution of Artificial Intelligence

At its core, LLMOps (Large Language Model Operations) is the specialized practice of managing the entire life cycle of an AI model, from data collection and fine-tuning to deployment and daily maintenance. While traditional DevOps focused on keeping websites running, LLMOps is about keeping "intelligence" running without it degrading over time. It is the framework that allows a business to take a raw model like GPT-4 or Llama 3 and turn it into a reliable, predictable tool that performs a specific task for millions of users without failing or costing a fortune.

- Holistic Life Cycle Management: This involves overseeing the model from its initial design and training phase through to its final retirement, ensuring that every transition between development and production is documented and repeatable across different engineering teams.

- Operationalizing Intelligence: LLMOps creates the standardized "pipelines" that allow companies to automate the way they update their AI, meaning they can push out improvements every day rather than waiting months for a manual update by a data scientist.

- Cross-Functional Collaboration Infrastructure: It provides a common platform where software engineers, data scientists, and product managers can work together on the same AI project, using tools that translate complex math into actionable business metrics and user experiences.

- Scalability Frameworks: These are the protocols that allow an AI to grow from handling ten questions a day to ten million, ensuring that the underlying servers and databases can expand automatically to meet user demand without crashing or slowing down.

- Resource Allocation and Scheduling: High-end AI chips are scarce and expensive, so LLMOps includes the smart scheduling systems that decide which AI tasks get priority on the hardware, making sure that critical business functions are never stuck in a digital traffic jam.
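
To make the scheduling idea above concrete, here is a minimal sketch of a priority queue for AI jobs. It is only an illustration, not how any particular scheduler works: the `GpuJobQueue` class, the job names, and the priority numbers are all hypothetical.

```python
import heapq
import itertools


class GpuJobQueue:
    """Toy priority queue: lower priority number runs first, FIFO within a level."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker that preserves submission order

    def submit(self, name, priority):
        heapq.heappush(self._heap, (priority, next(self._counter), name))

    def next_job(self):
        """Return the name of the most urgent job, or None if the queue is empty."""
        if not self._heap:
            return None
        _, _, name = heapq.heappop(self._heap)
        return name


# Hypothetical workload: live customer traffic outranks background jobs.
queue = GpuJobQueue()
queue.submit("nightly-fine-tune", priority=5)
queue.submit("customer-chat-inference", priority=0)
queue.submit("batch-embedding-refresh", priority=5)

run_order = [queue.next_job() for _ in range(3)]
```

The customer-facing job jumps ahead of the two background jobs, which then run in the order they were submitted. A production scheduler would also need preemption, quotas, and awareness of how much memory each job needs.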

Why it matters to the AI Boom:

The AI boom is currently in a transition phase from "hype" to "utility." LLMOps is the only way to ensure that this transition is successful. Without these operational standards, AI remains a brittle science experiment that is too risky for a bank, a hospital, or a government to use at scale.

2. Model Fine-Tuning and Prompt Engineering Pipelines

A general AI model is like a brilliant student who has read every book in the world but has never worked a day in your specific office. LLMOps provides the infrastructure to "teach" the model your specific business rules through fine-tuning and advanced prompt management. This layer of the infrastructure ensures that the AI doesn't just give generic answers, but speaks in your brand's voice and understands your specific industry jargon.

- Automated Fine-Tuning Workflows: These systems automatically take a company’s latest documents and "re-train" small parts of the AI model on a regular basis, ensuring the AI’s knowledge is always up to date with the latest product launches or policy changes.

- Prompt Orchestration and Management: This involves storing and testing thousands of different instructions (prompts) in a central library, allowing developers to see which version of an instruction results in the most helpful and accurate answer for the end user.

- Hyperparameter Optimization: This is the technical process of tuning the "hidden settings" of an AI model, such as the learning rate used during fine-tuning or the sampling temperature used when generating answers, to find the right balance between creative writing and factual accuracy, which is essential for specialized tasks like legal drafting or medical reporting.

- Dataset Versioning for Training: Just as software has versions, AI data needs versions; this infrastructure tracks exactly which set of documents was used to train a model so that if the AI starts making mistakes, you can "go back in time" to find the bad data.

- Instruction Following Validation: This is a testing layer that checks if the AI is actually following the rules you gave it (like "Don't mention competitors") and flags any instances where the model ignores its instructions.
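
Two of the ideas above, a central prompt library and instruction-following validation, can be sketched in a few lines. This is a simplified illustration under stated assumptions: `PromptRegistry`, `violates_rules`, the prompt text, and the competitor name "AcmeRival" are all made up for the example, and plain substring matching is far cruder than what a real validation layer would use.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Stores every version of each named prompt so changes can be compared or reverted."""

    _versions: dict = field(default_factory=dict)

    def register(self, name, text):
        """Append a new version and return its 1-based version number."""
        self._versions.setdefault(name, []).append(text)
        return len(self._versions[name])

    def get(self, name, version=None):
        """Return a specific version, or the latest when version is None."""
        history = self._versions[name]
        return history[-1] if version is None else history[version - 1]


def violates_rules(response, banned_terms):
    """Return the banned terms that appear in a response (case-insensitive)."""
    lowered = response.lower()
    return [term for term in banned_terms if term.lower() in lowered]


# Hypothetical usage: two versions of one prompt, then a rule check on a reply.
registry = PromptRegistry()
v1 = registry.register("support_bot", "You are a helpful support agent.")
v2 = registry.register("support_bot", "You are a helpful support agent. Never mention competitors.")

flags = violates_rules("You could also try AcmeRival's plan.", ["AcmeRival"])
```

Because every version is kept, a team can replay the same test questions against version 1 and version 2 and compare the results before promoting the new prompt.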

Why it matters to the AI Boom:

Generic AI is becoming a commodity that everyone has. The real value in the AI boom lies in "Specialized AI." The infrastructure for fine-tuning and prompt management is what allows a company to take a standard model and turn it into a unique, competitive advantage that no one else can easily copy.

3. Real-Time Monitoring: Detecting Model Drift and Hallucinations

One of the biggest problems with AI is that it can be "confidently wrong," a phenomenon known as hallucination. Even worse, a model that works perfectly today might start giving bad answers next month because the world has changed. This is called "Model Drift." LLMOps infrastructure includes the constant, real-time monitoring systems that act as a 24/7 security guard for the AI's brain, catching errors before the user ever sees them.

- Semantic Drift Detection: This tool monitors the "meaning" of the AI's answers over time and alerts engineers if the model's logic starts to wander away from its original purpose or becomes less helpful as the data it was trained on gets older.

- Hallucination Scoring Systems: Modern infrastructure uses mathematical checks to give every AI answer a "certainty score," allowing the system to automatically say "I don't know" instead of making up a lie when the score is too low.

- Latency and Throughput Analytics: This involves tracking exactly how many milliseconds it takes for the AI to respond to a user, ensuring that the infrastructure is fast enough to provide a "human-like" conversation experience without annoying delays.

- Cost-per-Query Monitoring: Every word an AI generates costs money in electricity and hardware wear; this infrastructure tracks these costs in real-time to ensure that a viral AI feature doesn't accidentally bankrupt the company.

- Sentiment and Tone Tracking: For customer-facing bots, this system monitors the "vibe" of the conversation, ensuring the AI stays polite and professional even when a human user becomes frustrated or uses aggressive language.
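
Here is a rough sketch of two of the checks above: a "certainty score" that lets the system say "I don't know," and a cost-per-query calculation. It assumes the serving layer exposes a log-probability for each generated token, which not every provider does. The function names, the 0.5 threshold, and the prices are hypothetical, and average token probability is only a crude proxy for whether an answer is actually true.

```python
import math


def certainty_score(token_logprobs):
    """Geometric-mean token probability: exp of the average log-probability."""
    if not token_logprobs:
        return 0.0
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def answer_or_abstain(answer, token_logprobs, threshold=0.5):
    """Return the answer only when its certainty score clears the threshold."""
    if certainty_score(token_logprobs) >= threshold:
        return answer
    return "I don't know."


def query_cost(prompt_tokens, completion_tokens, price_in_per_1k, price_out_per_1k):
    """Cost of one request given per-1,000-token prices (the prices are placeholders)."""
    return (prompt_tokens / 1000) * price_in_per_1k + (completion_tokens / 1000) * price_out_per_1k


# Hypothetical usage with made-up log-probabilities and prices.
confident = answer_or_abstain("Paris", [-0.05, -0.10])
unsure = answer_or_abstain("Atlantis", [-2.0, -3.0])
cost = query_cost(prompt_tokens=1000, completion_tokens=500,
                  price_in_per_1k=0.01, price_out_per_1k=0.03)
```

In practice the threshold would be tuned against answers that humans have already labeled as right or wrong, and the per-query costs would be summed per feature or per customer to spot runaway spending early.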

Why it matters to the AI Boom:

Trust is the hardest thing to build and the easiest thing to lose. Monitoring infrastructure is the "honesty insurance" for the AI boom. It provides the transparency needed for humans to stay in control of the machines, ensuring they remain helpful assistants rather than unpredictable liabilities.

4. The RAG Infrastructure: Connecting AI to Live Data

Large Language Models are usually "frozen" in time based on when they were trained. To make them useful for the real world, we use Retrieval-Augmented Generation (RAG). This is a massive part of the LLMOps ecosystem that connects the AI's "brain" to a live "library" of information. This infrastructure allows a chatbot to check today’s weather, your current bank balance, or a new legal ruling that happened five minutes ago.

- Vector Embedding Pipelines: This is the process of taking raw text from a company's database and turning it into "math" that the AI can understand and search through in a split second to find the most relevant facts for a user's question.

- Context Window Optimization: Since AI models can only "remember" a certain amount of text at once, this infrastructure intelligently chooses the most important pieces of information to feed into the AI so it has the best context to answer a query.

- Source Citation and Attribution: This system forces the AI to provide a "link" or a reference to exactly where it found a piece of information, allowing users to verify the facts and reducing the fear of the AI making things up.

- Multi-Modal Retrieval: This advanced infrastructure allows the AI to not only search through text but also "look" at images, charts, and videos in a company's database to find the visual information it needs to answer complex questions.

- Dynamic Cache Management: To save money and time, the infrastructure "remembers" the answers to common questions so it doesn't have to use the expensive AI brain to answer the same thing twice.
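
The retrieval steps above can be sketched end to end without any external services. This is a toy version under loud assumptions: `embed` is a bag-of-words counter standing in for a real embedding model, the word budget stands in for a token-based context window, and the documents and question are invented.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, top_k=2, max_words=40):
    """Rank documents by similarity, then keep the best ones that fit the word budget."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    selected, used = [], 0
    for doc in ranked[:top_k]:
        words = len(doc.split())
        if used + words <= max_words:
            selected.append(doc)
            used += words
    return selected


def build_prompt(query, documents):
    """Number each retrieved passage so the model can cite it as [1], [2], and so on."""
    context = retrieve(query, documents)
    sources = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(context, start=1))
    return f"Answer using only these sources and cite them by number.\n{sources}\n\nQuestion: {query}"


# Hypothetical knowledge base and question.
docs = [
    "Refunds are available within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]
question = "Are refunds available after purchase?"
best = retrieve(question, docs, top_k=1)
prompt = build_prompt(question, docs)
```

A real pipeline would swap `embed` for a learned embedding model and store the vectors in a vector database, but the shape stays the same: embed, rank, trim to fit the context window, and label sources so the answer can cite them.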

Why it matters to the AI Boom:

Information is power, but only if it's current. RAG infrastructure is the bridge between the AI's static intelligence and the world's moving data. It is the reason AI is becoming a useful tool for daily work rather than just a fun parlor trick that can only talk about the past.

5. Security and Governance: The AI Police Force

As AI models get smarter, they also become targets for hackers. "Prompt injection" is a new kind of cyberattack where people try to trick the AI into revealing secrets or breaking its own rules. LLMOps includes the security and governance infrastructure that protects the AI, the user's privacy, and the company's intellectual property. This is the invisible shield that makes sure the AI boom doesn't turn into a privacy nightmare.

- Adversarial Prompt Filtering: This is a "firewall for words" that scans every user message for hidden commands or tricks designed to bypass the AI's safety settings or force it to generate harmful content.

- Personally Identifiable Information (PII) Scrubbers: This infrastructure automatically detects and removes names, phone numbers, and addresses from a conversation before it is sent to the AI, greatly reducing the risk that private data is leaked or used for training.

- Compliance and Audit Logs: Every interaction with the AI is recorded in a secure, unchangeable log, allowing companies to prove to government regulators that their AI is behaving legally and following all industry rules.

- Bias Detection and Redaction: This involves using specialized software to scan AI responses for hidden prejudices or unfair language, ensuring that the AI provides equal and fair treatment to all users regardless of their background.

- Geographic Data Residency: For global companies, this infrastructure ensures that an AI model used in Europe follows European privacy laws, while an AI in the USA follows American laws, keeping the company safe from legal trouble.
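
As a small illustration of the PII scrubbing idea above, here is a sketch that masks email addresses and phone numbers with regular expressions. The patterns are deliberately simplified and the sample message is invented. Names and street addresses cannot be caught reliably this way, so real scrubbers typically add trained entity-recognition models on top.

```python
import re

# Simplified patterns for illustration; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def scrub_pii(text):
    """Replace each match with a placeholder tag and report how many were found per type."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


# Hypothetical user message, scrubbed before it is forwarded to the model.
clean, found = scrub_pii("Reach me at jane.doe@example.com or +1 415-555-0100 tomorrow.")
```

The counts returned alongside the cleaned text can be written to the audit log, so there is a record that something was redacted without storing the sensitive value itself.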

Why it matters to the AI Boom:

The only thing that can stop the AI boom faster than a technical failure is a legal or ethical disaster. Security and governance infrastructure provides the "social license" for AI to exist in our society, making it safe for children to use at school and for doctors to use in hospitals.

6. The API Economy and Integration Layers

An AI is useless if it lives in a bubble. The "Integration Layer" of LLMOps is what allows the AI to talk to your email, your calendar, your spreadsheet, and your company's custom software. This is the nervous system of the AI boom, connecting the central brain to the "hands" that actually get work done. In 2026, this has evolved into a massive ecosystem of "Agents" that can actually perform actions on your behalf.

- Standardized Model Gateways: These are the "universal adapters" that allow a company to switch from one AI provider to another (like switching from OpenAI to Google) without having to rewrite their entire software code from scratch.

- Asynchronous Request Handling: This infrastructure manages the "waiting line" for AI answers, ensuring that if millions of people ask a question at the same time, the system doesn't crash and everyone eventually gets their response.

- Tool-Calling and Action Orchestration: This is the logic that allows an AI to say "I need to check the calendar to answer this" and then actually open the calendar app to find the information and book an appointment for you.

- Rate Limiting and Quota Management: This keeps the system fair by making sure no single user or "bot" can hog all the AI's attention and resources, preventing the system from being slowed down for everyone else.

- Streaming Data Interfaces: Instead of making the user wait for the whole answer to be finished, this infrastructure "streams" the text one word at a time, making the AI feel much more responsive and "alive" to the human user.
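
Rate limiting from the list above is often built on a "token bucket," sketched below. This is one common approach rather than the only one. The `TokenBucket` class and its numbers are illustrative, and the injectable `clock` argument exists only so the example can be run without waiting in real time.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        """Consume one token if available; return False when the caller should be throttled."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical usage with a fake clock: a burst of three requests, then one a second later.
fake_now = [0.0]
bucket = TokenBucket(capacity=2, rate=1.0, clock=lambda: fake_now[0])
burst = [bucket.allow(), bucket.allow(), bucket.allow()]
fake_now[0] = 1.0
after_refill = bucket.allow()
```

To keep things fair per user, a gateway would usually hold one bucket per user ID or API key, so a single noisy client runs out of tokens without slowing anyone else down.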

Why it matters to the AI Boom:

Integration is what turns AI into "Software." By connecting AI to our existing tools, this infrastructure makes it possible for AI to not just think, but to do. This is the difference between an AI that tells you how to plan a trip and an AI that actually books the flights and hotels for you.

7. Versioning and Deployment: Navigating Model Evolution

AI models are updated almost every week. Keeping track of which model is currently "live" and making sure the new version is actually better than the old one is a massive technical challenge. The deployment infrastructure of LLMOps allows companies to "swap out" the AI's brain while the application is still running, ensuring there is zero downtime for the users.

- Blue-Green Deployment Strategies: This involves running the "old" AI (blue) and the "new" AI (green) side by side as two complete environments, checking the new one for hidden bugs, and then switching users over to it while the old one stays on standby in case something goes wrong.

- Canary Testing for AI: Developers release a new AI update to just 1% of users first to "see if it sings" (works well) before giving it to the other 99%, acting as an early warning system for any major mistakes.

- A/B Testing for Qualitative Performance: This infrastructure shows two different AI answers to a group of humans and asks them which one they prefer, using real human taste to decide which model update is truly the "best."

- Model Rollback Automation: If a new AI update starts acting weirdly at 3:00 AM, this system automatically "rolls back" the brain to the previous day's version without an engineer having to wake up and fix it manually.

- Environment Parity (Dev to Prod): This ensures that the AI behaves exactly the same on a developer's laptop as it does on a massive global server, preventing the "it worked on my machine" problem that plagues software development.
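
Canary testing and automated rollback from the list above can be sketched like this. The version labels, the 5% error budget, and the 100-request minimum are assumptions chosen for illustration. For AI models, the "error" being counted would often be a quality signal such as a failed validation check rather than a crash.

```python
import hashlib


def assign_version(user_id, canary_percent=1):
    """Deterministically place a user in the canary group based on a hash of their ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "candidate" if bucket < canary_percent else "stable"


def should_roll_back(errors, requests, max_error_rate=0.05, min_requests=100):
    """Trigger a rollback once the canary has enough traffic and exceeds the error budget."""
    if requests < min_requests:
        return False
    return errors / requests > max_error_rate


# Hypothetical usage: route a user, then evaluate the canary's health.
version = assign_version("user-42")
rollback = should_roll_back(errors=10, requests=100)
```

Hashing the user ID, rather than picking at random on each request, keeps every user on the same version for the whole test, so nobody sees the AI's behavior flip back and forth mid-conversation.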

Why it matters to the AI Boom:

The pace of AI development is terrifyingly fast. Deployment infrastructure provides the "brakes" and the "steering wheel" that allow companies to move quickly without crashing. It allows for constant improvement while maintaining the high reliability that users expect from professional tools.

8. Data Governance and Ethical Stewardship

The final and perhaps most important layer in the world of LLMOps is the management of the data that goes into the AI. As we move into 2026, the questions of "who owns the data" and "was this data stolen" have become a central part of the AI infrastructure. Ethical stewardship is the practice of ensuring the AI is built on a foundation of clean, legal, and consensually obtained information.

- Consent and Rights Management: This infrastructure tracks the "license" of every single sentence used to train the AI, ensuring that authors and creators are respected and, in many cases, compensated for their contribution to the model's intelligence.

- Data Lineage Tracking: This is a "family tree" for data that shows exactly where a piece of information came from, who cleaned it, and how it was used, which is essential for legal audits and solving disputes over copyright.

- Synthetic Data Safety Filters: When AIs are used to create data for other AIs, this infrastructure checks that the "fake" data isn't just repeating the biases of the old data, preventing a "loop of stupidity" where the AI gets worse over time.

- Human-in-the-loop (HITL) Workflows: This is the infrastructure that allows humans to easily step in and correct the AI when it's learning, providing the "parental guidance" that helps the AI develop a sense of accuracy and ethics.

- Sustainability and Carbon Tracking: Modern LLMOps tools now track the carbon footprint of training and running AI models, helping companies choose the most "green" data centers to keep the AI boom environmentally friendly.
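
The "family tree" idea behind data lineage tracking can be sketched with content hashes. Everything named here is hypothetical: the `LineageRecord` fields, the source labels, and the license string are placeholders, and a real system would keep these records in a database rather than an in-memory dictionary.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageRecord:
    content_hash: str  # fingerprint of the exact text
    source: str        # where the text came from, or which step produced it
    license_id: str    # usage terms attached to the text
    parent_hash: str   # hash of the record this one was derived from, "" for originals


def fingerprint(text):
    """SHA-256 hash of the text, used as a stable identifier."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_record(text, source, license_id, parent=None):
    parent_hash = parent.content_hash if parent else ""
    return LineageRecord(fingerprint(text), source, license_id, parent_hash)


def trace(record, index):
    """Walk parent links back to the original record."""
    chain = [record]
    while chain[-1].parent_hash:
        chain.append(index[chain[-1].parent_hash])
    return chain


# Hypothetical usage: a raw document and the cleaned version derived from it.
raw = make_record("  Hello World  ", "hypothetical-blog-export", "CC-BY-4.0")
cleaned = make_record("hello world", "cleaning-step", "CC-BY-4.0", parent=raw)
index = {r.content_hash: r for r in (raw, cleaned)}
history = [r.source for r in trace(cleaned, index)]
```

If a model later produces something questionable, the same walk can be run from any training example back to its original source and license.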

Why it matters to the AI Boom:

The AI boom cannot survive if it is seen as a "theft machine." Ethical data infrastructure is the foundation of long-term sustainability. It ensures that the AI revolution benefits creators, companies, and users alike, rather than just the few who own the biggest servers.

Promoting Fueler: Showcase Your Infrastructure Skills

As the tech world shifts toward these complex AI infrastructures, the way you demonstrate your value to employers must change. It is no longer enough to just list AI as a skill on a flat resume. Companies are looking for the architects, the plumbers, and the operators who can actually build and manage these eight layers of LLMOps.

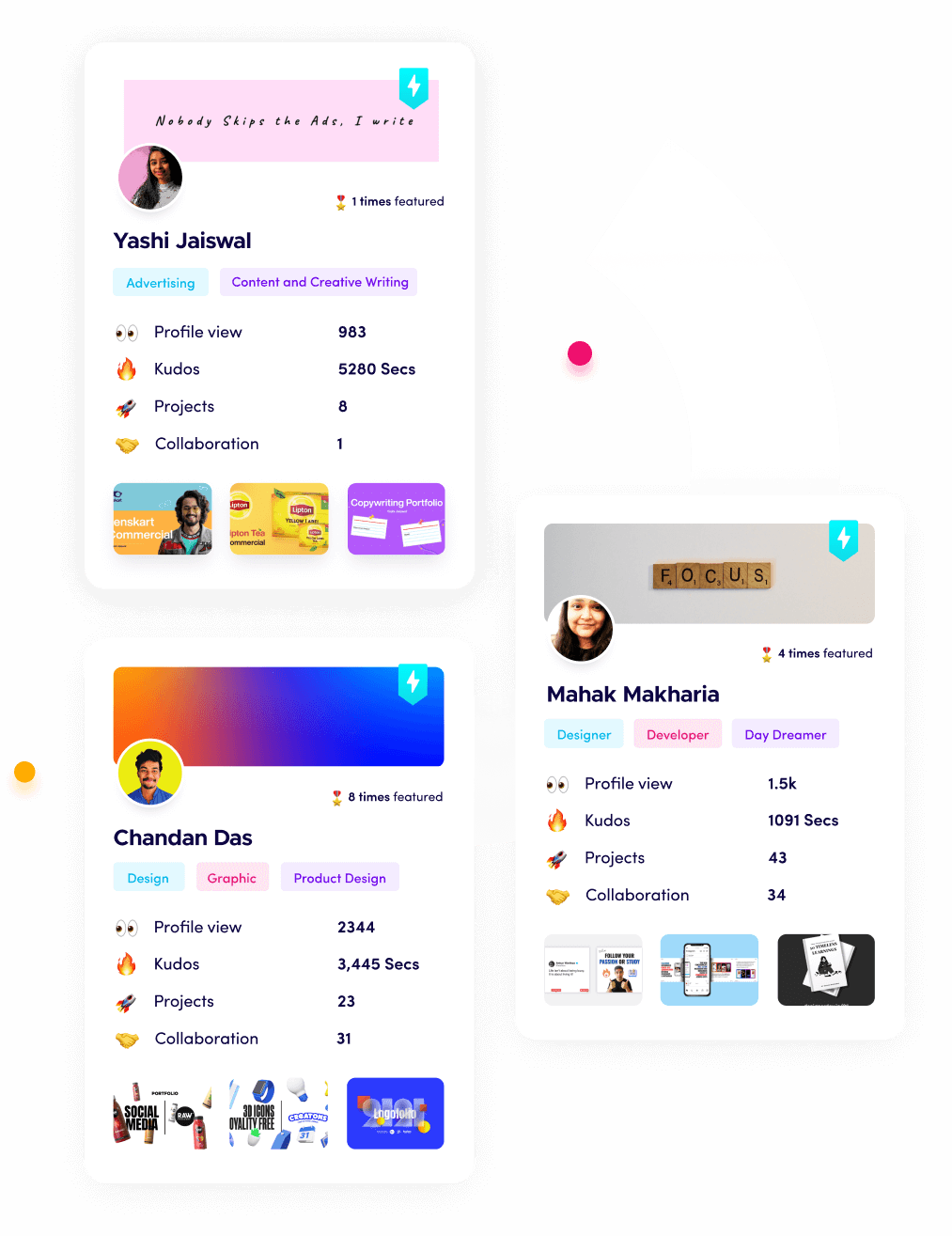

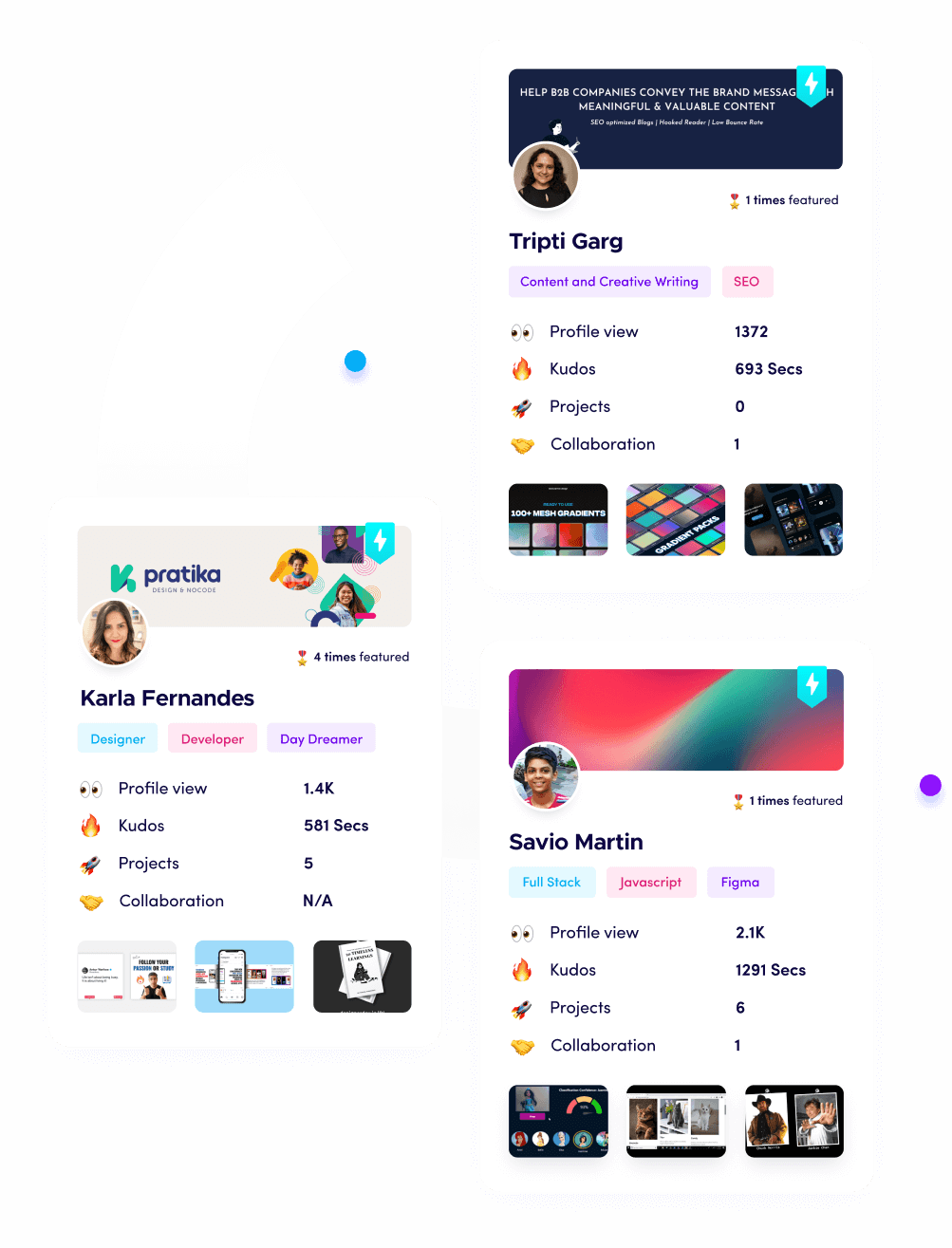

Using Fueler, you can document your journey by uploading your LLMOps projects, RAG experiments, and AI assignments. By creating a proof of work portfolio, you allow companies to see your actual skills in action. Whether you've built a custom security firewall for a chatbot or managed a model deployment pipeline, Fueler helps you stand out in a crowded market where traditional CVs are becoming less effective. Show the world what you can build, not just what you've studied.

Final Thoughts

The AI boom is a massive architectural achievement that goes far beyond simple chatbots. While the brains of the operation get all the headlines, it is the body of the infrastructure (the chips, the operations, the databases, and the security) that makes it all possible. As we move further into 2026, understanding these hidden layers of LLMOps will be one of the most valuable skills in the technology industry. If you want to build a career in this space, stop looking at the surface and start looking at the plumbing. Dive into the infrastructure, because that is where the real power and the real future of intelligence reside.

FAQs

What is the best way for a beginner to start learning LLMOps in 2026?

The best way is to start by building a small project. Use a framework like LangChain to build a basic chatbot, then try to add "memory" using a vector database like Pinecone. Documenting each step of this process on a portfolio like Fueler is the fastest way to learn and get noticed.

How does LLMOps differ from traditional DevOps?

DevOps is about the stability of software code (it either works or it doesn't). LLMOps is about the stability of "probabilistic" outputs. Because AI can give different answers to the same question, LLMOps requires new tools for monitoring quality, bias, and accuracy that don't exist in traditional DevOps.

Is it expensive to set up an LLMOps pipeline for a small startup?

It can be, but many tools now offer "serverless" or "pay-as-you-go" models. This means a startup only pays a few cents when someone uses the AI, making it much more affordable than buying their own hardware or paying for massive monthly subscriptions before they have customers.

Why is "Human-in-the-loop" so important in AI infrastructure?

AI is not perfect and can't understand context as well as a human yet. Having an infrastructure that allows a person to check and correct the AI’s work ensures that the system stays accurate and safe, especially in high-stakes industries like law or finance.

Can LLMOps help reduce the environmental impact of AI?

Yes. By using LLMOps to optimize how models are run (inference optimization) and by choosing energy-efficient ways to search data, companies can significantly reduce the amount of electricity required to power their AI features.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.