Top 10 AI Infrastructure Tools Powering Modern AI Systems

Riten Debnath

27 Mar, 2026

Last updated: March 2026

If you thought building a basic website was stressful, try keeping an AI model alive in 2026 without the whole thing exploding into a pile of expensive digital scrap. We have moved past the "magic chatbot" phase and into the era of "Agentic AI," where your systems aren't just answering questions; they are actually doing work, making decisions, and burning through server credits like they are going out of style. If your infrastructure is built on a shaky foundation of slow databases and weak processors, your AI will be about as useful as a solar-powered flashlight in a cave.

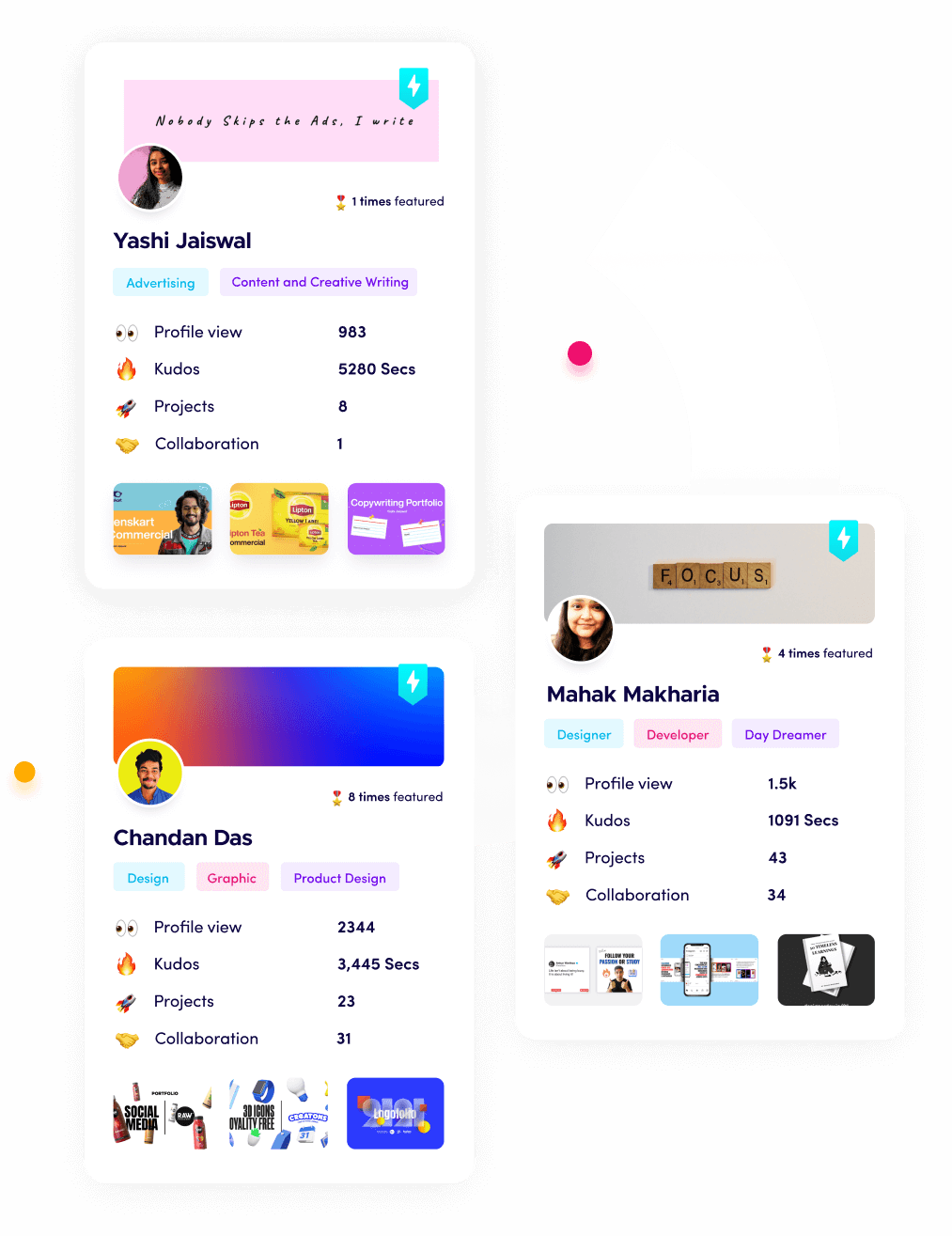

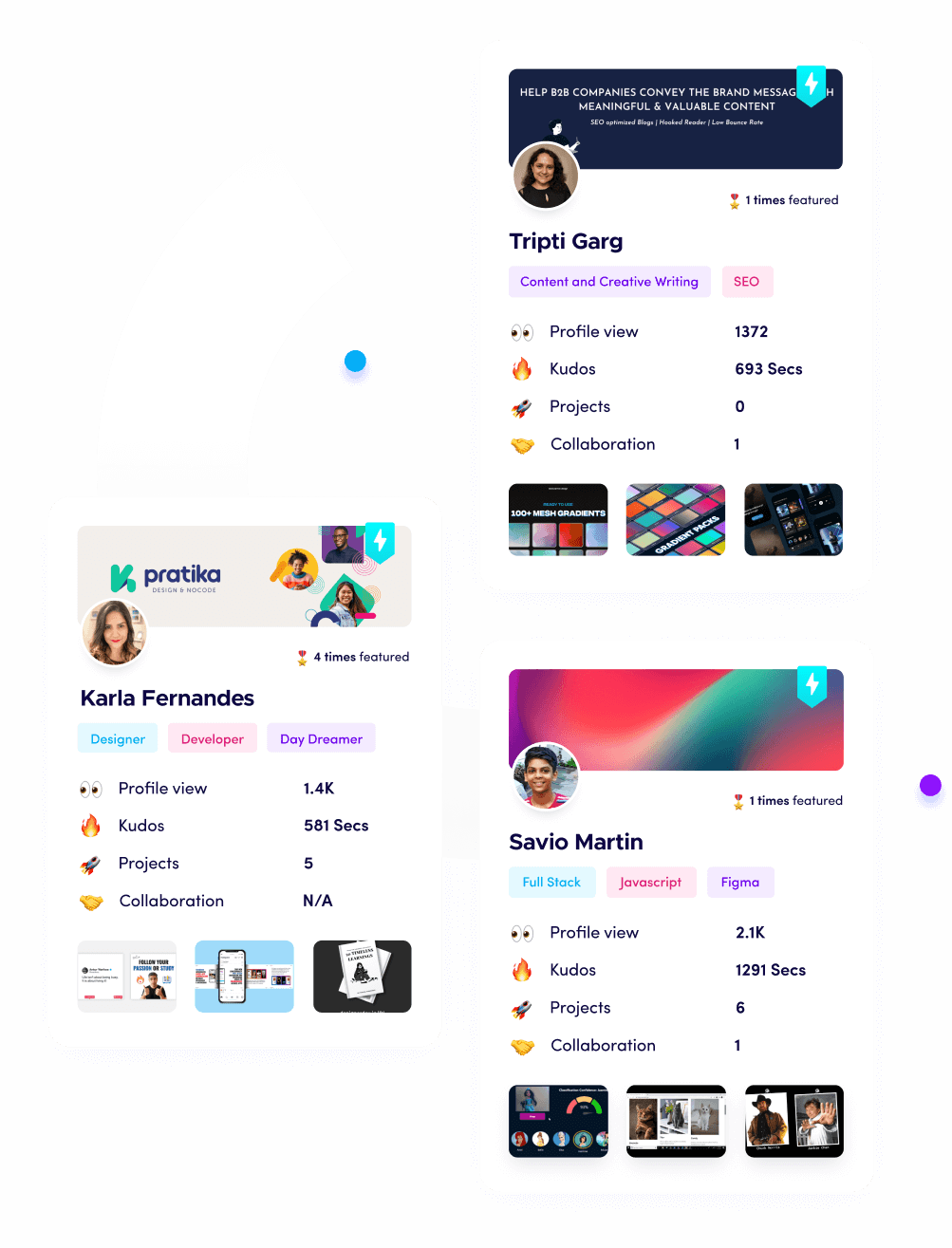

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

At a glance: Comparing the Top AI Infrastructure Tools Powering Modern AI Systems

1. NVIDIA HGX B300 (Blackwell Ultra)

Best for: Massive-scale model training and high-speed "reasoning" inference.

If the AI world has a god, it’s currently a piece of silicon from NVIDIA. The B300 is the 2026 heavyweight champion of chips, specifically designed to handle models with trillions of parameters. It is not just a "fast processor"; it is a complete architecture that allows thousands of GPUs to talk to each other as if they were one giant brain. If you are trying to build the next GPT or a complex autonomous system, this is the hardware that keeps your latency low enough that users don't fall asleep waiting for a response.

- 2.1 TB HBM3e Memory: This massive memory pool, spread across the platform's eight GPUs, allows the system to hold entire complex models locally, drastically reducing the time spent moving data back and forth.

- Liquid-Cooled Design: To prevent the chip from melting itself during intense training sessions, it uses advanced liquid cooling to maintain peak performance 24/7.

- Fifth-Gen NVLink: Provides 1.8 TB/s of bidirectional throughput, meaning your GPUs can share information almost instantly without getting stuck in a digital traffic jam.

- Decompression Engine: Speeds up data movement from storage to the GPU by up to 20x, ensuring the processor is never sitting idle waiting for "food" (data).

- Dedicated Reliability Engine: Features self-healing capabilities that can predict and route around hardware failures before they crash your three-month-long training run.

Pricing: Not sold as a single unit; it is usually accessed through cloud providers like CoreWeave or AWS. Expect to pay between $6.50 and $12.00 per hour for an H100/B300-equivalent instance, depending on your commitment.

Why it matters: Without this level of raw power, "Agentic AI" (AI that thinks and acts independently) simply wouldn't be fast enough to be useful. It is the literal engine under the hood of every major AI system in 2026.
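To make the 2.1 TB memory figure concrete, here is a back-of-the-envelope check of whether a model's weights would fit in that pool. Treat it as a rough sketch: it counts weights only (no KV cache, activations, or optimizer state), uses decimal terabytes, and the function names are made up for this example.

```python
# Rough check: do a model's weights fit in the 2.1 TB of HBM3e quoted above?
# Assumption: weights only. KV cache, activations, and optimizer state are ignored.

HBM_BYTES = 2.1e12  # 2.1 TB in decimal bytes

# Storage size of one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weights_bytes(num_params: float, precision: str) -> float:
    """Memory needed to hold the weights alone, in bytes."""
    return num_params * BYTES_PER_PARAM[precision]


def fits_in_hbm(num_params: float, precision: str) -> bool:
    """True if the weights alone fit in the quoted memory pool."""
    return weights_bytes(num_params, precision) <= HBM_BYTES


if __name__ == "__main__":
    for precision in ("fp16", "fp8", "fp4"):
        needed_tb = weights_bytes(1e12, precision) / 1e12
        fits = fits_in_hbm(1e12, precision)
        print(f"1T params @ {precision}: {needed_tb:.1f} TB needed, fits={fits}")
```

Under these assumptions a one-trillion-parameter model squeezes in even at FP16, while a two-trillion-parameter model would need a lower precision or more than one system.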

2. CoreWeave

Best for: Startups needing "bare-metal" GPU performance without the bloat of traditional cloud providers.

CoreWeave is the "cool kid" of the cloud world right now. While giants like AWS try to be everything to everyone, CoreWeave does one thing: it provides the fastest possible access to NVIDIA GPUs. They don't have the overhead of traditional virtual machines, which means you get more "juice" out of every dollar you spend. In 2026, they are the primary partner for companies like Perplexity and Cursor because their network is built specifically for the high-speed data needs of AI.

- InfiniBand Networking: Unlike standard Ethernet, this uses "Remote Direct Memory Access" to let data skip the CPU entirely and go straight to the GPU, cutting latency by 30%.

- Flex Reservations: A new 2026 feature that lets you pay a small fee to "hold" GPU capacity and only pay full price when you are actually using it.

- Kubernetes-Native: The entire platform is built for modern developers who use containers, making it incredibly easy to scale from one GPU to ten thousand.

- Liquid-Cooled Data Centers: They run some of the most efficient hardware on the planet, which means fewer thermal throttles and more consistent speed for your models.

- Spot Instances: Highly discounted rates (up to 80% off) for non-critical tasks like batch processing or model backfills that can handle a short interruption.

Pricing: Pay-as-you-go. H100 instances range from $2.95/hour (Spot) to $4.25/hour (On-Demand). The new B300 instances are currently priced by quote but average around $7.50/hour for early adopters.

Why it matters: It levels the playing field. A small startup with a few thousand dollars can now access the same world-class hardware that OpenAI uses, allowing them to compete on speed and model quality.
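If you want to see what the Spot versus On-Demand gap means for a real job, the arithmetic is simple enough to script. This sketch uses the two H100 prices quoted above and assumes they are per GPU per hour; it also ignores any time lost to Spot interruptions.

```python
# Compare the cost of a batch job at the H100 prices quoted above.
# Assumption: both prices are per GPU per hour.
SPOT_RATE = 2.95       # USD, Spot
ON_DEMAND_RATE = 4.25  # USD, On-Demand


def job_cost(gpus: int, hours: float, rate: float) -> float:
    """Total cost in USD for `gpus` GPUs running for `hours` hours."""
    return round(gpus * hours * rate, 2)


def spot_savings_pct(spot: float = SPOT_RATE, on_demand: float = ON_DEMAND_RATE) -> float:
    """Percentage saved by paying the Spot rate instead of On-Demand."""
    return round(100 * (on_demand - spot) / on_demand, 1)


if __name__ == "__main__":
    # Hypothetical job: 8 GPUs for 24 hours.
    print("On-Demand:", job_cost(8, 24, ON_DEMAND_RATE))
    print("Spot:     ", job_cost(8, 24, SPOT_RATE))
    print("Savings % :", spot_savings_pct())
```

At these two list prices the discount works out to roughly 31%, well below the "up to 80%" ceiling, so it is worth running your own numbers before assuming the maximum discount.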

3. Pinecone (Serverless)

Best for: Long-term "memory" for AI agents and high-performance RAG systems.

AI models are like geniuses with goldfish memories; they forget everything the second a conversation ends. Pinecone acts as the "hard drive" for AI. It stores information as "vectors" (mathematical representations of meaning) so your AI can "remember" your past conversations, company documents, or customer preferences. Their serverless version is a game-changer because you don't have to manage any database servers; you just pay for what you store and search.

- Zero-Ops Infrastructure: You never have to worry about "scaling up" a database; Pinecone handles all the background work so you can focus on your code.

- Metadata Filtering: Allows you to filter your search by specific categories (like "only show documents from 2025") without sacrificing search speed.

- 7ms p99 Latency: Even with billions of pieces of information, Pinecone can find the most relevant "memory" in less time than it takes to blink.

- Freshness Guarantee: As soon as you add new data, it is searchable by your AI within seconds, which is vital for news or real-time data apps.

- Usage-Based Billing: You are no longer billed for "idle" database time, making it significantly cheaper for startups with fluctuating traffic.

Pricing: Free tier available (up to 100k vectors). Serverless pricing is approximately $0.33 per GB of storage per month plus $0.00825 per 1,000 "read units" (searches).

Why it matters: "RAG" (Retrieval-Augmented Generation) is how you stop AI from hallucinating. Pinecone provides a reliable data source that ensures your AI says "I don't know" or cites a real document instead of making things up.
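The idea of storing "vectors" and filtering by metadata is easier to grasp with a toy version. The sketch below is a tiny in-memory stand-in, not Pinecone's API: the three-number vectors are hand-made placeholders for real embeddings, and it compares every record by brute force, whereas a real vector database uses an index to avoid that.

```python
import math

# Toy in-memory "vector store". Real embeddings come from an embedding model
# and have hundreds or thousands of dimensions; these are hand-made stand-ins.
RECORDS = [
    {"id": "doc-a", "vector": [0.9, 0.1, 0.0], "metadata": {"year": 2025}},
    {"id": "doc-b", "vector": [0.8, 0.2, 0.1], "metadata": {"year": 2024}},
    {"id": "doc-c", "vector": [0.0, 0.1, 0.9], "metadata": {"year": 2025}},
]


def cosine_similarity(a, b):
    """Similarity of two vectors by angle: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query_vector, top_k=2, year=None):
    """Return ids of the top_k most similar records, optionally filtered by year."""
    # Metadata filter first, then rank the survivors by similarity.
    candidates = [r for r in RECORDS if year is None or r["metadata"]["year"] == year]
    ranked = sorted(
        candidates,
        key=lambda r: cosine_similarity(query_vector, r["vector"]),
        reverse=True,
    )
    return [r["id"] for r in ranked[:top_k]]


if __name__ == "__main__":
    print(search([1.0, 0.0, 0.0]))             # no filter
    print(search([1.0, 0.0, 0.0], year=2025))  # "only show documents from 2025"
```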

4. Weights & Biases (W&B)

Best for: Tracking AI experiments and making sure your team doesn't lose their minds.

Training an AI model is basically a high-stakes science experiment that costs $1,000 an hour. If you don't track your variables, you are just burning money. W&B is the "lab notebook" for AI engineers. It tracks every version of your model, the data you used, and the results of every test run. In 2026, their "Weave" product has become the standard for tracing how AI agents think, letting you see exactly where a chain of thought went off the rails.

- Experiment Tracking: Automatically logs your model's accuracy, loss, and hardware usage in a beautiful dashboard so you can compare different "recipes."

- W&B Weave: A specialized tool for debugging LLM applications, showing you the exact inputs and outputs for every step of a complex AI task.

- Artifact Versioning: Keeps track of every version of your dataset and model so you can always "rewind" to a version that worked better.

- Collaborative Reports: Allows teams to write "blog-style" reports inside the platform to explain to stakeholders why a certain model was chosen.

- Environment-Free RL: A new 2026 feature for Reinforcement Learning that allows agents to learn "on the job" without needing a simulated environment.

Pricing: Free for personal use. Pro plan starts at $60/month. Enterprise plans are custom but generally require an annual commitment starting around $5,000.

Why it matters: AI development is messy. W&B brings sanity to the process, ensuring that when you find a "winning" model, you actually know how you built it and can do it again.
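The "lab notebook" idea boils down to recording each run's settings and metrics so runs can be compared later. Here is a minimal pure-Python sketch of that pattern; it is not the W&B client, and the `Run` class and `best_run` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One experiment: a name, its settings, and the metrics logged over time."""
    name: str
    config: dict
    history: list = field(default_factory=list)

    def log(self, step: int, **metrics: float) -> None:
        """Record the metrics observed at a given training step."""
        self.history.append({"step": step, **metrics})

    def final(self, metric: str) -> float:
        """Value of `metric` at the last logged step."""
        return self.history[-1][metric]


def best_run(runs, metric="loss"):
    """Pick the run whose final value of `metric` is lowest."""
    return min(runs, key=lambda r: r.final(metric))


if __name__ == "__main__":
    # Two hypothetical "recipes" that differ only in learning rate.
    fast = Run("lr-0.01", {"lr": 0.01})
    slow = Run("lr-0.001", {"lr": 0.001})
    for step, (a, b) in enumerate([(0.9, 0.8), (0.7, 0.4)], start=1):
        fast.log(step, loss=a)
        slow.log(step, loss=b)
    winner = best_run([fast, slow])
    print(winner.name, winner.config)
```

Because the config travels with the metrics, finding the winning run also tells you how to reproduce it.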

5. Hugging Face

Best for: Accessing open-source models and collaborating on the "GitHub of AI."

If Hugging Face disappeared tomorrow, the AI industry would probably grind to a halt. It is the central library where everyone shares their models, datasets, and demo apps. Instead of building your own AI from scratch, you go to Hugging Face, find a model that's 90% of the way there, and fine-tune it for your specific needs. It is the ultimate hub for the open-source community, making high-end AI accessible to everyone.

- Model Hub: Home to over 1 million open-source models (like Llama, Mistral, and Stable Diffusion) that you can download and run for free.

- Inference Endpoints: Allows you to turn any model on the hub into a working API with just two clicks, so you don't have to manage your own servers.

- Datasets Library: Access to massive collections of text, images, and audio to train your models without having to scrape the internet yourself.

- ZeroGPU Spaces: A specialized hosting service that lets you run AI demos for free using shared GPU power from the community.

- Hugging Face Pro: Gives individual developers higher usage limits on free tools and early access to new "AutoTrain" features.

Pricing: Free for basic use. Pro badge is $9/month. Enterprise Hub starts at $20 per user per month. Inference endpoints are pay-as-you-go based on the GPU used.

Why it matters: It prevents "vendor lock-in." You aren't forced to use OpenAI or Google; you can use any open-source model and host it wherever you want, giving you total control over your startup's future.

6. Anyscale (Ray)

Best for: Scaling Python code from a single laptop to a thousand-node cluster effortlessly.

Python is the language of AI, but it wasn't really built to run on a thousand computers at once. Ray (and its commercial version, Anyscale) fixes this. It allows a developer to write code on their laptop and, with one click, deploy it across a massive cluster of GPUs. In 2026, it is the backbone of companies like Uber and OpenAI because it handles all the "scary" parts of distributed computing, like memory management and crash recovery.

- Ray Serve: A library for building high-performance model serving applications that can handle millions of requests per second.

- Anyscale Runtime: A proprietary, optimized version of Ray that runs up to 4.5x faster than the open-source version for data-heavy tasks.

- Interactive Workspaces: Spin up a cloud-based coding environment with a GPU in seconds, so you don't have to waste hours setting up your local machine.

- Smart Spot Orchestration: Automatically moves your work to "On-Demand" servers if your "Spot" server gets taken away, preventing lost work.

- Cost Governance: Built-in dashboards that show exactly which team member is spending the most on GPUs, so you don't get a surprise $50,000 bill.

Pricing: Open-source Ray is free. Anyscale offers a $100 starting credit. Paid plans are usage-based, typically adding a 20% to 30% management fee on top of your raw cloud costs.

Why it matters: It makes "scaling" a non-issue. You can start small and grow your infrastructure automatically as your user base grows, without ever having to rewrite your core code.

7. LlamaIndex

Best for: Connecting your custom data (PDFs, Slack, Notion) to Large Language Models.

LlamaIndex is the "bridge" between your data and the AI's brain. While Pinecone stores the data, LlamaIndex is the tool that goes into your Notion, Google Drive, or Slack, extracts the useful information, and formats it so the AI can actually understand it. It is the best tool for building "knowledge assistants" that actually know what is going on inside your company.

- Data Connectors: Over 100 "loaders" that can pull data from almost any source, including complex enterprise software like Jira or Salesforce.

- Advanced Chunking: Automatically breaks long documents into smaller pieces that "fit" inside an AI's context window without losing the meaning.

- Agentic RAG: New in 2026, it allows the AI to "plan" its search, like looking through five different documents to find the answer to a complex question.

- Structured Outputs: Ensures the AI gives you back data in a specific format (like JSON or a table) so your other software can use it.

- Evaluation Tools: Built-in tests to see if your AI's answers are actually accurate based on the data you provided.

Pricing: The open-source version is free. LlamaCloud (managed version) starts with a free tier and moves to a "per-index" or "per-query" pricing model starting around $50/month.

Why it matters: Raw AI models are generic. LlamaIndex makes them "expert" in your specific business, which is the only way to build a truly unique AI product.
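Chunking is the most mechanical step in that pipeline, so it is a good one to sketch. The version below splits on whitespace and repeats a few words between neighbouring chunks so meaning is not cut off at the boundary. It is a simplified illustration, not LlamaIndex's splitter: real splitters typically count model tokens and respect sentence boundaries.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of up to chunk_size words, sharing `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks


if __name__ == "__main__":
    sample = "a b c d e f g h i j"
    for chunk in chunk_words(sample, chunk_size=4, overlap=1):
        print(chunk)
```

The overlap is the knob to watch: too small and a sentence that straddles two chunks loses its context, too large and you pay to store and search the same words repeatedly.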

8. Vercel AI SDK

Best for: Frontend developers building sleek, streaming AI user interfaces.

Vercel has become the "go-to" for the "AI-Native" web. Their AI SDK makes it incredibly easy to add things like "streaming text" (where the answer appears word-by-word) and "generative UI" (where the AI can actually build a button or a chart in the middle of a chat). If you want your AI app to feel like a premium, modern product rather than a clunky 90s chatroom, this is the toolkit you use.

- Unified API: Use one interface to talk to OpenAI, Anthropic, or Hugging Face, allowing you to swap models in minutes without changing your frontend code.

- Streaming Hooks: Pre-built code for React, Svelte, and Vue that handles the "typing" effect and error handling automatically.

- AI Gateway: A new 2026 feature that provides a single entry point for all your AI calls, offering caching (to save money) and rate limiting (to prevent abuse).

- Tool Calling: Simplifies the process of letting your AI "call" other functions, like "check the weather" or "book a meeting."

- Observability: Built-in tracking to see how much each user is costing you in AI tokens, directly inside your Vercel dashboard.

Pricing: SDK is free/open-source. Vercel Pro plan (for teams) is $20 per user per month. AI Gateway offers a $5/month free credit and is then pay-as-you-go.

Why it matters: The user experience is often more important than the model itself. Vercel ensures that your AI feels fast, responsive, and "magical" to the end user.
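The "streaming text" effect is just a producer handing over small pieces and a consumer redrawing after each one. The SDK itself is a TypeScript library, so the Python sketch below shows only the pattern, not its API; both function names are invented for this example.

```python
from typing import Iterable, Iterator


def stream_tokens(answer: str) -> Iterator[str]:
    """Yield the answer one word at a time, as a streaming endpoint might."""
    words = answer.split(" ")
    for i, word in enumerate(words):
        # Keep the space after every word except the last, so joining the
        # pieces reproduces the original answer exactly.
        yield word if i == len(words) - 1 else word + " "


def render_stream(chunks: Iterable[str]) -> list[str]:
    """Return every intermediate state the UI would show while streaming."""
    shown = ""
    frames = []
    for chunk in chunks:
        shown += chunk        # append the newly arrived piece
        frames.append(shown)  # a real UI would repaint here
    return frames


if __name__ == "__main__":
    for frame in render_stream(stream_tokens("AI is fast")):
        print(repr(frame))
```

The user sees the first word as soon as it exists instead of waiting for the whole answer, which is why streaming feels faster even when total generation time is identical.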

9. LangSmith

Best for: Debugging, testing, and monitoring complex AI "chains" in production.

If LangChain is the "Lego set" for building AI apps, LangSmith is the "security camera" and "diagnostic tool." Once your AI app is live, you need to know: Why did it say that? Why is it taking so long? How much did that one chat cost? LangSmith records every single step of every single interaction, so when a user reports a bug, you can see exactly what the AI was "thinking" at that exact moment.

- Full Traceability: See every step of an AI's process, from the initial prompt to the database search to the final answer.

- A/B Testing: Compare two different versions of a prompt to see which one gets better results from real users.

- Human-in-the-Loop: Allows you to manually review and "rate" AI answers to help the model learn over time.

- Cost Tracking: Provides a breakdown of token usage and cost per session, helping you stay within your startup budget.

- Automated Regression Testing: Every time you update your code, LangSmith runs a "test suite" to make sure the AI hasn't started giving worse answers.

Pricing: Developer tier is free (up to 5,000 traces/month). Plus plan starts at $39/month. Enterprise plans are custom.

Why it matters: You can't fix what you can't see. LangSmith gives you the visibility needed to turn a "cool prototype" into a "reliable enterprise product."
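To get a feel for what "recording every step" means, here is a minimal tracing decorator in plain Python. It is a sketch of the idea rather than LangSmith's client: the `traced` decorator, the `TRACE` list, and the two placeholder steps are all hypothetical.

```python
import functools
import time

TRACE = []  # one dict per recorded step, in call order


def traced(step_name: str):
    """Decorator that records inputs, output, and duration of each call."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            result = func(*args, **kwargs)
            TRACE.append({
                "step": step_name,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": result,
                "seconds": time.perf_counter() - start,
            })
            return result
        return wrapper
    return decorator


@traced("retrieve")
def retrieve(question: str) -> list[str]:
    # Placeholder for a database or vector search.
    return ["Refunds are accepted within 30 days."]


@traced("generate")
def generate(question: str, context: list[str]) -> str:
    # Placeholder for a model call.
    return f"Based on our policy: {context[0]}"


def answer(question: str) -> str:
    """A two-step chain: look something up, then write the reply."""
    return generate(question, retrieve(question))


if __name__ == "__main__":
    answer("Can I get a refund?")
    for entry in TRACE:
        print(entry["step"], "->", entry["output"])
```

When a user reports a bad answer, a trace like this shows whether the retrieval step fetched the wrong document or the generation step misused a good one.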

10. Scale AI (Donovan/Nucleus)

Best for: High-quality data labeling and fine-tuning models for maximum accuracy.

Data is the new oil, and Scale AI is the refinery. To make an AI model work for a specific task (like identifying medical images or writing legal contracts), it needs to be "trained" on high-quality, labeled data. Scale AI provides the infrastructure to take messy real-world data and turn it into perfect training sets. In 2026, their "Donovan" platform has become a favorite for government and enterprise teams who need to build "secure" AI that never leaks data.

- Scale Nucleus: A dashboard for visualizing your data, finding edge cases where your model fails, and curating better datasets.

- Expert Labeling: Access to thousands of human experts who can label complex data (like legal text or code) that a generic worker couldn't handle.

- RLHF Pipeline: A built-in system for "Reinforcement Learning from Human Feedback," which is how you teach an AI to be helpful and safe.

- Donovan AI: A secure, "FedRAMP" certified environment for running AI on sensitive data that can't leave your private cloud.

- Automated QA: Uses AI to check the work of the human labelers, ensuring your training data is 99.9% accurate.

Pricing: Scale Nucleus starts at $49/month for small teams. Data labeling is priced per unit (e.g., $0.05 per image or $1.00 per expert-written paragraph).

Why it matters: A model is only as good as the data it eats. Scale AI ensures your model isn't "eating junk food," which is the secret to building AI that people actually trust.
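One simple way to think about label quality control is to have several people label the same item and flag the ones they disagree on. Note that this sketch swaps in plain inter-labeler agreement rather than the AI-based checking described above, and it is not Scale AI's implementation; the function names and the 0.75 threshold are assumptions.

```python
from collections import Counter


def consensus(labels: list[str]) -> tuple[str, float]:
    """Return the majority label and the share of labelers who chose it."""
    winner, votes = Counter(labels).most_common(1)[0]
    return winner, votes / len(labels)


def flag_for_review(items: dict[str, list[str]], min_agreement: float = 0.75) -> list[str]:
    """Ids of items whose labelers agree less than min_agreement of the time."""
    return [
        item_id
        for item_id, labels in items.items()
        if consensus(labels)[1] < min_agreement
    ]


if __name__ == "__main__":
    # Hypothetical labels from four labelers per image.
    batch = {
        "img-1": ["cat", "cat", "cat", "cat"],
        "img-2": ["cat", "dog", "cat", "dog"],
    }
    print(consensus(batch["img-1"]))
    print(flag_for_review(batch))
```

Items that get flagged are exactly the edge cases worth sending to an expert, since they are where a model trained on the data would be most likely to learn the wrong thing.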

Which one should you choose?

If you are just starting, do not try to buy all ten. Start with the Vercel AI SDK to build your interface and Hugging Face to find a model. If your AI needs to remember things (and it probably does), add Pinecone. This "trio" is the standard starting point for most 2026 startups. Only move to things like NVIDIA/CoreWeave if you are building your own models, or LangSmith once you have actual users and need to start debugging their sessions.

How does this connect to building a strong career or portfolio?

Directly. In the 2026 job market, "Prompt Engineering" is a basic skill, but "AI Infrastructure Engineering" is a high-paying career. Companies aren't looking for people who can talk to AI; they are looking for people who can build the systems that power it.

When you use Fueler to showcase your work, don't just say "I built a chatbot." Instead, show a project where you used LlamaIndex to connect a database, Pinecone for memory, and LangSmith for testing. This "proof of work" shows that you understand the entire plumbing of the system. That is what gets you hired by the top AI labs and high-growth startups.

Final Thoughts

AI infrastructure is the new "electricity." It used to be something only the biggest companies in the world could afford, but thanks to the tools on this list, it has been democratized. We are moving toward a world where every single app will have an AI "brain," and knowing how to connect that brain to the rest of the world is the most valuable skill of the decade. Stay curious, keep building, and don't be afraid to break things. That is how the best systems are always built.

FAQs

1. Is it cheaper to use open-source models on Hugging Face or use the OpenAI API?

For low volume, OpenAI is usually cheaper and easier. However, once you scale to thousands of users, hosting an open-source model on a provider like CoreWeave is often 40-60% cheaper and gives you more privacy.
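The crossover point is easy to estimate yourself. The sketch below compares a per-token API bill with a flat GPU rental bill. The $4.25/hour figure is the On-Demand H100 price quoted in the CoreWeave section; the $5 per million tokens API price is a made-up placeholder, and the calculation assumes one GPU can actually serve the traffic.

```python
def api_cost(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """Monthly bill when paying per token."""
    return tokens_per_month / 1e6 * usd_per_million_tokens


def self_hosted_cost(gpus: int, usd_per_gpu_hour: float, hours_per_month: float = 730) -> float:
    """Monthly bill for GPUs rented around the clock (about 730 hours a month)."""
    return gpus * usd_per_gpu_hour * hours_per_month


def break_even_tokens(gpus: int, usd_per_gpu_hour: float, usd_per_million_tokens: float,
                      hours_per_month: float = 730) -> float:
    """Monthly token volume at which both options cost the same."""
    monthly_gpu_bill = self_hosted_cost(gpus, usd_per_gpu_hour, hours_per_month)
    return monthly_gpu_bill / usd_per_million_tokens * 1e6


if __name__ == "__main__":
    gpu_bill = self_hosted_cost(gpus=1, usd_per_gpu_hour=4.25)
    threshold = break_even_tokens(gpus=1, usd_per_gpu_hour=4.25, usd_per_million_tokens=5.0)
    print(f"Self-hosted: ${gpu_bill:,.2f}/month")
    print(f"Break-even:  {threshold:,.0f} tokens/month")
```

Below the break-even volume the GPU sits partly idle and the API wins; above it, every extra token on your own hardware is effectively free until you need a second GPU.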

2. Do I need to be a "coding pro" to use these infrastructure tools?

Tools like Vercel and Pinecone are very beginner-friendly. However, things like NVIDIA HGX and Anyscale (Ray) require a solid understanding of Python and cloud computing. Start with the "SDKs" and work your way down to the "hardware."

3. What is "Vector Search" and why is Pinecone so popular for it?

Think of vector search like "searching by vibes" rather than keywords. If you search for "cold weather," a keyword search looks for those exact words. A vector search understands that you might also be looking for "winter," "snow," or "arctic." Pinecone is the leader because it makes this complex math incredibly fast.

4. How do I stop my AI from "hallucinating" (making things up)?

The best way is to use the "RAG" stack: LlamaIndex to find the right data and Pinecone to store it. By forcing the AI to look at a real document before it answers, you drastically reduce the chances of it lying.
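"Forcing the AI to look at a real document" mostly means building the prompt from retrieved text and telling the model what to do when nothing matches. Here is a minimal sketch with no external libraries: retrieval is plain word overlap standing in for vector search, and the documents, function names, and prompt wording are all illustrative assumptions.

```python
import re

# Hypothetical company documents keyed by id.
DOCUMENTS = {
    "refund-policy": "Refunds are accepted within 30 days of purchase.",
    "shipping": "Orders ship within 2 business days.",
}


def tokens(text: str) -> set[str]:
    """Lowercased words and numbers, with punctuation dropped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 1) -> list[tuple[str, str]]:
    """Return up to top_k (id, text) pairs sharing the most words with the question."""
    scored = []
    for doc_id, text in DOCUMENTS.items():
        overlap = len(tokens(question) & tokens(text))
        if overlap:
            scored.append((overlap, doc_id, text))
    scored.sort(reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored[:top_k]]


def build_prompt(question: str) -> str:
    """Assemble a prompt that grounds the model in the retrieved sources."""
    sources = retrieve(question)
    if sources:
        context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in sources)
    else:
        context = "(no matching documents)"
    return (
        "Answer using only the sources below and cite the source id. "
        'If the sources do not contain the answer, say "I don\'t know."\n\n'
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("Are refunds accepted after purchase?"))
```

The resulting string is what you would send to the model; the explicit "I don't know" instruction is what gives it a safe way out when retrieval comes back empty.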

5. Is NVIDIA still the only choice for AI hardware in 2026?

NVIDIA is the leader, but companies like Apple, Google (TPUs), and startups like Tenstorrent are catching up. However, NVIDIA's "CUDA" software is so widely used that it remains the safest bet for most startups.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.