Top 7 AI Infrastructure Platforms for Scalable AI Systems

Riten Debnath

27 Mar, 2026

Last updated: March 2026

Building an AI startup in 2026 is a bit like building a skyscraper in a city where the ground is constantly moving. You can have the most brilliant architectural plans, the most sophisticated neural networks, but if your foundation is shaky, the whole thing will collapse the moment you try to add a few floors of users. While everyone else is arguing over which LLM writes the best poetry, the real engineers are looking at the heavy machinery. They are looking for the platforms that handle the "boring" stuff: the data pipelines, the GPU clusters, and the memory systems that allow an AI to actually function at scale without burning through a billion dollars in cloud credits.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

At a glance: Comparing the Top AI Infrastructure Platforms for Scalable AI Systems

1. NVIDIA AI Enterprise

Best for: Industrial-grade reliability and the absolute gold standard in hardware-software integration.

If the AI world has a king, it’s NVIDIA. While they are famous for their chips, their AI Enterprise software suite is the secret sauce that makes that hardware usable for actual businesses. It’s a comprehensive platform that includes everything from "microservices" for deploying models to specialized tools for medical imaging or autonomous driving. When you use NVIDIA’s infrastructure, you aren't just renting a computer; you are plugging into a massive ecosystem where every single line of code has been optimized to squeeze every drop of performance out of the silicon.

- NVIDIA NIM (Inference Microservices): Pre-built "containers" that allow you to deploy popular AI models like Llama or Mistral in minutes with industry-leading speed.

- CUDA-X Libraries: A massive collection of accelerated libraries that speed up everything from basic math to complex physics simulations.

- Enterprise Support: Access to NVIDIA’s own engineers to help you troubleshoot your most complex scaling problems in real time.

- Magnum IO: Advanced software that allows thousands of GPUs to talk to each other so fast they act like one giant, world-crushing brain.

- Certified Systems: Validation that the software stack runs on servers from partners such as Dell, HPE, and Lenovo, removing the "it worked on my laptop" headache.

Pricing: Standard licensing starts at approximately $4,500 per GPU per year, though most large-scale users negotiate custom enterprise agreements based on their total cluster size.

Why it matters: In a field where a 10% increase in efficiency can save a company millions of dollars, NVIDIA’s deep integration is the difference between a profitable product and a massive money pit. It is the safest "no-fail" choice for mission-critical AI.

2. Databricks (Mosaic AI)

Best for: Enterprises that need to build custom AI models using their own messy, real-world data.

Most companies have a "data swamp" rather than a "data lake": a disorganized mess of old spreadsheets and random databases. Databricks is the giant vacuum and filter that cleans that mess and turns it into fuel for AI. With their acquisition of MosaicML, they now offer an end-to-end factory for building your own models. Instead of sending your sensitive data to a third party, you can train a model "in-house" on their platform, so your company's secrets stay inside your company's walls.

- Unity Catalog: A single "control tower" where you can see every piece of data and every AI model your company owns, and who has permission to use them.

- Mosaic AI Model Training: Tools that allow you to take a "base" model and fine-tune it on your specific industry jargon for a fraction of the cost of training from scratch.

- Delta Lake integration: Ensures that the data your AI is learning from is always fresh, accurate, and hasn't been corrupted or duplicated.

- MLflow Tracking: An open-source standard for managing the "life" of an AI model, from its birth as an experiment to its job in production.

- Serverless Compute: You don't have to manage the servers; Databricks automatically spins up the power you need and shuts it down when you're done.

Pricing: Uses a "pay-for-what-you-use" model based on Databricks Units (DBUs). Standard jobs cost roughly $0.07 to $0.40 per DBU, depending on the task and level of support.
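
To make the DBU model concrete, here is a rough cost sketch. The 500-DBU workload and the function name are made up for illustration; only the $0.07 to $0.40 per-DBU range comes from the pricing above, and any underlying cloud infrastructure charges are left out.

```python
def dbu_cost_range(dbus_consumed: float,
                   low_rate: float = 0.07,
                   high_rate: float = 0.40) -> tuple[float, float]:
    """Return the (cheapest, priciest) bill in USD for a given DBU consumption."""
    if dbus_consumed < 0:
        raise ValueError("dbus_consumed must be non-negative")
    return (round(dbus_consumed * low_rate, 2),
            round(dbus_consumed * high_rate, 2))


# 500 DBUs is an assumed workload, not a figure from Databricks.
low, high = dbu_cost_range(500)
print(f"500 DBUs cost between ${low:.2f} and ${high:.2f}")
```

The spread between the two ends is almost 6x, which is why the task type and support tier matter as much as the raw DBU count.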

Why it matters: Intelligence is only as good as the data it's built on. Databricks solves the "garbage in, garbage out" problem by making sure your AI is built on a foundation of high-quality, secure enterprise data.

3. Anyscale

Best for: Developers who want to scale their Python code from a laptop to a supercomputer without losing their minds.

Anyscale is the commercial home of "Ray," the open-source framework that powers the world's largest AI companies. Python is the language of AI, but it wasn't built to run on a thousand computers at once. Anyscale fixes this. It allows a developer to write code on their laptop and then, with a single command, run that exact same code across a massive cloud cluster. It removes the need for a giant "DevOps" team, allowing small groups of engineers to build world-class AI systems.
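
The "same code, bigger machine" idea can be sketched with nothing but the Python standard library. This is not Ray's API: a local thread pool stands in for a cluster scheduler, and `score`, `run_local`, and `run_parallel` are hypothetical names. The point is only that the unit of work stays untouched while the executor changes.

```python
from concurrent.futures import ThreadPoolExecutor


def score(x: int) -> int:
    """Stand-in for one unit of work, such as scoring a single record."""
    return x * x


def run_local(items):
    # Laptop mode: a plain sequential loop.
    return [score(x) for x in items]


def run_parallel(items, workers: int = 4):
    # Scaled-out mode: the same function handed to a pool of workers.
    # Executor.map returns results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(score, items))


items = list(range(8))
assert run_local(items) == run_parallel(items)
```

A distributed framework applies the same separation across many machines, which is where scheduling and failure handling become the hard part.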

- RayTurbo: Anyscale's optimized version of the Ray engine, built to run data processing and model training significantly faster than open-source Ray.

- Job Ghosting: An intelligent feature that automatically detects if a server is about to fail and moves your AI task to a healthy one before anything crashes.

- Spot Instance Orchestrator: Saves up to 80% on cloud costs by automatically using "leftover" server capacity and handling any interruptions gracefully.

- Anyscale Workspaces: Collaborative, cloud-based coding environments that look like VS Code but have access to unlimited GPU power.

- Multi-Cloud Support: You aren't locked into AWS or Google; you can move your AI workloads between different clouds to find the best prices.

Pricing: New users get $100 in free credits. Paid usage is billed per hour, with NVIDIA H100 instances costing around $9.28 per hour (including the management fee).

Why it matters: Scaling is usually a nightmare of complex configurations and broken connections. Anyscale makes scaling feel like "magic," allowing you to focus on the science of AI rather than the plumbing of the cloud.

4. Weights & Biases (W&B)

Best for: Teams of researchers who need to track thousands of experiments and stay organized.

Building an AI model is a bit like baking a cake, where you change one tiny ingredient every time to see if it tastes better. If you don't take perfect notes, you'll never remember which version was the best. Weights & Biases is the ultimate "lab notebook" for AI. It tracks every single experiment, every version of the code, and every result, creating beautiful charts that show exactly how your model is improving over time. It’s the platform that turns "guessing" into "science."
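
Here is what the "lab notebook" idea looks like stripped to its bones. This is a toy sketch, not the W&B API: the `Run` class, `best_run` helper, and the accuracy numbers are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    name: str
    config: dict
    metrics: list = field(default_factory=list)  # one dict per logged step

    def log(self, **values):
        """Append one step's worth of metrics to the notebook."""
        self.metrics.append(values)

    def best(self, key: str) -> float:
        """Highest value ever logged for `key` in this run."""
        return max(m[key] for m in self.metrics if key in m)


def best_run(runs, key: str) -> Run:
    """Pick the run whose best logged value for `key` is highest."""
    return max(runs, key=lambda r: r.best(key))


a = Run("lr-0.01", {"lr": 0.01})
a.log(step=1, accuracy=0.71)
a.log(step=2, accuracy=0.78)

b = Run("lr-0.10", {"lr": 0.10})
b.log(step=1, accuracy=0.74)
b.log(step=2, accuracy=0.69)

print(best_run([a, b], "accuracy").name)  # prints "lr-0.01"
```

Because every run keeps its config next to its metrics, the question "which ingredient made the cake better?" has an answer you can look up instead of remember.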

- The Dashboard: A real-time, visual view of your models as they train, letting you stop a "bad" experiment early to save money.

- W&B Weave: A specialized tool for tracking "traces" in generative AI, showing you exactly why a chatbot gave a specific (and sometimes weird) answer.

- Artifact Versioning: Automatically saves your datasets and models, so you can "time travel" back to any version of your project in seconds.

- Collaborative Reports: Allows you to turn your data into a professional blog-style report to share with stakeholders or teammates.

- Launch Integration: Connects directly to your GPU providers (like CoreWeave) so you can start a training run directly from the W&B interface.

Pricing: Free for personal use and academic research. For professional teams, it starts at $60 per user per month (billed annually), with 100GB of storage included.

Why it matters: Without organization, AI development is just expensive trial and error. W&B provides the discipline and visibility required to move from a hobby project to a professional, scalable product.

5. SkyPilot

Best for: Organizations that want to use multiple cloud providers at once to get the lowest possible price.

In 2026, GPUs are a scarce commodity: everyone needs them, and the price changes every hour. SkyPilot is an open-source platform that acts like a "travel agent" for your AI. You tell it what you want to run (e.g., "I need 8 H100 GPUs for 10 hours"), and SkyPilot scans 20+ different cloud providers to find the cheapest available option. It then automatically sets everything up, runs your job, and shuts it down when it's finished. It’s the ultimate tool for cost-conscious AI labs.
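
The "travel agent" step boils down to a filter and a minimum. The sketch below is a simplified illustration, not SkyPilot's code or API: the `Offer` class, the provider names, and every price are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Offer:
    provider: str
    gpu: str
    gpus_available: int
    price_per_gpu_hour: float  # USD, invented for illustration


def cheapest_offer(offers, gpu: str, count: int) -> Optional[Offer]:
    """Return the lowest-priced offer that can satisfy the request, or None."""
    candidates = [o for o in offers
                  if o.gpu == gpu and o.gpus_available >= count]
    return min(candidates, key=lambda o: o.price_per_gpu_hour, default=None)


offers = [
    Offer("cloud-a", "H100", 8, 4.10),
    Offer("cloud-b", "H100", 4, 2.90),   # cheapest, but too few GPUs
    Offer("cloud-c", "H100", 16, 3.50),
    Offer("cloud-d", "A100", 8, 1.80),   # wrong GPU type
]

pick = cheapest_offer(offers, gpu="H100", count=8)
total = pick.price_per_gpu_hour * 8 * 10  # 8 GPUs for 10 hours
print(pick.provider, round(total, 2))  # prints "cloud-c 280.0"
```

Notice that the lowest sticker price loses because it cannot fill the order. Availability is part of the price.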

- Multi-Cloud Pools: Group GPUs from AWS, Azure, Google Cloud, and smaller providers into one giant "virtual" cluster.

- Managed Spot Instances: It can cut your bills by 3-6x by using "spot" capacity and automatically resuming your work if the cloud takes the server back.

- One-Click Deployment: Turn a complex GitHub repository into a running AI service on any cloud with a single line of text.

- Resource Optimization: It intelligently picks the right amount of CPU and RAM to match your GPUs, so you aren't paying for power you don't use.

- Auto-Stop: A "kill switch" that ensures you never accidentally leave a $30/hour server running over the weekend.
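
Two numbers in the list above are worth turning into arithmetic: what "3-6x cheaper" means as a percentage, and what a forgotten $30/hour server costs. The helper names are hypothetical, and the 48-hour weekend is an assumption.

```python
def savings_fraction(reduction_factor: float) -> float:
    """Convert an 'Nx cheaper' claim into the fraction of the bill saved."""
    if reduction_factor < 1:
        raise ValueError("reduction_factor must be at least 1")
    return 1 - 1 / reduction_factor


def idle_cost(hourly_rate: float, idle_hours: float) -> float:
    """Money spent on a server that sits idle but is still billed."""
    return hourly_rate * idle_hours


for factor in (3, 6):
    print(f"{factor}x cheaper = {savings_fraction(factor):.0%} saved")

# Assumed idle window: Saturday and Sunday, 48 hours in total.
print(f"Idle weekend: ${idle_cost(30, 48):,.0f}")
```

A 3x reduction works out to roughly 67% saved and a 6x reduction to roughly 83%, while the idle weekend costs $1,440.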

Pricing: SkyPilot itself is an open-source tool (Free). You only pay for the raw cloud compute you use, and SkyPilot helps you find the lowest rates available in the market.

Why it matters: The biggest threat to an AI startup is the cloud bill. SkyPilot gives power back to the developers by making cloud providers compete for your business based on price and availability.

6. Tecton

Best for: Real-time AI applications like fraud detection or personalized shopping recommendations.

Most AI models are built on "static" data (stuff that happened in the past). But for things like catching a credit card thief, you need "real-time" data (what is happening right now). Tecton is a "feature store" that bridges this gap. It manages the flow of data so that your AI can use both historical patterns and live events to make a decision in milliseconds. It’s the "nervous system" for applications that need to react to the world as it happens.

- Batch and Streaming Integration: Seamlessly combines old data from your warehouse with live data from sources like Kafka or Kinesis.

- Feature Lineage: Tracks exactly where every piece of data came from, which is vital for legal compliance and debugging.

- Point-in-Time Correctness: A specialized feature that ensures your AI doesn't accidentally "see the future" during training, a data leak that would otherwise make the model look more accurate than it really is.

- Automated Data Pipelines: No more manual data cleaning; Tecton automates the process of turning raw numbers into "features" the AI can understand.

- Enterprise Security: Built-in governance to ensure that sensitive customer data is handled according to strict privacy laws.
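
Point-in-time correctness is easier to see in code than in prose. The sketch below is a generic "as-of" lookup, not Tecton's implementation: the feature, the timestamps, and the labels are all hypothetical. For each training label, it uses only the feature value that existed at or before the moment the label was observed.

```python
from bisect import bisect_right


def value_as_of(history, ts):
    """Latest feature value recorded at or before `ts`, or None.

    `history` is a list of (timestamp, value) pairs sorted by timestamp.
    """
    timestamps = [t for t, _ in history]
    idx = bisect_right(timestamps, ts)
    return history[idx - 1][1] if idx else None


# Hypothetical feature: a card's transaction count over the last hour.
txn_count_history = [(100, 1), (200, 4), (300, 9)]

# Training labels, each observed at a specific time.
label_events = [(150, "legit"), (250, "fraud"), (50, "legit")]

training_rows = [
    (ts, value_as_of(txn_count_history, ts), label)
    for ts, label in label_events
]
print(training_rows)
# prints [(150, 1, 'legit'), (250, 4, 'fraud'), (50, None, 'legit')]
```

A naive join would attach the latest value (9) to every row, quietly handing the model information from the future. The label at time 50 gets `None` because no feature value existed yet.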

Pricing: Now part of the Databricks ecosystem, pricing is generally integrated into broader enterprise contracts, but historical standalone pricing was around $1 per "credit" for usage.

Why it matters: A "slow" AI is a useless AI in many industries. Tecton provides the high-speed data delivery required to make AI work in the real world, where every millisecond counts.

7. Viam

Best for: Bridging the gap between AI software and physical hardware (robotics and IoT).

The future of AI isn't just on a screen; it's in the physical world. Viam is the infrastructure platform for "Physical AI." It provides a standardized way to connect AI models to cameras, sensors, and motors. Whether you are building a smart security camera, an automated warehouse robot, or a high-tech farm, Viam handles the complex networking and "edge" computing required to make hardware smart. It turns robotics development into something that feels like modern web development.

- Hardware Abstraction: Write one piece of code that works on any camera or motor, regardless of the brand or model.

- Edge Inference: Run your AI models directly on the device (like a Raspberry Pi or an NVIDIA Jetson) so it works even without an internet connection.

- Cloud Sync: Automatically uploads important data to the cloud for further analysis while keeping the "action" local for speed.

- Fleet Management: A central dashboard where you can see the health and status of thousands of different devices scattered around the world.

- No-Code Modules: A library of pre-built "blocks" for common tasks like face detection or obstacle avoidance that you can drag and drop into your project.
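
Hardware abstraction is the same trick web developers use with interfaces. The sketch below does not use the Viam SDK: the `Camera` protocol and both fake camera classes are invented to show the pattern, where application logic is written once against an interface and any device that satisfies it can be swapped in.

```python
from typing import Protocol


class Camera(Protocol):
    def capture(self) -> list[list[int]]:
        """Return one grayscale frame as rows of 0-255 pixel values."""
        ...


class FakeUsbCamera:
    def capture(self) -> list[list[int]]:
        return [[10, 20], [30, 40]]


class FakeIpCamera:
    def capture(self) -> list[list[int]]:
        return [[200, 210], [220, 230]]


def mean_brightness(camera: Camera) -> float:
    """Application logic written against the interface, not a brand."""
    frame = camera.capture()
    pixels = [p for row in frame for p in row]
    return sum(pixels) / len(pixels)


for cam in (FakeUsbCamera(), FakeIpCamera()):
    print(type(cam).__name__, mean_brightness(cam))
```

Swapping one camera for another changes a single constructor call. `mean_brightness` never needs to know which device it is talking to.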

Pricing: Free to start (no credit card required). After your first $5/month in usage, you pay for what you use (e.g., $0.15/GB for cloud data or $0.0025/second for compute).
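
For a feel of how metered pricing adds up, here is a small estimator using the example rates quoted above. The workload (40 GB of data and 2 hours of compute) is an assumption, the function name is hypothetical, and the sketch deliberately leaves out how the first $5/month is treated.

```python
def monthly_usage_cost(data_gb: float, compute_seconds: float,
                       gb_rate: float = 0.15,
                       second_rate: float = 0.0025) -> float:
    """Metered usage in USD at the example rates quoted above."""
    return round(data_gb * gb_rate + compute_seconds * second_rate, 2)


# Assumed workload: 40 GB of synced data and 2 hours of compute.
print(monthly_usage_cost(data_gb=40, compute_seconds=2 * 3600))
```

Per-second rates look tiny on paper, but $0.0025 per second is $9 per hour, so compute dominates this example bill.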

Why it matters: Hardware is notoriously hard. Viam removes the "robotics tax," allowing software engineers to bring their AI expertise into the physical world without needing a PhD in mechanical engineering.

Which one should you choose?

The "best" platform depends on the specific problem you are trying to solve. If you are an enterprise with high-security needs and messy data, Databricks (Mosaic AI) is your best bet. If you are a startup trying to stay lean and avoid massive cloud bills, you should be using SkyPilot to hunt for the best GPU deals. For those building complex agents that need to learn from experiments, Weights & Biases is non-negotiable. Finally, if you are looking to move your AI out of the cloud and into the physical world, Viam is the clear leader for the next generation of robotics.

How does this connect to building a strong career or portfolio?

The tech world has enough people who can write a "good prompt." What it lacks are the people who can build the systems that host those prompts. By mastering one of these infrastructure platforms, you are moving yourself into the "Top 1%" of tech talent. These are the skills that prove you can handle production-level challenges. When you showcase a project in your portfolio that demonstrates a "managed multi-cloud deployment" or a "real-time feature store," you are signaling to high-growth companies that you are ready to build the future, not just talk about it.

Showcase Your Proof of Work with Fueler

Building a scalable AI system is a massive achievement, and you shouldn't let it get buried in a GitHub repo that no one ever visits. Fueler is the perfect place to showcase the "how" behind your work. You can create a project entry that doesn't just show the final AI output, but includes your Anyscale dashboard logs, your Weights & Biases experiment charts, and your SkyPilot configuration files. This level of transparency is exactly what hiring managers at top AI firms are looking for. It proves your technical depth and makes your skills undeniable.

Final Thoughts

We are currently in the "Infrastructure Phase" of the AI revolution. Just like the early days of the internet required people to build the routers and the fiber-optic cables, today's AI requires people to build the GPU clusters and data pipelines. The seven tools mentioned above are the most important pieces of machinery in that construction project. Whether you are a solo developer or a CTO, these platforms will define how fast and how far you can go. Don't just follow the hype; master the tools that power it.

FAQs

1. Is AI infrastructure different from regular cloud computing?

Yes. While regular cloud (like basic AWS) provides general-purpose servers, AI infrastructure is specifically optimized for the high-speed "parallel processing" and massive data movement that neural networks require.

2. Why is "Multi-Cloud" becoming so popular for AI?

Because GPUs are often in short supply. Using a platform like SkyPilot allows you to jump to whichever cloud has available H100s at the best price, preventing your project from stalling.

3. Do these tools work with open-source models like Llama 3?

Absolutely. Several of these platforms are built with open models in mind: NVIDIA NIM ships containers for models like Llama and Mistral, while Mosaic AI and Anyscale support fine-tuning and serving them, which can be easier and cheaper than relying on closed APIs.

4. What is "Edge AI" and why does Viam focus on it?

Edge AI means running the "brain" on the actual device (like a drone or a camera) rather than in a distant cloud. This is vital for things that need to react instantly or work in areas with bad Wi-Fi.

5. How much can I save by using infrastructure tools like SkyPilot?

By using features like "Spot Instances" and "Multi-Cloud" price comparisons, teams often see their cloud bills drop by 50% to 80% compared to just using standard "On-Demand" cloud pricing.

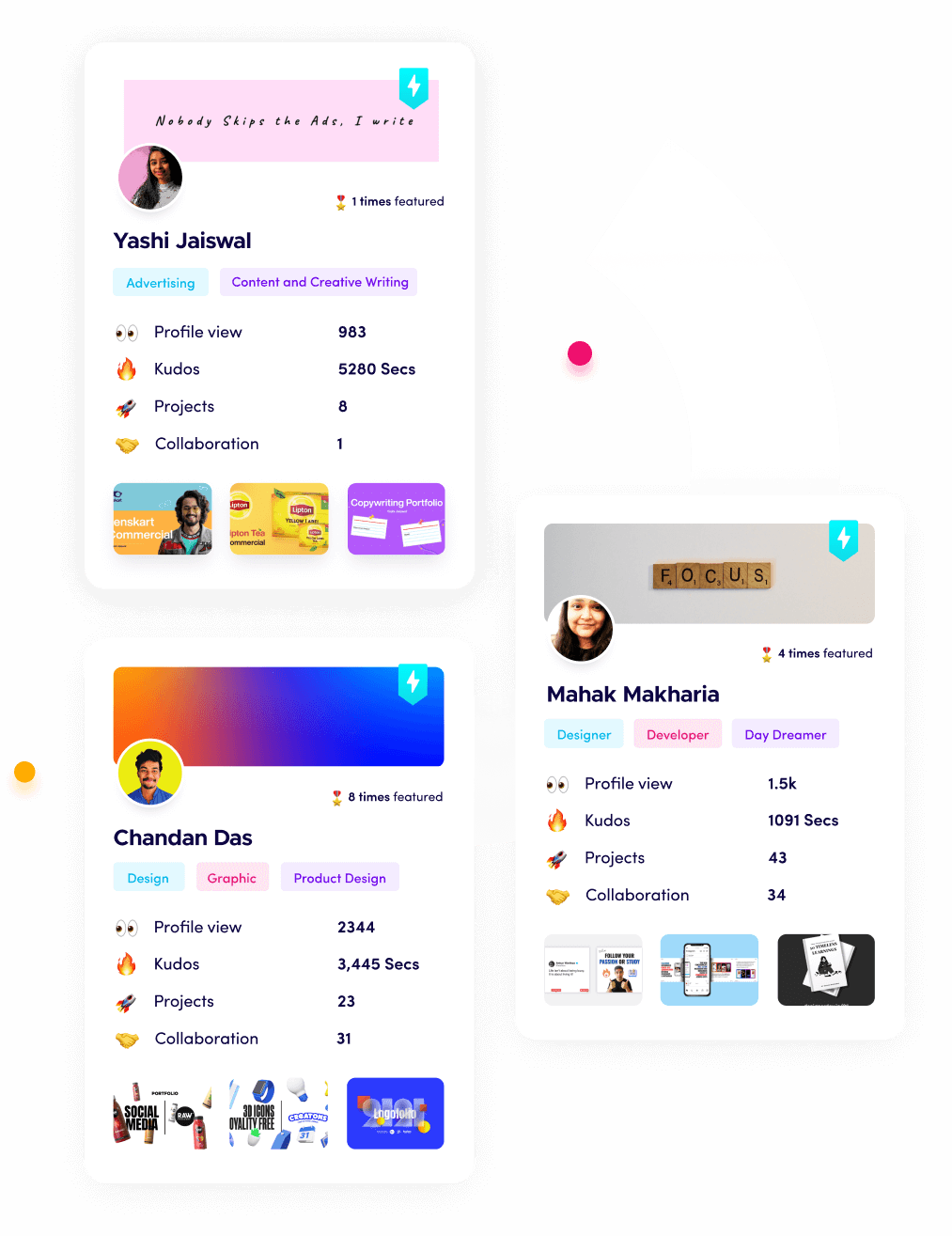

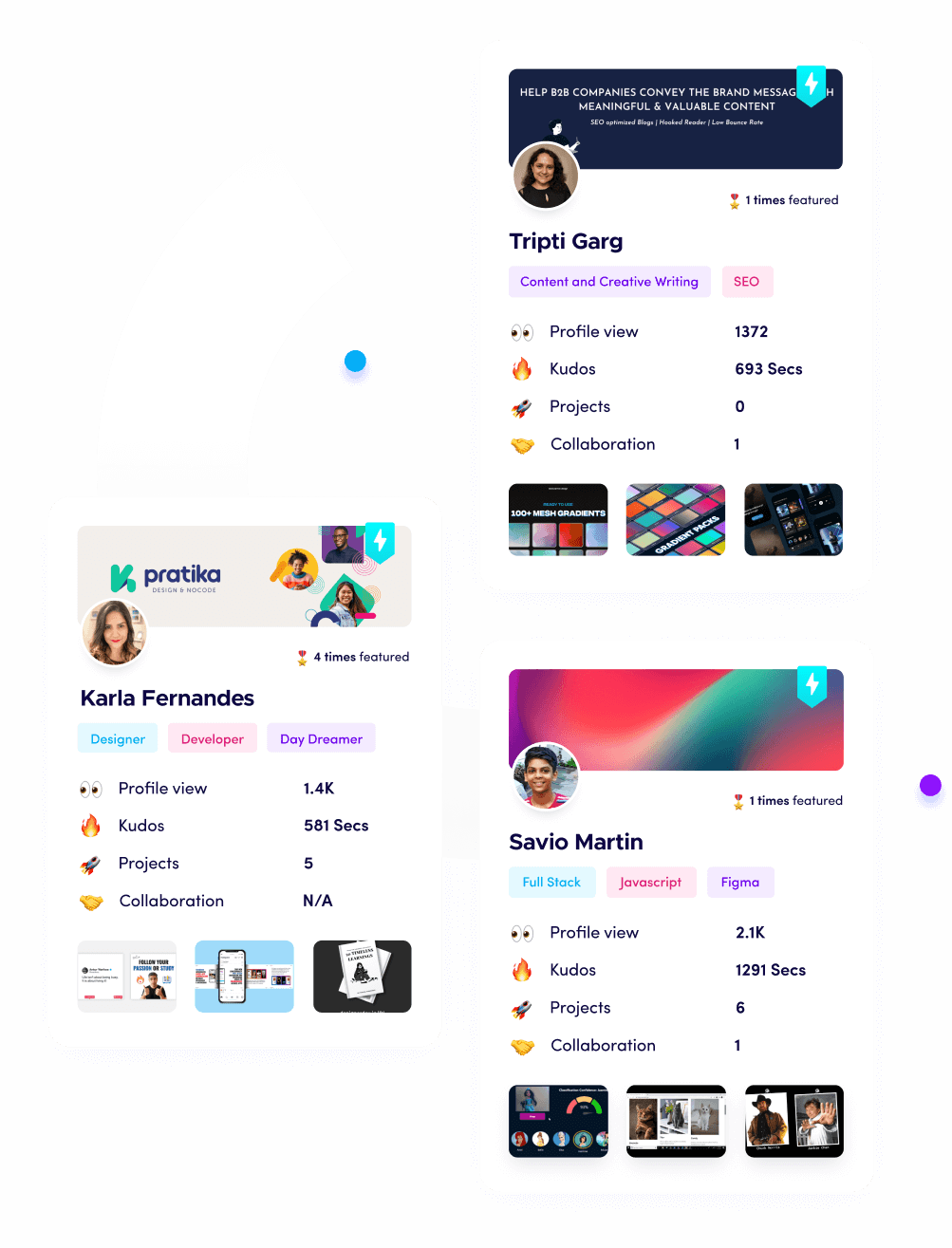

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.