The Hidden Infrastructure Powering the AI Boom

Riten Debnath

04 Apr, 2026

The world is currently fixated on the magic of Artificial Intelligence. We marvel at chatbots that write poetry, image generators that create art from thin air, and coding assistants that build software in seconds. But behind the curtain of every viral AI application lies a massive, complex, and often ignored city of digital machinery. If AI is the high-performance sports car, this hidden infrastructure is the refinery, the highway system, and the GPS combined. Without it, the AI Boom would be little more than a collection of impressive but unusable prototypes.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. The Physical Foundation: High-Performance GPU Infrastructure

When we talk about AI, we often think of the cloud, which sounds light and ethereal. In reality, the AI boom is grounded in some of the heaviest and most expensive hardware ever built. The infrastructure begins with specialized chips known as GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units). Unlike the standard processor in your laptop, these chips are designed to perform thousands of mathematical calculations simultaneously, which is exactly what a Large Language Model (LLM) needs to think. In 2026, the demand for these chips has reached a fever pitch as companies move from basic chatbots to massive, multi-modal systems.

- NVIDIA Blackwell B200 Architecture: This flagship chip packs 208 billion transistors. NVIDIA claims up to 30x faster LLM inference for its Blackwell-based rack systems compared with the previous Hopper generation, which has made it a primary engine for modern AI. It also incorporates a dedicated engine for reliability and security at the hardware level.

- Advanced Liquid Cooling Systems: Because these high-performance chips generate immense heat, often reaching up to 1000W per GPU, modern data centers have shifted from traditional air fans to advanced liquid-to-chip cooling loops to maintain stability and prevent hardware throttling during intense training sessions.

- HBM3e High-Bandwidth Memory: This specialized memory allows the GPU to read and write data at speeds of up to 8 TB/s, which is critical because AI models are so large that the processor often spends more time waiting for data than actually calculating if the memory isn't fast enough.

- NVLink 5 Interconnect Technology: This is a high-speed bridge that allows many GPUs (NVIDIA cites up to 576 in a single NVLink domain) to talk to each other as if they were one giant, unified super-chip. Clusters of thousands of GPUs are then stitched together over the data center network, which is a requirement for training the latest generation of models that contain trillions of parameters.

- Energy-Efficient FP4 Precision: A 4-bit floating-point format introduced in recent chip architectures that allows AI models to run using significantly less power and memory, usually with only a small loss in accuracy when applied carefully. Lower precision is one of the main ways the industry can keep increasing compute without a matching increase in energy use.
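To make the FP4 idea concrete, here is a minimal sketch of 4-bit floating-point rounding with one shared scale per block of weights. The magnitude table is the standard E2M1 value set; the block scaling scheme, the sample weights, and the 70-billion-parameter model are assumptions chosen for illustration, not a description of how any particular chip implements it.

```python
# Magnitudes representable by a 4-bit E2M1 float (the sign is a separate bit).
FP4_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(values):
    """Round a block of floats to FP4 using one shared scale.

    Returns (scale, dequantized_values) so the rounding error can be inspected.
    """
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return 1.0, [0.0] * len(values)
    # Map the largest magnitude in the block onto the largest FP4 value (6.0).
    scale = max_abs / FP4_MAGNITUDES[-1]
    dequantized = []
    for v in values:
        target = abs(v) / scale
        nearest = min(FP4_MAGNITUDES, key=lambda m: abs(m - target))
        dequantized.append(nearest * scale if v >= 0 else -nearest * scale)
    return scale, dequantized


def weight_memory_gb(num_params, bits_per_param):
    """Memory needed just to hold the weights, in gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


weights = [0.12, -0.53, 0.9, -0.02, 0.31, 0.6]  # made-up sample weights
scale, approx = quantize_block(weights)
max_error = max(abs(w - a) for w, a in zip(weights, approx))
print("dequantized:", approx)
print("largest rounding error:", max_error)

# Hypothetical 70-billion-parameter model.
print(weight_memory_gb(70e9, 16))  # 140.0 GB at 16 bits per weight
print(weight_memory_gb(70e9, 4))   # 35.0 GB at 4 bits per weight
```

The sketch shows both sides of the trade: the weights shrink to a quarter of their 16-bit size, but every value picks up a small rounding error, which is why real deployments validate accuracy after quantizing.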

Why it matters to the AI Boom:

Without this physical layer, the software has nowhere to live. The sheer scale of this hardware infrastructure is the reason AI can train on trillions of words and still answer a prompt in seconds. Understanding the hardware layer is the first step in realizing that AI isn't just code; it is a massive industrial operation that requires world-class engineering to stay upright, functional, and economically viable for long-term use.

2. LLMOps: Managing the AI Life Cycle at Scale

Building an AI model is only a small part of the journey. The real challenge is keeping it running, accurate, and cost-effective once it is released to the public. This is where LLMOps (Large Language Model Operations) comes into play. Think of LLMOps as the factory management system for AI. It ensures that when you type a prompt, the model responds quickly, the cost is managed, and the answer is actually useful. It bridges the gap between a data scientist’s experiment and a product used by millions of people every day.

- Automated Evaluation (LLM-as-a-Judge): This involves using a second, highly capable AI model to automatically grade and critique the responses of your main AI application to ensure quality remains high and consistent without needing thousands of humans to manually review every single chat.

- Prompt Version Control and Orchestration: This practice treats AI instructions like software code, allowing engineering teams to test different versions of a prompt, track changes over time, and instantly roll back to a safer version if a new update starts causing the AI to behave poorly or give wrong answers.

- Inference Optimization and Quantization: Developers use these techniques to shrink the mathematical size of models so they run much faster and cheaper on less powerful hardware, which is the only way to make AI features affordable for the average user without a noticeable drop in intelligence.

- Real-Time Guardrail Integration: These are safety layers that sit between the user and the AI, acting as a filter that blocks toxic content, prevents the AI from giving medical advice it isn't qualified for, and ensures private company data is never accidentally leaked in a response.

- Granular Token Usage Tracking: This is the detailed monitoring of how many tokens (word fragments) each user is consuming in real-time, which is essential for billing purposes and to prevent a single buggy application or malicious user from running up a million-dollar cloud bill in a single night.
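As one illustration of the token tracking idea above, the following sketch keeps a per-user token count and refuses requests that would push a user past a daily cap. The class name, the limit, and the price per 1,000 tokens are all hypothetical; a production system would persist the counters and reset them on a schedule.

```python
from collections import defaultdict


class TokenBudgetExceeded(Exception):
    """Raised when a request would push a user past their daily cap."""


class TokenUsageTracker:
    def __init__(self, daily_limit_per_user, price_per_1k_tokens):
        self.daily_limit_per_user = daily_limit_per_user
        self.price_per_1k_tokens = price_per_1k_tokens
        self._used = defaultdict(int)  # user_id -> tokens consumed today

    def record(self, user_id, prompt_tokens, completion_tokens):
        """Add one request's tokens to the user's total, or reject it."""
        total = prompt_tokens + completion_tokens
        if self._used[user_id] + total > self.daily_limit_per_user:
            raise TokenBudgetExceeded(f"{user_id} would exceed the daily cap")
        self._used[user_id] += total
        return self._used[user_id]

    def used(self, user_id):
        return self._used[user_id]

    def cost(self, user_id):
        """Estimated spend for this user so far, in the pricing currency."""
        return self._used[user_id] / 1000 * self.price_per_1k_tokens


# Assumed numbers: 1,000 tokens per user per day at 0.002 per 1,000 tokens.
tracker = TokenUsageTracker(daily_limit_per_user=1000, price_per_1k_tokens=0.002)
tracker.record("alice", prompt_tokens=300, completion_tokens=200)
print(tracker.used("alice"), tracker.cost("alice"))
```

Rejecting the request before the model is called is the design choice that matters here: the cap only protects the bill if it is enforced ahead of inference, not reconciled afterwards.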

Why it matters to the AI Boom:

The history of tech is full of cool ideas that failed because they couldn't scale. LLMOps is the discipline that allows AI to scale safely. It moves AI out of the research lab and into the real world, transforming it from a temperamental experimental tool into a reliable utility that businesses can trust with their customers and their most sensitive internal data.

3. Vector Databases: The Secret to AI Long-Term Memory

Standard databases store information in rows and columns, like an Excel sheet. However, AI does not see the world in rows; it sees the world in mathematical relationships called vectors. If you ask an AI about a "regal feline", a standard keyword search looks for those exact words and finds nothing. A vector database recognizes that a document about a lion, the "king of the jungle", is a close match because it stores the mathematical meaning of words. This is the hidden memory that allows AI to remember your company’s 1,000-page manual or your entire personal chat history from a year ago.
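The "mathematical meaning" here is usually compared with cosine similarity. The sketch below uses tiny hand-made three-number vectors as stand-ins for real embeddings, which typically have hundreds or thousands of dimensions and come from an embedding model; the words, the numbers, and the meaning assigned to each dimension are invented for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings; dimensions loosely mean (animal, royalty, vehicle).
EMBEDDINGS = {
    "lion": [0.9, 0.8, 0.0],
    "house cat": [0.9, 0.1, 0.0],
    "pickup truck": [0.0, 0.1, 0.9],
}

query = [0.8, 0.9, 0.1]  # stand-in embedding for "regal feline"

scores = {word: cosine_similarity(query, vec) for word, vec in EMBEDDINGS.items()}
best_match = max(scores, key=scores.get)
print(best_match)  # lion
```

No word in the query appears in the stored entries, yet "lion" still ranks first because its vector points in nearly the same direction as the query's.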

- Semantic Similarity Search Capability: Instead of searching for literal keywords, these databases find information based on the intent and context of the user's query, meaning the AI can find the right answer even if the user uses completely different words than the source document.

- Hybrid Metadata Filtering: This allows developers to combine vector searches with traditional data filters, such as asking the AI to find all marketing documents related to AI that were specifically written after 2025 and approved by the legal department, adding a layer of precision to the AI's memory.

- Real-Time Data Ingestion and Indexing: This ensures that as soon as a new document or email is uploaded to a company's system, the AI can read, understand, and remember it within milliseconds without needing to undergo a long and expensive retraining process for the entire model.

- Multi-Tenant Data Isolation: This is a security feature that keeps different users' data logically separate within the same database, for example in per-tenant namespaces, ensuring that one person’s private information or a specific department's secret project never accidentally ends up in another person’s AI response.

- Horizontal Scalability for Big Data: Modern vector databases are built to grow from a few thousand documents to billions of data points across multiple servers without slowing down, which is the only way global corporations can provide AI assistants that know everything about their entire history.
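Two of the features above, hybrid metadata filtering and multi-tenant isolation, can be sketched with a toy in-memory store. This is not the API of any real vector database; `TinyVectorStore`, its methods, and the sample documents are hypothetical, and the brute-force scan stands in for the approximate indexes real systems use.

```python
import math


def _cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


class TinyVectorStore:
    """Toy store: one namespace per tenant, brute-force similarity search."""

    def __init__(self):
        self._namespaces = {}  # tenant -> {doc_id: (vector, metadata)}

    def upsert(self, tenant, doc_id, vector, metadata):
        self._namespaces.setdefault(tenant, {})[doc_id] = (vector, metadata)

    def query(self, tenant, vector, top_k=3, where=None):
        # Isolation: only the caller's own namespace is ever scanned.
        docs = self._namespaces.get(tenant, {})
        # Hybrid filtering: apply the metadata predicate before ranking.
        candidates = [
            (doc_id, vec) for doc_id, (vec, meta) in docs.items()
            if where is None or where(meta)
        ]
        candidates.sort(key=lambda item: _cosine(vector, item[1]), reverse=True)
        return [doc_id for doc_id, _ in candidates[:top_k]]


store = TinyVectorStore()
store.upsert("acme", "m1", [0.9, 0.1], {"dept": "marketing", "year": 2026, "approved": True})
store.upsert("acme", "m2", [0.8, 0.2], {"dept": "marketing", "year": 2024, "approved": True})
store.upsert("acme", "e1", [0.1, 0.9], {"dept": "engineering", "year": 2026, "approved": True})
store.upsert("globex", "g1", [0.9, 0.1], {"dept": "marketing", "year": 2026, "approved": True})

hits = store.query(
    "acme",
    [1.0, 0.0],
    where=lambda m: m["dept"] == "marketing" and m["year"] > 2025 and m["approved"],
)
print(hits)  # ['m1']
```

Filtering before ranking keeps the result precise: `m2` is semantically close to the query but is excluded by its year, and `g1` is never considered because it belongs to a different tenant.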

Why it matters to the AI Boom:

AI models have a cutoff date for their knowledge. Vector databases solve this by giving the AI eyes and ears to the present moment. This infrastructure is what makes AI useful for specific businesses rather than just being a general-purpose toy, allowing it to act as a specialized expert for any industry by providing it with a massive, searchable long-term memory.

4. AI Data Pipelines: Refineries for Synthetic and Real Data

AI is only as good as the data it consumes. However, the internet is increasingly filled with messy, biased, or repetitive information. Data pipelines are the hidden refineries that take raw, messy data from the internet or private company servers and clean, label, and format it. In 2026, we have also seen the rise of Synthetic Data, where AI models are used to create high-quality training data for other AI models to fill in gaps where real-world data is missing or too sensitive to use.

- Automated Data Cleaning and Deduplication: These pipelines use machine learning to automatically remove typos, duplicate entries, and junk text from massive datasets before training begins, ensuring the AI doesn't learn bad habits or waste its memory on useless information.

- Synthetic Data Generation for Rare Cases: This involves creating realistic but fake data to train AI in scenarios like fraud detection or rare medical conditions, where real-world examples are hard to find, allowing the AI to become an expert even in topics with limited public information.

- PII Anonymization and Scrubbing: These systems automatically detect and remove names, addresses, and phone numbers from data to comply with global privacy laws like GDPR, ensuring the AI can learn from the patterns in the data without ever seeing the private details of real people.

- Bias Mitigation and Balancing Loops: These tools check datasets for unfair patterns, such as gender or racial bias, and automatically balance the data to ensure the AI remains fair and neutral in its decision-making process once it is deployed to the public.

- Multimodal Data Processing: This is the ability to take images, audio files, and video transcripts and convert them into a unified format that a single model can process and learn from simultaneously, which is how modern AIs learn to see, hear, and speak all at once.
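A stripped-down version of the cleaning steps above might look like the following. It is a sketch under simplifying assumptions: duplicates are found by hashing normalized text rather than with machine learning, and PII scrubbing covers only emails and phone-like numbers via regular expressions. Detecting names and addresses generally needs a trained entity-recognition model, which is out of scope here. The sample records are invented.

```python
import hashlib
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Loose phone pattern: a digit, 7+ digits or separators, then a final digit.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub_pii(text):
    """Replace emails and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def normalize(text):
    """Lowercase and collapse whitespace so trivial variants hash the same."""
    return " ".join(text.lower().split())


def clean_corpus(records):
    """Drop near-verbatim duplicates, then scrub PII from what remains."""
    seen = set()
    cleaned = []
    for record in records:
        key = hashlib.sha256(normalize(record).encode("utf-8")).hexdigest()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(scrub_pii(record.strip()))
    return cleaned


raw = [
    "Contact Ana at ana@example.com or +1 (555) 010-9999.",
    "  contact ana at ANA@example.com or +1 (555) 010-9999. ",
    "Order 42 shipped.",
]
for line in clean_corpus(raw):
    print(line)
```

Deduplicating before scrubbing is deliberate: it avoids spending regex work on records that are about to be discarded.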

Why it matters to the AI Boom:

We are reaching a point where high-quality human-written text on the internet is becoming scarce. The future of the AI boom depends on the infrastructure that can find, clean, and even create the next generation of training data. High-quality data pipelines ensure that AI gets smarter over time instead of hitting a ceiling due to a lack of new things to learn.

5. Security and Governance: Guardrails for Enterprise AI

As AI becomes more integrated into our lives, the risks of jailbreaking or data leaks increase. What if an AI is tricked into giving away a company secret? The security and governance layer of AI infrastructure acts as the digital police force. It monitors every interaction to ensure the system is being used safely and ethically. This layer is often invisible to the end user, but it is currently the highest priority for CEOs and government regulators worldwide who are worried about the risks of uncontrolled intelligence.

- Prompt Injection Defense Systems: These are specialized firewalls that detect when a user is trying to hack the AI by using clever language to trick it into ignoring its safety rules, acting maliciously, or revealing its internal instructions to the public.

- Response Redaction and Content Filtering: This is a final check that scans the AI's generated output for sensitive information, like credit card numbers or internal passwords, before it is ever displayed to the user, acting as a last line of defense against data leaks.

- AI Explainability and Audit Modules: These tools help humans understand exactly why an AI made a certain decision or gave a specific answer, which is critical for legal, medical, and financial applications where a human must be able to justify the AI's actions.

- Role-Based Access Control (RBAC): This ensures that an employee can only ask the AI questions about data they are officially allowed to see within the company, preventing a junior staff member from accidentally accessing sensitive CEO-level financial reports through a chatbot.

- Automated Adversarial Testing: This involves constantly sending thousands of attack prompts at the AI during its development phase to find weaknesses in its logic and safety filters before a real hacker has the chance to exploit them in the real world.
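Two of the controls above, role-based access and response redaction, are simple enough to sketch. The role names, document labels, and placeholder text are hypothetical. The card detector pairs a loose digit pattern with the Luhn checksum to cut false positives; it is an illustration, not a complete data-loss-prevention filter.

```python
import re

# Hypothetical mapping from role to the document labels it may read.
ROLE_PERMISSIONS = {
    "junior_analyst": {"public", "team"},
    "cfo": {"public", "team", "financials"},
}

# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def allowed_documents(role, documents):
    """Keep only documents whose label the role is permitted to read."""
    permitted = ROLE_PERMISSIONS.get(role, set())
    return [doc for doc in documents if doc["label"] in permitted]


def luhn_valid(number):
    """Luhn checksum used by payment card numbers."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    checksum = 0
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        checksum += digit
    return checksum % 10 == 0


def redact_cards(text):
    """Mask digit runs that look like cards and pass the Luhn check."""
    def replace(match):
        return "[REDACTED CARD]" if luhn_valid(match.group()) else match.group()
    return CARD_RE.sub(replace, text)


documents = [
    {"id": "handbook", "label": "public"},
    {"id": "q3-financials", "label": "financials"},
]
print([d["id"] for d in allowed_documents("junior_analyst", documents)])
print(redact_cards("Card 4111 1111 1111 1111 on file."))
```

The access filter runs before retrieval so the model never sees restricted documents, while the redaction pass runs on the model's output as the last check before display.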

Why it matters to the AI Boom:

One major security breach could stop the entire AI industry in its tracks. This hidden security infrastructure provides the safety and permission for large-scale adoption. It allows a bank to use AI for loans or a hospital to use AI for diagnosis without fearing a catastrophic failure, a massive data breach, or a multi-million dollar lawsuit.

Tool-Specific Deep Dive: The Infrastructure Leaders

To build these complex layers, professional developers rely on a specific set of tools that handle the heavy lifting. These platforms are the reason why a small team of three people can now build an AI application that serves millions of users across the globe.

LangSmith by LangChain

LangSmith is a professional platform designed to help teams debug, test, and monitor their AI applications. It acts like a microscope for your AI's thought process, allowing you to see exactly where a conversation went wrong or why a model is responding slowly.

- Full Trace Visibility and Debugging: View every single step of an AI's logic in a visual timeline, including exactly which database it searched and which specific prompt it used to generate the final answer you see on your screen.

- Automated Regression Testing Suites: Run thousands of mock conversations every time you change your code to ensure a new update hasn't made the AI dumber, slower, or more prone to making mistakes that it didn't make yesterday.

- Dynamic Dataset Curation: Easily turn real user conversations into gold standard test cases that the AI can use to learn from its past mistakes, helping the model's accuracy improve every single day based on real-world feedback.

- Granular Performance Monitoring: Track the exact time it takes for each part of your AI chain to run, helping you find and fix slow spots before your users even notice that the application is lagging.

- Pricing Structure: LangSmith offers a generous free tier for personal projects and startups, with professional team plans typically scaling based on the number of traces or logs processed per month.
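The core idea behind tracing and per-step timing can be shown without any vendor library. The sketch below is plain Python and is not LangSmith's API: the `traced` decorator, the `TRACE` list, and the two pipeline steps are hypothetical stand-ins for a real tracing backend and a real retrieval-plus-generation chain.

```python
import functools
import time

TRACE = []  # in-memory list standing in for a tracing backend


def traced(step_name):
    """Decorator that records how long each call to a pipeline step takes."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            finally:
                # Record the span even if the step raised an exception.
                elapsed = time.perf_counter() - start
                TRACE.append({"step": step_name, "seconds": elapsed})
        return wrapper
    return decorator


@traced("retrieve")
def retrieve(question):
    # Placeholder for a vector database lookup.
    return ["doc-1", "doc-2"]


@traced("generate")
def generate(question, documents):
    # Placeholder for a model call.
    return f"Answer to {question!r} using {len(documents)} documents"


def answer(question):
    return generate(question, retrieve(question))


result = answer("What is NVLink?")
slowest = max(TRACE, key=lambda span: span["seconds"])
print(result)
print("slowest step:", slowest["step"])
```

A hosted tracing platform adds storage, a visual timeline, and links between spans, but the underlying record is the same: which step ran, in what order, and how long it took.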

Why it matters to the AI Boom:

It significantly speeds up the development process by removing the guesswork. Instead of guessing why an AI is hallucinating or giving weird answers, developers can use LangSmith to pinpoint the exact data point or instruction causing the issue, leading to much more reliable products for the public.

Pinecone Vector Database

Pinecone is a widely used managed vector database that provides long-term memory for AI applications. It is built to handle billions of pieces of information while still returning search results in milliseconds, making it a common choice for RAG (Retrieval-Augmented Generation) systems.

- Fully Managed Serverless Scaling: You don't have to worry about managing servers or hardware; the database automatically grows or shrinks its capacity based on how much data you are storing and searching at any given time.

- High-Speed Semantic Search: Uses approximate nearest-neighbor (ANN) indexing, the family of algorithms that includes HNSW, to find the most relevant information almost instantly, even when searching through a database that contains a billion different text or image entries.

- Real-Time Data Indexing and Updates: Unlike some search engines that need to re-index for hours, Pinecone allows you to add, delete, or change information in real-time with zero downtime, so your AI always has the latest facts.

- Integrated Enterprise Security: Features built-in encryption for data at rest and in transit, along with private connection options to ensure that sensitive company data never touches the public internet during the search process.

- Pricing Structure: Pinecone offers a Free Forever tier for small projects and learning, while their paid plans follow a transparent pay-for-what-you-use model based on storage volume and the number of searches performed.

Why it matters to the AI Boom:

It makes AI smart about your specific world. Without a retrieval layer like Pinecone, an AI only knows what it learned during its initial training, which may be months or years old. With it, that same AI can answer questions about your specific company's latest news, private documents, or real-time sales data, with answers grounded in the retrieved source material rather than guesswork.

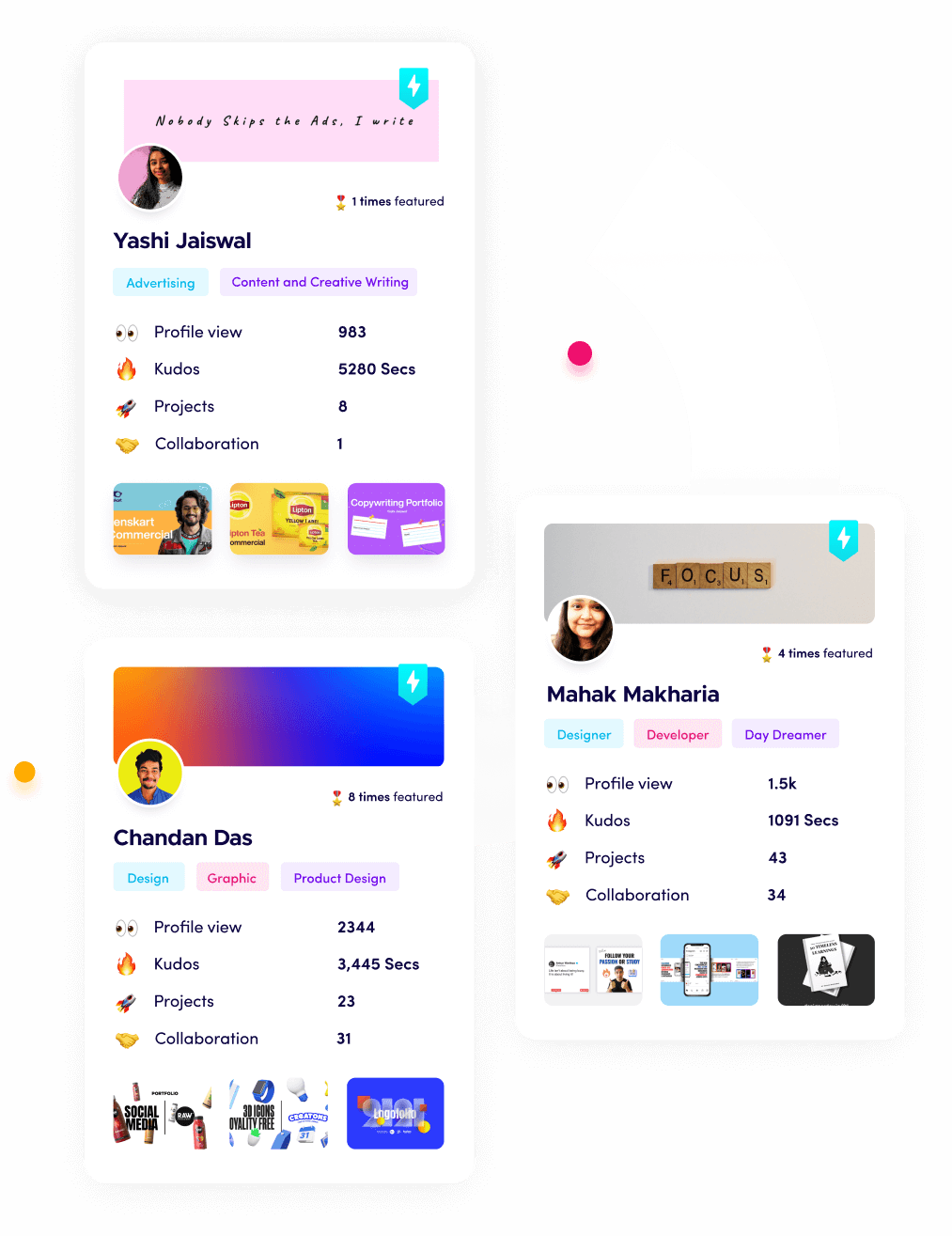

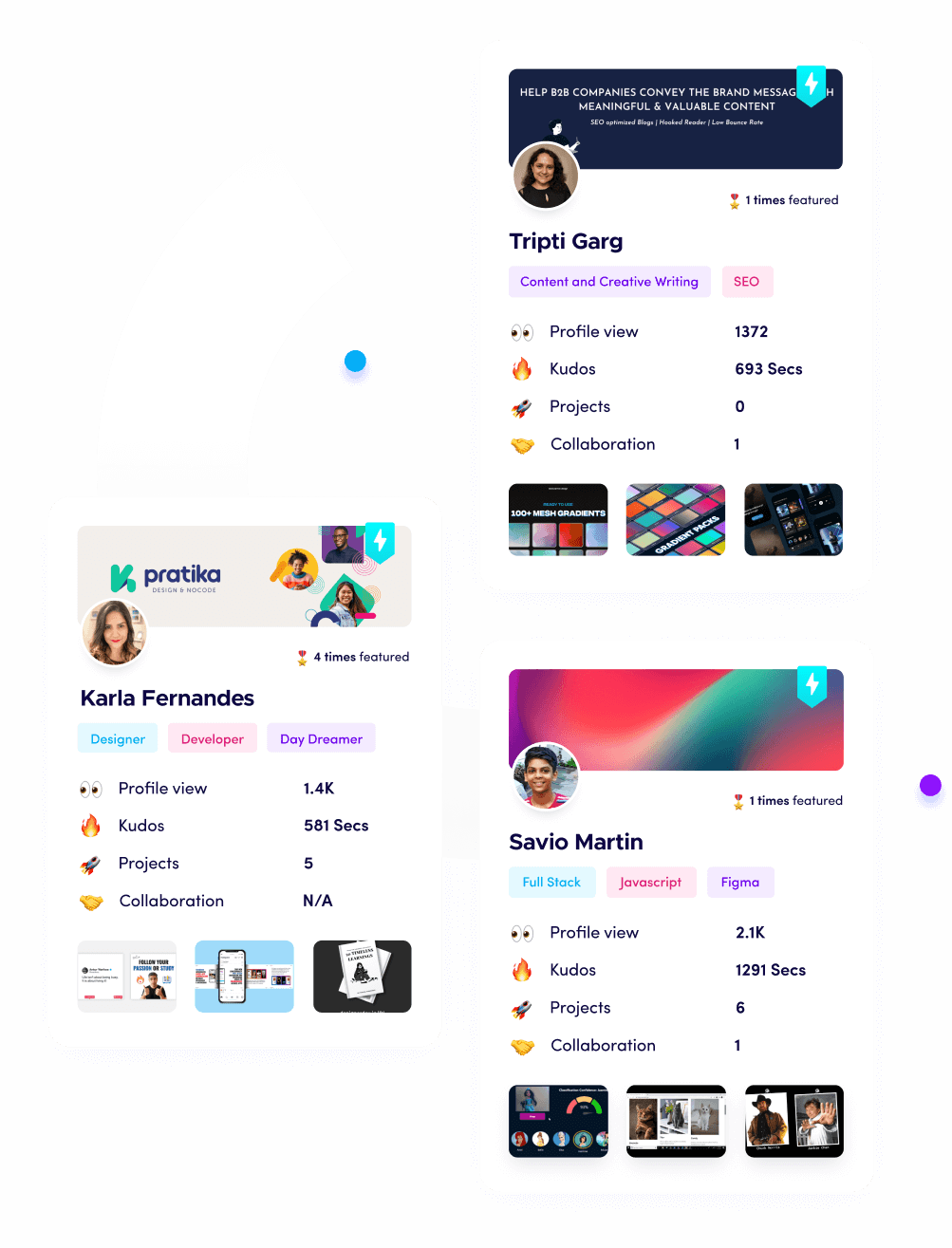

Promoting Fueler: Showcase Your Infrastructure Skills

As the tech world shifts toward these complex AI infrastructures, the way you demonstrate your value to employers must change. It is no longer enough to just list AI as a skill on a flat resume. Companies are looking for people who can actually build and manage these layers.

Using Fueler, you can document your journey by uploading your LLMOps projects, vector database experiments, and AI assignments. By creating a proof of work portfolio, you allow companies to see your actual skills in action. Whether you've built a custom RAG system or a model monitoring dashboard, Fueler helps you stand out in a crowded market where traditional CVs are becoming less effective. Show the world what you can build, not just what you've studied.

Final Thoughts

The AI boom is a massive architectural achievement that goes far beyond simple chatbots. While the brains of the operation get all the headlines, it is the body of the infrastructure (the chips, the operations, the databases, and the security) that makes it all possible. As we move further into 2026, understanding these hidden layers will be one of the most valuable skills in the technology industry. If you want to build a career in this space, stop looking at the surface and start looking at the plumbing. Dive into the infrastructure, because that is where the real power and the real future of intelligence reside.

FAQs

What are the most essential LLMOps tools for a beginner in 2026?

Beginners should start with LangChain for building their first AI applications, Pinecone for learning how AI memory works, and Helicone for basic monitoring. These tools have the largest online communities and offer free tiers that make it easy to learn without any upfront financial investment.

How can I get hired in AI infrastructure without a computer science degree?

The industry is moving toward a Proof of Work model. Build a portfolio on a platform like Fueler that showcases real projects, such as a chatbot trained on your own documents or a monitoring system you've set up for an open-source model. Real work samples are often more valuable than a degree in this fast-moving field.

Is AI infrastructure more expensive to maintain than traditional web apps?

Yes, it is significantly more expensive. AI requires specialized hardware like GPUs and higher data processing costs for every single user request. This is why LLMOps tools that focus on inference optimization and cost tracking are so important for keeping a business profitable while using AI features.

What is the main difference between MLOps and LLMOps?

MLOps was originally designed for traditional machine learning, like predicting stock prices or house values. LLMOps is a specialized branch that handles the unique challenges of Large Language Models, such as managing complex prompts, preventing AI hallucinations, and handling massive amounts of unstructured text and image data.

How can I learn about AI hardware and GPU infrastructure for free?

You can follow technical engineering blogs from companies like NVIDIA, Anthropic, and Google Cloud, or watch deep-dive engineering talks on platforms like YouTube. Many cloud providers also offer free credits or student programs that allow you to experiment with high-performance GPUs for a short period at no cost.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.