7 Self-Learning AI Agents That Improve Without Human Intervention

Riten Debnath

24 Feb, 2026

Software in 2026 is moving away from the "set it and forget it" model. For years, we relied on AI that was static: it knew only what it was trained on and stayed that way until a human developer pushed an update. Today, one of the most exciting frontiers in technology is Self-Learning AI Agents. These are autonomous systems designed to observe their own performance, learn from their mistakes, and rewrite parts of their own logic to become more efficient. They don't just follow instructions; they evolve, turning every interaction into a lesson that makes them sharper for the next task.

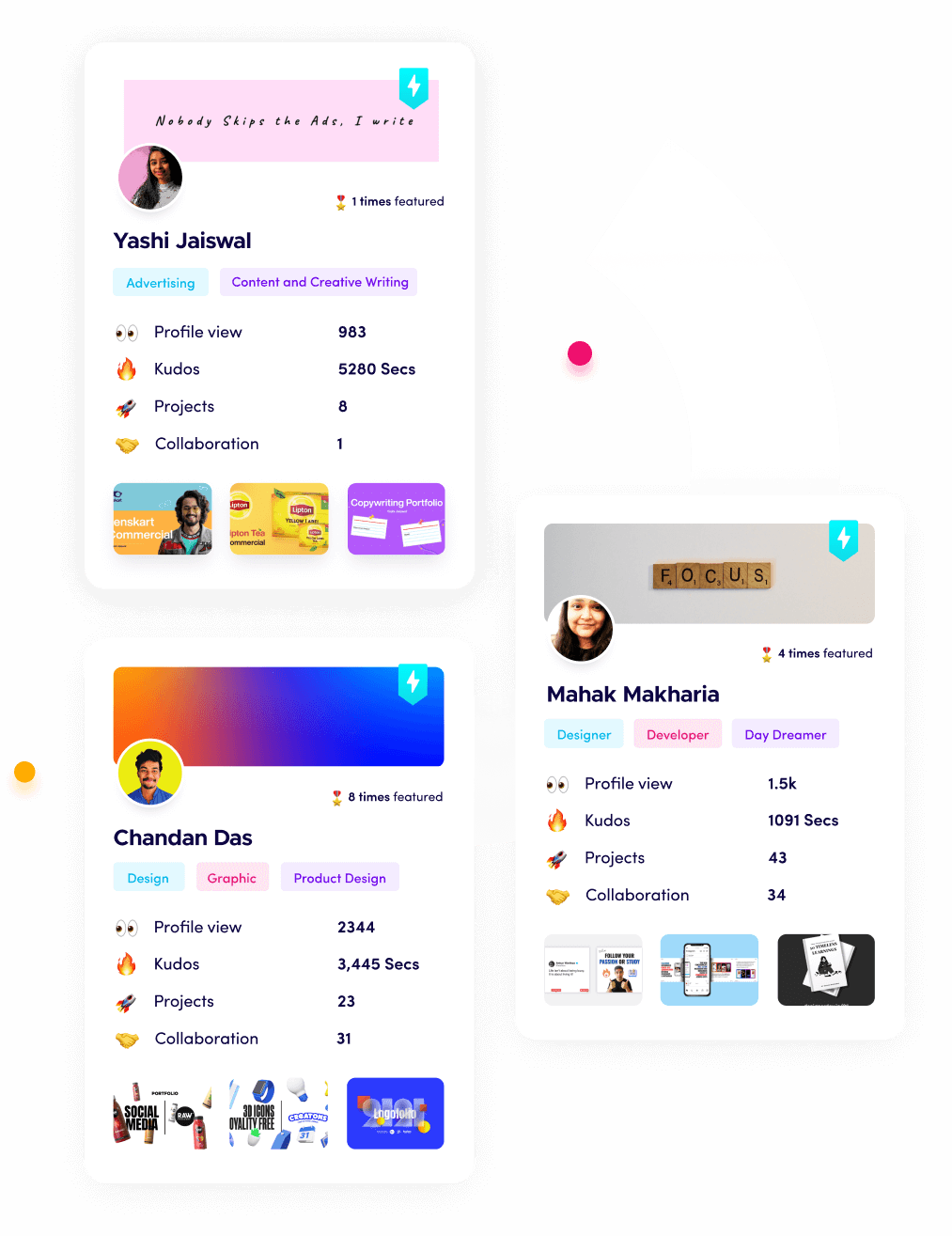

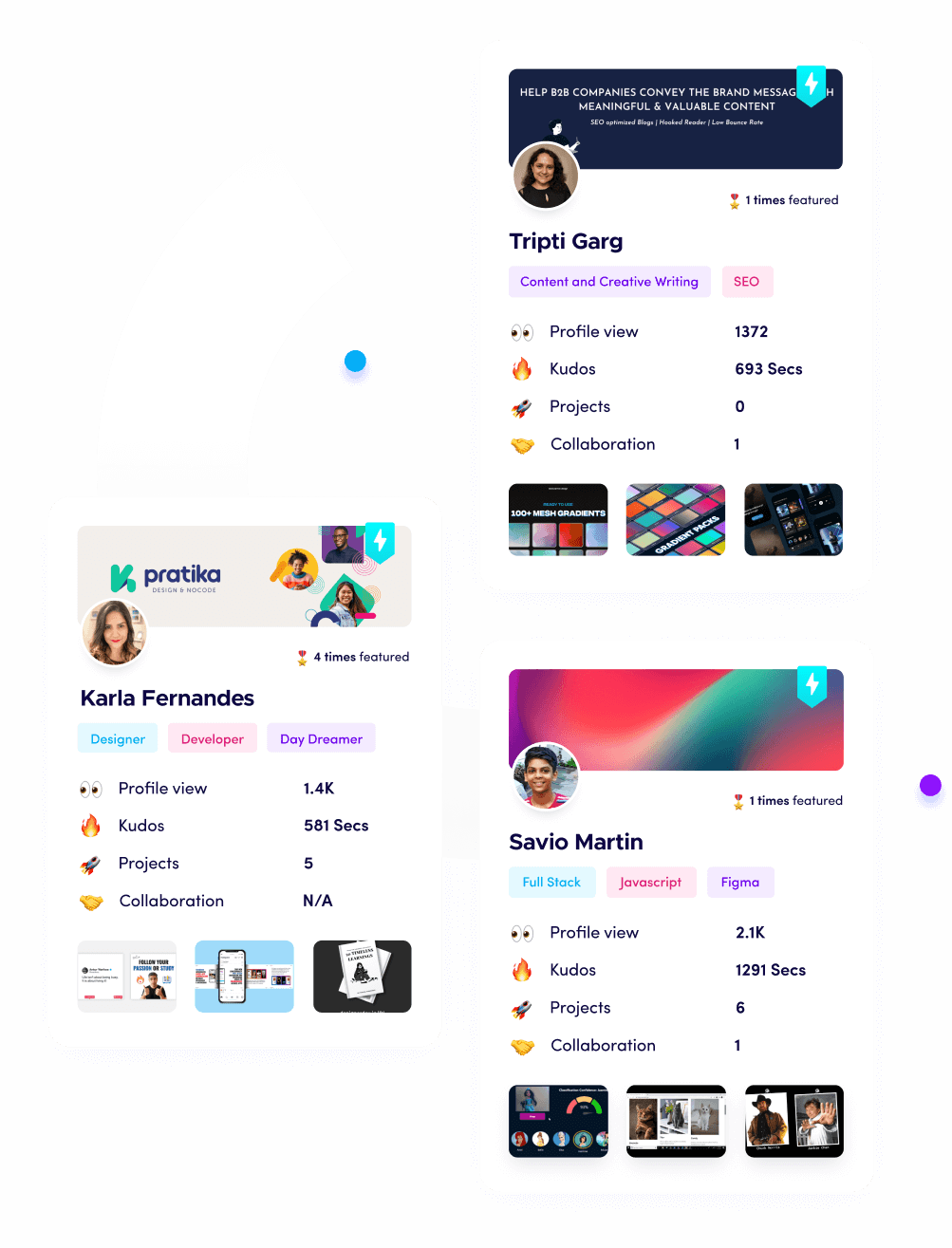

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. Recursive Code-Evolving Agents

Recursive code-evolving agents are perhaps the most "sci-fi" technology currently active in 2026. These agents are built with a primary directive: optimize your own source code. They work by creating a digital twin of their own architecture, running simulations to find bottlenecks, and then writing patches to improve their processing speed or reasoning accuracy. Instead of waiting for a version 2.0 from a developer, these agents effectively deploy versions 2.1, 2.2, and 2.3 to themselves every few hours, so small improvements compound over time.

- Autonomous Logic Refactoring: These agents scan their own decision-making scripts to find redundant loops or inefficient branches, rewrite them into more streamlined code, and then run a series of self-tests to confirm the new version is more stable than the last one, so the software stays optimized without manual intervention from a human engineer.

- Algorithmic Self-Selection: When faced with a complex problem, the agent can spawn multiple versions of itself with slightly different algorithms, compare which one solves the task fastest, and then "absorb" the winning code into its primary core for all future operations, essentially performing millions of mini-experiments to find the perfect solution path.

- Persistent Bug Self-Healing: If an agent encounters a runtime error, it doesn't crash. Instead, it isolates the broken module, analyzes the stack trace, writes a fix, and redeploys itself in a sandboxed environment to verify the repair before going back to work, meaning the system gets more robust the more it is actually used.

- Dynamic Tool Synthesis: When these agents realize they lack a specific capability, like the ability to parse a new file format, they write a custom script or "tool" from scratch, add it to their permanent library, and learn how to use it for future tasks, effectively building their own specialized toolbox as they grow.

- Zero-Human Versioning: The entire lifecycle of these agents happens without manual intervention, meaning the software you start with on Monday could be significantly more capable and faster by Friday solely through its own internal evolution cycles, providing a massive competitive advantage to teams that deploy them.

Why it matters:

These recursive systems represent the ultimate form of software efficiency because they eliminate the need for manual maintenance. This self-driven evolution is a primary reason why these agents are trending in 2026.
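
The "spawn variants, self-test, absorb the winner" cycle described in this section can be illustrated with a small sketch. It assumes a toy workload (summing squares) and three hand-written candidate functions standing in for agent-generated rewrites; all function names here are hypothetical, and a real agent would generate its candidates rather than have them supplied.

```python
import timeit
from typing import Callable, Iterable

def sum_squares_loop(n: int) -> int:
    """Current implementation: a straightforward loop."""
    total = 0
    for i in range(n):
        total += i * i
    return total

def sum_squares_formula(n: int) -> int:
    """Candidate rewrite: closed form for 0^2 + 1^2 + ... + (n-1)^2."""
    return (n - 1) * n * (2 * n - 1) // 6

def sum_squares_buggy(n: int) -> int:
    """Candidate rewrite that is fast but wrong; the self-tests must reject it."""
    return n * n

def passes_self_tests(fn: Callable[[int], int]) -> bool:
    """Known input/output pairs act as the regression suite."""
    cases = {0: 0, 1: 0, 4: 14, 10: 285}
    return all(fn(n) == expected for n, expected in cases.items())

def select_best(current: Callable[[int], int],
                candidates: Iterable[Callable[[int], int]],
                workload: int = 2_000,
                repeats: int = 50) -> Callable[[int], int]:
    """Promote the fastest candidate that passes the self-tests, else keep current."""
    best = current
    best_time = timeit.timeit(lambda: current(workload), number=repeats)
    for candidate in candidates:
        if not passes_self_tests(candidate):
            continue  # never promote a version that fails verification
        elapsed = timeit.timeit(lambda: candidate(workload), number=repeats)
        if elapsed < best_time:
            best, best_time = candidate, elapsed
    return best

winner = select_best(sum_squares_loop, [sum_squares_buggy, sum_squares_formula])
print(winner.__name__)
```

The key design choice is that correctness gates promotion: speed is only compared among candidates that already pass the self-tests, so the fast but incorrect variant is never adopted.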

2. Experience-Driven Embodied Agents

Embodied agents are designed to learn by "doing" within complex, open-ended environments like Minecraft or simulated robotics labs. In 2026, these agents use a mechanism called an "Automatic Curriculum" where they set their own goals based on what they’ve already learned. If an agent discovers it can craft a wooden tool, it autonomously decides its next goal should be finding stone. They store these successful behaviors in a "Skill Library," which acts as a permanent memory of executable code they can call upon whenever they face a similar challenge.

- Curiosity-Driven Exploration: These agents are programmed to seek out "novelty," meaning they prioritize exploring unknown areas of their environment to discover new items or interactions, ensuring they are always expanding their knowledge base rather than just repeating the same tasks over and over again for comfort.

- Modular Skill Libraries: Every time an agent solves a new problem, it saves the exact sequence of code as a reusable function in a long-term library, allowing it to build increasingly complex behaviors by combining simple skills it mastered months ago into a sophisticated multi-step solution for new challenges.

- Internal Feedback Loops: Instead of needing a human to say "good job," these agents use self-verification systems to check if their actions achieved the intended result, such as checking if an item was successfully crafted, and then using that feedback to refine their future execution strategies.

- Cross-Environment Generalization: Once an agent masters a set of skills in one simulation, it can "zero-shot" transfer that knowledge to a completely different world or robot body, drastically reducing the time it takes to become productive in a new setting because it doesn't have to relearn basic physics.

- Infinite Task Scaling: Because these agents manage their own learning path, they can stay active for weeks or months, slowly climbing a "tech tree" of complexity that would be impossible for a human to pre-program, leading to discoveries and optimizations that human developers might never have even considered.

Why it matters:

By treating experiences as reusable code, these agents solve the problem of "forgetting" that plagues older AI models. This lifelong learning is a hallmark of the top self-improving agents in 2026.
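
To make the automatic curriculum and skill library more concrete, here is a minimal sketch. It assumes a made-up four-item tech tree (`RECIPES`) and a simulated world where crafting always succeeds once the prerequisites are held; the `Agent` class and its methods are illustrative names, not the API of any real embodied-agent framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# Hypothetical toy tech tree: item -> items required before it can be obtained.
RECIPES: Dict[str, List[str]] = {
    "wood": [],
    "wooden_pickaxe": ["wood"],
    "stone": ["wooden_pickaxe"],
    "stone_pickaxe": ["wood", "stone"],
}

@dataclass
class Agent:
    inventory: Set[str] = field(default_factory=set)
    skills: Dict[str, List[str]] = field(default_factory=dict)  # goal -> stored plan

    def next_goal(self) -> Optional[str]:
        """Automatic curriculum: first item not yet owned whose prerequisites are met."""
        for item, needs in RECIPES.items():
            if item not in self.inventory and all(n in self.inventory for n in needs):
                return item
        return None

    def attempt(self, goal: str) -> bool:
        # Reuse a stored skill if one exists, otherwise draft a new plan.
        plan = self.skills.get(goal) or [f"use {n}" for n in RECIPES[goal]] + [f"obtain {goal}"]
        # Simulated execution: succeeds only if the prerequisites are in the inventory.
        if all(n in self.inventory for n in RECIPES[goal]):
            self.inventory.add(goal)
        succeeded = goal in self.inventory  # self-verification against the world state
        if succeeded:
            self.skills[goal] = plan  # store the working plan for later reuse
        return succeeded

def run(agent: Agent, max_steps: int = 10) -> List[str]:
    achieved = []
    for _ in range(max_steps):
        goal = agent.next_goal()
        if goal is None:
            break
        if agent.attempt(goal):
            achieved.append(goal)
    return achieved

agent = Agent()
print(run(agent))
print(sorted(agent.skills))
```

The agent is never told the order of goals. It derives the order from what it already holds, and each verified success becomes a stored skill it can look up instead of re-planning.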

3. Verbal Reinforcement (Reflexion) Agents

Verbal reinforcement agents improve by engaging in "Self-Talk" or internal critique. Instead of updating model weights, which is slow and expensive, these agents write a linguistic summary of what went wrong after a failure. This summary is stored as a "Hint" in their memory. On the next attempt, they read their own previous advice to avoid making the same mistake twice. In the original Reflexion paper, this method raised pass@1 accuracy on the HumanEval coding benchmark from 80% (the GPT-4 baseline) to 91% without any additional training data.

- Natural Language Self-Critique: After every task, the agent runs a "Critic" module that looks at the final output and identifies specific logical errors or missing information, writing a detailed note to itself about what should be changed next time to achieve a more accurate and reliable result.

- Episodic Memory Retrieval: Before starting a new assignment, the agent searches its historical logs for similar past failures, reads the "lessons learned" it wrote previously, and incorporates that wisdom into its current planning process to ensure it doesn't fall into the same traps twice.

- Multi-Step Plan Revision: If an agent realizes halfway through a task that its initial plan is failing, it can "stop and think," write a new strategy based on the current context, and pivot its actions immediately, which makes it much more resilient to unexpected changes in the environment.

- Transparent Learning Process: Unlike traditional AI where learning is hidden in complex numbers, you can actually read a Reflexion agent's "thoughts," which makes it incredibly easy for developers to understand exactly why an agent is improving or where its logic might still be flawed.

- High-Efficiency Alignment: By learning through language rather than retraining, these agents can be updated and improved in seconds rather than hours, allowing them to adapt to a user's specific style or a company's unique standards with very few examples of "correct" behavior.

Why it matters:

The ability to "think before acting" allows these agents to solve problems that require deep reasoning rather than just pattern matching. This cognitive growth is a key feature of autonomous systems in 2026.
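
The attempt, critique, and retry cycle can be sketched in a few lines. This is a simplified illustration, not the Reflexion reference implementation: the `actor` and `reflect` functions are hard-coded stand-ins for what would be language model calls, and the task (writing an absolute-value function) is chosen only to keep the example self-contained.

```python
from typing import Callable, List, Optional, Tuple

Solution = Callable[[int], int]

def actor(hints: List[str]) -> Solution:
    """Stand-in for an LLM call: improves once a stored hint mentions negatives."""
    if any("negative" in hint for hint in hints):
        return lambda x: -x if x < 0 else x
    return lambda x: x  # naive first attempt

def evaluator(solution: Solution) -> Tuple[bool, str]:
    """Unit tests provide the feedback signal."""
    for given, expected in [(3, 3), (0, 0), (-2, 2)]:
        got = solution(given)
        if got != expected:
            return False, f"absolute({given}) returned {got}, expected {expected}"
    return True, "all tests passed"

def reflect(feedback: str) -> str:
    """Stand-in for the self-critique step: turn raw feedback into written advice."""
    return f"Last attempt failed: {feedback}. Next time, handle negative inputs explicitly."

def reflexion_loop(max_trials: int = 3) -> Tuple[Optional[Solution], List[str], int]:
    memory: List[str] = []  # episodic memory of plain-text lessons
    for trial in range(1, max_trials + 1):
        solution = actor(memory)          # the actor reads its earlier notes
        passed, feedback = evaluator(solution)
        if passed:
            return solution, memory, trial
        memory.append(reflect(feedback))  # store the lesson; no weights are updated
    return None, memory, max_trials

solution, memory, trials = reflexion_loop()
print(trials)
print(memory)
```

Note that the only thing that changes between trials is the contents of `memory`. That is what makes the learning process readable: every improvement can be traced to a sentence the agent wrote to itself.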

4. Meta-Learning (Learning-to-Learn) Agents

Meta-learning agents are the "master students" of the AI world. Instead of learning a specific task like "how to write an email," they focus on mastering the process of learning itself. They are trained on thousands of different types of problems so they can find the "underlying patterns" of problem-solving. When you give a meta-learning agent a task it has never seen before, it doesn't start from zero. Instead, it applies its "learning strategies" to become an expert in the new domain in just a few minutes.

- Few-Shot Task Adaptation: While traditional AI needs millions of data points, a meta-learning agent can master a new skill with just 5 or 10 examples because it understands how to quickly fine-tune its internal parameters to match the new requirements of the specific environment.

- Optimized Parameter Initialization: These agents find the "perfect starting point" for their internal settings, ensuring that they are always just a few small adjustments away from being an expert in any given field, whether that is legal research, medical analysis, or creative writing.

- Cross-Domain Knowledge Transfer: Because they learn "how to learn," these agents can take a logic pattern they found useful in finance and apply it to a problem in engineering, making them the most versatile and flexible tools in a developer's arsenal this year.

- Dynamic Optimization Strategies: The agent monitors its own learning speed and can autonomously decide to change its "learning rate" or "attention focus" if it feels it is not picking up a new concept fast enough, essentially managing its own education like a self-aware student.

- Resilience to Data Scarcity: In fields where there is very little information available, such as rare disease research, these agents excel because they rely on their "meta-knowledge" to fill in the gaps that would normally stop a standard machine learning model in its tracks.

Why it matters:

These agents solve the "brittleness" of old AI, creating systems that are actually useful in the messy, unpredictable real world. This adaptability is driving the massive adoption of meta-learners in 2026.
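
The idea of a learned "perfect starting point" can be shown with a toy version of the Reptile meta-learning algorithm. This is a minimal sketch under strong simplifying assumptions: the model has a single weight `w`, every task is a line `y = slope * x` with the slope drawn between 2 and 4, and the hyperparameters are arbitrary illustrative values.

```python
import random

XS = [-1.0, -0.5, 0.5, 1.0]  # shared inputs; each task is y = slope * x

def loss(w: float, slope: float) -> float:
    """Mean squared error of the one-parameter model y = w * x on one task."""
    return sum((w * x - slope * x) ** 2 for x in XS) / len(XS)

def sgd_steps(w: float, slope: float, steps: int, lr: float = 0.1) -> float:
    """Inner loop: a few gradient steps on a single task."""
    for _ in range(steps):
        grad = sum(2 * (w * x - slope * x) * x for x in XS) / len(XS)
        w -= lr * grad
    return w

def reptile(meta_iters: int = 500, inner_steps: int = 5,
            meta_lr: float = 0.1, seed: int = 0) -> float:
    """Outer loop: nudge the initialization toward each task's adapted weight."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(meta_iters):
        slope = rng.uniform(2.0, 4.0)   # sample a training task
        adapted = sgd_steps(w, slope, inner_steps)
        w += meta_lr * (adapted - w)    # Reptile update
    return w

w_meta = reptile()
new_task = 3.5  # a slope the model was not trained on specifically
few_shot = sgd_steps(w_meta, new_task, steps=3)
from_scratch = sgd_steps(0.0, new_task, steps=3)
print(loss(few_shot, new_task), loss(from_scratch, new_task))
```

With the same three adaptation steps, the run that starts from the meta-learned weight should end with a much lower loss than the run that starts from zero, because the initialization already sits near the middle of the task family.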

5. Self-Supervised Knowledge Graph Agents

Knowledge graph agents improve by constantly "cleaning" and "connecting" their own internal databases. In 2026, these agents act like autonomous researchers that crawl through company documents, chat logs, and codebases to build a visual map of how everything is related. As they encounter new information, they look for contradictions in their existing graph, resolve them, and update their understanding of the world without being told to do so by a human.

- Automated Entity Relationship Mapping: These agents can read thousands of scattered PDF files and automatically identify that "Project Alpha" is related to "Client B" and uses "Server X," creating a structured web of knowledge that is far more useful than a simple folder of documents.

- Conflict Detection and Resolution: When an agent finds two pieces of information that disagree, such as two different prices for the same service, it proactively searches for a third source or checks the "date of publication" to determine which fact is current and correct.

- Proactive Insight Generation: By looking at the connections in their graph, these agents can "predict" things that aren't explicitly stated, such as identifying that a certain team is likely to be overworked next month based on their current project load and historical performance.

- Semantic Memory Pruning: To stay fast and efficient, these agents autonomously delete outdated or irrelevant information from their database, ensuring that the "brain" of the company stays focused on the most important and high-impact data points available.

- Contextual Query Expansion: When a user asks a simple question, the agent uses its knowledge graph to pull in "related" context that the user didn't even know to ask for, providing a much more comprehensive and helpful answer than a standard search tool could ever manage.

Why it matters:

By managing their own knowledge, these agents prevent "information rot" within organizations. This self-curating ability is a top reason why knowledge agents are a major trend in 2026.
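
Conflict resolution by publication date and pruning of stale facts can be sketched with a small in-memory structure. The sketch assumes each subject and relation pair holds a single value, that "newest wins" is an acceptable resolution rule, and that facts arrive already extracted; the `Fact` and `KnowledgeGraph` names, file names, and sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Tuple

@dataclass(frozen=True)
class Fact:
    subject: str
    relation: str
    value: str
    published: date
    source: str

class KnowledgeGraph:
    def __init__(self) -> None:
        self.facts: Dict[Tuple[str, str], Fact] = {}
        self.conflicts: List[Tuple[Fact, Fact]] = []  # audit trail of disagreements

    def add(self, fact: Fact) -> None:
        key = (fact.subject, fact.relation)
        existing = self.facts.get(key)
        if existing is not None and existing.value != fact.value:
            self.conflicts.append((existing, fact))   # conflict detected
        if existing is None or fact.published > existing.published:
            self.facts[key] = fact                    # the most recent source wins

    def prune(self, cutoff: date) -> int:
        """Delete facts published before the cutoff; return how many were removed."""
        stale = [key for key, fact in self.facts.items() if fact.published < cutoff]
        for key in stale:
            del self.facts[key]
        return len(stale)

    def related(self, subject: str) -> Dict[str, str]:
        """Everything currently known about a subject, keyed by relation."""
        return {rel: f.value for (subj, rel), f in self.facts.items() if subj == subject}

kg = KnowledgeGraph()
kg.add(Fact("Service A", "price", "$100", date(2025, 1, 10), "old_quote.pdf"))
kg.add(Fact("Service A", "price", "$120", date(2026, 2, 1), "price_list.pdf"))
kg.add(Fact("Project Alpha", "client", "Client B", date(2024, 6, 1), "kickoff.pdf"))
print(kg.related("Service A"))
print(len(kg.conflicts))
```

Keeping the `conflicts` list, rather than silently overwriting, gives a human reviewer a record of every disagreement the agent resolved on its own.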

6. Real-Time Feedback Loop Agents

Feedback loop agents are designed for high-stakes environments like stock trading or customer service, where the "ground truth" changes every second. These agents use reinforcement learning to measure the success of every single action they take. If a customer hangs up happy, the agent gives itself a "reward" and remembers the exact phrases it used. If a trade loses money, it takes a "penalty" and immediately adjusts its risk model for the next transaction, making it less likely to repeat the same mistake under the same market conditions.

- Instantaneous Reward Processing: These agents are built with "online learning" architectures that allow them to update their behavior models in milliseconds, making them perfect for environments where waiting even an hour to retrain would result in massive financial or operational losses.

- Human-in-the-Loop Refinement: While they learn autonomously, these agents can also "ask for a grade" from a human supervisor during edge cases, using that specific feedback to sharpen their decision-making for similar future scenarios where the correct path might be morally or legally gray.

- Environment-Driven Strategy Shifts: The agent can detect when the "rules" of its environment have changed, such as a new government regulation or a change in consumer sentiment, and automatically pivot its strategy to stay compliant and effective without needing a manual update.

- A/B Testing Autonomy: These agents can run their own internal "split tests," trying out two different ways of solving a problem simultaneously and then permanently switching to the more successful method once they have enough data to be statistically certain of the winner.

- Risk-Aware Exploration: Unlike older models that might take reckless actions to learn, 2026 feedback agents use "Safe Reinforcement Learning" to ensure they only experiment with new ideas within pre-defined safety boundaries that prevent catastrophic system failures.

Why it matters:

These agents turn "failure" into a valuable data point rather than a system crash. This resilience is a core requirement for the self-learning systems being built this year.
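
The reward-and-update cycle, combined with autonomous split testing, corresponds closely to a multi-armed bandit. Below is a minimal epsilon-greedy sketch. It assumes a simulated customer-service setting with two hypothetical reply styles and fixed satisfaction probabilities that the agent cannot see; production systems would use richer state and stricter safety constraints.

```python
import random
from typing import Dict, List

class EpsilonGreedyAgent:
    def __init__(self, actions: List[str], epsilon: float = 0.1, seed: int = 0) -> None:
        self.values: Dict[str, float] = {a: 0.0 for a in actions}  # running mean reward
        self.counts: Dict[str, int] = {a: 0 for a in actions}
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def choose(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.values))    # explore
        return max(self.values, key=self.values.get)     # exploit the best estimate

    def update(self, action: str, reward: float) -> None:
        """Online learning: incremental mean, updated after every single interaction."""
        self.counts[action] += 1
        n = self.counts[action]
        self.values[action] += (reward - self.values[action]) / n

def simulate(rounds: int = 2000, seed: int = 1) -> EpsilonGreedyAgent:
    env = random.Random(seed)
    satisfaction = {"formal": 0.3, "empathetic": 0.7}    # hidden from the agent
    agent = EpsilonGreedyAgent(list(satisfaction))
    for _ in range(rounds):
        action = agent.choose()
        reward = 1.0 if env.random() < satisfaction[action] else 0.0
        agent.update(action, reward)
    return agent

agent = simulate()
print(agent.values)
print(agent.counts)
```

Because each update touches only one running average, the agent adjusts after every interaction without a separate retraining job. The `epsilon` parameter controls how much traffic is spent on exploration, which is the knob a risk-aware deployment would keep small.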

7. Collaborative Multi-Agent Evolving Systems

The most advanced self-learning happens when agents work in teams. In 2026, developers are building systems where different agents have roles like "Teacher," "Student," and "Examiner." The Teacher agent generates difficult practice problems, the Student agent tries to solve them, and the Examiner agent grades the performance. Because they are all AI, they can run through millions of these "study sessions" in a single afternoon, allowing the whole system to reach expert-level performance in a fraction of the time it would take a single model.

- Self-Play Training Cycles: Similar to how AI learned to beat humans at chess and Go, these agents "play" against themselves to find weaknesses in their own logic, constantly pushing the boundaries of what the system is capable of achieving through internal competition.

- Peer-to-Peer Knowledge Sharing: When one agent in a cluster learns a new trick or discovers a shortcut, it can "broadcast" that update to the rest of the team, allowing the entire organizational AI to get smarter simultaneously rather than one agent at a time.

- Specialized Role Evolution: Over time, the agents in a team will "specialize" based on their performance, with some becoming experts in security and others in user experience, ensuring that the collective "crew" has the right balance of skills for any project that comes their way.

- Automated Performance Auditing: The system includes dedicated "Auditor Agents" that constantly look for biases, errors, or "laziness" in the other agents, providing an internal quality control mechanism that keeps the entire autonomous workforce operating at peak efficiency.

- Scalable Collective Intelligence: You can add more agents to the system as your company grows, and the existing agents will automatically "onboard" the new ones by sharing their learned skill libraries and operational guidelines, making the AI workforce incredibly easy to scale.

Why it matters:

Collective learning is faster and more stable than individual learning. This "teamwork" is the final frontier of self-improving AI that developers are mastering in 2026.
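
The Teacher, Student, and Examiner roles can be sketched as three small classes that pass data in a loop. This is a deliberately simple illustration: the "subject" is a multiplication table, the student learns by memorizing corrections, and all class names are hypothetical rather than taken from a multi-agent framework.

```python
from itertools import product
from typing import Dict, List, Tuple

Problem = Tuple[int, int]

class Teacher:
    """Generates practice problems and raises the difficulty after a perfect score."""
    def __init__(self) -> None:
        self.level = 1

    def problems(self) -> List[Problem]:
        return list(product(range(1, self.level + 1), repeat=2))

    def adjust(self, score: float) -> None:
        if score == 1.0:
            self.level += 1

class Student:
    """Answers from memory and learns from the examiner's corrections."""
    def __init__(self) -> None:
        self.memory: Dict[Problem, int] = {}

    def answer(self, problem: Problem) -> int:
        return self.memory.get(problem, 0)  # unknown problems get a default guess

    def learn(self, corrections: Dict[Problem, int]) -> None:
        self.memory.update(corrections)

class Examiner:
    """Grades answers against ground truth and returns the corrections."""
    def grade(self, problems: List[Problem], answers: List[int]):
        corrections = {p: p[0] * p[1] for p, a in zip(problems, answers) if a != p[0] * p[1]}
        score = 1 - len(corrections) / len(problems)
        return score, corrections

def study_sessions(rounds: int = 6):
    teacher, student, examiner = Teacher(), Student(), Examiner()
    scores = []
    for _ in range(rounds):
        problems = teacher.problems()
        answers = [student.answer(p) for p in problems]
        score, corrections = examiner.grade(problems, answers)
        student.learn(corrections)
        teacher.adjust(score)
        scores.append(score)
    return teacher, student, scores

teacher, student, scores = study_sessions()
print(teacher.level, len(student.memory))
print(scores)
```

Each role only sees what it needs: the teacher sees scores, the student sees corrections, and the examiner holds the ground truth. That separation is what lets the difficulty rise automatically as the student improves.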

Show Your Proof of Work on Fueler

As these self-learning agents become the standard, the way you prove your skills as a developer must change. You are no longer just a "coder"; you are an "Agent Architect." Fueler is the best platform to showcase these complex projects. Instead of a boring CV, use Fueler to show how you built an agent that improves over time, including the feedback loops, memory structures, and self-correction logic you implemented. This "Proof of Work" is what top companies in 2026 are looking for when they hire for high-level AI roles.

Final Thoughts

The shift from static AI to self-learning agents is the most significant change in software history. We are moving toward a world where our tools grow with us, getting smarter, faster, and more reliable every single day. For the 10th-grade student or the seasoned developer, the message is clear: the future belongs to those who can build and manage these evolving systems. By understanding these 7 types of self-improving agents, you are preparing yourself for a career where your software is a living, breathing partner in your success.

FAQs

Can self-learning AI agents become "too smart" or dangerous?

In 2026, developers use "Guardrail Agents" and "Constrained Optimization" to ensure that as an agent evolves, it stays within safe ethical and operational boundaries. While they get smarter at their tasks, they are still limited by the primary goals and safety rules set by their human creators.

Do I need a supercomputer to run these self-learning agents?

Not necessarily. While training huge models requires massive power, many self-learning agents in 2026 use "Small Language Models" (SLMs) that can run on a high-end laptop. They improve by updating their memory and "self-talk" notes, which don't require nearly as much energy as traditional retraining.

What is the best language to build self-learning agents in 2026?

Python remains the king due to its massive library of AI frameworks like LangGraph and CrewAI. However, TypeScript is becoming very popular for "Browser Agents," and Mojo is being used for high-performance agents that need to process data at lightning speeds.

How do self-learning agents handle incorrect information?

These agents use "Cross-Verification," where they check multiple sources or use a "Critic" module to fact-check their own conclusions. If they realize they learned something wrong, they can "unlearn" it by updating their knowledge graph and deleting the incorrect data point.

Are there any free tools to start building these agents today?

Yes, you can use open-source frameworks like AutoGPT, OpenHands, or LangGraph for free. These tools provide the "skeletons" for agents, allowing you to add your own logic and feedback loops to create your first self-improving autonomous system.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.