How AI Voice Assistants Are Becoming Human-Like

Riten Debnath

02 Apr, 2026

Last updated: April 2026

The sound of a human voice is one of the most complex instruments on earth, carrying emotion, intent, and subtle nuances that machines long struggled to replicate. Yet we are now witnessing a major technological shift in which the line between digital synthesis and human speech is thinning to the point of invisibility. We have moved far beyond the robotic, stuttering voices of the past decade. Today's AI voice assistants can insert breaths, pause naturally, and emphasize words based on the emotional context of a conversation, making them feel more like companions and professional partners than simple software tools.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. The Evolution of Natural Language Generation in Voice AI

The journey from monotone text-to-speech engines to the fluid, melodic voices we hear today is rooted in a technology called Neural TTS. In the early days, computers used "concatenative synthesis," which basically meant stitching together tiny recordings of a human voice. It sounded choppy and unnatural. Modern AI uses deep learning models that analyze thousands of hours of human speech to understand how pitch, volume, and speed change depending on what is being said. This allows the AI to predict how a sentence should sound before it even speaks the first word, ensuring a flow that feels continuous and intentional.
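To make the contrast concrete, here is a toy Python sketch of the older concatenative idea, assuming audio is represented as plain lists of float samples. The function `concatenate_units` and its linear crossfade are illustrative choices, not a description of any real engine. Neural TTS does not splice recordings at all; it generates the waveform from a learned model.

```python
def concatenate_units(units, overlap):
    """Join recorded units (lists of samples) end to end.

    At each seam, `overlap` samples are linearly crossfaded so the outgoing
    unit fades out while the incoming one fades in. Without this, abrupt
    jumps at the joins are one source of the choppy sound of early systems.
    """
    if not units:
        return []
    output = list(units[0])
    for unit in units[1:]:
        n = min(overlap, len(output), len(unit))
        if n == 0:
            # No crossfade possible: plain concatenation.
            output.extend(unit)
            continue
        tail = output[-n:]
        head = unit[:n]
        blended = []
        for i in range(n):
            # Weight ramps from the outgoing unit toward the incoming one.
            w = (i + 1) / (n + 1)
            blended.append((1 - w) * tail[i] + w * head[i])
        output[-n:] = blended
        output.extend(unit[n:])
    return output
```

Calling `concatenate_units([[1.0] * 4, [0.0] * 4], overlap=2)` returns six samples that ramp from 1.0 down to 0.0 across the seam instead of jumping abruptly.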

- Advanced Prosody and Melodic Rhythm: Modern AI assistants now understand the complex rhythm of speech, known as prosody, which allows them to sound authoritative during a high-stakes presentation or soft and comforting during a late-night chat by adjusting the pitch of every single syllable.

- Contextual Breathing and Micro-Pauses: New models actually insert tiny, artificial breaths and realistic micro-pauses between sentences to mimic the human respiratory cycle, which effectively removes the "robotic" feeling and makes the listener feel more comfortable and less fatigued during long interactions.

- Complex Emotional Range and Adaptability: High-level AI can now detect if a user is frustrated, happy, or confused and will automatically adjust its own tone to match the mood, providing an empathetic experience that feels like talking to a real person who understands your feelings.

- Zero-Shot Voice Cloning Technology: Advanced systems can now learn the unique characteristics, vocal fry, and specific quirks of a particular human voice with just a few seconds of audio, replicating it with startling accuracy that was previously impossible without hours of studio recording.

- Multi-Lingual Phonetic Mastery: AI is now capable of switching between languages while maintaining the same "personality" and vocal texture, allowing for a seamless transition that sounds natural regardless of the language being spoken by the user.
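Developers can also nudge prosody and pauses explicitly. Many TTS engines accept SSML (the W3C Speech Synthesis Markup Language), whose `<prosody>` element sets attributes such as rate and pitch and whose `<break>` element inserts a pause. The sketch below is a minimal example; `build_ssml` is a hypothetical helper, and the default 300 ms pause is an arbitrary choice for illustration.

```python
from xml.sax.saxutils import escape


def build_ssml(sentences, rate="medium", pitch="medium", pause_ms=300):
    """Wrap sentences in SSML, setting overall prosody and adding short pauses.

    `rate` and `pitch` use SSML's named levels (e.g. "slow", "low", "high").
    """
    parts = []
    for i, sentence in enumerate(sentences):
        if i > 0:
            # A brief pause between sentences, similar in spirit to micro-pauses.
            parts.append(f'<break time="{pause_ms}ms"/>')
        # escape() converts &, < and > so user text cannot break the markup.
        parts.append(escape(sentence))
    body = "".join(parts)
    return f'<speak><prosody rate="{rate}" pitch="{pitch}">{body}</prosody></speak>'


# Example: a slower, lower delivery for a calming message.
print(build_ssml(["Take a breath.", "We can go step by step."], rate="slow", pitch="low"))
```

Engines differ in which SSML attributes they honor, so treat these values as hints rather than guarantees.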

Why it matters

This evolution matters because it breaks down the barrier between humans and technology. When a voice assistant sounds human, we tend to trust it more. That trust is essential in sensitive industries like healthcare, where an empathetic-sounding AI can comfort patients, and in education, where a friendly voice helps students stay engaged. As a professional, understanding this shift helps you prepare for a future where your primary interface with your computer may be a natural conversation rather than a keyboard.

2. Real-Time Latency Reduction and Conversational Flow

One of the biggest "tells" that you were talking to a robot used to be the long pause after you finished speaking. You would ask a question, and the machine would take two seconds to process it. Today, the goal is "Ultra-Low Latency," where the AI begins responding in less than 500 milliseconds. This mimics the natural "overlap" that happens in human conversations, where we often start reacting to a statement before the other person has even finished their sentence. This creates a sense of presence that makes the assistant feel like a real person sitting in the room with you rather than a server in a distant building.

- Predictive Streaming Inference: AI models now process your words in real time as you say them, rather than waiting for you to finish the entire sentence, allowing near-instant responses that keep the conversation moving at a human pace without awkward silences.

- Dynamic Interruption Handling: Modern assistants are being trained to handle being interrupted mid-sentence, meaning they can stop talking the moment you speak up and instantly pivot to your new request without getting confused or finishing their previous thought.

- Intelligent Background Noise Filtering: Advanced voice AI can now isolate your voice from a crowded room or a noisy coffee shop, ensuring the conversation remains fluid and accurate even when environmental distractions are present in the background.

- Strategic Filler Word Integration: Engineers are teaching AI to use "um," "uh," and "well" strategically to fill tiny gaps while the system "thinks" or processes data, which makes the interaction feel remarkably organic and mimics how humans buy time during a chat.

- Turn-Taking Logic and Cues: The AI is becoming skilled at recognizing when a user is actually finished speaking versus when they are just pausing for a second, which prevents the frustrating experience of being cut off before you have finished your thought.
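The turn-taking idea can be illustrated with a simple silence-based endpointer. This is a minimal sketch, assuming the microphone signal has already been split into fixed-length frames with one energy value each; the function name and thresholds are hypothetical. Production systems often combine a trained voice-activity detector with linguistic cues, but the core trade-off is the same: wait long enough to ignore mid-sentence pauses, but not so long that the reply feels slow.

```python
def find_end_of_turn(frame_energies, threshold, min_silence_frames):
    """Return the index of the first quiet frame that ends the turn, or None.

    A frame counts as speech if its energy exceeds `threshold`. The turn ends
    only after `min_silence_frames` consecutive quiet frames follow some
    speech, so a brief pause mid-sentence does not trigger a reply.
    """
    heard_speech = False
    silent_run = 0
    for i, energy in enumerate(frame_energies):
        if energy > threshold:
            heard_speech = True
            silent_run = 0  # Speech resumed: reset the silence counter.
        elif heard_speech:
            silent_run += 1
            if silent_run >= min_silence_frames:
                # Report where the qualifying silence began.
                return i - min_silence_frames + 1
    return None


# Example: a short pause at frames 3-4, then the speaker stops at frame 7.
energies = [0.0, 0.9, 0.8, 0.1, 0.0, 0.7, 0.9, 0.0, 0.0, 0.0, 0.0]
print(find_end_of_turn(energies, threshold=0.5, min_silence_frames=3))  # 7
```

With a shorter silence requirement (`min_silence_frames=2`), the same input would end the turn at frame 3, cutting the speaker off during their pause. Assuming 20 ms frames, `min_silence_frames=25` would correspond to half a second of silence.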

Why it matters

Speed is the currency of modern productivity. If a voice assistant takes too long to respond, the flow of work is broken and the user becomes frustrated. By achieving human-like latency, AI becomes a viable partner for real-time collaboration. Whether you are brainstorming ideas for a new project or asking for data during a meeting, the lack of delay ensures that the AI feels like an extension of your own mind rather than a slow, external database.

3. Neural Speech Synthesis and Emotional Intelligence

To truly become "human-like," a voice assistant must do more than speak clearly; it must understand emotion. This area of research is known as affective computing. When you speak to an assistant today, the software may be analyzing not just your words but also your "acoustic features," such as a shaky voice or the speed of your delivery. If the system detects stress, it can respond with a calmer, slower tone to help de-escalate the situation. This level of emotional intelligence is what separates a simple tool from a sophisticated digital collaborator.

- Real-Time Sentiment Analysis: The AI constantly scans the vocabulary, sentence structure, and tone of the user to determine their underlying emotional state, allowing the machine to react with appropriate levels of concern or excitement.

- Adaptive Tone Matching: The assistant can switch between "excited," "neutral," "apologetic," or "professional" modes depending on the context of the conversation, ensuring that the response never feels tone-deaf or socially inappropriate for the situation.

- Localized Accents and Dialects: Modern AI is moving away from "standard" accents and learning the localized dialects of various regions, which allows it to sound more familiar, relatable, and trustworthy to users from different cultural backgrounds.

- Dynamic Emphasis and Intonation: The system identifies which words in a sentence matter most and stresses them through loudness, duration, and pitch, just as a human would, so that the most critical information is understood clearly.

- Personality Persistence: Developers are creating AI that maintains a consistent personality over time, remembering your preferences and past interactions to build a rapport that feels like a long-term professional relationship.
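A toy version of the sentiment-to-tone step might look like the following. It is a deliberately simple sketch: the word lists, preset names, and function names are hypothetical, and it scores text only, whereas the systems described above also use acoustic cues. The rate and pitch values use SSML's named prosody levels (`slow`, `low`, and so on), which many TTS engines accept.

```python
# Hypothetical word lists; real systems use trained classifiers over text and audio.
NEGATIVE_WORDS = {"angry", "broken", "frustrated", "terrible", "upset", "wrong"}
POSITIVE_WORDS = {"awesome", "great", "happy", "love", "perfect", "thanks"}

# Hypothetical mapping from a response style to prosody settings.
TONE_PRESETS = {
    "apologetic": {"rate": "slow", "pitch": "low"},
    "excited": {"rate": "fast", "pitch": "high"},
    "neutral": {"rate": "medium", "pitch": "medium"},
}


def score_sentiment(text):
    """Count positive words minus negative words (a crude lexicon score)."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    positives = sum(w in POSITIVE_WORDS for w in words)
    negatives = sum(w in NEGATIVE_WORDS for w in words)
    return positives - negatives


def choose_tone(user_text):
    """Pick a response style that matches the user's apparent mood."""
    score = score_sentiment(user_text)
    if score < 0:
        return "apologetic"
    if score > 0:
        return "excited"
    return "neutral"


def tone_settings(user_text):
    """Return the prosody settings for the chosen style."""
    return TONE_PRESETS[choose_tone(user_text)]


print(choose_tone("My order arrived broken and I am upset!"))  # apologetic
```

The apologetic preset slows the speaking rate and lowers the pitch, mirroring the calmer response to a stressed user described above.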

Why it matters

Emotional intelligence in AI helps avoid "uncanny valley" interactions, where a machine sounds almost human yet too perfect or too cold to feel real. In the professional world, being able to interact with a system that understands nuance is a game-changer. It enables customer service bots that don't make angry customers even angrier, and it helps leaders practice difficult conversations with a realistic emotional sounding board.

4. The Role of Large Language Models in Voice Cognition

The voice is just the output; the "brain" behind it is a Large Language Model (LLM). These models are trained on vast amounts of text, which lets them follow context, pick up on sarcasm, and carry out complex instructions. When an AI voice assistant sounds human, it is largely because the model behind it can keep track of what is being discussed. This integration allows the assistant to move beyond simple commands like "set a timer" and into deep, philosophical discussions or technical troubleshooting that requires multi-step reasoning.

- Massive Context Windows: Modern AI can remember details from a conversation you had ten minutes ago, allowing it to reference previous points and build a cohesive narrative throughout the entire interaction without repeating itself.

- Sarcasm and Humor Detection: Because these models understand the nuances of language, they can now identify when a user is being sarcastic or telling a joke, allowing the AI to respond with an appropriate witty remark or a laugh.

- Reasoning and Problem Solving: When you ask a complex question, the AI doesn't just look for a pre-written answer; it "thinks" through the steps of the problem and explains its reasoning in a natural, conversational way that is easy to follow.

- Creative Collaboration Capabilities: You can use voice AI to co-write stories, compose music, or brainstorm marketing slogans, with the assistant providing creative input that feels like it’s coming from a human partner.

- Fact-Checking and Accuracy: Newer models are being designed to verify information in real time, reducing the risk that a human-like voice sounds confident while delivering incorrect information.
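Context windows are finite and measured in tokens, so an assistant has to decide what to keep. One common approach, sketched below under simplifying assumptions, is to keep the most recent turns that fit a token budget. The function `trim_history` is hypothetical, and the whitespace word count stands in for a real tokenizer; production systems may also summarize older turns instead of dropping them.

```python
def trim_history(turns, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the most recent turns whose combined size fits within max_tokens.

    `turns` is a list of (speaker, text) pairs, oldest first. Token counting
    is approximated by whitespace-separated words unless a real tokenizer
    function is passed as `count_tokens`.
    """
    kept = []
    total = 0
    # Walk backwards from the newest turn, stopping once the budget is exceeded.
    for speaker, text in reversed(turns):
        cost = count_tokens(text)
        if total + cost > max_tokens:
            break
        kept.append((speaker, text))
        total += cost
    kept.reverse()  # Restore chronological order.
    return kept


turns = [
    ("user", "Book a flight to Paris"),
    ("assistant", "Which dates work for you"),
    ("user", "Next Friday please"),
]
print(trim_history(turns, max_tokens=8))
```

Here the oldest turn, which names the destination, is the first to fall out of the budget, which illustrates why larger context windows let an assistant refer back to earlier details.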

Why it matters

A human-like voice is useless if the information it provides is shallow or wrong. By combining realistic speech with the massive intelligence of LLMs, we are creating a tool that can actually help us solve problems. For someone building a portfolio on a platform like Fueler, this means having a digital assistant that can help refine your work samples, offer advice on your presentation, and even mock-interview you using a voice that sounds exactly like a real hiring manager.

5. Privacy, Ethics, and the Human-Centric Design

As AI voices become indistinguishable from human ones, we face new ethical challenges. How do we know when we are talking to a machine? How is our voice data being stored? Companies are now focusing on "Human-Centric Design," which prioritizes transparency and security. This means that while the AI sounds human, it must always identify itself as an AI when asked, and it must have strict safeguards to prevent it from being used for malicious purposes like deepfake scams or spreading misinformation.

- Transparent AI Identification: A growing number of ethical guidelines and regulations require AI systems to disclose that they are synthetic voices, so that users are not deceived into thinking they are speaking to a human.

- On-Device Voice Processing: To protect privacy, many modern assistants process your voice directly on your phone or laptop rather than sending the sensitive audio data to a cloud server where it could be intercepted.

- Biometric Voice Security: Your unique voiceprint can serve as a password, with AI trained to distinguish your specific vocal characteristics from a recording or an impersonator. Because voice cloning keeps improving, these systems are increasingly paired with liveness checks or other authentication factors.

- Anti-Deepfake Watermarking: Some AI developers are embedding "digital watermarks" into synthetic speech that are inaudible to humans but detectable by software, making it possible to flag audio as AI-generated.

- Consent-Based Voice Cloning: New regulations are being formed to ensure that a person's voice cannot be cloned without their explicit, legal consent, protecting the "vocal identity" of actors, singers, and everyday individuals.
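The watermarking idea can be illustrated with a classic spread-spectrum scheme: add a faint pseudo-random pattern derived from a secret key, then detect it later by correlating the audio with the same pattern. This is a minimal sketch under strong simplifying assumptions (raw float samples, Python's non-cryptographic `random` module, and a strength and threshold chosen only for this demo). It is not how any specific vendor watermarks speech; real schemes shape the mark so it stays inaudible and survives compression and re-recording.

```python
import math
import random


def watermark_sequence(key, length):
    """Pseudo-random +1/-1 pattern derived deterministically from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def embed_watermark(samples, key, strength=0.02):
    """Add a low-amplitude keyed pattern to the audio samples."""
    pattern = watermark_sequence(key, len(samples))
    return [s + strength * p for s, p in zip(samples, pattern)]


def detect_watermark(samples, key, threshold=0.01):
    """Correlate the audio with the keyed pattern; a high score suggests a mark.

    For marked audio the score is close to `strength`; for unmarked audio, or
    the wrong key, it stays close to zero.
    """
    pattern = watermark_sequence(key, len(samples))
    score = sum(s * p for s, p in zip(samples, pattern)) / len(samples)
    return score > threshold


# Example: a 3-second, 220 Hz test tone sampled at 16 kHz.
tone = [0.5 * math.sin(2 * math.pi * 220 * t / 16000) for t in range(48000)]
marked = embed_watermark(tone, key=1234)
print(detect_watermark(marked, key=1234), detect_watermark(tone, key=1234))
```

Detection works because the keyed pattern correlates strongly with itself but only weakly with ordinary audio or with a pattern generated from a different key.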

Why it matters

Ethics and privacy are the foundation of any long-term technology. If people feel that AI voice assistants are "creepy" or dangerous, they won't use them. By prioritizing these ethical standards, the tech industry ensures that AI remains a helpful tool for growth rather than a source of anxiety. It allows professionals to use these tools with confidence, knowing that their identity and data are being handled with the highest level of care.

6. Accessibility and Global Communication Barriers

One of the most beautiful aspects of AI becoming human-like is its ability to help people who have lost their own voices or who struggle with traditional communication. For individuals with speech impediments or those who speak a minority language, AI provides a bridge to the rest of the world. By creating voices that sound natural and carry personality, AI gives these individuals a way to express themselves that feels authentic to who they are, rather than forcing them to use a generic, robotic output.

- Personalized Voice Restoration: For people with conditions like ALS, AI can analyze old recordings of their voice and create a digital clone that allows them to continue speaking to their loved ones in their own unique "sound."

- Real-Time Speech Translation: AI can now act as a live translator, listening to one language and speaking another in the same voice and tone, which greatly reduces language barriers during international business meetings.

- Support for Non-Verbal Individuals: Sophisticated interfaces allow people who cannot speak to select words or images that the AI then converts into fluid, emotional speech, giving them a powerful new way to communicate.

- Dialect Preservation Efforts: AI researchers are working to document and replicate endangered languages and rare dialects, helping ensure that these cultural "voices" are not lost to history and can be spoken by future generations.

- Adaptive Learning for Speech Therapy: Voice AI can act as a patient, non-judgmental coach for people in speech therapy, providing real-time feedback and encouragement in a voice that feels supportive and human.

Why it matters

Technology is at its best when it creates equality. AI voice assistants aren't just for people who want to set timers; they are life-changing tools for millions of people worldwide. In the context of career growth, this means that talent is no longer restricted by geography or physical ability. If you can communicate your skills, whether through a voice assistant or a portfolio on Fueler, you have a chance to succeed in the global marketplace.

7. The Future of Work and Voice-First Interfaces

We are moving toward a "voice-first" world. In the next few years, you might spend more time talking to your computer than typing on it. Imagine walking into your office and simply saying, "Hey, show me the progress on my latest assignment," and having the AI respond with a detailed, spoken summary while it opens the relevant files. This transition will redefine how we view productivity and how we present our professional selves to the world. Your ability to collaborate with these "human-like" systems will become a key skill that employers look for.

- Hands-Free Professional Productivity: Voice-first interfaces allow you to answer emails, organize your calendar, and manage projects while you are commuting, exercising, or multitasking, significantly increasing your daily efficiency.

- The Rise of Voice-Based Portfolios: Professionals may soon use AI-narrated "guided tours" of their portfolios, where a human-like voice explains the thought process behind their work samples while a recruiter views them.

- Virtual Executive Assistants: AI is evolving from a simple bot into a proactive assistant that can make phone calls on your behalf, book appointments, and even negotiate simple service terms using a professional, human tone.

- Interactive Training and Simulation: Companies are using human-like voice AI to create realistic training simulations where employees can practice sales pitches or customer service interactions in a safe, controlled environment.

- Ubiquitous Voice Integration: Voice assistants are moving out of the speaker and into our glasses, watches, and even our clothing, creating a seamless "ambient" intelligence that is always ready to help when we speak.

Why it matters

The future of work belongs to those who can adapt. As these tools become more human-like, they become easier to use. You won't need to learn complex coding or software shortcuts; you will just need to know how to speak clearly and logically. This levels the playing field for everyone. At Fueler, we see every day that the most successful individuals aren't always the ones with the best degrees, but the ones who can clearly demonstrate their skills and communicate their value effectively to the world.

Showing off what you can do is becoming easier as technology evolves. At Fueler, we provide a platform where you can take all the projects and assignments you’ve completed, perhaps even with the help of these amazing AI tools, and display them in a professional portfolio that speaks for itself. While AI voice assistants are busy sounding more human, Fueler is busy making sure your human talent is seen and hired by the best companies in the world.

Final Thoughts

The rise of human-like AI voice assistants marks a turning point in our relationship with technology. We are no longer just using machines; we are interacting with them in a way that feels natural, emotional, and deeply personal. As these tools continue to improve, they will become an invisible but essential part of our professional and daily lives. The key is to embrace these changes, understand the ethical implications, and use these powerful tools to enhance our own human capabilities rather than replace them.

FAQs

1. How can I use human-like AI voice assistants for my career?

You can use these assistants to practice for interviews, proofread your written work by hearing it read aloud, and manage your daily schedule hands-free. They act as a 24/7 executive assistant that helps you stay organized and professional while you focus on building your portfolio and completing assignments.

2. Is it safe to use AI voice cloning for personal use?

While the technology is incredibly powerful, you should only use reputable platforms that prioritize data privacy and encryption. Always ensure you have the rights to any voice you are cloning and be aware of the ethical guidelines surrounding synthetic media to protect your digital identity and reputation.

3. What are the best free AI voice tools available in 2026?

There are several high-quality tools that offer free tiers for creators, including advanced platforms that allow for natural-sounding text-to-speech and basic voice cloning. These tools are perfect for narrating your work samples or creating voiceovers for your professional project presentations.

4. Will AI voice assistants replace human customer service jobs?

AI will likely handle repetitive and simple queries, but human agents will still be needed for complex problem-solving and deep emotional support. The most successful businesses will use a combination of human-like AI for speed and real humans for high-level decision-making and genuine connection.

5. How do I make my AI assistant sound more human?

Most modern assistants allow you to customize the speed, pitch, and "personality" of the voice. By choosing a voice that matches your specific context, such as a professional tone for work or a casual tone for home, you can create a more natural and productive interaction that feels less like a machine.

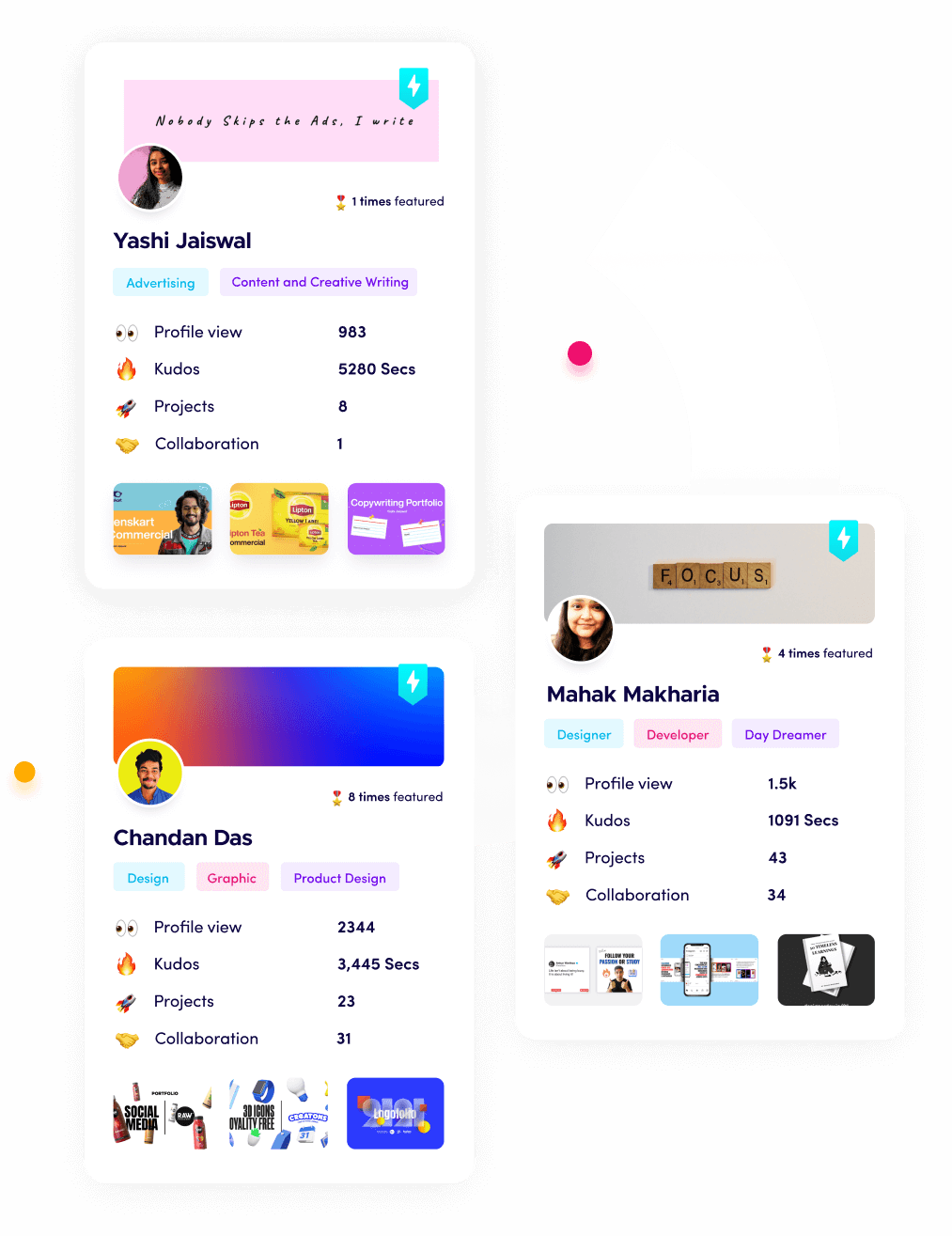

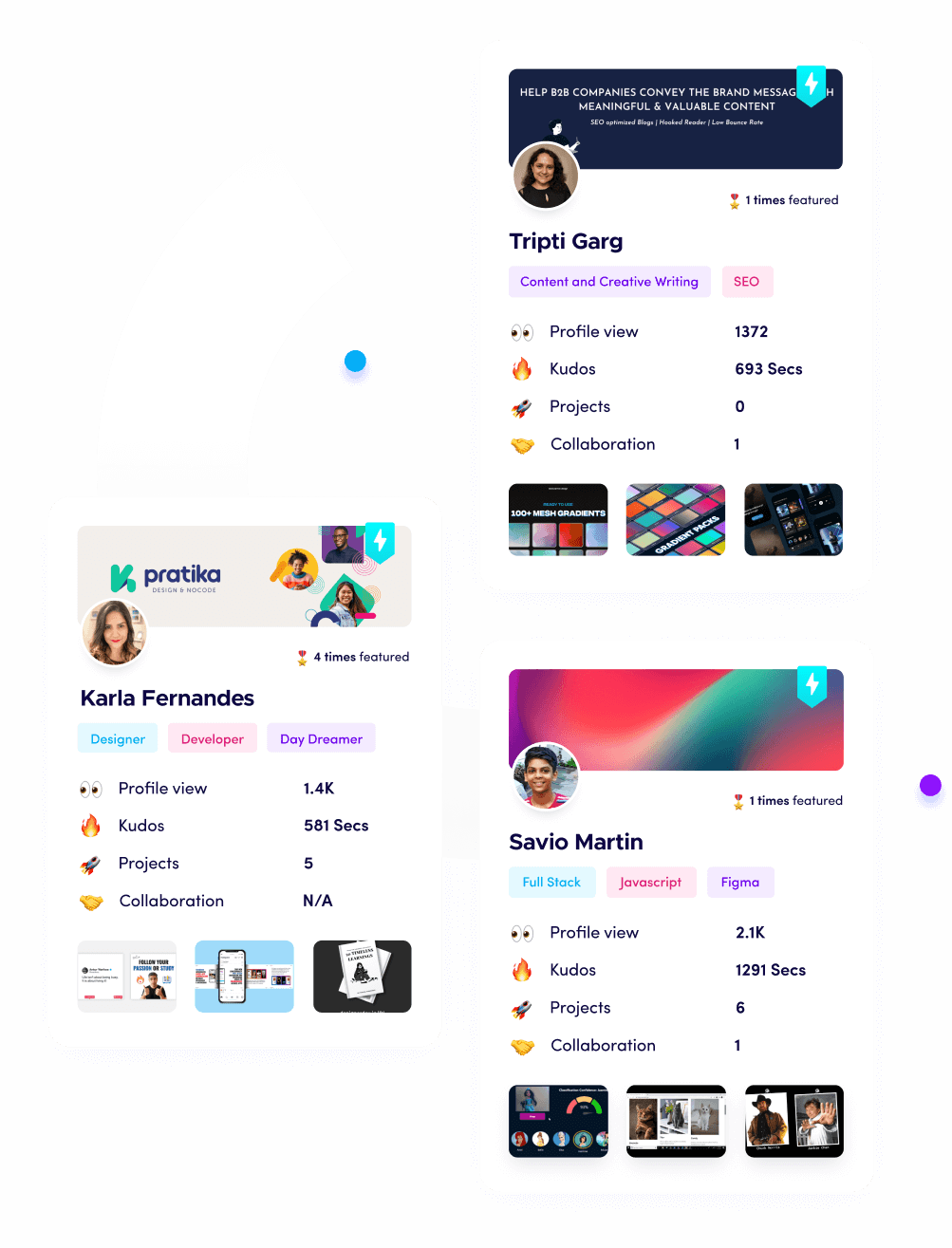

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.