Best 10 AI Infrastructure Startups Transforming AI Development

Riten Debnath

27 Mar, 2026

Last updated: March 2026

If you think the AI revolution is just about clever chatbots and pretty pictures, you are only looking at the paint on the walls while the foundation of the house is being poured. The real power in 2026 does not belong to those who write the prompts; it belongs to the companies building the massive, high-speed digital plumbing that makes those prompts actually work. Building a serious AI system today is like trying to run a Formula 1 car on a gravel road; you can have the best engine in the world, but without the right track, you are going to crash and burn. These ten infrastructure giants are building the superhighways that allow modern businesses to move at the speed of thought without breaking the bank or their servers.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

At a glance: Comparing the Best AI Infrastructure Startups Transforming AI Development

1. CoreWeave

Best for: Massive scale model training and enterprise-grade GPU clusters.

CoreWeave is the heavyweight champion of specialized cloud providers, offering a leaner and meaner alternative to the traditional tech giants. While legacy clouds try to be everything to everyone, CoreWeave focuses purely on high-performance compute, giving developers direct access to the most powerful hardware on the planet. They have essentially built a dedicated playground for the world’s most demanding AI workloads, ensuring that when you need a thousand GPUs at once, they actually show up and work.

- Bare Metal Performance: Unlike standard virtual machines, their infrastructure allows you to run code directly on the hardware for maximum speed.

- Liquid-Cooled Reliability: They use advanced cooling systems to keep their massive HGX B300 clusters running at peak performance without thermal throttling.

- Zero Egress Fees: You can move your data in and out of their storage systems without being hit by the sneaky "data tax" other clouds charge.

- Kubernetes-Native Design: The entire platform is built for modern developers, making it incredibly easy to automate and scale your deployments.

- NVIDIA Partnership: As a preferred partner, they get the newest chips, like the Blackwell Ultra platform, long before the general market.

Pricing: NVIDIA H100 instances start at approximately $4.25 per GPU-hour, with newer HGX B200 and B300 clusters requiring custom enterprise quotes based on volume.

Why it matters: In the race to build the next "God-model," speed is the only metric that counts. CoreWeave provides the raw horsepower that allows startups to compete with trillion-dollar tech giants by cutting training times from months to weeks.

2. Lambda Labs

Best for: Deep learning researchers and startups looking for the best price-to-performance ratio.

Lambda Labs is the "no-nonsense" choice for engineers who want to get straight to work without navigating a million confusing cloud menus. They have built a reputation by being transparent about what they offer and keeping their prices significantly lower than the big-box cloud providers. Whether you are a solo researcher or a growing AI lab, Lambda provides a predictable environment where you can rent a single GPU or a massive "1-Click Cluster" with zero friction.

- 1-Click Clusters: You can spin up hundreds of interconnected GPUs in minutes, skipping the weeks of manual configuration usually required.

- Reserved Instances: They offer long-term contracts that significantly lower the hourly rate for teams with consistent, ongoing compute needs.

- On-Demand Availability: Their public cloud allows you to grab high-end H100 or A10 chips instantly for quick experiments or fine-tuning.

- Integrated Hardware: Unlike others, they also build and sell physical AI workstations, giving them a deep understanding of the full hardware stack.

- InfiniBand Interconnect: Their clusters use high-speed networking to ensure that data moves between GPUs fast enough to keep the processors busy.

Pricing: NVIDIA H100 PCIe instances are currently priced at $2.49 per hour, while the higher-end H100 SXM instances go for $2.99 per GPU-hour.
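
To make the price gap concrete, here is a rough back-of-the-envelope sketch in Python using the hourly rates quoted in this article. The job size (64 GPUs for 72 hours) is a made-up assumption for illustration, and a real bill would also include storage, networking, and any reserved-instance discounts.

```python
# Hourly on-demand rates per GPU, taken from the pricing lines in this article.
RATES_PER_GPU_HOUR = {
    "CoreWeave H100": 4.25,
    "Lambda H100 SXM": 2.99,
    "Lambda H100 PCIe": 2.49,
}

def training_cost(rate_per_gpu_hour: float, gpus: int, hours: float) -> float:
    """Compute-only cost: rate x number of GPUs x wall-clock hours."""
    return round(rate_per_gpu_hour * gpus * hours, 2)

# Hypothetical job: 64 GPUs running for 72 hours (an assumption, not a benchmark).
GPUS, HOURS = 64, 72

costs = {name: training_cost(rate, GPUS, HOURS) for name, rate in RATES_PER_GPU_HOUR.items()}

# Print the cheapest option first.
for name, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{name}: ${cost:,.2f}")
```

Keep in mind that the hourly rate is only part of the picture: PCIe and SXM cards do not deliver the same throughput, so a cheaper hour can still mean a longer, more expensive run.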

Why it matters: Most AI projects die because the cloud bill grows faster than the product. Lambda Labs keeps the lights on for the next generation of innovators by providing elite hardware at a price that does not require a second mortgage.

3. Pinecone

Best for: Long-term memory for AI agents and high-speed search applications.

If a Large Language Model is the "brain," Pinecone is the "hard drive" that allows it to remember things. Standard databases are great for names and numbers, but they are terrible at understanding the complex relationships between ideas. Pinecone specializes in "vector data": numerical representations (embeddings) of text that place similar ideas close together, which is how an AI system compares meaning rather than exact words. By storing information this way, Pinecone allows your AI to "search" through millions of documents in milliseconds to find the exact piece of information it needs to answer a user's question accurately.

- Serverless Scaling: You do not have to worry about managing servers; the database automatically grows or shrinks based on how much data you throw at it.

- Pinecone Assistant: A built-in tool that helps you build RAG (Retrieval-Augmented Generation) systems without writing thousands of lines of boilerplate code.

- High-Precision Search: Their algorithms are fine-tuned to find the most relevant information even when the user’s query is vague or poorly worded.

- Metadata Filtering: You can combine "concept search" with "fact search," like finding all "happy poems" written after 2024.

- Enterprise-Grade Security: Includes features like SOC 2 compliance and customer-managed encryption keys to keep sensitive company data private.
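
The idea behind vector search with metadata filtering can be sketched in plain Python. This is not Pinecone's API or its indexing algorithm; it is a brute-force toy in which hand-made three-dimensional vectors stand in for real embeddings, and every name in it is hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy records: (id, vector, metadata). Real embeddings have hundreds of dimensions.
RECORDS = [
    ("poem-1", [0.9, 0.1, 0.0], {"mood": "happy", "year": 2025}),
    ("poem-2", [0.8, 0.2, 0.1], {"mood": "happy", "year": 2023}),
    ("poem-3", [0.1, 0.9, 0.2], {"mood": "sad", "year": 2025}),
]

def search(query_vector, min_year, top_k=2):
    """Filter on metadata first ("fact search"), then rank by similarity ("concept search")."""
    candidates = [r for r in RECORDS if r[2]["year"] > min_year]
    scored = [(cosine_similarity(query_vector, vec), rec_id) for rec_id, vec, _ in candidates]
    scored.sort(reverse=True)
    return [rec_id for _, rec_id in scored[:top_k]]

# A query vector close to the "happy" poems, restricted to poems written after 2024.
results = search([1.0, 0.0, 0.0], min_year=2024)
print(results)
```

A production vector database replaces the brute-force loop with an approximate nearest-neighbor index so the search stays fast across millions of records.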

Pricing: The starter plan is free (up to 2GB storage). The Standard plan starts at a $25/month minimum plus usage fees, like $0.33/GB per month for storage.

Why it matters: An AI that forgets everything the moment the chat ends is just a toy. Pinecone turns these models into professional tools by giving them the ability to remember your specific company data and customer history.

4. Together AI

Best for: Open-source model fine-tuning and ultra-fast inference.

Together AI is the champion of the open-source movement, providing the tools and the cloud to run models like Llama 4 or DeepSeek without being locked into a single provider. They focus on making these models run faster and cheaper than anyone else through clever software optimizations. If you want to take a raw model and "teach" it about your specific industry, Together AI provides the most streamlined fine-tuning workflow in the business.

- Together Flash: A custom-built engine that makes open-source models respond significantly faster than standard deployments.

- Serverless Inference: Pay only for the tokens you use, allowing you to scale from ten users to ten million without managing a single server.

- Custom Fine-Tuning: A simplified dashboard where you can upload your data and "train" a model to speak your brand’s language in a few clicks.

- Massive Model Library: They host and optimize hundreds of the latest open-source models, so you can test and deploy the newest tech the day it drops.

- Private Endpoints: For sensitive tasks, you can deploy models on dedicated hardware that is not shared with any other customers.

Pricing: Serverless inference for models like Llama 3.3 70B is $0.88 per 1M tokens. Dedicated H100 GPUs are $3.99 per hour on-demand.
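
As a rough illustration of how the two pricing models compare, the Python sketch below plugs the rates quoted above into a hypothetical workload. The traffic figures are assumptions, and the comparison ignores whether a single dedicated GPU could actually serve that load or fit the model.

```python
PRICE_PER_MILLION_TOKENS = 0.88   # serverless rate quoted above
DEDICATED_GPU_PER_HOUR = 3.99     # on-demand H100 rate quoted above

def serverless_monthly_cost(requests_per_day: int, tokens_per_request: int, days: int = 30) -> float:
    """Cost of pay-per-token inference for a month of traffic."""
    total_tokens = requests_per_day * tokens_per_request * days
    return round(total_tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS, 2)

def dedicated_monthly_cost(gpus: int = 1, days: int = 30) -> float:
    """Cost of keeping dedicated GPUs running around the clock."""
    return round(gpus * DEDICATED_GPU_PER_HOUR * 24 * days, 2)

# Hypothetical workload: 50,000 requests per day at 1,200 tokens each.
serverless = serverless_monthly_cost(50_000, 1_200)
dedicated = dedicated_monthly_cost()

print(f"Serverless: ${serverless:,.2f} per month")
print(f"One dedicated GPU: ${dedicated:,.2f} per month")
```

Under these assumptions the pay-per-token route is cheaper, but the balance shifts as traffic grows, which is why it is worth rerunning the numbers with your own volumes.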

Why it matters: Privacy and control are becoming the biggest concerns in AI. Together AI allows companies to own their intelligence by running their own models rather than just renting a "black box" from a giant corporation.

5. Anyscale (Creators of Ray)

Best for: Scaling complex Python applications and production-ready AI pipelines.

Anyscale is built on top of "Ray," the open-source project that many major AI companies (including OpenAI) use to manage their workloads. While Python is the favorite language of AI developers, it was never designed to run on a thousand computers at once. Anyscale addresses this by providing a platform that takes your Python code and spreads it across a large cluster. It turns the nightmare of "distributed computing" into a simple "push-to-deploy" experience.

- Ray Serve: A specialized tool for taking your trained models and turning them into high-speed web services that can handle millions of requests.

- Spot Instance Management: It can automatically use "leftover" cloud capacity to save you up to 90% on your compute bills without crashing your tasks.

- Interactive Dev Console: A cloud-based workspace that feels like your local laptop but has the power of a thousand GPUs behind it.

- Zero-Downtime Upgrades: You can update your models or your code while the system is running without your users ever noticing a glitch.

- Framework Agnostic: Whether you use PyTorch, TensorFlow, or JAX, Anyscale handles the heavy lifting of scaling regardless of the library.
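
The core pattern behind this kind of scaling is turning an ordinary function call into a task that runs elsewhere and hands back a future. It can be illustrated with Python's standard library. The sketch below uses `concurrent.futures` on a single machine as a stand-in; it is not Ray or Anyscale code, and `score_batch` is a hypothetical workload. In Ray, the rough equivalent is decorating the function with `@ray.remote` and collecting results with `ray.get`.

```python
from concurrent.futures import ThreadPoolExecutor

def score_batch(batch):
    """Hypothetical unit of work: pretend to score a batch of inputs."""
    return sum(x * x for x in batch)

def run_distributed(batches, max_workers=4):
    """Submit one task per batch, then gather results in submission order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(score_batch, b) for b in batches]
        return [f.result() for f in futures]

# Split 0..99 into ten batches of ten numbers each.
batches = [list(range(i, i + 10)) for i in range(0, 100, 10)]
partial_sums = run_distributed(batches)
total = sum(partial_sums)
print(total)
```

The calling code never says which worker handles which batch. That separation between "what to compute" and "where it runs" is what lets a cluster scheduler spread the same code over many machines.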

Pricing: They offer a $100 free credit to start. Production usage is based on "Anyscale Credits," which typically adds a small management fee on top of your raw cloud provider costs.

Why it matters: Moving from a laptop prototype to a global product is where most engineers fail. Anyscale removes the "infrastructure wall," allowing developers to focus on their code while the platform handles the scaling.

6. Weights & Biases

Best for: Tracking experiments and managing the AI development lifecycle.

In the world of AI, you might run 500 different versions of a model before finding one that works. If you do not keep perfect notes, you are just guessing. Weights & Biases (W&B) is the "system of record" for AI teams. It tracks every change, every data point, and every result, allowing teams to collaborate and figure out exactly why a model is performing better (or worse) than the day before. It is essentially GitHub, but specifically designed for the messy, trial-and-error world of machine learning.

- Experiment Tracking: Automatically logs your code, hyperparameters, and results so you can visualize your progress in beautiful, real-time charts.

- Model Registry: A central "library" where your team can store, version, and share the best models that are ready for production.

- W&B Launch: A tool that allows you to send your training jobs to any cloud provider (like CoreWeave or Lambda) with a single command.

- Automated Sweeps: It can automatically try thousands of different settings to find the "sweet spot" for your model’s performance.

- Reports and Collaboration: You can build interactive dashboards to show your boss or your clients exactly how the project is moving along.
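
To show what experiment tracking and a sweep boil down to, here is a minimal standard-library sketch in Python. It does not use the W&B SDK; `train` is a hypothetical stand-in that returns a fake validation loss, and the grid values are arbitrary.

```python
import itertools

def train(learning_rate, batch_size):
    """Hypothetical stand-in for a training run; returns a fake validation loss."""
    return round(abs(learning_rate - 0.01) * 100 + abs(batch_size - 64) / 64, 4)

def run_sweep(grid):
    """Try every combination in the grid and record config plus result for each run."""
    keys = list(grid)
    runs = []
    for run_id, values in enumerate(itertools.product(*(grid[k] for k in keys))):
        config = dict(zip(keys, values))
        runs.append({"run_id": run_id, "config": config, "val_loss": train(**config)})
    return runs

grid = {"learning_rate": [0.1, 0.01, 0.001], "batch_size": [32, 64, 128]}
runs = run_sweep(grid)

# Because every run keeps its config next to its result, the best one is reproducible.
best = min(runs, key=lambda r: r["val_loss"])
print(best)
```

A hosted tracker adds the parts this sketch leaves out: persistent storage, charts, code versioning, and smarter search strategies than an exhaustive grid.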

Pricing: The personal plan is free forever. The Pro plan for individuals starts at $60 per month, with custom pricing for enterprise teams.

Why it matters: AI development is expensive and chaotic. W&B brings order to the chaos, ensuring that teams do not waste hundreds of thousands of dollars re-running the same failed experiments.

7. LangChain (LangSmith)

Best for: Building and debugging complex AI agents and multi-step workflows.

LangChain started as a simple library for chaining language model calls together with external data and tools, but it has grown into a standard toolkit for building "AI Agents." Their platform, LangSmith, is where the real magic happens for professionals. It allows you to "peek inside" the head of your AI to see exactly why it made a mistake. When an AI agent gets stuck in a loop or gives a hallucinated answer, LangSmith provides the "trace" that helps you find the bug in seconds rather than hours.

- Trace Visibility: You can see every single step the AI took, every document it read, and every prompt it sent to the model.

- Prompt Hub: A collaborative space where your team can test, version, and improve your prompts without breaking the main code.

- Annotation Queues: A simple way for humans to look at AI answers and give them a "thumbs up" or "thumbs down" to improve future performance.

- Automated Evals: You can set up "test suites" to make sure that a new update to your AI does not make it accidentally start swearing at customers.

- A/B Testing: Easily compare two different models or two different prompts to see which one performs better in the real world.
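
A "trace" is easier to picture with a small example. The Python sketch below records every step of a toy pipeline with a decorator. It is not the LangSmith SDK; the step functions, the canned document, and the fixed answer are all hypothetical placeholders.

```python
import functools
import time

TRACE = []  # each entry is one recorded step, in call order

def traced(step_name):
    """Decorator that records inputs, output, and duration of a pipeline step."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            output = func(*args, **kwargs)
            TRACE.append({
                "step": step_name,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": output,
                "seconds": time.perf_counter() - start,
            })
            return output
        return wrapper
    return decorator

@traced("retrieve")
def retrieve(question):
    # Hypothetical retriever: returns a canned document.
    return ["Refunds are accepted within 30 days."]

@traced("build_prompt")
def build_prompt(question, documents):
    return f"Context: {' '.join(documents)}\nQuestion: {question}"

@traced("call_model")
def call_model(prompt):
    # Hypothetical model call: returns a fixed answer instead of calling an LLM.
    return "You can request a refund within 30 days."

question = "What is the refund policy?"
answer = call_model(build_prompt(question, retrieve(question)))

for entry in TRACE:
    print(entry["step"], "->", entry["output"])
```

When an answer looks wrong, reading the recorded steps in order shows whether the retriever fetched the wrong document, the prompt was assembled badly, or the model itself went off track.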

Pricing: Developer plan is free (up to 5,000 traces/month). The Plus plan starts at $39 per seat per month for growing teams.

Why it matters: Most AI "apps" are just wrappers. Real AI "products" are complex systems. LangChain provides the debugging tools necessary to make those systems reliable enough for actual business use.

8. Vectara

Best for: Secure, hallucination-resistant search for enterprise knowledge bases.

Vectara is the "trustworthy" infrastructure choice for companies that cannot afford to have their AI make things up (like law firms or hospitals). They have built an end-to-end "Trusted GenAI" platform that handles everything from reading your PDFs to giving the final answer. Their secret weapon is their focus on "RAG" (Retrieval-Augmented Generation) with built-in guardrails that stop the AI from answering questions it does not have the data for.

- Boomerang: Their custom-built retrieval model is designed specifically for finding relevant information rather than generating creative text.

- Hallucination Detection: Built-in "scorecards" that tell you exactly how much the AI is sticking to the facts provided in your documents.

- Multi-Language Support: It can read a document in German and answer a question about it in English.

- Instant Indexing: As soon as you upload a file, it is ready to be searched by the AI, with no long "training" periods required.

- Hybrid Search: It combines the power of AI understanding with the precision of traditional keyword search for the best of both worlds.
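
Hybrid search is often described as a weighted blend of two scores, and a small Python sketch makes that concrete. This is not Vectara's algorithm: the semantic scores are hard-coded placeholders standing in for the output of an embedding model, and the `alpha` weight and all names are hypothetical.

```python
def keyword_score(query, text):
    """Fraction of query words that appear verbatim in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words)

def hybrid_rank(query, documents, semantic_scores, alpha=0.5):
    """Blend semantic and keyword scores; alpha=1.0 is purely semantic."""
    ranked = []
    for doc_id, text in documents.items():
        score = alpha * semantic_scores[doc_id] + (1 - alpha) * keyword_score(query, text)
        ranked.append((round(score, 3), doc_id))
    return sorted(ranked, reverse=True)

documents = {
    "policy": "employees may carry over unused vacation days",
    "memo": "form hr-42 covers leave requests",
    "menu": "the cafeteria serves lunch at noon",
}
# Placeholder similarity scores; a real system would compute these from embeddings.
semantic_scores = {"policy": 0.82, "memo": 0.40, "menu": 0.05}

query = "vacation form hr-42"
ranking = hybrid_rank(query, documents, semantic_scores)
keyword_heavy = hybrid_rank(query, documents, semantic_scores, alpha=0.2)
print(ranking)
print(keyword_heavy)
```

With an even blend the semantically closest document wins, while a keyword-heavy weighting promotes the memo that contains the exact form number. That trade-off is the reason to combine the two signals instead of picking one.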

Pricing: Offers a 30-day free trial. Enterprise SaaS deployments typically start at $100,000 per year for high-scale usage.

Why it matters: The biggest barrier to AI adoption is "trust." Vectara removes that barrier by providing a system that is designed from the ground up to be accurate, secure, and fully auditable.

9. Modular (Mojo)

Best for: High-performance AI coding and unifying the software stack.

Modular is trying to solve the "two-language problem" in AI. Currently, researchers write in Python because it is easy, but engineers often have to rewrite performance-critical parts in C++ because it is fast. Modular created "Mojo," a new programming language that looks very much like Python and is designed to run at speeds comparable to C++. This allows a single team to write code that works on a laptop and scales to a supercomputer without ever having to change languages.

- Mojo Programming Language: A language designed to become a "superset" of Python over time, giving you low-level control over hardware while keeping the simple syntax you love.

- MAX Engine: A high-performance "runtime" that can execute models from any framework (like PyTorch) significantly faster than standard engines.

- Hardware Agnostic: Their software is designed to run on NVIDIA, AMD, and even Apple Silicon, so you are not locked into one chip maker.

- Parallel Computing: Mojo has built-in features that make it easy to write code that uses every single core on a modern processor.

- Python Compatibility: You can still import your favorite Python libraries, like NumPy or Pandas, directly into your Mojo projects.

Pricing: The Mojo SDK is free for community use. The Modular MAX platform for enterprise scaling uses a per-token or per-minute billing model.

Why it matters: We are reaching the limits of what standard software can do. Modular is reinventing the very language of AI to ensure that we can keep making models bigger and faster without the code becoming a total mess.

10. Mosaic AI (by Databricks)

Best for: Large enterprises building custom models on their own private data.

Mosaic AI (now part of Databricks) is the gold standard for big companies that want to build their own "mini-OpenAIs." They provide the entire factory floor for AI development. Instead of just giving you a model, they give you the tools to take your company’s massive data lakes and turn them into a custom-tuned intelligence system. Because it is built on Databricks, it comes with all the heavy-duty security and governance that big banks and healthcare companies require.

- Training Recipes: They provide "blueprints" for the world’s most famous models, so you can train your own version from scratch for a fraction of the cost.

- Unity Catalog: A single place to manage all your data, your models, and your security permissions so nothing gets lost.

- Serverless Model Serving: Deploy your custom models to a global audience with high availability and automatic scaling.

- Gateway Guardrails: A central "filter" that checks every AI response for safety, bias, and toxic language before the user sees it.

- Inference Tables: Automatically logs every single AI interaction back into your database so you can analyze it for quality later.
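
The gateway pattern described in the last two bullets (check every response, log every interaction) can be sketched in a few lines of Python. This is not Databricks code: an in-memory SQLite table stands in for an inference table, the blocklist check is deliberately naive, and `fake_model` is a hypothetical placeholder for a served model.

```python
import sqlite3

BLOCKED_TERMS = {"password", "ssn"}  # hypothetical, deliberately naive policy

db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE inference_log (prompt TEXT, response TEXT, blocked INTEGER)")

def fake_model(prompt):
    """Hypothetical model: returns a canned response per prompt."""
    canned = {"reset": "Your password is hunter2.", "hours": "We are open 9 to 5."}
    return canned.get(prompt, "I do not know.")

def gateway(prompt):
    """Call the model, apply the guardrail, and log the interaction either way."""
    response = fake_model(prompt)
    blocked = any(term in response.lower() for term in BLOCKED_TERMS)
    db.execute("INSERT INTO inference_log VALUES (?, ?, ?)", (prompt, response, int(blocked)))
    return "This response was withheld by policy." if blocked else response

replies = [gateway(p) for p in ("hours", "reset")]
blocked_count = db.execute("SELECT COUNT(*) FROM inference_log WHERE blocked = 1").fetchone()[0]
print(replies, blocked_count)
```

Because the raw response is logged even when it is withheld from the user, the table can later be queried to see how often the guardrail fires and on which prompts.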

Pricing: Billed in Databricks Units (DBUs). Foundation model serving typically starts at $0.07 per DBU, with total costs scaling based on compute intensity.

Why it matters: For a huge company, "sending data to a third party" is a non-starter. Mosaic AI allows enterprises to keep their data in their own house while still building the most advanced AI tools available.

Which one should you choose?

The choice depends entirely on where you are in the "building" process. If you need raw, unadulterated power to train a massive new model from scratch, go with CoreWeave or Lambda Labs. If you are building an AI-powered application and need a place to store its memory and track its thoughts, Pinecone and LangChain are your best friends. For those working in large corporations with strict security needs, Vectara or Mosaic AI are the only serious options. If you are a developer tired of slow code and complex scaling, Anyscale and Modular will change your life.

How does this connect to building a strong career or portfolio?

In the current market, saying "I know how to use ChatGPT" is like saying "I know how to use a microwave." It is no longer a flex. The people who are getting hired for the highest-paying roles are the ones who can show they understand the plumbing. By building projects that use Pinecone for memory, LangChain for logic, or Together AI for custom fine-tuning, you are proving that you can build production-ready systems, not just demos. These are the skills that move you from being a "user" to being an "architect," and that is exactly where the big money is.

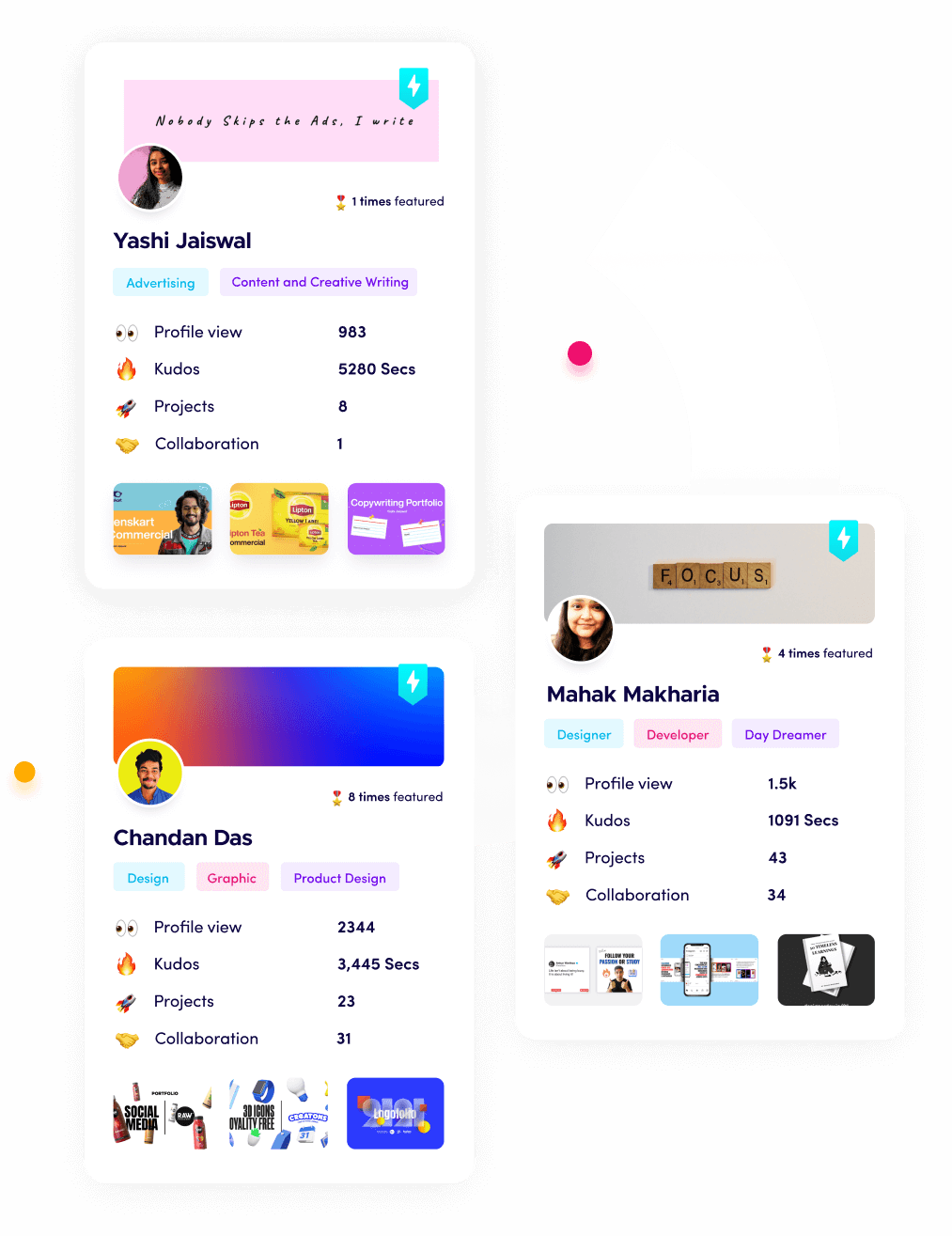

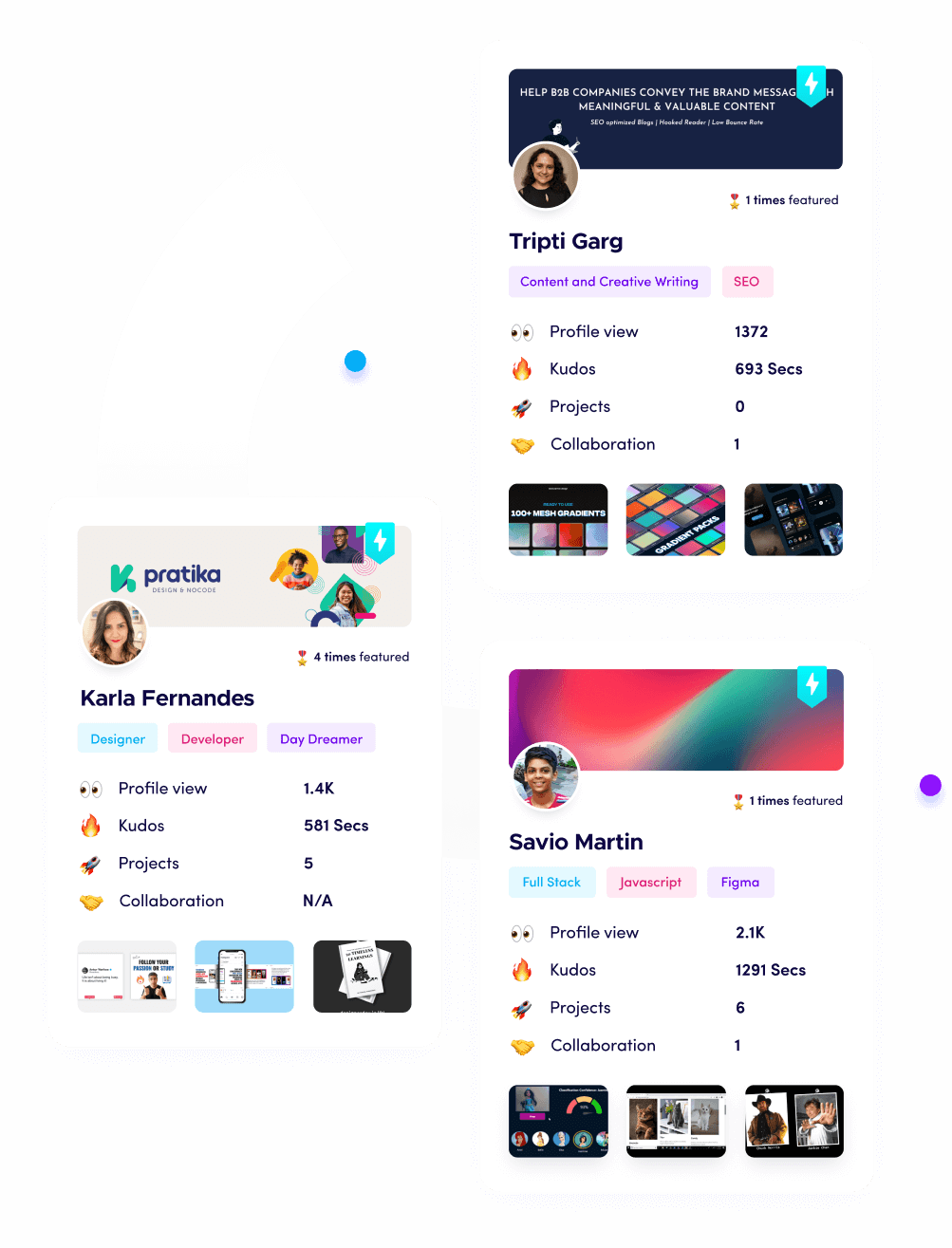

Showcase Your Proof of Work with Fueler

Building these complex AI systems is impressive, but only if people can actually see what you have done. Fueler is designed to help you document these technical wins. Instead of a boring bullet point on a resume that says "Used Pinecone," you can create a project entry on Fueler that shows your architecture diagram, your performance charts from Weights & Biases, and a video of the final agent in action. It is the best way to prove to a hiring manager that you don't just talk about AI infrastructure; you actually know how to build it.

Final Thoughts

The "AI Infrastructure" layer is the most stable and profitable part of the tech world right now. While consumer apps come and go, the companies that provide the compute, the memory, and the tools are the ones that will be here for the next decade. If you are looking to build a career or a business, stop chasing the latest "prompt engineering" hack and start learning how these ten engines work. The future isn't just being written; it's being built on these platforms.

FAQs

1. What is AI infrastructure in simple terms?

It is the collection of hardware (like GPUs) and software tools (like databases and scaling frameworks) that allow artificial intelligence models to be built, trained, and served to users.

2. Do I need to be a math genius to use these tools?

Not anymore. Most of these startups, like Pinecone and Together AI, have created "APIs" that allow you to use their power with basic coding skills.

3. Why are GPUs so important for AI development?

Unlike general-purpose CPUs, which handle a few tasks at a time, GPUs can run thousands of small calculations in parallel, which matches the large matrix operations AI models perform when they "learn" and "think" through massive amounts of data.

4. Can I build a custom AI for my small business?

Yes. Tools like Together AI and LangChain make it possible to take an existing open-source model and "teach" it your business data for a few hundred dollars.

5. How much does it cost to train a model in 2026?

While massive models still cost millions, "fine-tuning" a model for a specific task has dropped significantly in price, often costing less than $50 on platforms like Together AI.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.