Best 9 AI Infrastructure Platforms Developers Should Know

Riten Debnath

27 Mar, 2026

Last updated: March 2026

The gap between a weekend AI project and a production-grade intelligent application is no longer defined by the complexity of your code, but by the reliability of the infrastructure beneath it. In 2026, developers are moving away from managing raw server clusters and toward specialized platforms that handle the heavy lifting of GPU orchestration, low-latency inference, and automated scaling. If you want to build apps that don't just work but scale globally, choosing the right backbone is the most critical decision you will make this year.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

At a glance: Comparing the Best AI Infrastructure Platforms Developers Should Know

1. CoreWeave

Best for: Massive scale model training and enterprise-grade GPU clusters.

CoreWeave has solidified its position in 2026 as the primary alternative to legacy cloud providers for heavy AI workloads. Unlike general-purpose clouds, CoreWeave is built specifically for compute-intensive tasks, offering a massive inventory of NVIDIA’s latest hardware. By stripping away the bloat of traditional virtualization, they provide bare-metal performance that allows developers to squeeze every ounce of power out of their GPUs. Their partnership with NVIDIA ensures they are often the first to deploy new hardware generations like the Blackwell series.

- Bare-Metal GPU Access: Get direct access to NVIDIA H100 and B200 hardware without the performance tax of hypervisors or complex virtualization layers.

- InfiniBand Networking: Uses high-speed RDMA technology so GPUs on different nodes can exchange data with latency on the order of microseconds, which is essential for distributed training.

- Kubernetes-Native Scaling: The platform is built on top of Kubernetes, allowing developers to manage massive AI clusters using standard DevOps tools they already know.

- Zero Egress Fees: Unlike major competitors, they do not charge for moving data out of their cloud, making it cost-effective for data-heavy applications.

- Liquid-Cooled Infrastructure: Advanced thermal management ensures that your high-density compute nodes never throttle, maintaining consistent performance during long training runs.

Pricing: On-demand NVIDIA H100 GPUs are currently priced around $4.76 per hour. For long-term commitments (1 to 3 years), reserved pricing can drop as low as $1.90 per hour.
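To see what that gap means in practice, here is a rough sketch of the arithmetic. The two rates are the ones quoted above; the 8-GPU, one-month workload is a hypothetical example, and the reserved rate only applies if you accept a multi-year commitment.

```python
# Per-GPU hourly rates quoted in this article; real quotes will differ.
ON_DEMAND_PER_GPU_HOUR = 4.76
RESERVED_PER_GPU_HOUR = 1.90  # assumes a 1 to 3 year commitment


def gpu_cost(num_gpus: int, hours: float, rate_per_gpu_hour: float) -> float:
    """Total cost in dollars of running num_gpus for `hours` at a per-GPU hourly rate."""
    return num_gpus * hours * rate_per_gpu_hour


# Hypothetical workload: one 8-GPU node running for a 730-hour month.
on_demand = gpu_cost(8, 730, ON_DEMAND_PER_GPU_HOUR)
reserved = gpu_cost(8, 730, RESERVED_PER_GPU_HOUR)
savings = 1 - reserved / on_demand

print(f"on-demand: ${on_demand:,.2f}")
print(f"reserved:  ${reserved:,.2f}")
print(f"savings:   {savings:.0%}")
```

At these rates the reserved price works out to roughly 60% less per GPU-hour, which only pays off if the hardware actually stays busy for the length of the commitment.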

Why it matters: When your application reaches a scale where every extra hour of training time on a large cluster translates to thousands of dollars, the efficiency of bare-metal hardware becomes a necessity rather than a luxury.

2. Lambda Labs

Best for: Researchers and developers seeking the most cost-effective high-end GPU rentals.

Lambda Labs remains the fan favorite for the developer community because they make high-performance computing accessible. They have successfully positioned themselves as the most transparent provider in the market, avoiding the "contact sales" walls that plague the industry. Their Cloud GPU service is designed for simplicity, allowing a single developer to spin up a powerful machine with a pre-configured deep learning stack in under a minute.

- 1-Click Deep Learning Stack: Instances come with NVIDIA drivers, CUDA, PyTorch, and TensorFlow pre-installed and optimized for the specific hardware you are renting.

- Public Pricing Transparency: Every GPU rate is listed publicly on their website, ensuring you always know exactly what your burn rate is without hidden fees.

- High-Speed NVMe Storage: Fast local storage ensures that data bottlenecking never slows down your model training or inference cycles.

- On-Demand & Reserved Instances: Flexible billing allows you to rent a single GPU for an hour or an entire cluster for a year with significant discounts.

- Global Data Centers: Strategically located hardware clusters reduce latency for developers and end-users regardless of their physical location.

Pricing: NVIDIA A100 80GB instances are approximately $2.06 per hour, while the flagship H100 80GB on-demand instances are priced at $2.99 per hour.

Why it matters: Lambda Labs removes the "gatekeeping" of high-end AI hardware, allowing solo developers and startups to compete with giant tech firms by providing affordable access to the same chips.

3. Together AI

Best for: Production-grade serverless inference and fine-tuning open-source models.

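Together AI has emerged as the leader for developers who want to use open-source models like Llama 3 or DeepSeek without managing the underlying servers. They offer a "Serverless Inference" model where you simply call an API and pay for what you use. Their inference stack leans on low-level optimizations such as FlashAttention, a memory-efficient attention algorithm created by Tri Dao, now Together AI's chief scientist. FlashAttention itself is open source and widely adopted, so the speed advantage comes from how deeply Together tunes its serving engine around this kind of work rather than from a proprietary secret.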

- Unified Inference API: Access over 200 open-source models through a single OpenAI-compatible API, making it easy to swap models as newer ones are released.

- Custom Fine-Tuning: Upload your dataset and fine-tune models directly on their platform with a few clicks or a single API command.

- Dedicated GPU Clusters: For high-traffic apps, you can reserve dedicated GPUs to ensure zero-queue time and consistent latency for your users.

- Turbo Model Versions: They provide optimized "Turbo" versions of popular models that deliver faster response times and lower costs per token.

- Model Registry: A secure place to host and deploy your own custom-trained weights while benefiting from Together’s optimized inference engine.
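Because the API follows the OpenAI chat format, swapping models usually comes down to changing one string. The sketch below builds such a request with the standard library and deliberately does not send it. The base URL is an assumption to verify against Together AI's documentation, and the model IDs and `build_chat_request` helper are hypothetical placeholders.

```python
import json
import urllib.request

# Assumed base URL for the OpenAI-compatible endpoint; confirm it in the provider docs.
BASE_URL = "https://api.together.xyz/v1"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Swapping models is a one-string change. Both model IDs are placeholders.
req_a = build_chat_request("example-org/model-a", "Hello", api_key="YOUR_API_KEY")
req_b = build_chat_request("example-org/model-b", "Hello", api_key="YOUR_API_KEY")

# To actually call the API, pass a request to urllib.request.urlopen()
# with a real key and a model ID from the provider's catalog.
```

Keeping the model name as a parameter rather than hard-coding it is what makes it cheap to try a newer model when one is released.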

Pricing: Serverless inference for Llama 3.3 70B is roughly $0.88 per million tokens. Dedicated H100 GPU endpoints start at $3.99 per hour.

Why it matters: It allows developers to ship features in days rather than months by handling the complex world of model deployment and scaling automatically.

4. RunPod

Best for: Developers needing a flexible marketplace for individual GPUs and community-driven pods.

RunPod operates as a specialized marketplace for GPU compute, offering both "Secure Cloud" and "Community Cloud" options. It is the go-to platform for developers who need specific configurations or are looking for the absolute lowest price points for non-sensitive data processing. Their "Pods" are incredibly lightweight and support instant SSH and VS Code integration, making the dev-to-cloud loop feel instantaneous.

- Secure Cloud Tiers: Provides enterprise-grade security and reliability for production workloads that require strict data privacy.

- Community Cloud: A marketplace of distributed GPUs at lower prices, perfect for personal projects, testing, or massive batch processing.

- Network Volumes: Attach persistent storage that can be shared across multiple pods, making it easy to manage large datasets.

- Serverless Endpoints: Deploy models as serverless functions that scale to zero when not in use, saving you money during idle periods.

- Built-in Tunnels: Easy access to your pods via HTTP or TCP tunnels without having to manually configure complex networking or firewalls.

Pricing: Community Cloud RTX 4090s start as low as $0.69 per hour. Secure Cloud H100 SXM instances are priced competitively at $2.69 per hour.

Why it matters: RunPod provides the most granular control over your compute budget, ensuring you never pay for more "cloud" than you actually need.

5. Google Vertex AI

Best for: Full-stack enterprise AI lifecycles and integration with the Google Cloud ecosystem.

Vertex AI is the most comprehensive platform on this list, offering a "one-stop-shop" for everything from data labeling to model monitoring. For developers already using Google Cloud, Vertex provides a seamless path to integrate Gemini and other state-of-the-art models into their existing architecture. It is particularly powerful for those who need TPUs (Tensor Processing Units), which are Google’s custom-built AI chips.

- Gemini Pro & Flash Access: Native integration with Google’s flagship multimodal models, with context windows of a million tokens or more depending on the model version.

- AutoML Features: Allows developers with limited machine learning expertise to build high-quality custom models using Google’s automated training pipelines.

- Vertex AI Search: A managed service for building RAG (Retrieval-Augmented Generation) systems that can "chat" with your private company data.

- Managed Notebooks: Fully integrated JupyterLab environments with pre-installed libraries and direct access to Google’s data lakes.

- Model Monitoring: Automatically alerts you if your production model starts losing accuracy or if the input data shifts significantly over time.

Pricing: Pay-as-you-go. Gemini 2.5 Flash is priced at $0.30 per million input tokens. Custom training on A3 HighGPU nodes is roughly $101 per hour for an 8-GPU cluster.

Why it matters: Vertex AI acts as an invisible team of infrastructure engineers, automating the "boring" parts of AI so you can focus on the user experience.

6. Pinecone

Best for: High-performance vector search and long-term memory for AI agents.

No AI application is complete without a way to store and retrieve information efficiently. Pinecone is the industry standard for vector databases, which are essential for RAG (Retrieval-Augmented Generation). It allows your AI to "remember" millions of documents and find the most relevant one in milliseconds. Their new "Serverless" architecture has made it incredibly affordable for startups to start with massive datasets.

- Serverless Architecture: Pay only for the reads and writes you perform, with no need to manage or provision underlying server capacity.

- Instant Freshness: Vectors become searchable as soon as they are uploaded, ensuring your AI always has the most up-to-date information.

- Hybrid Search: Combines semantic (meaning-based) search with traditional keyword search to provide the most accurate results possible.

- Metadata Filtering: Narrow down your search results instantly by filtering on custom tags like "user_id," "date," or "category."

- Multi-Cloud Support: Available on AWS, Azure, and GCP, allowing you to keep your data close to your compute for maximum speed.
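If vector search with metadata filtering feels abstract, this toy version in plain Python shows the idea: keep only the records whose tags match, then rank them by cosine similarity to the query. It is not Pinecone's API. The `search` function, the record layout, and the three-dimensional vectors are all made up for illustration, and a real vector database uses approximate indexes rather than a full scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(index, query_vector, top_k=2, metadata_filter=None):
    """Return the ids of the top_k most similar records that match the filter."""
    metadata_filter = metadata_filter or {}
    # Step 1: metadata filtering keeps only records whose tags match exactly.
    candidates = [
        record
        for record in index
        if all(record["metadata"].get(k) == v for k, v in metadata_filter.items())
    ]
    # Step 2: rank the remaining records by similarity to the query.
    ranked = sorted(
        candidates,
        key=lambda record: cosine_similarity(record["vector"], query_vector),
        reverse=True,
    )
    return [record["id"] for record in ranked[:top_k]]


# Tiny hypothetical index; real embeddings have hundreds or thousands of dimensions.
index = [
    {"id": "doc-1", "vector": [1.0, 0.0, 0.0], "metadata": {"category": "billing"}},
    {"id": "doc-2", "vector": [0.9, 0.1, 0.0], "metadata": {"category": "support"}},
    {"id": "doc-3", "vector": [0.0, 1.0, 0.0], "metadata": {"category": "billing"}},
]

unfiltered = search(index, [1.0, 0.0, 0.0], top_k=2)
billing_only = search(index, [1.0, 0.0, 0.0], top_k=2, metadata_filter={"category": "billing"})
```

Without the filter, the two nearest documents win regardless of category. With the filter, `doc-2` is excluded before ranking even though it is a close match.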

Pricing: The Starter plan is free for up to 2GB of data. The Standard plan has a $50 monthly minimum, with write units at $4 per million and read units at $16 per million.
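A quick way to sanity-check a usage-based bill is to model it. This sketch uses the unit prices quoted above and assumes the $50 minimum acts as a simple floor on usage charges. It ignores storage and any other line items, so treat it as a rough estimate rather than Pinecone's actual billing logic.

```python
# Unit prices quoted in this article; check the current pricing page before relying on them.
WRITE_PRICE_PER_MILLION = 4.0
READ_PRICE_PER_MILLION = 16.0
MONTHLY_MINIMUM = 50.0  # assumed to be a floor on usage charges


def standard_plan_bill(write_units: int, read_units: int) -> float:
    """Estimate a monthly bill from read and write units alone."""
    usage = (
        write_units / 1_000_000 * WRITE_PRICE_PER_MILLION
        + read_units / 1_000_000 * READ_PRICE_PER_MILLION
    )
    return max(usage, MONTHLY_MINIMUM)


# Hypothetical months: a quiet one that hits the minimum, and a busy one.
quiet_month = standard_plan_bill(write_units=1_000_000, read_units=1_000_000)
busy_month = standard_plan_bill(write_units=5_000_000, read_units=10_000_000)
print(f"quiet month: ${quiet_month:.2f}")
print(f"busy month:  ${busy_month:.2f}")
```

Reads cost four times as much as writes at these prices, so query volume tends to dominate the bill for a read-heavy RAG app.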

Why it matters: Pinecone helps reduce the "hallucination" problem by giving your AI a reliable, high-speed library of facts to reference before it speaks.

7. Modal Labs

Best for: Serverless Python functions that need to scale from zero to 1,000 GPUs instantly.

Modal is perhaps the most "developer-centric" tool on this list. It allows you to run Python code in the cloud as easily as running it on your laptop. You don't have to write Dockerfiles or manage Kubernetes YAML files; you just add a decorator to your Python function, and Modal handles the containerization and GPU provisioning in the background. It is perfect for modern, event-driven AI applications.

- Cold Starts in Seconds: Containers spin up almost instantly, making it viable for real-time inference without keeping expensive GPUs idling 24/7.

- Zero Configuration Infra: No need for Dockerfiles or Terraform; you declare the container image and its dependencies in Python next to your function, and Modal builds and runs it in the cloud.

- High-Performance Mounting: Mount large model weights or datasets into your cloud functions as if they were local files.

- Integrated Scheduling: Easily run batch jobs or cron tasks for model retraining or data processing directly from your code.

- Automatic Scaling: Handles traffic spikes by scaling horizontally across thousands of GPUs and then scaling back to zero.

Pricing: Billing is per-second of compute. H100 GPUs cost approximately $4.56 per hour of active use. You are never charged for idle time.
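Per-second billing is easiest to appreciate with numbers. The sketch below uses the H100 rate quoted above and a hypothetical traffic pattern. It counts GPU time only and ignores cold-start seconds and any CPU or memory charges, so the real figure will be somewhat higher.

```python
H100_RATE_PER_HOUR = 4.56  # rate quoted in this article


def per_second_cost(active_seconds: float, rate_per_hour: float = H100_RATE_PER_HOUR) -> float:
    """Cost in dollars of `active_seconds` of GPU time billed per second."""
    return active_seconds * rate_per_hour / 3600


# Hypothetical workload: 2,000 requests a day, each using 3 seconds of GPU time.
daily_active_seconds = 2_000 * 3
serverless_daily = per_second_cost(daily_active_seconds)

# The same GPU kept running around the clock at the same hourly rate.
always_on_daily = per_second_cost(24 * 3600)

print(f"pay per second: ${serverless_daily:.2f} per day")
print(f"always on:      ${always_on_daily:.2f} per day")
```

The workload here keeps the GPU busy for under two hours a day, which is why paying only for active seconds comes out so far ahead of an always-on machine.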

Why it matters: Modal allows a single developer to manage infrastructure that would typically require a whole DevOps team, drastically reducing the "time to market."

8. Anyscale (Ray)

Best for: Scaling complex Python and AI applications across giant clusters with ease.

Anyscale is the commercial platform built by the creators of Ray, the open-source framework used by OpenAI and Uber. It is designed for developers who have outgrown a single machine and need to distribute their work across dozens or hundreds of nodes. Whether you are doing massive data ingestion or distributed training, Anyscale makes the cluster feel like a single, unified computer.

- Ray Serve Integration: Effortlessly deploy and scale multiple models in a single pipeline with intelligent request batching.

- Anyscale Workspaces: Collaborative environments that allow teams to share code, data, and compute resources without configuration conflicts.

- Spot Instance Optimization: Automatically uses cheaper "spot" instances from cloud providers while handling the risk of interruptions seamlessly.

- End-to-End Observability: Detailed dashboards that show exactly how your resources are being used across the entire cluster.

- Cloud-Agnostic: Run your workloads on AWS or GCP using the same code, preventing vendor lock-in.
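Request batching is worth a quick illustration, because it explains a lot of the throughput gains on GPUs. The sketch below is plain Python, not Ray Serve's API: `run_in_batches` and `fake_model` are hypothetical names, and it batches a fixed list, whereas a serving framework typically forms batches dynamically as requests arrive.

```python
from typing import Callable, List, Sequence


def run_in_batches(
    requests: Sequence[str],
    model_fn: Callable[[Sequence[str]], List[str]],
    max_batch_size: int,
) -> List[str]:
    """Call model_fn once per batch instead of once per request, preserving order."""
    results: List[str] = []
    for start in range(0, len(requests), max_batch_size):
        batch = requests[start:start + max_batch_size]
        results.extend(model_fn(batch))
    return results


# Stand-in for a model that processes a whole batch in one forward pass.
calls: List[int] = []


def fake_model(batch: Sequence[str]) -> List[str]:
    calls.append(len(batch))  # record how many requests each call handled
    return [text.upper() for text in batch]


requests = [f"prompt {i}" for i in range(10)]
outputs = run_in_batches(requests, fake_model, max_batch_size=4)
print(f"{len(requests)} requests served with {len(calls)} model calls")
```

Ten requests are served with three model calls instead of ten, which is the effect batching aims for when each call has a fixed overhead.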

Pricing: Uses a "Bring Your Own Cloud" (BYOC) model. You pay Anyscale a platform fee (roughly $4.95 per hour for an A100 node) on top of your underlying cloud provider’s hardware costs.

Why it matters: It provides the "gold standard" for distributed computing, ensuring that as your app grows from 100 users to 10 million, your backend won't be the bottleneck.

9. LangSmith (by LangChain)

Best for: Debugging, testing, and monitoring complex LLM chains and agents.

Building an AI app is one thing; knowing why it failed is another. LangSmith is the "observability" layer of the AI infrastructure stack. It allows you to trace every step of your AI’s "thought process," see exactly what prompt was sent, how much it cost, and where the logic broke down. In 2026, you cannot ship a professional AI agent without a tool like this to ensure quality control.

- Full Traceability: Visualize the entire chain of events for every user request, including nested tool calls and data retrievals.

- Dataset Management: Easily convert failed user interactions into test cases to ensure your model never makes the same mistake twice.

- A/B Testing for Prompts: Compare different versions of your prompts side-by-side to see which one performs better with real-world data.

- Cost & Latency Tracking: Monitor your API spend and response times in real-time to identify inefficient model calls.

- Human-in-the-Loop: Built-in tools for humans to review and "grade" AI responses, which can then be used to further fine-tune your models.
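To make "tracing" concrete, here is a minimal sketch of the underlying idea: wrap each step in a span that records its name, its parent step, and how long it took. This is not LangSmith's SDK. The `Tracer` class and the step names are hypothetical, and a real tool also captures prompts, outputs, token counts, and errors.

```python
import time
from contextlib import contextmanager


class Tracer:
    """Toy tracer that records nested spans with their parent and duration."""

    def __init__(self):
        self.spans = []   # finished spans, in the order they completed
        self._stack = []  # names of the spans that are currently open

    @contextmanager
    def span(self, name, **attributes):
        parent = self._stack[-1] if self._stack else None
        self._stack.append(name)
        start = time.perf_counter()
        try:
            yield
        finally:
            duration = time.perf_counter() - start
            self._stack.pop()
            self.spans.append(
                {"name": name, "parent": parent, "seconds": duration, **attributes}
            )


tracer = Tracer()
with tracer.span("handle_request", user="demo"):
    with tracer.span("retrieve_documents"):
        pass  # a vector search would run here
    with tracer.span("call_model", prompt="What is RAG?"):
        pass  # the LLM call would run here

for span in tracer.spans:
    print(f'{span["name"]} (parent: {span["parent"]}) took {span["seconds"]:.6f}s')
```

Inner spans finish first, so they appear before the outer `handle_request` span. The parent field is what lets a UI rebuild the tree of nested calls.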

Pricing: The Developer plan is free for the first 5,000 traces per month. The Plus plan starts at $39 per seat per month and includes higher trace limits.

Why it matters: It turns the "black box" of AI into a transparent, debuggable system, which is the only way to build trust with enterprise clients.

Which one should you choose?

The "best" platform depends entirely on your current stage of development. If you are in the prototyping phase and want zero friction, Modal or RunPod are hard to beat for their speed and low entry cost. If you are building a production-ready RAG application, a pairing like Together AI for inference and Pinecone for memory covers the core needs. For large-scale enterprise training, CoreWeave remains the king of performance, while Google Vertex AI is the choice for teams that want a fully managed, hands-off experience. Finally, regardless of your stack, an observability tool like LangSmith belongs in the toolkit of anyone serious about monitoring their app's performance.

How does this connect to building a strong career or portfolio?

In today's market, saying "I can write code" is no longer enough; you need to prove you can deploy and scale systems. When you build a project using these professional infrastructure tools, you aren't just making a toy; you are demonstrating that you understand the operational realities of the modern tech stack. By showcasing these projects in a portfolio, you signal to employers that you are ready to handle production-level responsibilities. This is where your work samples become your most powerful resume.

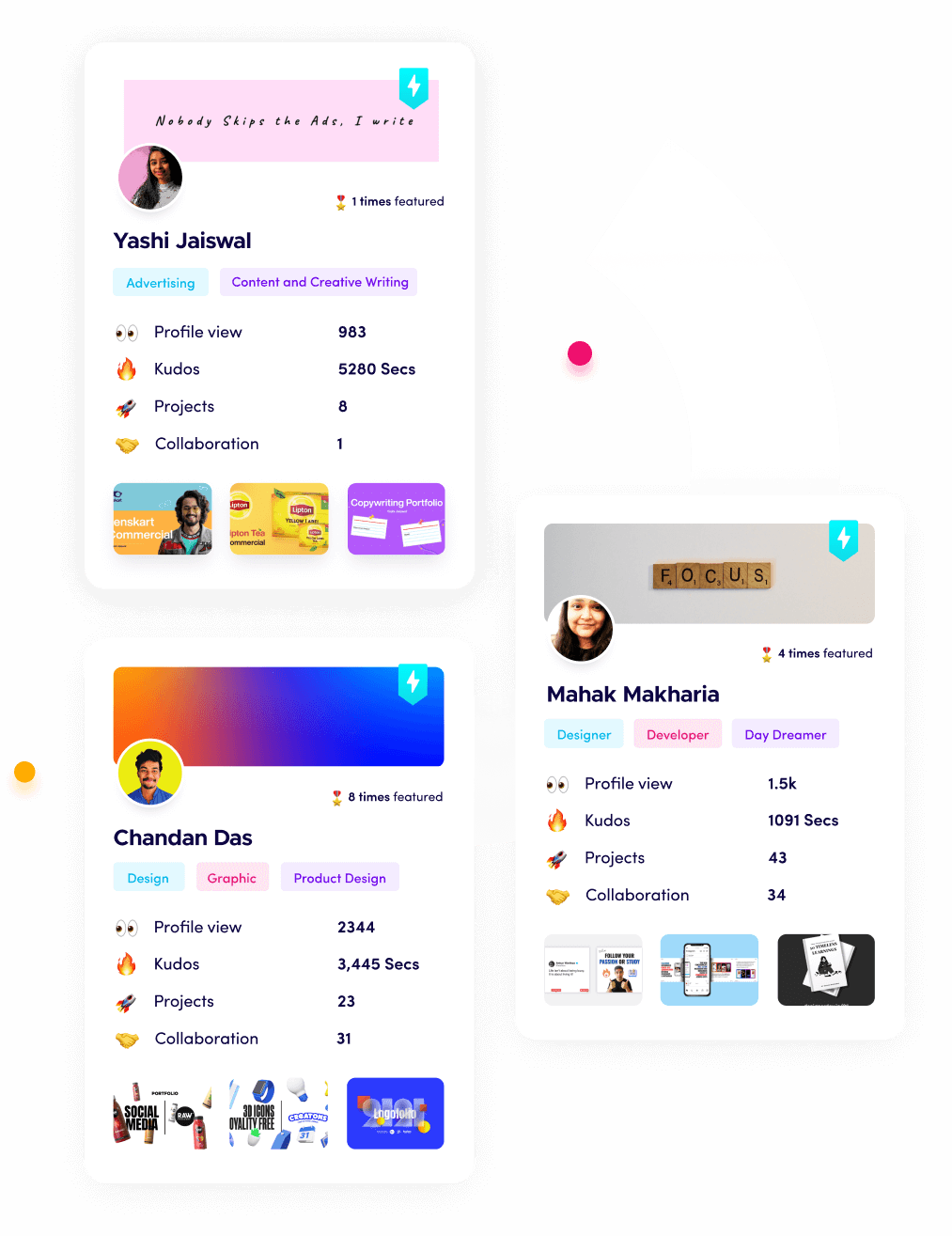

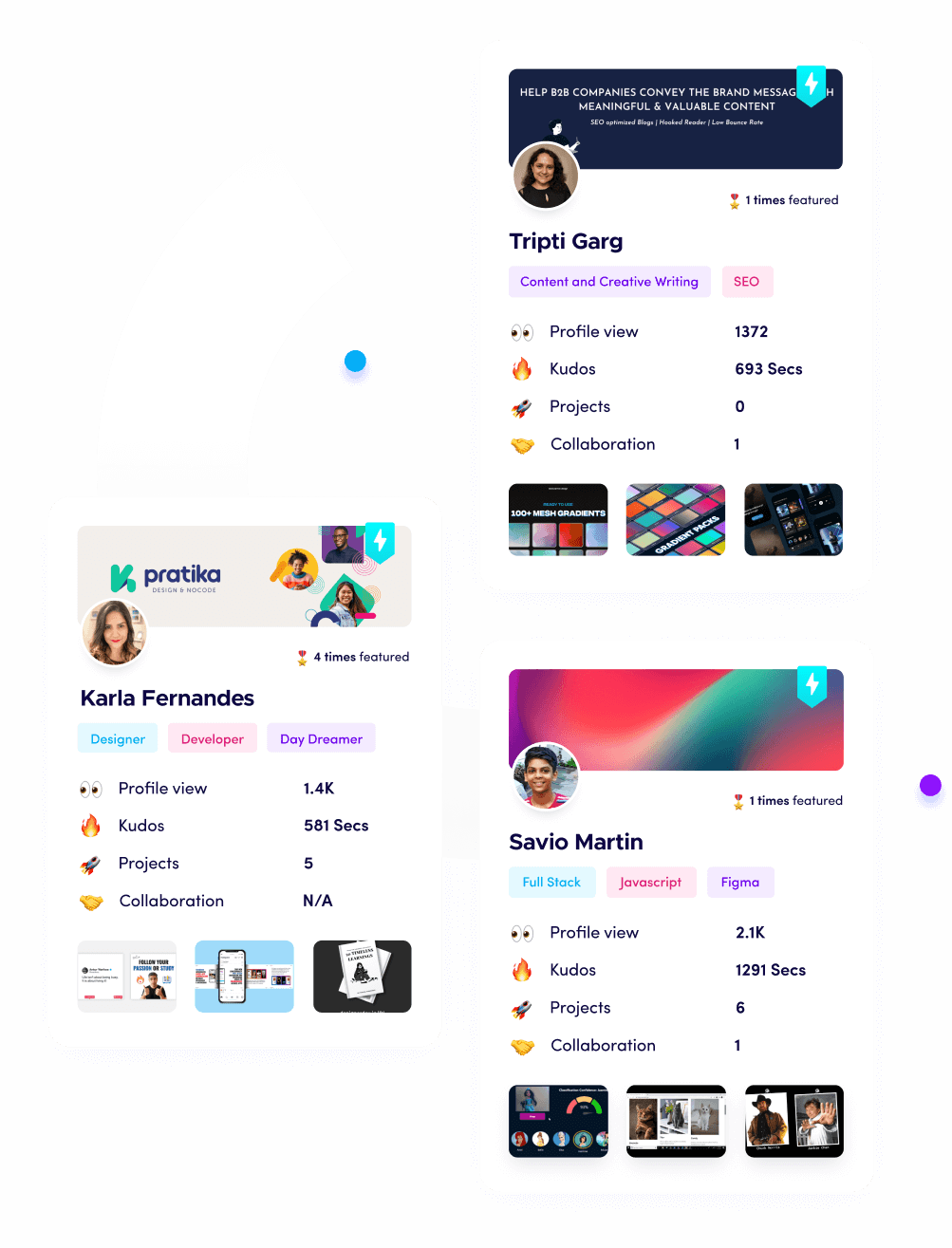

Showcasing Your Skills with Fueler

Building an incredible AI app is a massive achievement, but it doesn't help your career if no one sees the complexity behind it. This is where Fueler comes in. Instead of just listing "AI Development" as a bullet point on a flat resume, Fueler allows you to create a high-impact portfolio that showcases the actual assignments, work samples, and infrastructure choices you made. You can document how you scaled a model on Modal or how you optimized a Pinecone index, giving recruiters a "skills-first" view of what you are truly capable of. It’s the difference between saying you’re a developer and proving you’re an engineer.

Final Thoughts

The landscape of AI infrastructure is evolving at a breakneck pace, but the trend is clear: abstraction is winning. As a developer in 2026, your value lies in your ability to orchestrate these powerful platforms to solve real problems, not in your ability to manually configure GPU drivers. By mastering these nine tools, you are effectively future-proofing your career and ensuring that your applications are built on a foundation that can handle the demands of the next decade. Choose your stack wisely, build in public, and always prioritize the user's experience over the complexity of the hardware.

FAQs

What are the best free AI infrastructure tools for beginners in 2026?

Most major platforms offer a way to start for free. Pinecone and LangSmith have free tiers, and Google Cloud gives new customers trial credits (around $300) that can be spent on Vertex AI, which is enough for beginners to build and deploy small-scale projects without any upfront cost.

How do I choose between H100 and A100 GPUs for my app?

For most standard inference and fine-tuning tasks, the A100 is more than enough and significantly cheaper. You should only jump to the H100 if you are training very large models or require the absolute lowest latency for high-traffic production environments.
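One way to decide is to compare cost per job rather than cost per hour. The sketch below uses the Lambda Labs rates quoted earlier in this article. The speedup factor is an assumption you would need to measure on your own workload.

```python
# On-demand hourly rates quoted earlier in this article.
A100_RATE_PER_HOUR = 2.06
H100_RATE_PER_HOUR = 2.99


def job_cost(hours_on_a100: float, speedup_on_h100: float):
    """Return (A100 cost, H100 cost) for a job, given how much faster the H100 runs it."""
    a100_cost = hours_on_a100 * A100_RATE_PER_HOUR
    h100_cost = hours_on_a100 / speedup_on_h100 * H100_RATE_PER_HOUR
    return a100_cost, h100_cost


# The H100 is cheaper per job once its speedup exceeds the ratio of the hourly prices.
BREAK_EVEN_SPEEDUP = H100_RATE_PER_HOUR / A100_RATE_PER_HOUR

# Hypothetical 10-hour A100 job at two assumed speedups.
slow_case = job_cost(10, speedup_on_h100=1.2)
fast_case = job_cost(10, speedup_on_h100=2.0)
print(f"break-even speedup: {BREAK_EVEN_SPEEDUP:.2f}x")
print(f"1.2x speedup: A100 ${slow_case[0]:.2f} vs H100 ${slow_case[1]:.2f}")
print(f"2.0x speedup: A100 ${fast_case[0]:.2f} vs H100 ${fast_case[1]:.2f}")
```

At these prices the break-even point is a speedup of about 1.45x. Below that the A100 is cheaper per job, and above it the H100 finishes both sooner and for less.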

Can I build an AI app without using a vector database like Pinecone?

Technically, yes, but it is not recommended for apps that need to access large amounts of custom data. Without a vector database to retrieve only the relevant pieces, you are limited to whatever fits in the model's context window, and your API costs will skyrocket as you try to pass too much data in every single prompt.

Is it better to use serverless AI tools or dedicated GPU clusters?

Serverless tools (like Modal or Together AI) are better for variable traffic and starting out because you only pay for what you use. Dedicated clusters are better for high-volume, constant traffic, where the hourly rate of a reserved GPU becomes cheaper than the "per-token" or "per-second" serverless cost.
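You can estimate the crossover point with a few lines of arithmetic. This sketch uses the Together AI prices quoted earlier in this article and assumes a single dedicated GPU can actually sustain the traffic in question, which depends on the model and has to be measured.

```python
# Prices quoted earlier in this article.
SERVERLESS_PRICE_PER_MILLION_TOKENS = 0.88
DEDICATED_PRICE_PER_HOUR = 3.99


def break_even_tokens_per_hour() -> float:
    """Hourly token volume at which serverless and dedicated cost the same."""
    return DEDICATED_PRICE_PER_HOUR / SERVERLESS_PRICE_PER_MILLION_TOKENS * 1_000_000


def cheaper_option(tokens_per_hour: float) -> str:
    """Pick the cheaper option for a steady hourly token volume."""
    serverless_cost = tokens_per_hour / 1_000_000 * SERVERLESS_PRICE_PER_MILLION_TOKENS
    return "serverless" if serverless_cost < DEDICATED_PRICE_PER_HOUR else "dedicated"


break_even = break_even_tokens_per_hour()
print(f"break-even: {break_even:,.0f} tokens per hour")
print("1M tokens/hour  ->", cheaper_option(1_000_000))
print("10M tokens/hour ->", cheaper_option(10_000_000))
```

At these prices the crossover sits at roughly 4.5 million tokens per hour of sustained traffic. Spiky or low-volume apps stay well below that, which is why serverless is the usual starting point.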

Do these AI infrastructure platforms work with any programming language?

While most of these platforms offer REST APIs that can be called from any language, the best support and libraries are almost always in Python and JavaScript/TypeScript, as these are the primary languages of the AI development community.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.