AI Voice Agents vs Chat Agents: 10 Key Differences and Use Cases

Riten Debnath

24 Feb, 2026

The year 2026 has marked a permanent split in how businesses deploy artificial intelligence. We have moved past the era where "AI" was just a box on a screen. Today, the choice between a Voice Agent and a Chat Agent is a strategic decision based on human psychology, physical environment, and the urgency of the task. While a Chat Agent is like a high-end digital textbook that you can study at your own pace, a Voice Agent is like a live emergency responder who must understand your tone, your pauses, and your surroundings in a fraction of a second.

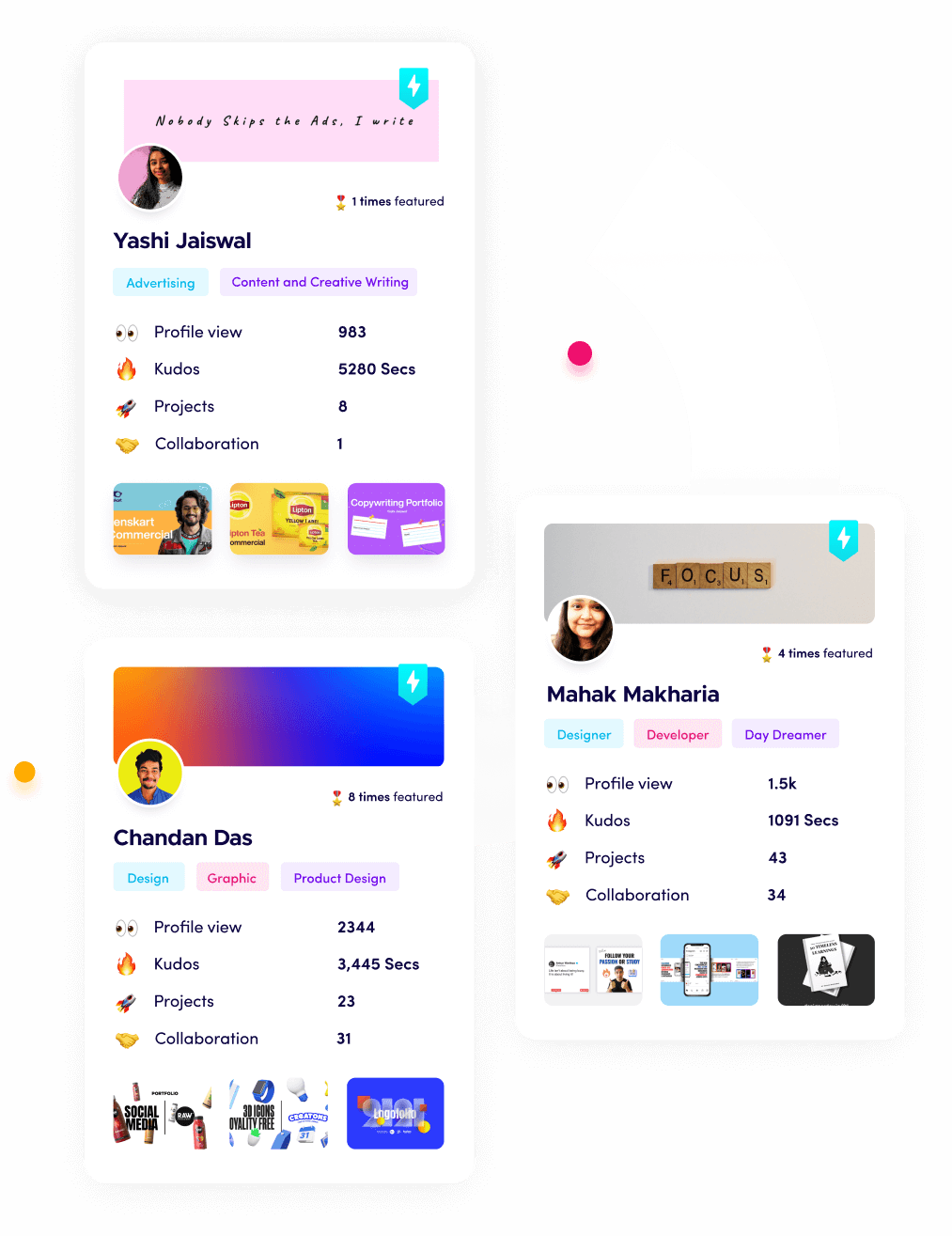

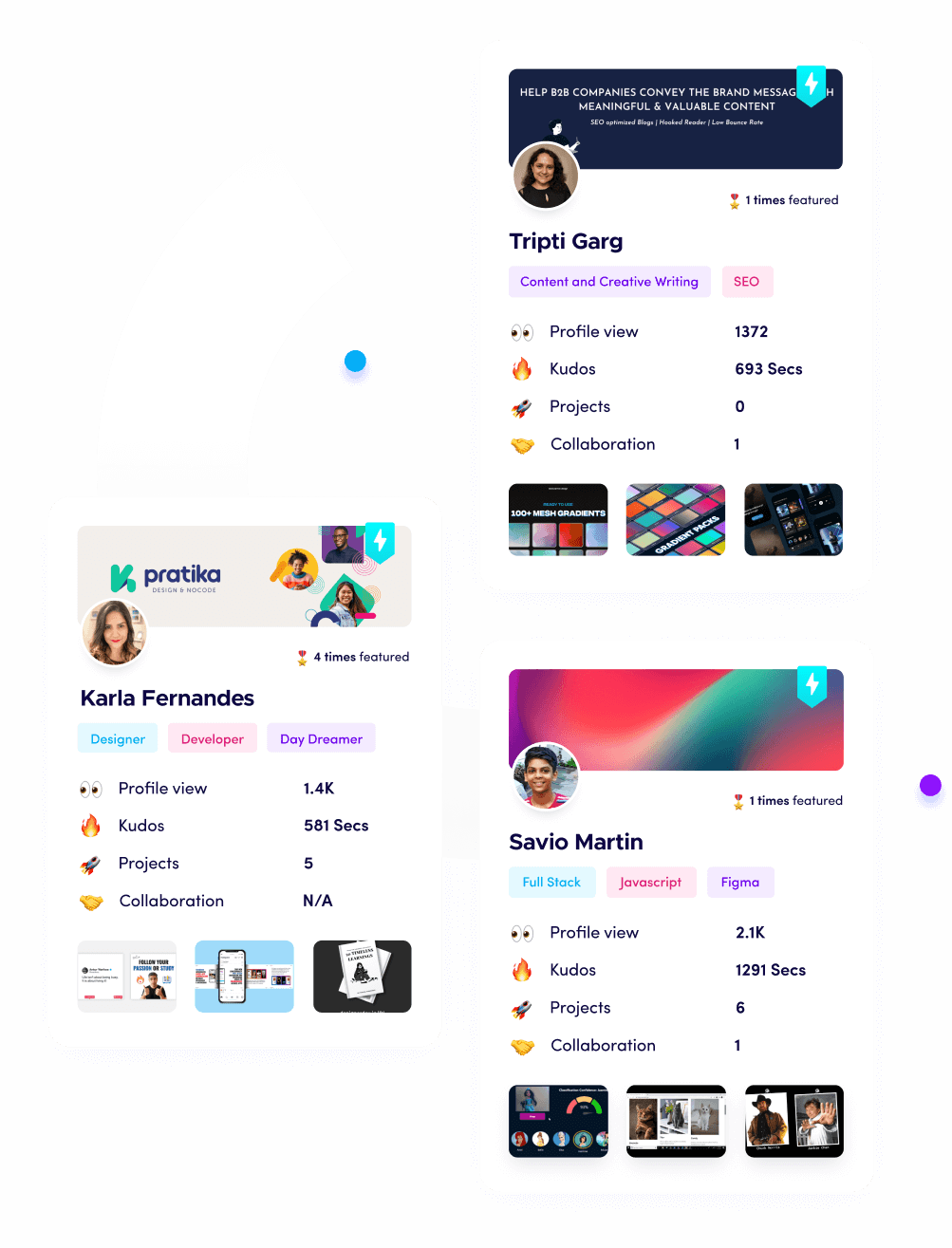

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. The "Silence Budget" and Processing Speed

The single biggest difference between voice and chat is how we perceive time. When you are typing in a chat window, you are comfortable waiting five or even ten seconds for a highly detailed and perfect answer because you can see a "typing" animation. However, in a phone call or a face-to-face conversation, a silence of more than one second feels like the call has been dropped or the person has ignored you. Voice agents have to operate with a "Silence Budget" of less than 500 milliseconds to feel human.

- Instant Reaction Workflows: Voice agents use a technology called "Streaming Inference," which means they start processing the very first word you say while you are still speaking the rest of your sentence, allowing them to have an answer ready the exact moment you stop talking without any awkward transition.

- Chunked Audio Delivery: To keep things fast, voice agents don't wait for a full sentence to be generated by the AI; they send "chunks" of audio to your ear as soon as the first few words are ready, creating a continuous flow of speech that sounds natural and responsive rather than robotic and jumpy.

- Latency-Filling Phrases: When the AI needs an extra second to look up a complex piece of data, a voice agent is programmed to say things like "That's a great question, let me check that for you," which keeps the human on the line engaged and prevents them from hanging up due to a sudden, unexplained silence.

- High-Speed Network Mapping: In 2026, voice agents are hosted on servers that are physically close to the user's city to shave off those tiny fractions of a second it takes for data to travel across the country, ensuring the conversation feels as snappy as talking to someone standing right next to you.

- Simplified Decision Paths: Because they have to be so fast, voice agents are often designed to make quicker, simpler decisions than chat agents, prioritizing a fast and helpful "good" answer over a slow and perfect "excellent" answer that would take too long to compute and deliver vocally.
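
To make the chunked delivery idea concrete, here is a minimal Python sketch. It assumes the AI's reply arrives word by word and that some text-to-speech engine can accept short pieces of text; the function name `speakable_chunks` and the 8-word limit are illustrative assumptions, not part of any specific product.

```python
from typing import Iterable, Iterator

# Hypothetical values: tune these for your own TTS engine.
MAX_WORDS_PER_CHUNK = 8
BREAK_CHARS = (".", ",", "?", "!", ";", ":")


def speakable_chunks(tokens: Iterable[str],
                     max_words: int = MAX_WORDS_PER_CHUNK) -> Iterator[str]:
    """Group streamed words into short chunks that can be sent to TTS early."""
    buffer = []
    for token in tokens:
        buffer.append(token)
        # Flush at a natural pause (punctuation) or when the chunk gets long.
        if token.endswith(BREAK_CHARS) or len(buffer) >= max_words:
            yield " ".join(buffer)
            buffer = []
    if buffer:  # flush whatever is left when the stream ends
        yield " ".join(buffer)


# Simulated word stream standing in for a streaming model response.
reply = "Sure, your flight is confirmed for 6 PM tomorrow from gate twelve.".split()
chunks = list(speakable_chunks(reply))
# chunks == ["Sure,", "your flight is confirmed for 6 PM tomorrow", "from gate twelve."]
```

The first chunk ("Sure,") can start playing while the rest of the sentence is still being generated, which is the whole point of the silence budget.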

Why it matters:

The way an agent handles time determines its success. In the world of AI Voice Agents vs. AI Chat Agents, speed is the "make or break" factor for vocal interactions.

2. Emotional Tone and Vocal Energy

A chat agent only has words to work with. If a chat agent says, "I am sorry to hear that," it can feel cold and corporate. But a voice agent can say those exact same words with a soft, sympathetic tone that actually makes the user feel cared for. In 2026, voice agents use "Emotional Prosody," which means they can change their pitch, their volume, and how fast they talk to match the mood of the person they are helping.

- Sentiment Sensing: Voice agents listen to the "shakiness" or "volume" of a user's voice to detect if they are angry, sad, or confused, and they automatically adjust their own voice to be more calming or more professional depending on what the situation requires at that exact moment.

- Emphasis and Meaning: By changing which word they emphasize in a sentence, a voice agent can change the entire meaning of a message, making sure the most important information stands out to the listener, something that is much harder to do with just plain text on a screen.

- Human-Like Breathing: Advanced voice agents now include tiny, artificial "breaths" and natural pauses in their speech patterns, which removes the "uncanny valley" feeling of a machine that talks forever without stopping, making the AI feel more like a friendly companion and less like a computer.

- Tone Consistency: Unlike humans who might get grumpy or tired after an eight-hour shift, a voice agent maintains a perfect, helpful, and high-energy tone for every single caller, ensuring that the last customer of the day gets the same high-quality experience as the very first one.

- Contextual Vocal Styles: A voice agent can instantly switch its entire personality from a "fun and energetic" shopping assistant to a "serious and focused" medical coordinator based on the specific department the user is calling, providing a tailored experience that matches the user's expectations.
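
One common way to control pitch, volume, and speaking rate is SSML, the W3C markup that many speech engines accept. The sketch below assumes a sentiment label has already been detected upstream; the mood table and the `to_ssml` helper are hypothetical, and whether a given engine honors every attribute is something to verify against its documentation.

```python
from xml.sax.saxutils import escape

# Hypothetical mood-to-prosody table. The attribute values themselves
# ("slow", "low", "soft", ...) are standard SSML prosody labels.
PROSODY_BY_MOOD = {
    "angry": {"rate": "slow", "pitch": "low", "volume": "soft"},
    "confused": {"rate": "slow", "pitch": "medium", "volume": "medium"},
    "neutral": {"rate": "medium", "pitch": "medium", "volume": "medium"},
}


def to_ssml(text: str, caller_mood: str) -> str:
    """Wrap a reply in an SSML <prosody> element chosen by the caller's mood."""
    settings = PROSODY_BY_MOOD.get(caller_mood, PROSODY_BY_MOOD["neutral"])
    attrs = " ".join(f'{name}="{value}"' for name, value in settings.items())
    # escape() protects characters such as & and < inside the spoken text.
    return f"<speak><prosody {attrs}>{escape(text)}</prosody></speak>"


ssml = to_ssml("I am sorry to hear that.", "angry")
```

The same words get a slower, lower, softer delivery for an upset caller, while an unknown mood falls back to neutral settings.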

Why it matters:

Empathy is the key to building trust. This emotional range is a primary reason why voice agents are used for sensitive tasks in this 2026 comparison.

3. Eyes-Busy and Hands-Free Utility

Chat agents require your full attention. You have to look at the screen, read the text, and type a response. This makes them useless for someone who is driving a car, cooking a meal, or performing surgery. Voice agents provide "Ambient Intelligence," meaning they live in the background of your life and can be used while you are doing other things. This opens up entirely new ways for businesses to help their customers throughout the day.

- Safety-First Interaction: Voice agents allow drivers to change their flight, report a lost card, or find a hotel without ever taking their eyes off the road, providing a level of safety and convenience that a text-based app simply cannot offer in a mobile world.

- Accessibility for All: For people with visual impairments or those who find it difficult to type on small screens, voice agents provide a wide-open door to technology, allowing them to manage their lives and access information using only the natural tool of their own voice.

- Multitasking Support: A voice agent can walk you through a complex 20-step recipe or a furniture assembly guide while your hands are covered in flour or holding a screwdriver, providing the right information at the exact moment you need it without you having to wash your hands to touch a screen.

- Home and Office Integration: Because they live in smart speakers and office hardware, voice agents can control your physical environment like dimming the lights or starting a conference call through simple spoken commands, making the technology feel like a part of the room rather than a separate device.

- Voice-First Design: These agents are built to be "brief and brilliant," giving you the most important information in short sentences so you can remember it easily while you are busy doing other things, whereas chat agents often give you long paragraphs that require deep focus.

Why it matters:

The ability to work without a screen is a massive advantage. This hands-free nature is a central theme in why voice agents are dominating the 2026 market.

4. Short-Term Memory and Information Layout

The way we "consume" information is different for our ears than for our eyes. If a chat agent sends you a list of 10 different pizza toppings, you can look at the list and pick your favorite in a second. If a voice agent reads 10 toppings to you, you will likely forget the first three by the time it reaches the last one. Voice agents have to be much more careful about how they "chunk" information so they don't overwhelm the human brain.

- The Power of Three: Voice agents are trained to never give more than three choices at a time, forcing the AI to be more intelligent about which options are the most relevant to the user, whereas chat agents can show dozens of options in a scrollable list without causing any confusion.

- Action-First Language: Voice agents put the most important information at the very beginning of the sentence (e.g., "Your flight is confirmed for 6 PM") so that even if the user gets distracted, they have already heard the most critical part of the message.

- Confirmation Loops: Because it is easy to mishear a spoken date or price, voice agents are designed to "loop back" and ask the user to confirm the details (e.g., "I have you down for Tuesday the 5th, is that correct?"), creating a safety net that chat agents don't need since the text is visible.

- Progressive Disclosure: Instead of explaining a whole process at once, a voice agent gives you one step at a time and waits for you to say "Okay" or "Next" before moving on, ensuring that the user never feels lost or buried under too much spoken information at once.

- Simplified Summaries: A voice agent will take a complex, five-page document and summarize it into two or three clear "talking points," acting as a filter that turns huge amounts of data into simple, actionable knowledge that can be understood in a quick 30-second conversation.
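
The "Power of Three" and progressive disclosure can be sketched in a few lines of Python. This assumes the options are already ranked by relevance; the function name `spoken_menu` and the exact wording of the prompts are illustrative choices.

```python
def spoken_menu(ranked_options: list[str], page: int = 0, page_size: int = 3) -> str:
    """Read out at most `page_size` options at a time, most relevant first."""
    start = page * page_size
    batch = ranked_options[start:start + page_size]
    if not batch:
        return "Those are all the options."
    if len(batch) <= 2:
        listed = " or ".join(batch)
    else:
        listed = ", ".join(batch[:-1]) + ", or " + batch[-1]
    # Only offer "more" when another page actually exists.
    has_more = start + page_size < len(ranked_options)
    more = " Say 'more' to hear other options." if has_more else ""
    return f"You can choose {listed}.{more}"


toppings = ["pepperoni", "mushroom", "olives", "onion", "ham"]
first_prompt = spoken_menu(toppings)           # first three toppings
second_prompt = spoken_menu(toppings, page=1)  # after the user says "more"
```

A chat agent could simply render all five toppings as a list; the voice version trades completeness for something a listener can hold in memory.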

Why it matters:

Managing "Cognitive Load" is a specialized skill for voice developers. This difference in how we process info is a key part of the 10 differences explained here.

5. Noise Cancellation and Environment Awareness

Chat agents live in a "clean" digital world where the only thing that matters is the text you type. Voice agents live in a "messy" physical world filled with barking dogs, crying babies, and passing sirens. In 2026, voice agents have to be experts at "Signal Processing," which means they have to be able to pick out your specific voice in a crowded room and ignore everything else that is happening around you.

- Background Noise Filtering: Voice agents use techniques such as "Spectral Subtraction," which estimates the frequency profile of the background noise (the sound of the wind or the hum of an air conditioner) and digitally subtracts it from the incoming audio, so the AI hears your commands and not the chaos of your environment.

- Echo Cancellation Logic: When a voice agent is speaking through a speaker, it has to be smart enough to "ignore" its own voice so it doesn't get stuck in a loop of listening to itself, a complex technical challenge that chat agents never have to deal with in their silent world.

- Distance Normalization: Whether you are whispering right into your phone or shouting from across the kitchen, the voice agent uses "Automatic Gain Control" to adjust the volume of the audio so the AI gets a consistent, clear signal every single time you speak to it.

- Multi-Speaker Detection: In a home setting, a voice agent can distinguish between the voices of different family members, allowing it to provide personalized schedule updates to the father while refusing to give the children access to the "buy now" features without a parent's permission.

- Situational Adjustments: If a voice agent detects a lot of noise in your background, it might automatically speak louder or repeat itself more often to make sure you can hear it over the ruckus, showing a level of "awareness" that a static chat window simply cannot match.
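
As a rough illustration of Automatic Gain Control, the sketch below scales each audio frame toward a target loudness. It is a simplified, single-frame version: production systems smooth the gain over time, and the target level and gain cap here are assumed values.

```python
import math

TARGET_RMS = 0.1  # hypothetical target level on a -1.0..1.0 sample scale
MAX_GAIN = 20.0   # cap so near-silence is not amplified into loud noise


def rms(frame: list[float]) -> float:
    """Root-mean-square level of one audio frame."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def normalize_frame(frame: list[float]) -> list[float]:
    """Scale a frame toward TARGET_RMS, clamping samples to the valid range."""
    level = rms(frame)
    if level == 0.0:
        return list(frame)
    gain = min(TARGET_RMS / level, MAX_GAIN)
    return [max(-1.0, min(1.0, s * gain)) for s in frame]


# Synthetic stand-ins for a whisper and a shout (same tone, different volume).
whisper = [0.01 * math.sin(i / 5) for i in range(200)]
shout = [0.8 * math.sin(i / 5) for i in range(200)]
whisper_level = rms(normalize_frame(whisper))
shout_level = rms(normalize_frame(shout))
```

Both frames come out at roughly the same level, so the speech recognizer downstream sees a consistent signal regardless of how far the speaker is from the microphone.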

Why it matters:

The ability to handle the "real world" makes voice agents much more resilient. This environmental awareness is a massive technical hurdle, explained in this 2026 guide.

6. Interruptions and the "Barge-In" Feature

In a chat, you type your message and wait. In a real conversation, humans interrupt each other all the time. Maybe the agent is explaining something you already know, and you want to say "Yeah, I've got that, move to the next part." A voice agent must have "Barge-In" capability, which means it can instantly stop talking the moment it hears you speak, just like a polite human would do.

- Real-Time Stream Interruption: The moment a voice agent detects a "Voice Activity" signal from the user, it kills the audio output instantly, preventing that annoying experience of a computer talking over a human and making the AI feel much more like an equal partner in the conversation.

- Contextual Pivot Logic: If you interrupt a voice agent to ask a clarifying question, the agent has to "remember" where it was in the original explanation so it can go back and finish its point after answering your quick question, a complex memory task that requires high-level architecture.

- Handling False Starts: Humans often start a sentence, stop, and then start again (e.g., "Can you... wait, actually, can you tell me..."). A voice agent has to be smart enough to ignore the "junk" at the beginning of the sentence and only focus on the final, clear intent of the user.

- Politeness and Turn-Taking: The agent is programmed with "Social Norms" so it knows how long to wait after you stop talking before it starts its reply, ensuring the conversation doesn't feel like a series of interruptions but like a smooth, back-and-forth exchange of ideas.

- Dynamic Attention Scaling: A voice agent can "listen harder" when it expects an interruption, such as when it is reading a long list, allowing it to be extra responsive to the user's desire to skip ahead or ask for more detail on a specific item in the list.
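
Barge-in plus the "remember where you were" pivot can be modeled as a small state machine. This is a simplified Python sketch that works sentence by sentence; real systems check for voice activity on every audio frame. The `Playback` class and the `user_is_speaking` callback are hypothetical names standing in for a real audio player and voice activity detector.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Playback:
    sentences: list[str]
    position: int = 0  # index of the next sentence to speak
    spoken: list[str] = field(default_factory=list)

    def speak_until_interrupted(self, user_is_speaking: Callable[[], bool]) -> bool:
        """Speak sentence by sentence and stop as soon as the user talks.

        Returns True if playback finished, False if it was interrupted.
        The position is kept, so a later call resumes where it stopped.
        """
        while self.position < len(self.sentences):
            if user_is_speaking():
                return False
            self.spoken.append(self.sentences[self.position])
            self.position += 1
        return True


playback = Playback(["Step one.", "Step two.", "Step three.", "Step four."])

# Simulated voice activity: the user starts talking before the third sentence.
vad_signal = iter([False, False, True])
finished_first_try = playback.speak_until_interrupted(lambda: next(vad_signal, False))

# ...the agent answers the user's question here, then resumes the explanation.
finished_second_try = playback.speak_until_interrupted(lambda: False)
```

After the interruption the agent has spoken two sentences and remembers that sentence three is next, which is what lets it finish its point after the detour.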

Why it matters:

Conversational flow is what makes an agent feel "real." This "Barge-In" logic is a critical difference between voice and chat in 2026.

7. Privacy and the "Always-On" Social Barrier

Chat agents only see what you type in the box. Voice agents, especially those in smart speakers, have to manage a much more sensitive "Social Barrier." People are often worried that a voice agent is "always listening" to their private family conversations. Because of this, voice agents in 2026 have to be much more transparent about when they are active and how they are protecting your vocal data.

- Hardware Mute Indicators: Most voice-activated devices now have physical lights or switches that show you, beyond any doubt, when the microphone is off, providing a level of physical security and "visual trust" that a software-based chat agent doesn't need to provide.

- Local "Wake Word" Processing: To protect privacy, the device doesn't send any audio to the cloud until it hears its specific "Wake Word" (like "Hey Assistant"), meaning your private conversations stay local on the device and are never seen by the AI until you explicitly ask for help.

- Voice Data Anonymization: In 2026, many voice agents "strip away" the unique identity of your voice before sending the data to the server, so the AI knows what was said but has no record of who said it, protecting your vocal "fingerprint" from being stored in a database.

- Public Use Constraints: People are often embarrassed to talk to an AI in a public place like a bus or a quiet office. Because of this, voice agents are often designed to be used in "private" spaces, while chat agents remain the king of public, discreet communication that no one else can hear.

- Explicit Consent Workflows: Voice agents are required to ask for permission before recording a call for "quality purposes" much more clearly than chat agents, ensuring that the user is always aware of how their auditory information is being used by the company they are calling.
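
The local wake word idea boils down to a gate that sits in front of the network connection. In the Python sketch below, short strings stand in for audio frames, and `is_wake_word` is a hypothetical on-device detector; the three-frame window is an assumed value.

```python
from typing import Callable, Iterable, Iterator


def gated_stream(frames: Iterable[str],
                 is_wake_word: Callable[[str], bool],
                 frames_after_wake: int = 3) -> Iterator[str]:
    """Yield only the frames that follow a wake word; everything else stays local."""
    remaining = 0
    for frame in frames:
        if remaining > 0:
            yield frame  # gate is open: this frame may leave the device
            remaining -= 1
        elif is_wake_word(frame):
            remaining = frames_after_wake  # open the gate for a short window
        # Otherwise the frame is dropped on the device and never uploaded.


room_audio = ["dinner talk", "hey assistant", "what's", "the", "weather", "private chat"]
sent_to_cloud = list(gated_stream(room_audio, lambda frame: frame == "hey assistant"))
# sent_to_cloud == ["what's", "the", "weather"]
```

Neither the dinner conversation before the wake word nor the private chat after the window closes ever reaches the server.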

Why it matters:

Trust is the currency of the future. This privacy focus is a major point of comparison for these agents in 2026.

8. Biometric Identity and Security

In 2026, your voice is your password. Voice agents use "Voice Printing" to verify exactly who is talking based on the unique physical shape of their throat and mouth. This is much faster and more secure than typing in a password or waiting for a text message code. While a chat agent has to rely on traditional logins, a voice agent knows it's you the moment you say "Hello."

- Friction-Free Authentication: Instead of fumbling with a keyboard to type a 12-character password, you can simply talk to the agent, and it will verify your identity in the background while you are explaining your problem, making the whole security process feel invisible and effortless.

- Anti-Spoofing Technology: Advanced voice agents can tell the difference between a real human voice and a recording or a "deepfake," using "Liveness Detection" to ensure that no one can hack into your bank account by just playing a video of you speaking.

- Multi-Factor Synergy: A voice agent can combine your voice print with your phone's location and your face ID to create a "triple-lock" of security that is nearly impossible for a hacker to break, providing a level of safety that is perfect for high-value financial transactions.

- Instant Personalized Access: Because the agent knows who is talking, it can immediately pull up your specific account history and preferences without you having to tell it your name or account number, allowing the conversation to start with "Hi John, are you calling about your recent order?"

- Secure Voice-Only Transactions: In 2026, you can authorize a payment of thousands of dollars using only your voice, as the "biometric signature" of your speech is now legally recognized as a valid form of signature in many countries for digital commerce and banking.
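
Voice print matching is often described as comparing numeric "embeddings" of two voice samples. The sketch below assumes some upstream model has already turned audio into such vectors; the three-number vectors and the 0.85 threshold are made-up illustrative values, and a real system would also run liveness checks before trusting a match.

```python
import math

MATCH_THRESHOLD = 0.85  # hypothetical; real systems tune this on labeled data


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def is_same_speaker(enrolled: list[float], sample: list[float],
                    threshold: float = MATCH_THRESHOLD) -> bool:
    """Accept the caller only if the new sample is close to the enrolled print."""
    return cosine_similarity(enrolled, sample) >= threshold


enrolled_print = [0.9, 0.1, 0.4]    # stored when the customer enrolled
same_caller = [0.88, 0.12, 0.41]    # a new sample from the same person
other_caller = [0.1, 0.9, 0.2]      # a sample from someone else

accepted = is_same_speaker(enrolled_print, same_caller)
rejected = is_same_speaker(enrolled_print, other_caller)
```

Raising the threshold makes impersonation harder but also rejects more genuine callers on a bad phone line, so it is a trade-off rather than a fixed number.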

Why it matters:

Security should be simple, not a chore. This biometric power is a game-changer in the world of voice agents and vocal assistants.

9. Semantic Mapping vs. Physical Action

Chat agents are great at "knowledge" (finding a fact). Voice agents are becoming the masters of "action" (doing a task). In 2026, voice agents are increasingly integrated with the "Internet of Things" (IoT), meaning they have the power to move things in the physical world. While a chat agent might tell you how to save energy, a voice agent can actually turn off your heater and lock your front door while you are walking out of the house.

- Command-to-Actuator Logic: Voice agents are directly connected to the "actuators" in your smart home, meaning they can translate a spoken intent like "make it cozy in here" into a series of physical actions like closing the blinds, dimming the lights, and turning on the fireplace.

- Real-Time Logistics Coordination: In a warehouse setting, a manager can use a voice agent to "reroute all incoming trucks to Bay 4," and the agent will instantly update the digital signs and the drivers' GPS systems, turning a spoken command into a massive physical reorganization in seconds.

- Hands-Free Software Control: Voice agents act as a "shortcut" for complex software; instead of clicking through five menus to find a setting, you can just say "Enable dark mode and mute all notifications for an hour," and the agent performs the technical clicks for you instantly.

- Embodied AI Companionship: In 2026, voice agents are being built into physical robots that can follow you around and help you with physical tasks, using their "voice" to explain what they are doing while they help you carry groceries or clean up a spill in the kitchen.

- Proactive Physical Alerts: A voice agent can "shout" a warning if it detects a leak in your basement or a fire in the kitchen, providing an immediate physical alert that a silent notification on a phone screen might not catch in time to prevent a disaster.
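
The command-to-actuator step can be pictured as a lookup from a spoken intent to a list of device actions. In this Python sketch the device functions just return status strings; in a real home they would call a smart-home API. All names here are hypothetical, and simple phrase matching stands in for proper intent recognition.

```python
from typing import Callable


# Hypothetical device functions; a real system would call a smart-home API here.
def close_blinds() -> str:
    return "blinds: closed"


def dim_lights() -> str:
    return "lights: 30%"


def start_fireplace() -> str:
    return "fireplace: on"


# One spoken intent fans out into several physical actions.
SCENES: dict[str, list[Callable[[], str]]] = {
    "make it cozy": [close_blinds, dim_lights, start_fireplace],
}


def run_intent(utterance: str) -> list[str]:
    """Run every action mapped to the first scene phrase found in the utterance."""
    text = utterance.lower()
    for phrase, actions in SCENES.items():
        if phrase in text:
            return [action() for action in actions]
    return []  # unknown intent: do nothing rather than guess


results = run_intent("Please make it cozy in here")
```

Returning an empty list for unknown requests is a deliberate choice: when an agent controls physical devices, doing nothing is usually safer than acting on a guess.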

Why it matters:

AI is moving from the screen to the streets. This physical integration is a standout feature of voice agents in this 2026 overview.

10. Language Nuance and Slang Adaptation

Text is formal; speech is messy. When we talk, we use slang, we mumble, we use "fillers," and we change our minds mid-sentence. Voice agents in 2026 have to be much more "linguistically flexible" than chat agents. They have to understand that "Yeah, no, for sure" actually means "Yes," whereas a literal-minded chat agent might get confused by the "No" in the middle of that sentence.

- Colloquial Intent Parsing: Voice agents are trained on thousands of hours of real human conversations to understand "the way people actually talk," allowing them to navigate the weird and wonderful ways humans use language in different regions and cultures without getting stuck on formal grammar.

- Disfluency Handling: The agent is smart enough to "edit" your speech in real-time, removing the "uhs," "ums," and "likes" from its internal transcription so it can focus on the core meaning of what you are trying to say without being distracted by your verbal tics.

- Code-Switching Capability: Modern voice agents can handle "Bilingual" users who switch between two languages in the same sentence (like Spanglish), maintaining the flow of the conversation without needing the user to stop and change their language settings manually.

- Phonetic Guessing: If you use a word the agent doesn't know, it will try to guess the word based on its "sounds" and the context of the sentence, often figuring out exactly what you meant even if your pronunciation was a bit off or the connection was slightly fuzzy.

- Evolutionary Vocabulary: As new slang becomes popular in 2026, voice agents update their "slang dictionary" weekly, ensuring they don't sound like "old computers" and can stay relevant to younger users who use a constantly changing set of terms and expressions to communicate.
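
Disfluency handling and false-start cleanup can be approximated with a few rules. The Python sketch below is deliberately rule-based so the logic is easy to follow; production agents typically rely on trained models instead. The filler list, restart markers, and idiom table are small hypothetical examples.

```python
import re

FILLERS = {"uh", "um", "er", "hmm"}  # "like" is left out: it is often a real word
RESTART_MARKERS = ("wait, actually,", "actually,", "i mean,")
IDIOMS = {"yeah no for sure": "yes"}  # hypothetical one-entry idiom table


def clean_transcript(raw: str) -> str:
    """Drop false starts and filler words from a raw speech transcript."""
    text = raw.lower()
    # False start: keep only what follows the last restart marker.
    for marker in RESTART_MARKERS:
        if marker in text:
            text = text.rsplit(marker, 1)[1]
    words = [w for w in re.findall(r"[a-z0-9']+", text) if w not in FILLERS]
    return " ".join(words)


def interpret(raw: str) -> str:
    """Map known colloquial phrases to their plain meaning after cleanup."""
    cleaned = clean_transcript(raw)
    return IDIOMS.get(cleaned, cleaned)


request = clean_transcript("Can you... wait, actually, um, can you tell me, uh, my balance?")
answer = interpret("Yeah, no, for sure")
# request == "can you tell me my balance"
# answer == "yes"
```

The abandoned "Can you..." and the fillers disappear, and the idiom table resolves "Yeah, no, for sure" to a plain "yes" instead of tripping over the "no" in the middle.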

Why it matters:

Communication is about connection, not just correctness. This linguistic flexibility is the final and most human difference explained in this guide.

Show Your Proof of Work on Fueler

As you decide whether to build a high-speed Voice Agent or a data-dense Chat Agent, remember that the "how" is just as important as the "what." In 2026, companies want to see that you understand the technical trade-offs between these two worlds. Fueler is the perfect place to host your portfolio. Instead of just a list of skills, show off the actual latency tests you ran for your voice agent or the complex information hierarchy you built for your chat agent. Showing your "Proof of Work" on Fueler is the best way to prove you are an AI Architect who knows how to pick the right tool for the job.

Final Thoughts

The choice between Voice and Chat is a choice between "The Speed of Life" and "The Depth of Data." As an AI builder in 2026, your goal should be to understand the user's situation before you ever write a single line of code. If they are busy, stressed, or on the move, give them a Voice Agent. If they are focused, at a desk, and looking for detail, give them a Chat Agent. By mastering both, you become a versatile architect in the most exciting era of technology history.

FAQs

Is voice more expensive to run than chat?

Yes, usually. Voice requires more "layers" of technology. You have to pay for the transcription (turning voice to text), the AI "brain," and the synthesis (turning text back to voice). You also have to pay for the phone line or the streaming data, which makes it more costly than a simple text-based chat.

Can one agent do both voice and chat?

In 2026, the best agents are "Multimodal." They can start as a chat in a quiet office and then "hand off" to a voice call when you walk out to your car. This provides a "seamless experience" where the AI remembers everything from the chat and continues the conversation perfectly over voice.

Which is better for security, voice or chat?

Voice is generally safer for "Identity" because a voice print, when paired with liveness detection against recordings and deepfakes, is hard to fake. However, chat is often better for "Privacy" because no one around you can hear what you are typing, whereas people in a coffee shop can easily overhear your private phone conversation with a voice agent.

Why do some voice agents still sound robotic?

Usually, this is a choice made to save "Latency." High-quality, human-sounding voices take more computer power and more time to create. Some companies choose a "robotic" but "instant" voice over a "human" but "slow" voice because, in a phone call, speed is more important than beauty.

Will voice agents eventually replace apps?

For many tasks, yes. Instead of having 50 different apps on your phone, you will simply have one "Voice Portal" that can talk to all those different services for you. Why open a pizza app and click 10 buttons when you can just say "Hey, get me my usual from the pizza place" and be done in 3 seconds?

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.