AI Voice Agents Explained: Technology Behind Smart Conversations

Riten Debnath

04 Apr, 2026

Last updated: April 2026

Have you ever stopped to wonder why a machine can now understand your sarcasm, your late-night grocery requests, or even the subtle frustration in your voice? We have officially moved past the era of robotic, clunky automated menus that never understood a word we said. Today, AI voice agents are becoming the primary interface for how we interact with the digital world. Whether it is a customer service bot that sounds indistinguishable from a human or a personal assistant managing your entire calendar through a simple conversation, the leap in technology has been nothing short of breathtaking.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. The Core Architecture of Modern Voice AI

To understand how a voice agent works, we have to look at the three-step journey a sound wave takes to become an action. It starts with Automatic Speech Recognition (ASR), where the computer "hears" the sound and converts it into text. Then comes Natural Language Understanding (NLU), which is the brain of the operation. This is where the AI figures out what you actually mean, not just what you said. Finally, there is Text-to-Speech (TTS), which converts the machine's response back into a human-like voice.

- Advanced ASR Engines: These sophisticated systems utilize deep learning architectures to filter out background noise, such as traffic or office chatter, and focus purely on human phonemes to ensure the transcription is accurate regardless of the environment.

- Sliding Contextual Windows: Modern systems do not process one word at a time in isolation. They look at the entire sentence and the surrounding conversation so that words with multiple meanings are interpreted correctly based on the context.

- Neural Transducer Frameworks: These allow for true real-time processing, meaning the AI can begin the "thinking" and "understanding" phase while the human is still speaking, which drastically reduces the perceived wait time for a response.

- Probabilistic Error Correction: Advanced models use complex mathematical probability to "guess" a word if the audio signal is muffled or unclear, which significantly reduces communication breakdowns and the need for the user to repeat themselves.

Why it matters: Understanding this core architecture is vital for anyone looking to implement this technology in a professional setting. If you know how the data flows from sound to text to meaning, you can better optimize the user experience, ensuring that the "hearing" and "thinking" phases are as seamless and friction-free as possible for the end user.
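To make the sound-to-text-to-meaning-to-speech flow concrete, here is a minimal sketch of one conversational turn. The `Turn` class, `run_turn`, and the three `fake_*` stages are hypothetical stand-ins invented for this illustration; a real agent would plug actual ASR, NLU, and TTS models into the same three slots.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Turn:
    """Everything produced while handling one user utterance."""
    transcript: str     # output of the ASR stage
    intent: str         # output of the NLU stage
    reply_text: str     # text handed to the TTS stage
    reply_audio: bytes  # output of the TTS stage


def run_turn(
    audio: bytes,
    asr: Callable[[bytes], str],
    nlu: Callable[[str], tuple[str, str]],
    tts: Callable[[str], bytes],
) -> Turn:
    """Pass one utterance through ASR -> NLU -> TTS, in that order."""
    transcript = asr(audio)
    intent, reply_text = nlu(transcript)
    reply_audio = tts(reply_text)
    return Turn(transcript, intent, reply_text, reply_audio)


# Stand-in stages so the sketch runs without any speech models.
def fake_asr(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are already text


def fake_nlu(text: str) -> tuple[str, str]:
    if "weather" in text.lower():
        return "get_weather", "Here is today's forecast."
    return "unknown", "Sorry, could you rephrase that?"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend these bytes are a waveform


turn = run_turn(b"What is the weather like?", fake_asr, fake_nlu, fake_tts)
print(turn.intent, "->", turn.reply_text)
```

Because each stage is just a function passed in, you can swap or benchmark one stage at a time without touching the other two.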

2. Natural Language Processing and the Power of LLMs

The biggest breakthrough in voice agents recently has been the integration of Large Language Models (LLMs). In the past, voice bots were "intent-based," meaning they only knew how to respond if you said a specific keyword. If you strayed from the script, they broke. Today, thanks to LLMs, voice agents can handle "unstructured" conversation. They understand context, history, and even nuance, making the interaction feel like a genuine back-and-forth dialogue rather than a rigid interrogation.
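To see why the older "intent-based" approach was so brittle, consider this small sketch of a keyword matcher. The keyword table and the `match_intent` function are made up for illustration and do not come from any particular product.

```python
# Hypothetical keyword table for an old-style, intent-based bot.
KEYWORD_INTENTS = {
    "balance": "check_balance",
    "hours": "opening_hours",
    "agent": "transfer_to_human",
}


def match_intent(utterance: str) -> str:
    """Return the first intent whose keyword appears in the utterance."""
    words = utterance.lower().replace("?", "").replace(".", "").split()
    for keyword, intent in KEYWORD_INTENTS.items():
        if keyword in words:
            return intent
    return "fallback"  # no keyword found, so the bot has nothing to say


print(match_intent("What is my balance?"))             # check_balance
print(match_intent("How much money do I have left?"))  # fallback
```

Both questions mean the same thing, but only the one containing the exact keyword gets an answer. That gap is what LLM-based understanding closes.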

- Transformer Architecture Models: These are the foundations of modern AI, allowing the agent to weigh the importance of different words in a sentence simultaneously, which helps the machine understand complex sentence structures and long-distance dependencies.

- Zero-Shot Task Learning: This capability enables the AI to answer questions or perform specific tasks it was not explicitly trained for by using general logic and its vast knowledge base to "reason" through a new request on the fly.

- Real-time Sentiment Analysis: Modern AI can detect subtle acoustic cues to determine if a caller is angry, confused, or happy, allowing the agent to adjust its tone, speed, and vocabulary to match the user's emotional state effectively.

- Sub-word Tokenization Processes: This is the process of breaking the transcribed text into smaller chunks, each mapped to a number the model can process, allowing it to handle new words, slang, or technical jargon that it might not have seen during its initial training phase.

Why it matters: For founders and developers, this shift means we no longer have to program every possible response or "if-then" scenario. We can now build agents that are inherently "smart," saving thousands of hours in development time and providing a much more empathetic and flexible experience for the customer.
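The sub-word tokenization idea from the list above can be sketched in a few lines. This is a simplified greedy longest-match splitter in the style of WordPiece, with a tiny hand-written vocabulary (`TOY_VOCAB`) that I made up for the example; production tokenizers learn vocabularies of tens of thousands of pieces from data.

```python
def tokenize_word(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Greedy longest-match-first split of one word into vocabulary pieces.

    Pieces that continue a word carry a "##" prefix, a convention borrowed
    from WordPiece-style tokenizers.
    """
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [unk]  # no piece fits, so the whole word is unknown
        pieces.append(match)
        start = end
    return pieces


# Hypothetical toy vocabulary.
TOY_VOCAB = {"un", "##mute", "##d", "mute", "re", "##schedul", "##ing", "call"}

print(tokenize_word("unmuted", TOY_VOCAB))       # ['un', '##mute', '##d']
print(tokenize_word("rescheduling", TOY_VOCAB))  # ['re', '##schedul', '##ing']
```

Neither "unmuted" nor "rescheduling" is in the vocabulary as a whole word, yet both are still represented from known pieces. That is how a model copes with words it never saw in training.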

3. The Evolution of Text-to-Speech (TTS) Technology

We have come a long way from the "Speak & Spell" voices of the 1980s. Modern TTS uses neural networks to mimic the cadence, pitch, and rhythm of a human voice. This is often called "Neural TTS." It doesn't just string together recorded snippets of speech. Instead, it generates a waveform from scratch. This allows for features like "whispering," "excited tones," or "empathy," which are crucial for making a voice agent feel less like a computer and more like a helpful assistant.

- WaveNet Generative Technology: A deep generative model for raw audio that produces voices that sound significantly more natural and human-like than traditional concatenative methods, which stitched together pre-recorded fragments of speech.

- Fine-tuned Prosody Control: This allows developers to adjust the specific timing, stress, and intonation of the AI's speech to emphasize certain points, ask questions with the correct rising tone, or pause for dramatic effect during a conversation.

- High-Fidelity Voice Cloning: This is the ability to take a very small sample of a real human voice and create a digital twin that sounds identical, allowing brands to have a consistent, recognizable voice across all of their digital touchpoints.

- Cross-Lingual Synthesis: Modern agents can switch between dozens of different languages and dialects while maintaining the same "personality" and vocal characteristics, which is essential for global companies serving a diverse customer base.

Why it matters: The "vibe" and quality of your voice agent represent your brand's personality in the ears of the user. If the voice sounds robotic or grating, users will naturally feel a sense of distrust. By leveraging high-quality, neural TTS, you create a sense of familiarity and comfort that encourages longer and more productive interactions.
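One common way to express prosody control is SSML (Speech Synthesis Markup Language), a W3C standard that many TTS engines accept in some form. The sketch below builds a small SSML string using the standard `<prosody>` and `<break>` elements. The helper names `ssml_sentence` and `ssml_document` are my own, and engines differ in which attributes and values they honor, so treat this as an illustration rather than a recipe for a specific vendor.

```python
from xml.sax.saxutils import escape


def ssml_sentence(text: str, rate: str = "medium", pitch: str = "medium",
                  pause_ms: int = 0) -> str:
    """Wrap text in an SSML <prosody> element, optionally followed by a pause."""
    body = f'<prosody rate="{rate}" pitch="{pitch}">{escape(text)}</prosody>'
    if pause_ms > 0:
        body += f'<break time="{pause_ms}ms"/>'
    return body


def ssml_document(sentences: list[str]) -> str:
    """Join already-built SSML fragments under a single <speak> root."""
    return "<speak>" + "".join(sentences) + "</speak>"


doc = ssml_document([
    # Good news: slightly higher pitch, then a short pause.
    ssml_sentence("Your order has shipped.", pitch="high", pause_ms=300),
    # Follow-up question: slower delivery.
    ssml_sentence("Anything else?", rate="slow"),
])
print(doc)
```

The `escape` call matters because customer-facing text often contains characters such as `&` that would otherwise break the markup.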

4. Latency: The Technical Battle for Real-Time Response

One of the hardest problems to solve in voice AI is latency, the delay between when a human stops talking and the AI starts responding. In a natural human conversation, this delay is usually around 200 milliseconds. If an AI takes two seconds to respond, the "magic" is lost and the conversation feels awkward and forced. Solving for latency requires massive optimization of the servers and the code, often moving the processing closer to the user through "edge computing."
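A quick way to reason about latency is to write down a budget. The per-stage numbers below are hypothetical placeholders chosen only to show the arithmetic; you would replace them with measurements from your own stack.

```python
# Hypothetical per-stage delays in milliseconds; real figures depend on the
# models, hardware, and network involved.
STAGES_MS = {
    "endpoint_detection": 150,
    "network_round_trip": 80,
    "asr_finalization": 120,
    "llm_first_token": 350,
    "tts_first_audio": 150,
}

HUMAN_GAP_MS = 200  # the typical human turn-taking gap mentioned above


def response_delay(stages: dict[str, int]) -> int:
    """Delay the caller hears when the stages run strictly one after another."""
    return sum(stages.values())


total = response_delay(STAGES_MS)
slowest = max(STAGES_MS, key=STAGES_MS.get)
print(f"total: {total} ms, over budget by {total - HUMAN_GAP_MS} ms")
print(f"largest contributor: {slowest}")
```

With these example numbers the stages add up to 850 ms, which is 650 ms over the 200 ms target. That is why simply running the steps back to back is not enough, and why the techniques in this section focus on shrinking or overlapping stages.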

- Localized Edge Processing: By running the AI models locally on a user's device or a nearby "edge" server, developers can drastically reduce the time data spends traveling back and forth to a central data center, making responses feel instant.

- Continuous Streaming Inference: The AI starts generating its response as it receives the first few words of your speech, rather than waiting for you to finish your entire sentence, which lets its "thinking" overlap with the time you are still talking.

- Intelligent Voice Activity Detection (VAD): These algorithms classify each short slice of audio as speech or silence and flag the moment a human has stopped speaking, which triggers the AI's response promptly without an awkward silence.

- Model Quantization and Optimization: This involves shrinking the mathematical size of the AI models so they can run much faster on standard hardware without losing a significant amount of their "intelligence" or accuracy during the conversation.
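To show what "shrinking the mathematical size" of a model can mean, here is a minimal sketch of symmetric 8-bit quantization on a handful of weights. The function names and the sample weights are made up for illustration; real toolchains quantize whole tensors and often use more refined schemes.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.003, 0.5]  # hypothetical model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
worst_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # [82, -127, 0, 50]
print(f"worst rounding error: {worst_error:.4f}")
```

Each weight now fits in one byte instead of four, at the cost of a rounding error no larger than half the scale. Notice that the very small weight 0.003 collapses to zero, which is the kind of accuracy loss quantization trades for speed.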

Why it matters: If you are building a voice agent for sales or support, speed is everything. A fast, snappy response keeps the user engaged and moving toward a resolution, while a slow response leads to frustration and high drop-off rates. Reducing latency is the technical difference between a professional tool and a simple toy.
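The voice activity detection step from the list above can be approximated with a simple energy threshold. This sketch is a deliberately basic version: the threshold, the frame size, and the "hangover" count are assumptions picked for the demo, and production systems typically use trained models rather than raw energy.

```python
import math


def frame_rms(frame: list[float]) -> float:
    """Root-mean-square energy of one audio frame."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def find_end_of_speech(frames, threshold=0.1, hangover_frames=3):
    """Return the index of the frame at which the speaker is judged to have
    stopped, or None if speech has not ended yet.

    The speaker counts as finished after `hangover_frames` consecutive quiet
    frames that follow at least one loud frame.
    """
    heard_speech = False
    silent_run = 0
    for i, frame in enumerate(frames):
        if frame_rms(frame) >= threshold:
            heard_speech = True
            silent_run = 0  # a short pause was not the end of the turn
        elif heard_speech:
            silent_run += 1
            if silent_run == hangover_frames:
                return i
    return None


quiet = [0.01] * 160      # stand-in for background noise
loud = [0.5, -0.5] * 80   # stand-in for speech
frames = [quiet, quiet, loud, loud, quiet, loud, quiet, quiet, quiet, quiet]

print(find_end_of_speech(frames))  # 8
```

The hangover count is the latency trade-off in miniature. A smaller value responds sooner but risks cutting the user off mid-pause, while a larger value is safer but adds delay to every turn.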

5. Deployment Use Cases in Modern Business

Voice agents are no longer just for booking hair appointments or checking the weather. They are being used across industries to handle complex, multi-step tasks that previously required a human. In healthcare, they help triage patients by asking about symptoms. In finance, they verify identities and handle complex fraud alerts. In the startup world, they are being used to qualify leads before they ever talk to a human sales rep.

- Automated Inbound Lead Qualification: These agents can answer inquiries from potential customers or call them back automatically, asking specific discovery questions to see if they fit your target profile before passing the "warm" lead to a human.

- Tier 1 Technical Support: Guiding users through complex hardware setup processes or software troubleshooting using only voice commands, which allows the user to keep their hands free while they work on the problem.

- End-to-End Appointment Scheduling: Handling the complex back-and-forth of finding a time that works for both parties, checking calendars in real-time, and sending out invites without any human intervention from your team.

- Conversational Post-Purchase Surveys: Calling customers to get qualitative feedback in a conversational way that yields much more detailed and useful data than a standard star-rating or a text-based survey could ever provide.

Why it matters: Scaling a business often means finding ways to do more with less. Voice agents allow you to maintain a high level of customer touch and "white glove" service without the massive overhead and management challenges of a 24/7 human call center. It is about maximizing your team's focus on high-value strategy.
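The scheduling use case boils down to a small algorithm: find the first window that is free on both calendars. Here is a sketch under simplifying assumptions that I chose for the example. Times are minutes since midnight, each calendar is already a sorted list of free intervals, and the function name `first_common_slot` is hypothetical.

```python
def first_common_slot(free_a, free_b, duration):
    """Return the earliest (start, end) window of `duration` minutes that is
    free in both calendars, or None if there is none.

    Intervals are (start, end) pairs in minutes since midnight and must be
    sorted and non-overlapping within each calendar.
    """
    i = j = 0
    while i < len(free_a) and j < len(free_b):
        start = max(free_a[i][0], free_b[j][0])
        end = min(free_a[i][1], free_b[j][1])
        if end - start >= duration:
            return (start, start + duration)
        # Advance whichever interval finishes first.
        if free_a[i][1] < free_b[j][1]:
            i += 1
        else:
            j += 1
    return None


customer_free = [(9 * 60, 10 * 60), (13 * 60, 15 * 60)]       # 9-10, 13-15
rep_free = [(9 * 60 + 30, 9 * 60 + 45), (14 * 60, 17 * 60)]   # 9:30-9:45, 14-17

slot = first_common_slot(customer_free, rep_free, duration=30)
print(slot)  # (840, 870), i.e. 14:00 to 14:30
```

In a real agent the free intervals would come from a calendar API, and the voice layer would simply read the proposed slot back to the caller for confirmation.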

6. Personalization and Long-Term Memory

The next frontier for voice agents is "long-term memory." A truly smart voice agent should remember that you called last week about a specific issue. It should know your preferences, your history, and your goals. This is achieved through "Vector Databases" and "Retrieval-Augmented Generation" (RAG). By connecting the voice agent to your CRM, the AI can provide a level of personalization that even a human might struggle to maintain across thousands of different customers.

- RAG (Retrieval-Augmented Generation): This allows the AI to look up specific, private facts about a customer's history in real-time during a call, ensuring the advice it gives is tailored specifically to that individual's account.

- Persistent Conversation Threads: The ability for the AI to maintain the "context" of a conversation across multiple days, calls, or even different platforms like email and voice, so the user never has to repeat themselves.

- Predictive User Profiling: Categorizing users based on their tone and previous voice interactions to offer better, more relevant product recommendations that match their specific buying habits and needs.

- Context-Aware Dynamic Scripting: The AI changes its vocabulary and approach based on the specific history and technical level of the person it is talking to, ensuring the conversation is always at the right level of detail.

Why it matters: Personalization is what turns a one-time user into a lifelong advocate for your brand. When a voice agent remembers a user's details and previous concerns, it makes the user feel valued and understood, which is the cornerstone of building long-term brand loyalty in a crowded market.
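The retrieval half of RAG can be sketched without any external services. In the example below, a bag-of-words count vector stands in for a real embedding model and a plain Python list stands in for a vector database. The CRM notes, function names, and prompt format are all invented for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(text.lower().replace(".", "").replace("?", "").split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, notes: list[str], k: int = 1) -> list[str]:
    """Return the k notes most similar to the question."""
    q = embed(question)
    ranked = sorted(notes, key=lambda n: cosine(q, embed(n)), reverse=True)
    return ranked[:k]


# Hypothetical customer history pulled from a CRM.
CRM_NOTES = [
    "Customer reported a billing error on the March invoice.",
    "Customer prefers callbacks after 5 pm.",
    "Customer upgraded to the premium plan in January.",
]

question = "Is the billing error on my invoice fixed?"
context = retrieve(question, CRM_NOTES)
prompt = f"Context: {context[0]}\nQuestion: {question}"
print(prompt)
```

The retrieved note is placed in front of the question before it reaches the language model, which is how the agent can answer with account-specific facts it was never trained on.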

7. Bridging the "Uncanny Valley" of Sound

The "Uncanny Valley" describes the feeling of unease when a robot sounds almost human, but something is slightly off. In voice AI, this usually happens when the rhythm is too perfect or the "breathing" sounds fake. Developers are now working on "Paralinguistics," which is the study of non-verbal cues like sighs, pauses, and the "ums" and "uhs" that make us sound human. Adding these small imperfections actually makes the AI more relatable and trustworthy.

- Strategic Filler Word Insertion: Carefully adding "um," "uh," or "well" into the conversation to make the AI's "thought process" seem more human and less like it is just reading from a database.

- Ambient Breath Synthesis: Adding very subtle, natural breathing sounds into the audio stream to avoid the "dead silence" between sentences which often feels unnatural and alerts the brain that it is talking to a machine.

- Contextual Emotional Inflection: Automatically changing the pitch and warmth of the voice when delivering good news versus bad news, ensuring the machine's tone matches the gravity of the situation.

- Adaptive Conversation Pacing: Slowing down significantly when explaining complex or technical topics and speeding up during routine information gathering to match the natural flow of human speech patterns.

Why it matters: Trust is a fragile thing in business. If a user feels "creeped out" or unsettled by a voice agent, they will hang up and find a competitor. By bridging the Uncanny Valley, we create an environment where the user can focus entirely on the information being exchanged rather than the fact that they are talking to a piece of software.

8. Security, Privacy, and the Ethics of Voice

As voice agents become more powerful, the risks grow. Voice cloning can be used for "Deepfake" scams, and recording private conversations raises massive privacy concerns. Ethical AI development involves creating "Watermarks" for AI audio so it can be identified and ensuring that data is encrypted and handled according to strict regulations like GDPR. For any founder, building with "Privacy by Design" is not just a legal hurdle; it is a core part of the product.

- Secure Voice Biometrics: Using the distinctive characteristics of a user's voice as one factor in multi-factor authentication for sensitive tasks like banking or account recovery. Because voices can be cloned, it works best alongside other factors rather than on its own.

- On-Device Data Processing: Keeping the actual audio data on the user's phone or computer rather than sending it to a central cloud server, which significantly reduces the risk of data breaches.

- Transparent Consent Protocols: Ensuring the AI clearly and honestly identifies itself as an artificial entity at the very start of the conversation so the user is never deceived about who they are talking to.

- PII Data Anonymization: Automatically stripping away names, addresses, and other personal identifiers from voice recordings before using them to further train or improve the AI models.

Why it matters: A single security breach or ethical scandal can destroy a startup overnight. By prioritizing ethical deployment and robust security from day one, you protect your users and your company's reputation, ensuring that your growth is sustainable and that your users' trust is well-placed and protected.
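As a small illustration of PII anonymization, the sketch below masks email addresses and phone numbers in a call transcript using regular expressions. The patterns are intentionally simple assumptions of mine and will miss many real-world formats. Names and street addresses, also mentioned above, generally need a trained entity recognizer rather than a regex.

```python
import re

# Deliberately simple, illustrative patterns; not exhaustive.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(transcript: str) -> str:
    """Replace email addresses and phone numbers with placeholder tags."""
    transcript = EMAIL.sub("[EMAIL]", transcript)
    transcript = PHONE.sub("[PHONE]", transcript)
    return transcript


line = "Reach me at jane.doe@example.com or +1 (555) 010-9999 after lunch."
print(redact(line))
# Reach me at [EMAIL] or [PHONE] after lunch.
```

Running a step like this before transcripts are stored or reused for training means a leaked dataset exposes placeholders instead of contact details.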

9. Multimodal Integration: When Voice Meets Vision

Voice agents are at their best when they are part of a larger ecosystem. Imagine talking to an AI while it shows you a chart on your screen, or using voice to edit a portfolio on a platform like Fueler. This is called "Multimodal" AI. It means the AI can see, hear, and talk all at once. This creates a much richer user experience because it uses the best tool for the specific job: voice for quick input and visuals for complex data.

- Integrated Vision-Language Models: Allowing the AI to "see" exactly what is on your screen in real-time and talk to you about it, providing contextual help as you navigate a complex software interface.

- Coordinated Haptic Feedback: Pairing voice responses with physical vibrations or visual cues on a mobile device to provide a more immersive and accessible experience for users with different needs.

- Seamless Cross-Platform Sync: Allowing a user to start a conversation with the voice assistant in their car and finish it on a mobile app in their office without losing any progress or context.

- Voice-Controlled Interactive Dashboards: Building interfaces that update visual data, charts, and graphs instantly as you speak, allowing for "hands-free" data analysis and reporting during meetings.

Why it matters: The future of work is not just one interface; it is all of them working together in harmony. For founders, building multimodal experiences means your product becomes more accessible and more powerful for a wider range of users, making it an indispensable part of their daily workflow.

10. The Future: From Simple Agents to Voice Partners

We are moving toward a world where AI voice agents aren't just tools we use for tasks, but partners we collaborate with. They will be proactive, not just reactive. Your voice partner might call you to remind you of a deadline, suggest a better way to phrase a pitch, or even help you practice for a high-stakes interview. The technology is moving from "What can I do for you?" to "Here is what we should do next to reach our goals."

- Intelligent Proactive Assistance: The AI initiates contact based on specific triggers, market changes, or goals it knows you have set, rather than waiting for you to ask for help.

- Real-time Collaborative Problem Solving: Working through a logic puzzle, a coding error, or a business strategy out loud with an AI assistant that can offer counter-arguments and suggestions.

- Immersive Language Learning Partners: AI that acts as a 24/7 tutor, correcting your pronunciation, grammar, and even cultural nuances in real-time conversation to help you master a new language.

- Creative and Strategic Brainstorming: Using voice AI as a "sounding board" to bounce ideas back and forth when you are stuck on a creative project or need a fresh perspective on a difficult business decision.

Why it matters: This shift represents a fundamental change in how we relate to technology. As these agents become more like partners, the value they provide grows exponentially. They become an extension of our own capabilities, helping us work smarter, learn faster, and achieve more than we ever could alone.

Showcasing Your Skills in the AI Era

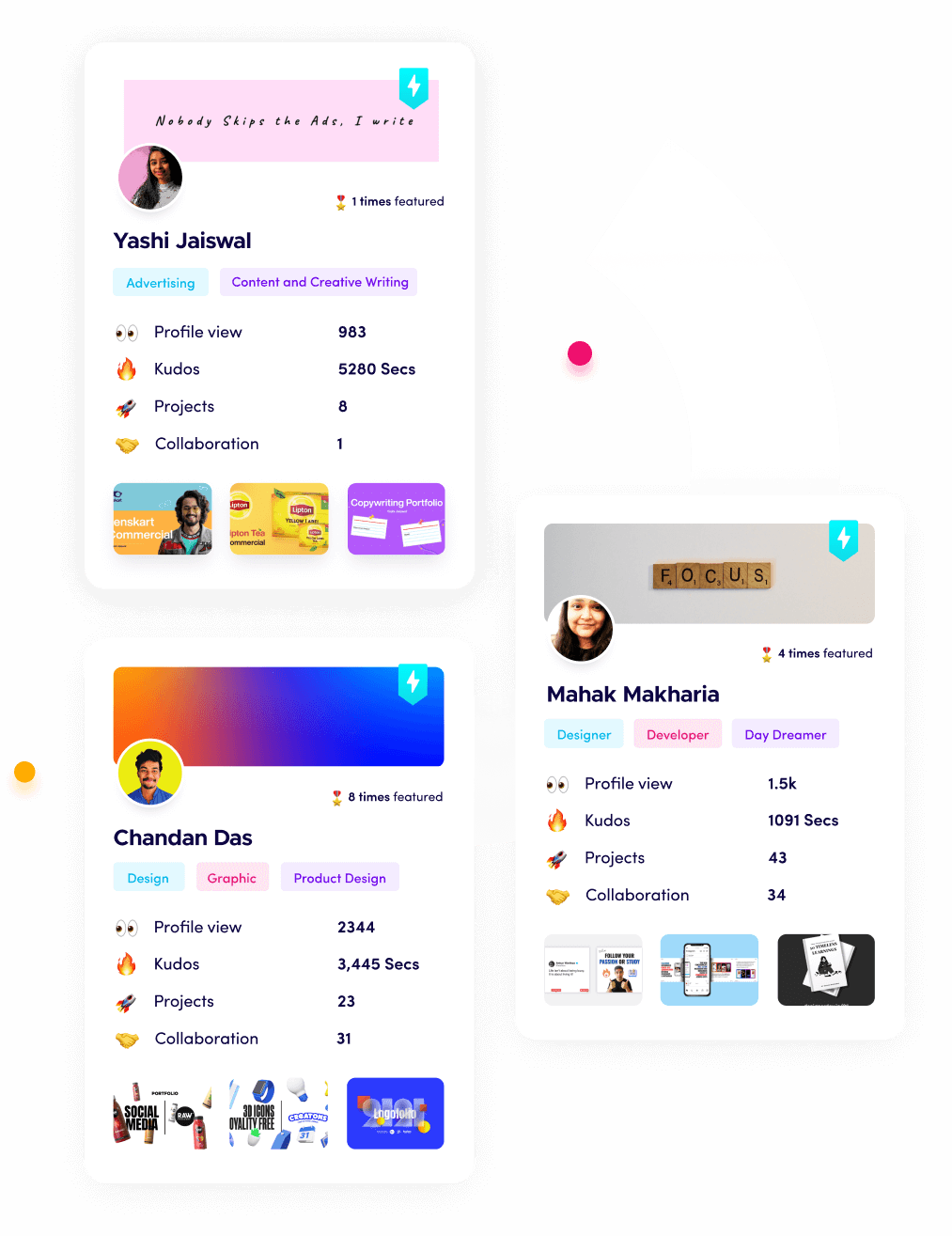

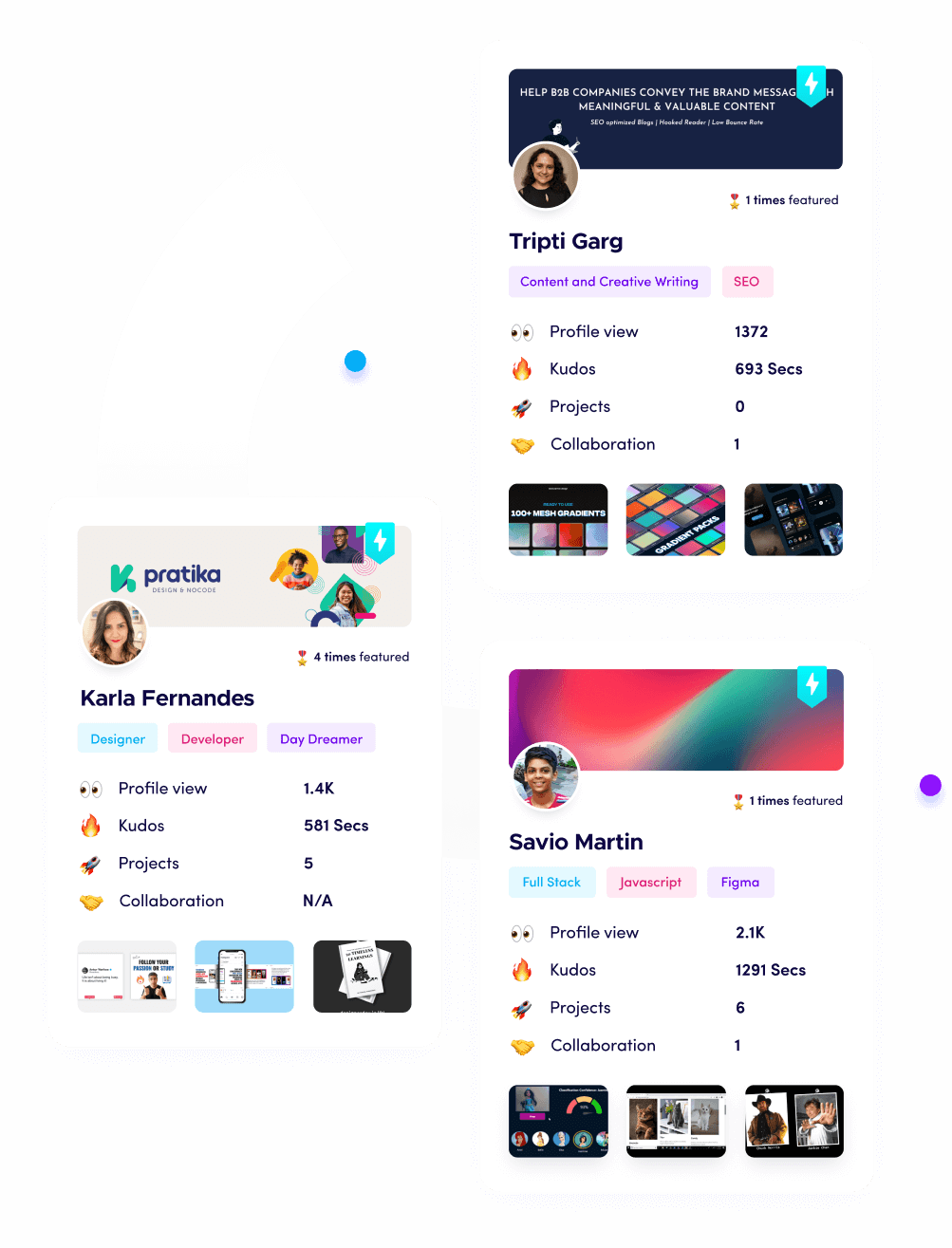

In this rapidly changing world, the most important thing you can do is prove that you can adapt and use these advanced tools effectively. Whether you are building voice agents, designing their conversational flow, or using them to scale your sales operations, you need a professional way to show that work to the world. This is exactly why I built Fueler. On Fueler, you can create a portfolio that doesn't just list your skills, but shows the actual projects you have completed. You can upload your AI-driven marketing campaigns, your code for a custom voice bot, or your strategy for scaling a startup with automation. It is a place to document your professional journey and show hiring managers that you are not just a static resume, but a person with a proven track record of creating real value in the real world.

Final Thoughts

The technology behind AI voice agents is undeniably complex, involving everything from neural waveforms to large language models, but the ultimate goal is simple: to make our interaction with machines as natural as our interaction with each other. From the nuances of speech recognition to the empathy of neural voices, every piece of this puzzle is coming together to create a more connected and efficient world. As a founder or a professional, the best way to stay ahead is to embrace these tools, understand their deep potential, and most importantly, document your progress as you build the future.

FAQs

What is the best free AI voice agent technology for beginners in 2026?

The most effective free tools currently include openly available models such as OpenAI's Whisper for speech-to-text and various fine-tuned implementations of Llama 3 for the conversational engine, providing high accuracy without expensive subscription fees.

How do I reduce latency in my AI voice application?

To reduce latency, you should focus on using quantized models that run faster, implementing streaming for both audio input and output, and utilizing edge computing to process the AI logic closer to the physical location of the user.

Can AI voice agents understand different accents and dialects?

Yes, modern ASR engines are trained on massive, global datasets that include thousands of different regional accents and dialects, making them much more effective at understanding diverse speakers than the rigid systems of the past.

Is voice cloning legal for professional business use?

Voice cloning is generally permissible when you have the explicit, written consent of the individual whose voice is being cloned, though the exact rules vary by jurisdiction. It is vital to follow strict ethical guidelines and provide clear disclosure to listeners to avoid legal and brand reputation issues.

How can I integrate a voice agent into my existing CRM?

Most professional AI voice platforms offer robust APIs that allow you to connect them directly to popular CRMs like Salesforce or HubSpot, enabling the agent to pull customer history and update records in real-time during a conversation.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.