AI Infrastructure Explained: The Backbone of Modern AI Systems

Riten Debnath

04 Apr, 2026

Last updated: April 2026

Imagine trying to run a Formula 1 race on a dirt track using a lawnmower engine. No matter how great the driver is, the car simply won't perform because the foundation is weak. This is exactly how Artificial Intelligence works in the real world. While everyone talks about the "driver," which is the AI model like ChatGPT, the real magic happens because of the "track" and the "engine," known as AI Infrastructure. We are currently witnessing a massive industrial revolution where physical data centers are being transformed into digital power plants that churn out intelligence instead of electricity.

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. High Performance Computing: The Muscle of AI Training

To train a modern AI model, you need an incredible amount of raw processing power that traditional computers simply cannot provide. Standard computer chips, called CPUs, are great for everyday tasks like browsing the web or writing a document, but they are far too slow for the complex, repetitive math required by machine learning. This is why specialized hardware like GPUs (Graphics Processing Units) has become the gold standard for the industry. These chips can handle thousands of small mathematical tasks at the exact same time, making them the perfect engine for processing the massive datasets that feed today’s most popular AI applications.

- NVIDIA H100 and Blackwell GPUs: These are among the most powerful and sought-after AI chips available, designed for the enormous volume of matrix math involved in training and running Large Language Models, including models with up to trillions of parameters.

- Custom Tensor Processing Units (TPUs): Google and other tech giants have designed their own custom hardware that is stripped of unnecessary features to focus entirely on speeding up the specific math used in machine learning.

- Massive Parallel Processing Architectures: Unlike a CPU, which works through a small number of complex tasks at a time, AI hardware breaks a giant problem into millions of tiny pieces and solves them simultaneously across thousands of cores.

- High-Bandwidth Memory (HBM3): Modern AI chips include specialized memory that can move data to the processor at speeds of several terabytes per second, ensuring the "brain" of the chip never has to wait for information.

Why it matters: Without high-performance computing, training a model like GPT-4 would take decades instead of months. This hardware layer sets the practical limit on how large a model can be and how quickly it can be trained, and it is what makes the lightning-fast evolution of modern AI possible in the first place.
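
To make the "decades instead of months" point concrete, here is a rough back-of-the-envelope sketch. It relies on a commonly used rule of thumb (training compute is roughly 6 x parameters x training tokens) and on entirely hypothetical hardware numbers chosen for illustration. None of the figures below describe a real chip or a real model.

```python
SECONDS_PER_DAY = 86_400


def training_days(params, tokens, flops_per_chip, num_chips, utilization):
    """Estimate training time in days using the ~6 * N * D rule of thumb."""
    total_flops = 6 * params * tokens  # approximate total training compute
    effective_flops_per_sec = flops_per_chip * num_chips * utilization
    return total_flops / effective_flops_per_sec / SECONDS_PER_DAY


# Hypothetical model: 70 billion parameters trained on 1.4 trillion tokens.
PARAMS = 70e9
TOKENS = 1.4e12

# Hypothetical hardware (assumed numbers, not vendor specifications).
cluster_days = training_days(PARAMS, TOKENS, flops_per_chip=1e15,
                             num_chips=1024, utilization=0.4)
single_cpu_days = training_days(PARAMS, TOKENS, flops_per_chip=1e12,
                                num_chips=1, utilization=0.4)

print(f"Accelerator cluster: about {cluster_days:.0f} days")
print(f"Single CPU: about {single_cpu_days / 365:.0f} years")
```

Under these assumptions the cluster finishes in weeks, while a single general-purpose chip would need many thousands of years. The exact numbers matter less than the gap between them.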

2. Scalable Cloud Infrastructure for AI Deployment

Most companies, even large ones, do not have the billions of dollars or the physical space required to build their own massive data centers. This is where cloud providers come in, offering "AI as a Service" to the general public. These platforms allow developers and startups to rent the exact amount of power they need, right when they need it, through a web browser. It is essentially like using a utility company for your electricity instead of building your own private power plant in your backyard. This scalability ensures that as an app grows from ten users to ten million, the underlying infrastructure can expand instantly to handle the traffic.

- AWS SageMaker and Bedrock: Two Amazon cloud services that spare developers from managing physical hardware. SageMaker provides the tools to build, train, and deploy models, while Bedrock offers access to ready-made foundation models through an API.

- Microsoft Azure AI Infrastructure: This platform provides the massive computational backbone for many of the world's largest AI companies, including the infrastructure used to power OpenAI's most famous tools.

- Google Cloud Vertex AI: A unified platform that combines data engineering, machine learning, and AI monitoring into one single interface, making it much easier for small teams to launch complex products.

- Serverless Inference Technology: A modern way to run AI models where you don't even have to pick a server size; the cloud provider automatically assigns power only when a user asks the AI a question.

Why it matters: Scalable cloud infrastructure lowers the barrier to entry for creators and small businesses everywhere. It means that a person with a laptop and a good idea can rent the same class of computing power as a multi-billion dollar corporation without large upfront hardware costs.
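
The scaling idea described above (including serverless setups that drop to zero when nobody is asking questions) can be sketched as a simple sizing rule. This is a minimal illustration, not how any particular cloud provider works. The function name, the per-replica capacity, and the limits are all hypothetical.

```python
import math


def replicas_needed(requests_per_second, capacity_per_replica,
                    min_replicas=0, max_replicas=100):
    """Return how many model servers to run for the current traffic."""
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    # Clamp between the configured floor and ceiling.
    return max(min_replicas, min(max_replicas, wanted))


# Assume each replica can answer 5 requests per second (made-up figure).
idle = replicas_needed(0, 5)        # no traffic: scale to zero
small = replicas_needed(12, 5)      # light traffic: a few replicas
spike = replicas_needed(10_000, 5)  # heavy traffic: capped at the maximum
```

The floor of zero is what makes the serverless model cheap for small apps, and the ceiling is what protects the bill during a traffic spike.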

3. Data Storage and Management Systems

AI is only as good as the data it learns from, which is why data is often called the "fuel" for the AI engine. If the fuel is dirty, disorganized, or full of errors, the AI engine will eventually break or provide wrong answers. AI infrastructure must include advanced storage solutions that can hold petabytes of information and deliver it to the processors at speeds far beyond what a normal hard drive can sustain. It also requires "Data Pipelines," which are automated software systems that clean, label, and organize information so the AI can actually understand the patterns within the data.

- Specialized Vector Databases: Tools like Pinecone or Milvus that store information as mathematical "vectors," allowing the AI to find related concepts based on meaning rather than just matching specific keywords.

- Massive Data Lakes and Warehouses: Data lakes are large-scale storage buckets that hold raw, unstructured data like videos, audio files, and text documents in their natural format until they are needed for a specific training run, while data warehouses hold cleaned, structured data that is ready for analysis.

- Automated Data Labeling Pipelines: Systems that use smaller AI models to automatically identify what is in an image or a document, saving thousands of hours of manual human work during the preparation phase.

- Data Version Control (DVC): A way for engineers to keep track of every change made to a dataset, ensuring that they can go back to an older version if the AI starts learning the wrong things.

Why it matters: High-quality data management reduces the risk of "hallucinations" and helps the AI provide accurate, helpful, and safe answers to the end-user. It is the invisible work that makes the difference between an AI that knows its facts and one that just makes things up.
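
To show what "finding related concepts based on meaning" looks like in practice, here is a toy sketch of the idea behind a vector database. The three-number vectors are invented for illustration (real embeddings have hundreds or thousands of dimensions and come from a model), and production systems typically use approximate indexes rather than the simple scan shown here.

```python
import math


def cosine_similarity(a, b):
    """Score how closely two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query, index, k=2):
    """Return the k stored items whose vectors are most similar to the query."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in index.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]


# Hypothetical embeddings: the first two items are "about pets",
# the third is "about finance".
index = {
    "puppy": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "invoice": [0.0, 0.1, 0.9],
}

results = nearest([0.85, 0.15, 0.05], index, k=2)
print(results)
```

The query never contains the words "puppy" or "dog", yet those two items come back because their vectors sit close to the query vector.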

4. High-Speed Networking and Interconnects

When you are building a massive AI, you aren't just using one chip; you are using thousands of them wired together. If the wires between these chips are slow, the entire system slows down, no matter how fast the individual processors are. This is why AI infrastructure requires specialized networking that is much faster than the standard internet cables we use in our homes. These "interconnects" allow thousands of separate servers to talk to each other so quickly that they function as if they were one single, giant supercomputer with a shared brain.

- InfiniBand Networking Standards: A high-speed, low-latency communication link widely used in supercomputers and large AI clusters to move data between servers and racks with minimal delay.

- NVLink Technology: A specialized high-speed interconnect developed by NVIDIA that allows multiple GPUs within the same server to share memory and data at speeds that standard PCIe connections cannot match.

- Optical Fiber Backbone: The use of light-based cables to connect different parts of a data center, allowing massive amounts of information to travel long distances with very little signal loss.

- Network Fabric Virtualization: Software that manages how data flows through the cables, ensuring that the most important tasks always have an open "lane" to travel through during heavy training sessions.

Why it matters: Networking is the "nervous system" of an AI cluster. As models grow larger and more complex, the bottleneck is often not how fast a chip can think, but how fast the chips can share their thoughts with each other to solve a single problem.
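
A quick calculation shows why the link speed matters so much. The sketch below assumes the chips synchronize their results using a ring all-reduce, a common pattern in distributed training that this article does not itself name, and it uses made-up model and bandwidth figures. It ignores latency and any overlap with computation, so treat it as a rough lower bound.

```python
def ring_allreduce_seconds(payload_bytes, num_workers, link_bytes_per_sec):
    """Estimate one synchronization step with a bandwidth-bound ring all-reduce.

    Each worker sends and receives about 2 * (N - 1) / N times the payload.
    """
    if num_workers < 2:
        return 0.0
    traffic = 2 * (num_workers - 1) / num_workers * payload_bytes
    return traffic / link_bytes_per_sec


# Hypothetical model: 10 billion parameters, 2 bytes each, shared by 8 chips.
payload = 10e9 * 2

slow = ring_allreduce_seconds(payload, 8, 1.25e9)  # ~10 Gbit/s office-grade link
fast = ring_allreduce_seconds(payload, 8, 50e9)    # assumed high-speed interconnect

print(f"Slow link: {slow:.1f} s per sync, fast link: {fast:.1f} s per sync")
```

If every training step has to wait for this exchange, the slower link leaves expensive processors idle for most of the run.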

5. Model Monitoring and Observability Tools

Once an AI system is live and talking to real users, the work is far from over. AI models can experience "drift" over time, which means they start becoming less accurate as the world changes and their training data becomes old. Infrastructure must include monitoring tools that act like a digital dashboard in a car, constantly telling the engineers if the system is overheating, making mistakes, or becoming biased. These tools track how long it takes for an AI to respond and whether the answers it gives are still high-quality and safe for the public to read.

- Weights & Biases Dashboards: A popular platform used by machine learning researchers to track their experiments in real-time and see exactly how a model is learning during the training process.

- Arize AI Observability: An advanced tool that helps technical teams find the root cause of why an AI model might be producing "weird" or incorrect outputs when interacting with real customers.

- Latency and Throughput Tracking: Software that measures the "ping" or delay between a user's question and the AI's response (latency) and how many requests the system can answer per second (throughput), ensuring the experience feels fast and smooth for everyone.

- Bias and Fairness Scanners: Automated systems that check the AI's output for harmful stereotypes or unfair patterns, helping companies maintain a high level of ethics and safety.

Why it matters: Monitoring ensures that AI systems remain reliable, ethical, and helpful over long periods of time. Without these tools, companies would have no way of knowing if their AI was slowly breaking, becoming outdated, or turning biased against certain users.
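
The two checks described in this section, response time and drift, can be sketched in a few lines. This is a simplified illustration with invented numbers and hypothetical function names. Dedicated tools such as those listed above track far more signals than this.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile, e.g. pct=95 for the p95 latency."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def drift_alert(baseline_accuracy, recent_outcomes, tolerance=0.05):
    """Flag drift when recent accuracy falls well below the baseline."""
    recent_accuracy = sum(recent_outcomes) / len(recent_outcomes)
    return (baseline_accuracy - recent_accuracy) > tolerance


# Made-up response times in milliseconds; two requests were very slow.
latencies_ms = [120, 135, 110, 480, 125, 130, 140, 115, 128, 900]
p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)

# Made-up review results: True means the answer was judged correct.
recent = [True] * 16 + [False] * 4
alert = drift_alert(baseline_accuracy=0.92, recent_outcomes=recent)
```

Tracking a high percentile alongside the median matters because a healthy-looking median can hide the slow responses that frustrate real users.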

6. Security and Guardrail Infrastructure

Because AI is becoming so integrated into our lives, the infrastructure must also include a massive layer of security. This is the "shield" that protects the AI from being hacked or from giving out dangerous or private information. Security infrastructure includes "Firewalls for AI" that scan your questions to make sure you aren't trying to trick the system, and "Output Filters" that check the AI's answer before it ever reaches your screen. It also involves protecting the billions of dollars worth of intellectual property that lives inside the model itself.

- Prompt Injection Defense Systems: Security software designed to block users from using "jailbreaks" or clever phrasing to trick the AI into ignoring its safety rules or sharing private data.

- Data Encryption at Rest and in Transit: Ensuring that the massive datasets the AI learns from are scrambled both while stored on disk and while moving across the network, so that hackers cannot read private information even if they get into the server or intercept the traffic.

- Identity and Access Management (IAM): Strict digital rules that define which specific employees at a tech company are allowed to look at or change the internal settings of an AI model.

- Compliance and Audit Logging: A digital "paper trail" that records every single interaction and change made to the system, which is essential for following government laws and safety regulations.

Why it matters: Without security infrastructure, large organizations like banks and hospitals would be too afraid to ever use AI. This layer creates the foundation of trust necessary for society to adopt these powerful new systems in critical areas of life.
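
As a small illustration of the "Output Filters" idea described above, the sketch below scans a model's answer and masks anything that looks like an email address or a long account-style number before it reaches the user. The patterns and placeholder labels are assumptions chosen for this example. A real guardrail layer would combine many more checks than two regular expressions.

```python
import re

# Simplified patterns for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_DIGITS = re.compile(r"\b\d{9,}\b")


def filter_output(text):
    """Mask email addresses and long digit runs in a model response."""
    text = EMAIL.sub("[REDACTED_EMAIL]", text)
    text = LONG_DIGITS.sub("[REDACTED_NUMBER]", text)
    return text


raw_answer = "Reach Jane at jane.doe@example.com, account 123456789012."
safe_answer = filter_output(raw_answer)
print(safe_answer)
```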

7. Edge Computing: AI on Your Local Device

Not all AI infrastructure lives in a giant data center thousands of miles away. As our phones and laptops get more powerful, we are seeing a shift toward "Edge Computing." This means the AI infrastructure is actually built into the hardware you are holding in your hand right now. Running AI locally is faster because the data doesn't have to travel across the ocean to a server, and it is much more private because your personal information never leaves your device. This is the future of "Personal AI" that works even when you are offline.

- Neural Engines in Smartphones: Specialized parts of the mobile chip (like the Apple Neural Engine) that are dedicated solely to running AI tasks like Face ID or voice recognition locally.

- On-Device Model Quantization: The process of shrinking a massive AI model by storing its numbers at lower precision (for example, 8-bit integers instead of 32-bit floats) so that it can fit into the memory of a laptop without losing too much of its original intelligence or speed.

- Local Privacy Frameworks: Systems that allow an AI to learn from your habits on your phone without ever uploading your private photos or messages to a company's cloud server.

- Low-Power Inference Modes: Specialized software settings that allow a device to run AI tasks without draining the battery, making it possible for smartwatches and tiny sensors to use AI.

Why it matters: Edge computing is what makes AI feel truly personal and instant. It removes the need for a constant internet connection and ensures that your most private data stays under your own control, rather than being stored on a distant server.
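
The quantization bullet above can be illustrated with a minimal sketch of one simple scheme, symmetric 8-bit quantization. The weights are invented for the example, and real toolchains use more refined variants (per-channel scales, calibration data, and so on).

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical model weights.
weights = [0.5, -1.0, 0.25, 0.0, 0.731]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, f"worst rounding error: {worst_error:.4f}")
```

Each weight now needs 1 byte instead of the 4 bytes of a 32-bit float, so the model takes roughly a quarter of the memory in exchange for a small rounding error per weight.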

8. Cooling and Energy Management Systems

The physical infrastructure of AI is incredibly hot and power-hungry. A single rack of AI servers can use as much electricity as a small neighborhood, and all that electricity turns into heat. If a data center cannot stay cool, the expensive chips will slow down or shut off to protect themselves, and in the worst case suffer permanent damage. Modern AI infrastructure includes massive cooling systems that are far more advanced than simple fans. This area of infrastructure is also focused on "Sustainability," trying to find ways to run these giant computers using wind, solar, or nuclear energy to protect the planet.

- Direct-to-Chip Liquid Cooling: A system where cold water or specialized fluids are piped through metal cold plates mounted directly on the AI chip to absorb heat much faster than air ever could.

- Immersion Cooling Tanks: An advanced method where entire server racks are submerged in a non-conductive "oil-like" liquid that keeps every component at a perfectly steady temperature.

- AI-Driven Power Optimization: Using a separate AI to manage the electricity of the data center, turning off parts of the building when they aren't needed to save massive amounts of energy.

- Renewable Energy Integration: Building massive data centers directly next to solar farms or hydroelectric dams to ensure the "intelligence" being created isn't harming the environment.

Why it matters: Cooling and energy are the hidden costs of the AI era. As we build more powerful models, the ability to manage heat and electricity will determine which countries and companies lead the way in technology.

9. Showcasing Your Skills in the AI Era

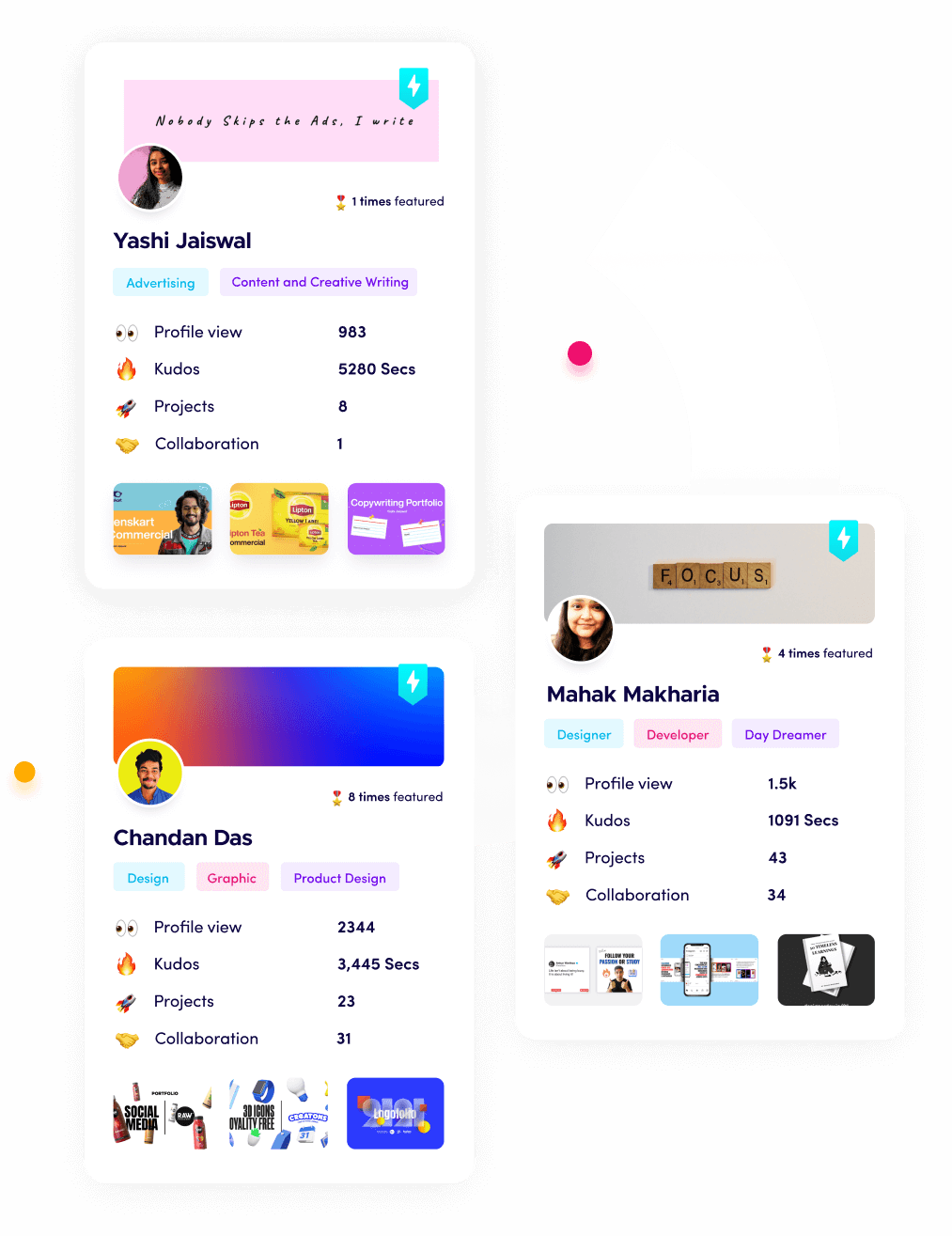

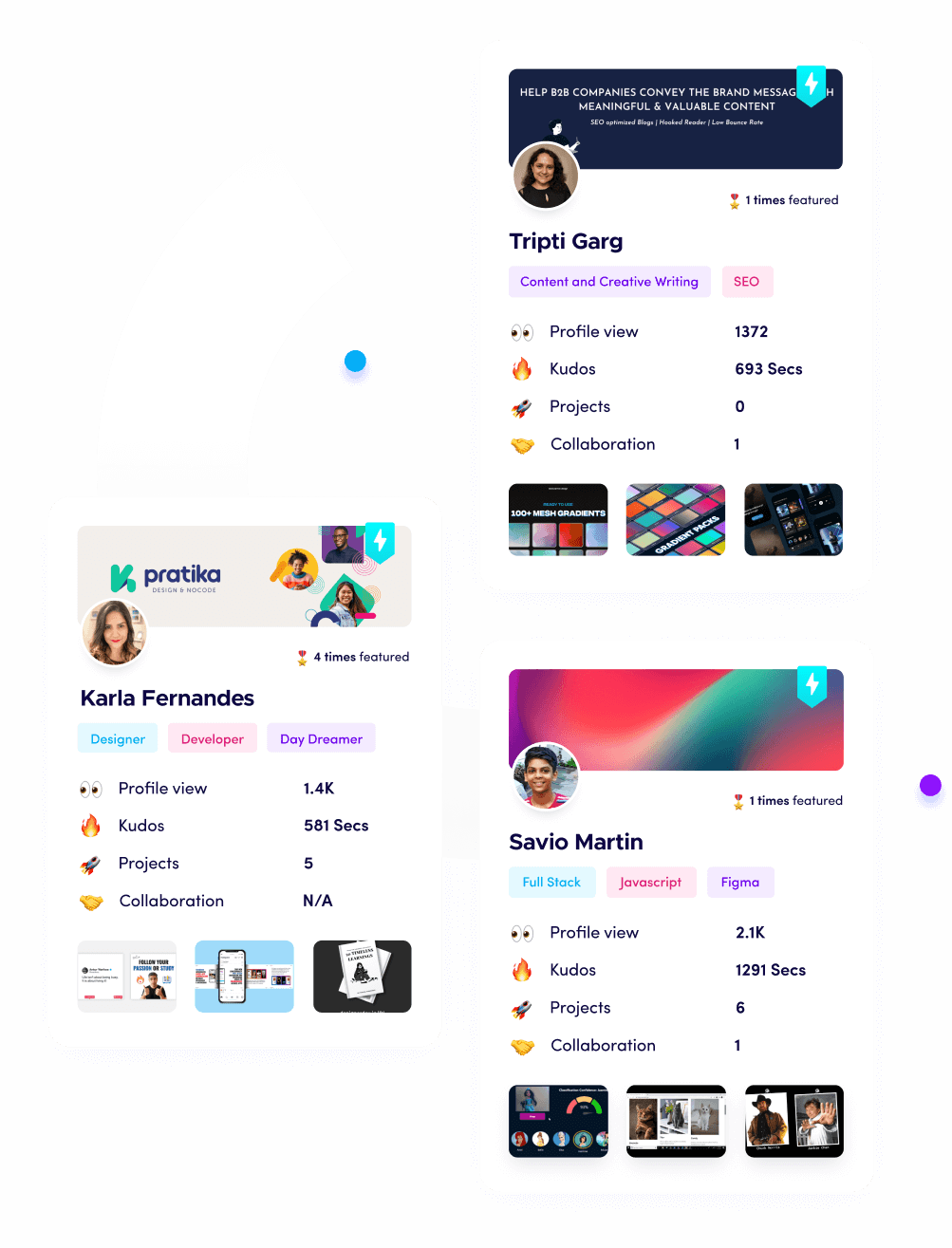

As we have seen, AI infrastructure is all about building a foundation of proof, performance, and reliability. In the modern professional world, your personal "infrastructure" is your Fueler portfolio. In an age where an AI can generate a generic resume in three seconds, a simple list of past jobs is no longer enough to prove you are talented. Companies now want to see the "backbone" of your career: the actual code you wrote, the designs you finished, and the assignments you completed.

By using Fueler, you are building your own high-performance infrastructure for your career. You aren't just telling a recruiter that you "know AI," you are showing them the actual evidence-based work samples that prove it. In a world where the "engines" (the AI tools) are becoming available to everyone, the "fuel" (your unique human work and creativity) is the only thing that will help you stand out and get hired by the best companies in the world.

Final Thoughts

AI infrastructure is likely the most complex and expensive machine that humans have ever constructed. It is a perfect symphony of high-end hardware, intelligent software, massive data pipelines, and intense physical engineering. While it is very easy to get distracted by the latest chatbot or a funny AI-generated video, the real power lies in the thousands of miles of fiber optics and the millions of GPUs working out of sight. Understanding this backbone is the first step toward mastering the future of technology. As this infrastructure becomes more efficient and more available to everyone, we will see AI move from being a "cool novelty" to being the invisible foundation of every business and household on Earth.

Frequently Asked Questions (FAQs)

What is the best AI infrastructure for beginners to learn in 2026?

For most beginners, the best starting point is a hosted platform like Google Colab or Hugging Face. Their free tiers let you experiment with cloud-hosted GPUs and pre-trained models without having to buy any expensive hardware yourself.

Why is everyone talking about GPUs instead of regular computer chips?

Regular computer chips (CPUs) are built to handle a wide variety of complex tasks, but only a few at a time, which makes them slow for AI. GPUs are built to do thousands of simple math problems at the same time, which is exactly the kind of work an AI model requires.

Does building an AI model always require a giant data center?

Not necessarily. While training a massive model like the ones behind ChatGPT requires a data center, "fine-tuning" an existing model for a specific task can often be done on a single high-end desktop computer or a small cloud server.

How does AI infrastructure impact the cost of using AI apps?

The "hidden cost" of every AI answer is the electricity and hardware time it uses. This is why many advanced AI tools have monthly subscriptions; the companies have to pay the massive bills for the data centers and cooling systems.

Is AI infrastructure different from traditional IT infrastructure?

Yes, it is very different. AI infrastructure requires much higher power density, specialized networking like InfiniBand, and specific types of "Vector Databases" that traditional IT systems simply weren't designed to handle.

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.