16 AI Agent Architectures Explained: Reactive, Goal-Based, and Utility-Based Agents

Riten Debnath

24 Feb, 2026

The landscape of artificial intelligence has shifted from static models to dynamic, autonomous entities that don’t just process data, but navigate the world. In 2026, the conversation has moved beyond what an AI knows to how an AI acts. Understanding the "brain" of these systems, the architecture, is the key to building software that can truly think, plan, and execute. Whether you are building a simple automation script or a complex multi-agent hive, the architectural pattern you choose determines the limits of your agent’s intelligence.

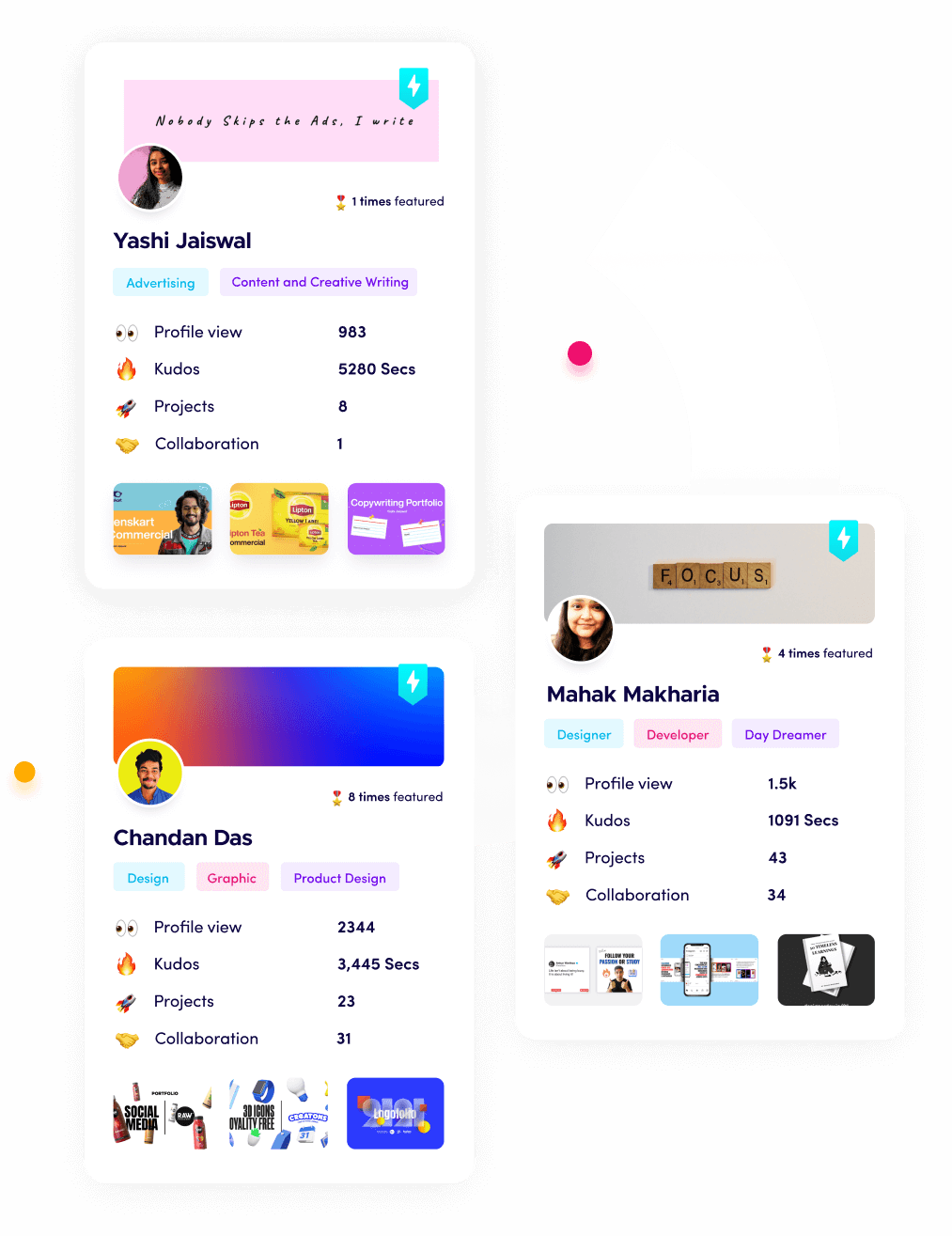

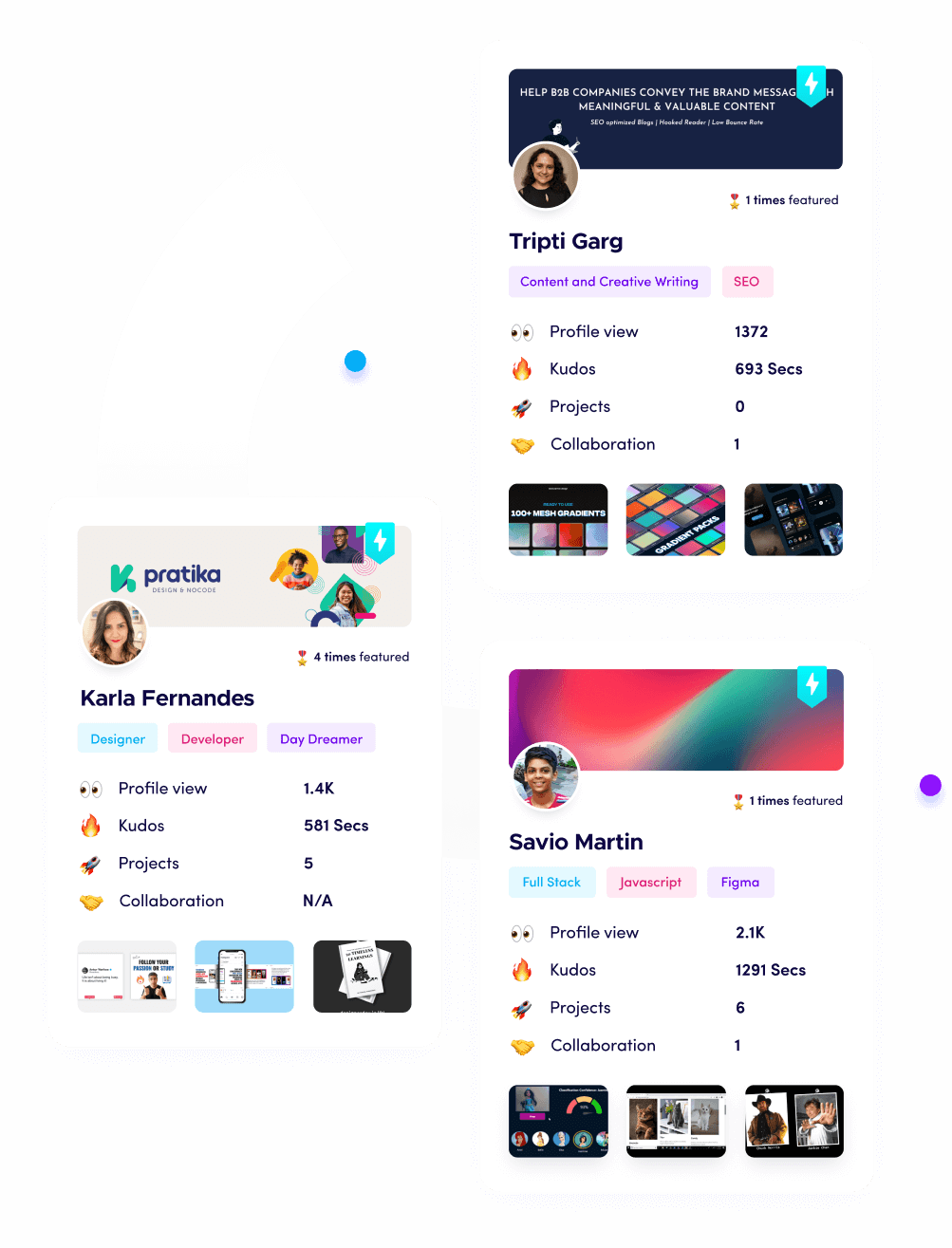

I’m Riten, founder of Fueler, a skills-first portfolio platform that connects talented individuals with companies through assignments, portfolios, and projects, not just resumes/CVs. Think Dribbble/Behance for work samples + AngelList for hiring infrastructure.

1. Simple Reflex Agents

Simple reflex agents are the most fundamental type of AI, functioning entirely on fixed "condition-action" rules. They operate on a purely "if-then" basis, meaning they take a current sensory input and immediately trigger a pre-defined response without considering the past or the future. In the world of 2026, these are used for high-speed, low-latency tasks where the environment is fully observable and the rules are clear. Because they lack any memory, they are incredibly efficient but can fail easily if the environment becomes unpredictable or hidden from view.

- Hardcoded Decision Rule Sets: These agents rely on a strictly defined set of condition-action rules that map specific environmental triggers directly to actions. Because the agent performs no complex reasoning and consults no large database before acting, it responds with very low latency, which is vital for safety systems that must act quickly to prevent accidents.

- Stateless Interaction Cycles: Because these agents do not maintain any internal memory of previous events, they treat every single moment as an isolated occurrence, which makes them highly predictable and easy to debug for developers but limits their ability to handle tasks that require an understanding of a sequence of events over time, effectively acting as a high-speed digital reflex rather than a thinking mind.

- Low-Power Edge Compatibility: Due to their lack of complex processing needs, simple reflex agents can run on minimal hardware like microcontrollers or basic sensors, allowing for "intelligence" to be embedded in everyday objects like smart light switches or emergency shut-off valves without requiring a constant connection to a powerful cloud server or high-end GPU for simple decision making.

- Fully Observable Environment Reliance: These agents are designed to work only in situations where all necessary information is visible to the sensors at once, meaning they excel in controlled settings like a factory assembly line where the position of every part is always known and the agent never has to "guess" what might be happening out of its line of sight during its operation.

- Deterministic Output Reliability: You can guarantee that a simple reflex agent will give the exact same response to the same stimulus every single time, providing a level of reliability that is essential for safety-critical systems where a "creative" or "learning" response could lead to a dangerous malfunction or an unpredictable system failure that endangers human workers or hardware.
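To make the condition-action idea concrete, here is a minimal sketch of a simple reflex agent. The sensor names (`pressure_kpa`, `temperature_c`), the thresholds, and the action strings are hypothetical values chosen for illustration, not taken from any real control system.

```python
from typing import Callable, NamedTuple


class Rule(NamedTuple):
    condition: Callable[[dict], bool]  # predicate over the current percept only
    action: str


# Hypothetical rules for an emergency shut-off valve; the first match wins.
RULES = [
    Rule(lambda p: p["pressure_kpa"] > 900, "close_valve"),
    Rule(lambda p: p["temperature_c"] > 120, "sound_alarm"),
]


def simple_reflex_agent(percept: dict) -> str:
    """Map the current percept straight to an action; nothing is remembered."""
    for rule in RULES:
        if rule.condition(percept):
            return rule.action
    return "do_nothing"


print(simple_reflex_agent({"pressure_kpa": 950, "temperature_c": 80}))  # close_valve
```

The function keeps no state between calls, so the same percept always produces the same action.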

Why it matters:

The extreme speed and predictability of these agents make them a cornerstone of basic automation. This directness is why they are the starting point in this guide to architecture.

2. Model-Based Reflex Agents

Model-Based Reflex Agents improve upon the simple version by maintaining an "Internal State" that keeps track of the world. They essentially have a "memory" that allows them to understand parts of the environment that are not currently visible to their sensors. If an object moves behind a wall, a model-based agent still knows it exists because its internal model has updated to reflect that change. This makes them significantly more effective in "partially observable" environments where the agent needs a mental map to navigate.

- Internal World State Representation: These agents maintain a complex internal model that tracks the current state of the world, allowing the agent to remember where objects are even when they are blocked by obstacles or move out of the camera's field of view, which provides a much more stable and continuous operating experience than simpler bots that forget everything instantly.

- History-Informed Situational Awareness: By keeping a record of past sensory inputs, the agent can understand the context of its current situation, such as knowing that a door was just closed or that a specific tool was moved to a different shelf, allowing it to make choices based on a sequence of events rather than just a single frame of data.

- Inference of Non-Visible Data: The agent uses its internal model to "fill in the blanks" when sensors are noisy or obstructed, allowing it to maintain its course and continue its task even if it loses sight of its target for a few seconds, which is a massive advantage for mobile robots in busy warehouses or delivery settings.

- Dynamic Perception Updating: As new data comes in, the agent constantly updates its mental map to ensure its "beliefs" about the world match reality, ensuring that its reflexes are always based on the most accurate and up-to-date information available in its local environment at any given moment during the execution of its task.

- Handling Partially Observable Spaces: Because they don't need to "see" everything at once to know it's there, these agents are perfect for complex indoor navigation where walls, furniture, and people constantly block the agent's line of sight, allowing for smoother and more confident movement through unpredictable and crowded physical spaces without stopping.
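The internal-state idea can be sketched in a few lines. This is a toy illustration only: the class name, the object names, and the grid coordinates are all assumptions, and a real robot would track uncertainty rather than a single last-known position.

```python
class ModelBasedReflexAgent:
    """Keeps a last-known position for every object it has ever seen."""

    def __init__(self):
        self.world = {}  # internal state: object name -> (x, y)

    def update(self, percept):
        # Only currently visible objects appear in the percept. Everything
        # else keeps its last-known position instead of being forgotten.
        self.world.update(percept)

    def act(self, target):
        if target not in self.world:
            return "search"
        x, y = self.world[target]
        return f"move_to({x}, {y})"


agent = ModelBasedReflexAgent()
agent.update({"pallet": (3, 4)})    # the pallet is visible
agent.update({"forklift": (1, 1)})  # the pallet is now hidden from view
print(agent.act("pallet"))          # move_to(3, 4)
```

A simple reflex agent would have nothing to act on once the pallet left its field of view; here the stored model still answers the question.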

Why it matters:

The addition of a world model provides the "situational awareness" necessary for real-world navigation. This architectural shift is a key part of the 16 types explained here.

3. Goal-Based Agents

Goal-based agents take intelligence a step further by acting with a specific "destination" in mind. Instead of just reacting to what they see, they evaluate different sequences of actions to see which one will lead them to their assigned goal. This involves a process called "Search and Planning." In 2026, these are the engines behind modern navigation systems and automated project management tools that have to figure out a path to completion through multiple steps.

- Proactive Planning Logic: These agents look ahead into the future to simulate different action sequences, allowing them to choose the specific path that actually leads to their assigned objective rather than just reacting to immediate stimuli, which is essential for complex tasks like playing chess or planning a multi-city travel itinerary with many variables.

- Search-Space Navigation: They use advanced search algorithms to navigate through a "state space" of possibilities, identifying the most promising routes to success while discarding dead ends early in the process, which saves massive amounts of computing time and ensures the agent finds a solution before the system reaches its deadline.

- Flexible Path Selection: Unlike reflex agents that are stuck with one set of rules, goal-based agents can find a new way to reach their target if the original path is blocked by an obstacle, making them much more resilient in dynamic environments like a construction site where the layout changes every single day.

- Outcome-Oriented Focus: Every action the agent takes is filtered through the question of whether it brings the system closer to the final goal, which ensures that the AI remains focused and doesn't get distracted by "noise" or secondary tasks that don't contribute to the primary mission assigned by the human.

- Explicit Objective Representation: Because the goal is defined as a specific data structure, it is incredibly easy for a developer to change the agent’s behavior by simply updating the target coordinates or desired outcome, allowing for a highly customizable and "programmable" autonomous assistant that can switch tasks on the fly.
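As a rough sketch of search and planning, the snippet below uses breadth-first search on a small grid. Breadth-first search is just one possible choice of algorithm, and the grid size, coordinates, and `plan` function are assumptions made for the example.

```python
from collections import deque


def plan(start, goal, walls, width, height):
    """Breadth-first search over grid cells; returns a shortest path or None."""
    frontier = deque([start])
    came_from = {start: None}
    while frontier:
        cell = frontier.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back from the goal to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            in_bounds = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if in_bounds and nxt not in walls and nxt not in came_from:
                came_from[nxt] = cell
                frontier.append(nxt)
    return None


route = plan((0, 0), (2, 0), walls=set(), width=3, height=3)
detour = plan((0, 0), (2, 0), walls={(1, 0)}, width=3, height=3)
print(len(route) - 1, len(detour) - 1)  # 2 4
```

The goal is plain data (a coordinate), so changing the agent's objective means passing a different `goal`. When a wall blocks the direct route, the same planner finds a longer path around it instead of failing.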

Why it matters:

The transition from reaction to planning is what makes these agents powerful. This goal-oriented focus is a major pillar of these explained architectures.

4. Utility-Based Agents

Utility-based agents are the "perfectionists" of the agent world. While a goal-based agent is happy just to finish the task, a utility-based agent wants to finish it in the best way possible. They use a "Utility Function" to assign a score to different outcomes, considering factors like cost, speed, safety, and comfort. If you are building a ride-sharing AI that needs to find the fastest route while also saving gas and providing a smooth ride, you are building a utility agent.

- Mathematical Success Scoring: These agents use complex functions to calculate the "happiness" or "utility" of a specific state, allowing them to make trade-offs between conflicting goals like speed versus safety, which results in much more nuanced and "intelligent" behavior in high-stakes environments like financial trading or automated logistics.

- Optimized Resource Management: By evaluating the cost of every potential action, utility agents can ensure they are using the minimum amount of energy, memory, or money required to achieve a result, making them the most cost-effective choice for enterprise-level automation where efficiency directly impacts the company's bottom line.

- Uncertainty Handling: These agents don't just plan for the "best case," they calculate the "expected utility" by weighing different possibilities against their likelihood of happening, which allows them to make smart bets in unpredictable markets or weather-dependent industries like renewable energy management or agriculture.

- Preference-Driven Personalization: You can tune the utility function to match a specific user's preferences, such as an agent that prioritizes "eco-friendly" routes over "fastest" routes for a sustainable brand, providing a level of personalized service that makes the AI feel like a true partner rather than just a cold tool.

- Sophisticated Conflict Resolution: When multiple goals compete for attention, the utility agent uses its internal scoring system to decide which one is more important in the current context, preventing the system from freezing up or oscillating between two different choices without making any actual progress toward a result.
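Here is a small sketch of how a utility function and expected utility might look for the ride-sharing example. The weights, route names, probabilities, and outcome numbers are invented for illustration; a real system would estimate them from data.

```python
# Hypothetical weights: higher utility is better, so costs get negative weights.
WEIGHTS = {"minutes": -1.0, "fuel_litres": -2.0, "comfort": 5.0}


def utility(outcome):
    """Collapse a multi-factor outcome into a single score."""
    return sum(WEIGHTS[k] * outcome[k] for k in WEIGHTS)


def expected_utility(lottery):
    """lottery: list of (probability, outcome) pairs whose probabilities sum to 1."""
    return sum(p * utility(o) for p, o in lottery)


ROUTES = {
    "highway": [
        (0.5, {"minutes": 20, "fuel_litres": 3, "comfort": 4}),
        (0.5, {"minutes": 60, "fuel_litres": 5, "comfort": 2}),  # traffic jam
    ],
    "back_roads": [
        (1.0, {"minutes": 35, "fuel_litres": 2, "comfort": 3}),
    ],
}

best = max(ROUTES, key=lambda r: expected_utility(ROUTES[r]))
print(best)  # back_roads
```

The highway is faster in the best case, but its expected utility is -33 against -24 for the back roads once the 50% chance of a jam is weighed in. Changing the weights in `WEIGHTS` is how you would tune the agent toward a different preference, such as fuel savings over speed.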

Why it matters:

The move toward optimization is what defines high-level AI in 2026. This utility-driven logic is a sophisticated part of the agent architectures explained in this article.

5. Learning Agents

Learning agents are built to improve over time by observing their performance in the real world. They consist of four main components: a "Learning Element" that makes improvements, a "Performance Element" that acts, a "Critic" that provides feedback, and a "Problem Generator" that suggests new things to try. These are the gold standard for personal assistants that learn your voice, your habits, and your preferences the more you interact with them.

- Autonomous Skill Improvement: These agents don't need to be hardcoded with every possible scenario. Instead, they use their learning element to discover new strategies and refine their existing behavior based on actual data they collect while operating in the field, leading to a system that can keep improving the longer it is active.

- Feedback-Driven Refinement: The "Critic" component constantly monitors the agent's actions against a set of performance standards, providing the necessary "grade" that tells the agent whether its last move was successful or needs to be adjusted in the future to avoid repeating the same costly mistake in a live environment.

- Exploratory Problem Generation: The agent doesn't just stick to what it knows; it uses its "Problem Generator" to intentionally try new actions that might lead to even better outcomes, ensuring the AI doesn't get stuck in a "local optimum" and continues to improve its own performance over time.

- Dynamic Environment Adaptation: Because they learn from experience, these agents are incredibly good at adapting to "drift" or changes in the environment, such as a customer support agent that learns new slang or an industrial robot that adjusts its movements to compensate for the wear and tear of its own mechanical joints.

- Human-Aligned Evolution: By processing user feedback as a primary data source, these agents can slowly align their internal logic with the specific nuances of a human's communication style, resulting in a user experience that feels more organic and less like interacting with a rigid piece of traditional software.
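The four components can be sketched as methods on one small class. This is a deliberately simplified, deterministic toy: the action names, the fixed scores in `TRUE_QUALITY`, and the "explore every fourth step" schedule are assumptions for illustration, and real learning agents typically face noisy feedback.

```python
class LearningAgent:
    def __init__(self, actions, explore_every=4):
        self.actions = list(actions)
        self.value = {a: 0.0 for a in self.actions}  # learned estimates
        self.count = {a: 0 for a in self.actions}
        self.explore_every = explore_every
        self.step = 0

    def performance_element(self):
        """Act on current knowledge: pick the best-looking action."""
        return max(self.actions, key=lambda a: self.value[a])

    def problem_generator(self):
        """Every few steps, propose an action purely to gather experience."""
        if self.step % self.explore_every == 0:
            index = (self.step // self.explore_every) % len(self.actions)
            return self.actions[index]
        return None

    def learning_element(self, action, score):
        """Fold the critic's score into a running average for that action."""
        self.count[action] += 1
        self.value[action] += (score - self.value[action]) / self.count[action]

    def run_step(self, critic):
        action = self.problem_generator() or self.performance_element()
        self.learning_element(action, critic(action))
        self.step += 1
        return action


# Hypothetical critic: a fixed performance standard the agent cannot see directly.
TRUE_QUALITY = {"template_a": 0.2, "template_b": 0.8, "template_c": 0.5}
agent = LearningAgent(TRUE_QUALITY)
for _ in range(20):
    agent.run_step(TRUE_QUALITY.get)
print(agent.performance_element())  # template_b
```

Without the problem generator, the agent would try `template_a` first, see a positive score, and never discover that `template_b` is better. That is the "local optimum" trap described above.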

Why it matters:

Self-improvement is the ultimate goal of modern AI development. This learning capability is a standout feature in these explained architectures.

6. Belief-Desire-Intention (BDI) Agents

BDI agents are modeled after human psychology. "Beliefs" represent what the agent thinks is true about the world, "Desires" are the goals it wants to achieve, and "Intentions" are the specific plans it has committed to acting upon. This architecture is used when an agent needs to balance many competing tasks over a long period. They are particularly good at "commitment," knowing when to stick to a plan and when to abandon it if the world changes too much.

- Psychological Action Modeling: By using beliefs, desires, and intentions, these agents operate in a way that is easy for humans to understand and predict, making them excellent for collaborative "cobot" environments where humans and AI must work side-by-side on the same physical task or digital project without confusion.

- Intention Persistence Management: A BDI agent doesn't just blindly follow a plan; it regularly checks whether its current "intentions" are still valid based on its latest "beliefs," allowing it to drop a task that is no longer possible or useful and switch to a more important "desire" without wasting time.

- Complex Multi-Tasking: These agents are designed to maintain focus on high-level objectives over days or weeks, managing their own schedule and prioritizing sub-tasks to ensure that the final goal is met even if there are hundreds of interruptions or setbacks along the way in a chaotic environment.

- Separation of Reasoning Layers: The BDI architecture separates the "deliberation" (deciding what to do) from the "execution" (doing it), which allows the agent to think about its next move while it is finishing its current task, resulting in much smoother and more efficient operations for complex systems.

- Social Communication Clarity: Because they use human-like reasoning categories, BDI agents are very good at communicating their "reasons" for an action to other agents or humans, which is essential for building trust in complex systems like automated air traffic control or medical triage where transparency is a requirement.
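A minimal sketch of the belief, desire, and intention cycle is shown below. It assumes one particular commitment strategy (keep the current intention for as long as beliefs still support it), which is only one of several options. The class, the desire names, and the `road_open` belief are hypothetical.

```python
class BDIAgent:
    def __init__(self, desires):
        self.beliefs = {}
        self.desires = desires   # list of (priority, name, is_achievable)
        self.intention = None    # the desire currently committed to

    def perceive(self, facts):
        self.beliefs.update(facts)

    def deliberate(self):
        # Commitment: keep the current intention while beliefs still support it.
        if self.intention and self.intention[2](self.beliefs):
            return self.intention[1]
        # Otherwise drop it and commit to the best achievable desire.
        options = [d for d in self.desires if d[2](self.beliefs)]
        self.intention = max(options, key=lambda d: d[0]) if options else None
        return self.intention[1] if self.intention else None


desires = [
    (2, "deliver_parcel", lambda b: b.get("road_open", False)),
    (1, "return_to_depot", lambda b: True),
]
agent = BDIAgent(desires)
agent.perceive({"road_open": True})
first = agent.deliberate()    # deliver_parcel
agent.perceive({"road_open": False})
second = agent.deliberate()   # return_to_depot
print(first, second)
```

Because of the commitment rule, the agent in this sketch would keep heading to the depot even if the road reopened a moment later. A more eager reconsideration policy would switch back, at the cost of more deliberation.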

Why it matters:

Modeling AI after human thought processes makes them more reliable for long-term work. This mental modeling is a core part of the 16 architectures being explored.

7. Logic-Based (Symbolic) Agents

Logic-based agents use formal logic (like "if A and B, then C") to represent their knowledge and make decisions. They are the "professors" of the AI world, excelling at tasks that require strict adherence to rules, laws, or physical principles. In 2026, they are still widely used in legal tech, tax software, and medical diagnosis systems, where every single decision must be perfectly explainable and backed by a clear logical proof.

- Rigorous Formal Reasoning: These agents use mathematical logic to derive new facts from existing knowledge, so every conclusion follows strictly from the rules and data provided. The result is only as correct as those rules and facts, but nothing is guessed along the way, which is a requirement for sensitive fields like accounting, structural engineering, or high-level legal analysis, where errors are costly.

- Decision Explainability: Because the agent’s "brain" is a set of logical rules, you can trace exactly how it reached a specific answer, providing a clear "audit trail" that is necessary for compliance with government regulations or for debugging complex system failures in the field for high-stakes enterprise projects.

- Expert Knowledge Injection: Developers can "inject" specialized expertise directly into the agent by writing logical axioms, allowing a junior developer to create an agent that has the combined knowledge of ten senior medical doctors or high-level legal consultants without needing years of manual training and data collection.

- Hard Constraint Satisfaction: These agents are perfect for "scheduling" or "allocation" problems where there are hard rules that cannot be broken, such as ensuring no pilot works more than a certain number of hours or that no two volatile chemical ingredients are stored in the same room.

- Symbolic Knowledge Representation: Information is stored in a way that is readable by both humans and machines, bridging the gap between human expertise and automated execution, which ensures that the AI's internal knowledge base remains organized, searchable, and easy to modify as new rules emerge.
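One common way to implement this kind of reasoning is forward chaining over if-then rules, sketched below with a record of which premises justified each conclusion. The rule names are toy placeholders, not real tax or legal rules.

```python
def forward_chain(facts, rules):
    """rules: list of (premises, conclusion) pairs.

    Returns every derivable fact plus, for each derived fact,
    the premises that justified it (a simple audit trail).
    """
    known = set(facts)
    proof = {}
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and set(premises) <= known:
                known.add(conclusion)
                proof[conclusion] = premises
                changed = True
    return known, proof


# Toy rules for illustration only.
RULES = [
    (("income_over_threshold", "resident"), "must_file_return"),
    (("must_file_return", "self_employed"), "needs_business_schedule"),
]
known, proof = forward_chain(
    {"income_over_threshold", "resident", "self_employed"}, RULES
)
print(proof["needs_business_schedule"])  # ('must_file_return', 'self_employed')
```

The `proof` dictionary is what makes the decision explainable: each conclusion can be traced back, step by step, to the facts that were supplied.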

Why it matters:

Explainability is a huge topic in 2026, and these agents are the masters of it. This logical clarity is a key reason they are included in these explained architectures.

8. Blackboard Agents

Blackboard agents use a "Collaborative Workspace" architecture. Imagine a group of experts standing around a chalkboard. Each expert (a specialized mini-agent) looks at the board, and if they see a part of the problem they can solve, they write their contribution on it. This continues until the problem is solved. This is used for speech recognition, complex signal processing, and any task where no single algorithm is smart enough to find the whole answer.

- Heterogeneous Expert Collaboration: This architecture allows you to combine many different types of AI models, such as a neural network for vision and a logic engine for reasoning, into a single system where they can work together on the same problem without needing to be compatible with each other.

- Asynchronous Problem Solving: The various "experts" in the system don't need to work in a specific order; they simply contribute whenever they have useful information to add to the "blackboard," which makes the system incredibly resilient to delays or failures in any individual component of the stack.

- Emergent Solution Discovery: Because the final answer is built piece-by-piece by different specialists, the system can often find creative solutions to complex problems that a single, linear algorithm would have missed, making it ideal for high-level research and signal analysis tasks in the lab.

- Centralized Data Coordination: The "blackboard" acts as a single source of truth for the entire system, ensuring that all agents are working with the same information and preventing the "data silos" that often cause multi-agent systems to become confused or work at cross-purposes during a task.

- Scalable Expertise Integration: You can easily add a new "expert" to the system by simply pointing it at the blackboard, allowing you to upgrade the system's capabilities over time without having to rewrite the core architecture or change how the existing components interact with each other.
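The chalkboard picture can be sketched with a shared dictionary and a few expert functions. This toy runs the experts in a simple synchronous loop rather than truly asynchronously, and the expert names and the keys on the board are assumptions for the example.

```python
def tokenizer(board):
    if "text" in board and "tokens" not in board:
        board["tokens"] = board["text"].lower().split()
        return True
    return False


def counter(board):
    if "tokens" in board and "length" not in board:
        board["length"] = len(board["tokens"])
        return True
    return False


def classifier(board):
    if "tokens" in board and "label" not in board:
        is_question = board["tokens"][0] in {"who", "what", "why"}
        board["label"] = "question" if is_question else "statement"
        return True
    return False


def run_blackboard(board, experts):
    """Let any expert that can contribute do so, until nobody has anything to add."""
    progress = True
    while progress:
        # The list forces every expert to be consulted on each pass.
        progress = any([expert(board) for expert in experts])
    return board


board = run_blackboard(
    {"text": "What is a blackboard system"},
    [classifier, counter, tokenizer],  # order does not matter
)
print(board["label"], board["length"])  # question 5
```

The experts never call each other; they only read and write the board. Adding a new expert means appending one more function to the list.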

Why it matters:

Teamwork makes the dream work, even for AI. This collaborative approach is a unique and powerful entry in the 16 agent architectures explained here.

9. Reactive (Behavior-Based) Agents

Reactive agents are common in robotics and prioritize "acting" over "thinking." They decompose complex behavior into simple, competing layers like "avoid obstacles," "move forward," and "find light." There is no central planner; instead, the layers compete for control of the robot's motors. This makes them incredibly robust in messy, unpredictable environments like a forest or a crowded room, where a central plan would break as soon as something moved.

- Subsumption Architecture Layers: These agents are built in layers where higher-level behaviors can "subsume" or take over control from lower-level behaviors when necessary, ensuring that the robot always prioritizes its most critical survival tasks, like not falling off a cliff, over its long-term goals.

- Decentralized Decision Power: By removing the central "brain," these agents avoid the "bottleneck" of complex reasoning, allowing for lightning-fast reactions that are more akin to the biological instincts of an insect than the slow, deliberate thoughts of a human, which is perfect for real-time navigation.

- Environmental Robustness: Because they react directly to what they sense, these agents are hard to "confuse" with unexpected changes. If you move a chair in front of them, they don't need to re-plan their whole day; they just walk around it and keep going.

- Emergent Complex Behavior: Simple rules like "follow the wall" and "turn left if blocked" can combine to create surprisingly complex and effective behaviors, such as navigating an entire maze, without the agent ever needing a map or a high-level understanding of where it actually is.

- Low Computational Latency: Since there is no heavy planning or memory lookup required, the time between sensing an obstacle and moving the motor is near zero, which is essential for high-speed drones or robots operating in environments where things move very quickly and unpredictably.
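The layered, planner-free control loop can be approximated with fixed-priority arbitration, as in the sketch below. This is a simplification: the original subsumption architecture wires layers together with suppression and inhibition links, which are not modeled here. The sensor keys and command strings are hypothetical.

```python
def avoid_cliff(s):
    return "reverse" if s["cliff_ahead"] else None


def avoid_obstacle(s):
    return "turn_left" if s["obstacle_cm"] < 20 else None


def seek_light(s):
    return "steer_to_light" if s["light_visible"] else None


def wander(s):
    return "move_forward"  # default behavior, always has an opinion


# Ordered from most to least urgent; the first layer that fires wins.
LAYERS = [avoid_cliff, avoid_obstacle, seek_light, wander]


def arbitrate(sensors):
    """Return the command of the most urgent layer that produced one."""
    for layer in LAYERS:
        command = layer(sensors)
        if command is not None:
            return command


print(arbitrate({"cliff_ahead": False, "obstacle_cm": 10, "light_visible": True}))
# turn_left
```

No map or plan is stored anywhere. Each layer looks only at the current sensor readings, which is why the loop stays cheap enough to run on every control tick.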

Why it matters:

Robustness is key in the physical world. This reactive nature is why these are a staple of the 16 architectures being discussed in this blog.

10. Multi-Agent Systems (MAS)

Multi-Agent Systems consist of a "swarm" or "team" of agents that communicate and coordinate to solve a task. Each agent has its own local view and limited power, but together they can achieve massive goals. Think of a swarm of delivery drones or a group of software agents that handle different parts of a supply chain. They rely on "Negotiation" and "Coordination" protocols to ensure they don't crash into each other or do the same job twice.

- Distributed Intelligence: Instead of one super-smart AI, you have dozens of simpler agents that work together, which makes the system much more "fault-tolerant": if one drone in a swarm fails, the others can pick up the slack and complete the mission without the whole system crashing.

- Agent-to-Agent Negotiation: These systems use specialized protocols like "Contract Net" where agents bid on tasks based on their current location or battery level, ensuring that every job is handled by the agent that is most qualified and efficient for that specific piece of work.

- Scalability by Design: You can add hundreds of new agents to a multi-agent system without significantly increasing the complexity of the central controller, making it the perfect architecture for managing large-scale operations like city-wide traffic light optimization or global logistics networks.

- Localized Task Specialization: Different agents in the swarm can be assigned different roles such as "scout," "transporter," or "repairer," allowing the collective to handle multifaceted projects that would be far too complex for any single, general-purpose AI to manage on its own.

- Collaborative Emergence: Through simple communication rules, the swarm can exhibit "intelligent" group behaviors like forming a search grid or surrounding a target, similar to how a flock of birds or a colony of ants can solve complex survival problems through decentralized cooperation.
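The bidding idea behind Contract Net can be sketched as a single announce, bid, and award round. This is a toy, in-process illustration rather than an implementation of the full protocol: the `Drone` class, the one-dimensional positions, the battery cutoff, and the cost formula are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Drone:
    name: str
    position: float  # position along a 1-D route, in km (toy model)
    battery: float   # remaining charge, from 0.0 to 1.0

    def bid(self, task_position):
        """Return an estimated cost, or None to decline the task."""
        if self.battery < 0.2:
            return None
        distance = abs(self.position - task_position)
        return distance / self.battery  # low battery makes a job "pricier"


def award(task_position, drones):
    """Manager side: announce the task, collect bids, award to the lowest cost."""
    bids = [(d.bid(task_position), d.name) for d in drones]
    bids = [b for b in bids if b[0] is not None]
    return min(bids)[1] if bids else None


fleet = [Drone("d1", 0.0, 0.9), Drone("d2", 4.0, 0.5), Drone("d3", 5.0, 0.1)]
winner = award(5.0, fleet)
print(winner)  # d2
```

Drone `d3` is already at the task location but declines because its battery is too low, so the job goes to `d2`. The manager never needs to know why a drone bid what it did; each agent decides from its own local state.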

Why it matters:

The future of AI is collaborative. This "swarm" logic is a high-growth area in the architectures explained in this guide.

11. Cognitive Architectures (e.g., SOAR, ACT-R)

Cognitive architectures are "Grand Unified Theories" of the mind. They attempt to model every part of human intelligence, including short-term memory, long-term memory, perception, and decision-making, in a single system. These are the most ambitious architectures, used by researchers who want to build "Artificial General Intelligence" (AGI) that can learn any task a human can, from folding laundry to writing a symphony.

- Unified Memory Structures: These systems typically feature a "Working Memory" for current thoughts and multiple "Long-Term Memories" for facts and skills, mimicking the human brain's ability to store and retrieve different types of information for different tasks throughout the day.

- Rule-Based Procedural Learning: They use "Production Rules" to decide what to do next, but they can also learn new rules over time by "chunking" smaller actions into larger, more efficient skills, which allows the system to become more expert at a task the more it practices.

- Multimodal Data Integration: Cognitive architectures are designed to handle inputs from many different sources, like vision, sound, and text, and combine them into a single "mental" representation of the world, providing a much more holistic form of intelligence than specialized models.

- Human-Like Error Patterns: Because they are modeled after the human brain, these systems often exhibit similar cognitive biases and memory "forgetting" patterns, which makes them incredibly valuable for psychological research and for building AI that interacts with humans in a natural way.

- Cross-Domain Task Versatility: Unlike a chess AI that can only play chess, a cognitive architecture like SOAR is designed to be "general-purpose," meaning the same underlying "brain" can be taught to play a game, drive a car, or manage an office with enough training and memory.

Why it matters:

This is the "Holy Grail" of AI design. This attempt to map the human mind is a fascinating entry in the 16 agent architectures explained here.

12. Recursive Self-Improving Agents

Recursive agents are a specialized type of learning agent that has access to its own source code. Their "goal" is to rewrite their own algorithms to become more efficient at rewriting their own algorithms. This creates a "feedback loop" of intelligence. While mostly theoretical and restricted to high-level research labs in 2026, they represent the ultimate form of autonomous evolution where the AI is its own developer.

- Code-Level Autonomy: These agents are given the permission and the capability to modify their own underlying software architecture, allowing them to optimize their processing speed, memory usage, and reasoning logic far beyond what a human developer could ever manually code.

- Intellectual Feedback Loops: As the agent becomes slightly smarter, it becomes better at finding the next improvement to its own code, leading to an "intelligence explosion" where the system's capabilities grow at an exponential rate rather than a linear one over a period of time.

- Dynamic Hardware Optimization: These agents can analyze the specific hardware they are running on and rewrite their code to take full advantage of the specific GPU or NPU architecture, squeezing every drop of performance out of the silicon without human intervention.

- Autonomous Versioning: The agent essentially manages its own CI/CD pipeline, deploying "version 2.0" of itself when it finds a significant improvement, while keeping a backup of its previous state in case the new code introduces bugs or performance regressions during its evolution.

- High-Stakes Safety Requirements: Because these agents can change their own "brains," they require incredibly strict safety "guardrails" to ensure they don't accidentally (or intentionally) delete their own ethical constraints or secondary goals while trying to optimize for raw processing speed.

Why it matters:

This is the cutting edge of AI autonomy. This self-evolution is a mind-bending part of the architectures being discussed today.

13. Interface Agents (User-Centric)

Interface agents are designed to sit between a human and a complex software system. Their job is to learn the user's habits and perform tasks on their behalf, like "autonomous secretaries." They observe how you use your computer, which emails you reply to first, and how you schedule your meetings, and then they start doing those things for you automatically. They are the ultimate productivity multipliers in the modern workplace.

- Observational Learning Patterns: These agents work in the background, quietly watching how a human interacts with their applications to identify repetitive patterns, such as "every Monday the user summarizes these 10 reports," and then offering to automate that specific workflow.

- Proactive Task Delegation: Instead of waiting for a command, an interface agent might say, "I noticed you have a meeting in 10 minutes; I’ve already summarized the last three emails from the attendees for you," taking the mental load off the human worker before they even ask.

- Personalized Workflow Adaptation: Every interface agent becomes unique to its user, adapting its communication style and prioritization logic to match the specific quirks and preferences of the person it is assisting, which creates a highly efficient and "invisible" form of automation.

- Cross-Application Orchestration: These agents act as a bridge between different software tools like Slack, Jira, and Email, performing complex multi-app tasks like "find the bug report in Jira and send a summary to the engineering channel on Slack" with a single voice command.

- User-Intent Interpretation: They are experts at understanding "fuzzy" human language, translating a vague request like "help me get ready for my next call" into a specific list of actions across multiple systems based on the user's past history and current context.
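The observational side of an interface agent can be as simple as counting repeated events, as in this rough sketch. The event format (weekday plus action name), the threshold of three repeats, and the sample log are assumptions for illustration; a real agent would work from much richer signals.

```python
from collections import Counter


def suggest_automations(event_log, min_repeats=3):
    """event_log: list of (weekday, action) pairs observed from the user.

    Suggest any pair that has been seen at least `min_repeats` times.
    """
    counts = Counter(event_log)
    return sorted(pair for pair, n in counts.items() if n >= min_repeats)


# Hypothetical observations collected over a few weeks.
log = (
    [("Mon", "summarize_reports")] * 3
    + [("Tue", "archive_inbox")]
    + [("Fri", "send_status")] * 4
)
print(suggest_automations(log))
# [('Fri', 'send_status'), ('Mon', 'summarize_reports')]
```

The one-off Tuesday action is ignored, while the two recurring habits become candidates the agent could offer to automate.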

Why it matters:

Productivity is the main reason people use AI. This user-centric logic is a vital part of the agent architectures explained in this guide.

14. Hybrid (Neuro-Symbolic) Agents

Hybrid agents combine the "Intuition" of deep learning (neural networks) with the "Logic" of symbolic AI. The neural network handles the messy perception tasks, like identifying an object in a photo, while the symbolic logic handles the high-level reasoning, like deciding if that object is allowed to be in that room. This "best of both worlds" approach aims to build AI that is both highly capable and far more predictable than a neural network alone.

- Perception and Reasoning Split: These agents use a "System 1" (fast, intuitive neural net) for processing raw data like images and sound, and a "System 2" (slow, logical symbolic engine) for making the final decisions based on rules and facts, mimicking how the human brain works.

- Common Sense Integration: By using a symbolic knowledge base, these agents can be taught "common sense" facts, like "water is wet" or "knives are sharp," that pure neural networks often struggle to grasp, making them much safer and more reliable in everyday human environments.

- Small Data Efficiency: While pure neural networks need millions of photos to learn a concept, a hybrid agent can be told a rule once ("don't go near the stairs") and follow it perfectly, combining the power of modern AI with the efficiency of traditional programming and expert systems.

- Explainable Neural Outputs: When the neural network identifies a pattern, the symbolic engine can explain why that pattern is important in the context of the current mission, bridging the gap between "black box" AI and the human need for transparency and clear evidence.

- Robustness to Adversarial Attacks: These agents are harder to "trick" with weird data because even if the neural network is confused by a strange image, the symbolic logic layer can step in and say, "that doesn't make logical sense," and prevent the agent from making a dangerous or stupid mistake.
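The perception and reasoning split can be sketched with a stand-in for the neural network and a small symbolic rule table. Everything here is hypothetical: `perceive` returns canned labels instead of running a real model, and the `ALLOWED` table and confidence threshold are made-up values.

```python
def perceive(image_id):
    """Stand-in for a neural classifier: returns (label, confidence)."""
    fake_outputs = {
        "img_001": ("forklift", 0.97),
        "img_002": ("toddler", 0.55),
        "img_003": ("drone", 0.91),
    }
    return fake_outputs[image_id]


# Symbolic layer: explicit, human-readable facts about what may be where.
ALLOWED = {"warehouse": {"forklift", "pallet", "worker"}}


def decide(image_id, room, min_confidence=0.8):
    """Return (action, reason) so every decision carries its explanation."""
    label, confidence = perceive(image_id)
    if confidence < min_confidence:
        return "ask_human", f"low confidence ({confidence:.2f}) for '{label}'"
    if label not in ALLOWED[room]:
        return "raise_alert", f"'{label}' is not permitted in {room}"
    return "continue", f"'{label}' is permitted in {room}"


print(decide("img_003", "warehouse"))
# ('raise_alert', "'drone' is not permitted in warehouse")
```

The rule about what belongs in the warehouse is stated once, in `ALLOWED`, rather than learned from examples, and the returned reason string is the kind of explanation the symbolic layer can supply.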

Why it matters:

This is how we get the "intelligence" of ChatGPT with the "reliability" of a calculator. This hybrid nature is a key entry in the 16 architectures explained here.

15. Mobile Agents (Code-in-Motion)

Mobile agents are a unique architecture where the agent's code actually moves from one server to another to perform a task. Instead of sending massive amounts of data across the internet to a central AI, the AI travels to the data. This is used in "Edge Computing" and distributed databases where bandwidth is limited or where data privacy is so high that the information is not allowed to leave the building.

- Data-Locality Processing: By moving the agent to the server where the data lives, these systems drastically reduce network congestion and latency, making them the most efficient choice for processing the massive amounts of data generated by factory sensors or city-wide camera networks.

- Autonomous Migration Protocols: These agents can decide when and where to move based on the available CPU power or network speed, "hopping" from a phone to a nearby edge server to a central cloud as the complexity of the task increases or the battery level of the device drops.

- Enhanced Privacy Preservation: Since the raw data never leaves the local server, mobile agents allow companies to perform complex AI analysis on sensitive medical or financial records while making it far easier to comply with strict privacy rules like GDPR or HIPAA, and they shrink the exposure that comes with shipping data across networks.

- Disconnected Operation Capability: A mobile agent can move to a remote device (like an offshore oil rig), perform its task while the device is offline, and then "call home" or migrate back to the main server once a connection is re-established, providing AI power to the most isolated places on Earth.

- Dynamic Load Balancing: These systems act like a living cloud, automatically distributing the AI "workload" across thousands of devices based on where the most processing power is currently available, which ensures that no single server becomes overwhelmed while others sit idle.
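To make the "AI travels to the data" idea concrete, here is a small in-process simulation. It is only a sketch: the `Node` and `MeanAgent` classes and the `dispatch` helper are hypothetical names, and a real mobile agent platform would also have to serialize the agent, authenticate it, and sandbox it on each host, none of which is shown here.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MeanAgent:
    """Hypothetical mobile agent that computes a global mean across nodes.

    Only this small summary state travels between nodes, never the raw records.
    """
    total: float = 0.0
    count: int = 0
    visited: List[str] = field(default_factory=list)

    def run_locally(self, node_name: str, records: List[float]) -> None:
        # Executed "on" the node, next to the data.
        self.total += sum(records)
        self.count += len(records)
        self.visited.append(node_name)

    def result(self) -> float:
        return self.total / self.count if self.count else 0.0


@dataclass
class Node:
    """A simulated server that keeps its records local."""
    name: str
    records: List[float]

    def host(self, agent: MeanAgent) -> None:
        # The node lets the visiting agent read its local records in place.
        agent.run_locally(self.name, self.records)


def dispatch(agent: MeanAgent, itinerary: List[Node]) -> MeanAgent:
    """Send the agent from node to node, then hand it back to the caller."""
    for node in itinerary:
        node.host(agent)
    return agent


if __name__ == "__main__":
    nodes = [Node("factory-a", [1.0, 2.0, 3.0]), Node("factory-b", [4.0, 5.0])]
    agent = dispatch(MeanAgent(), nodes)
    print(agent.visited, agent.result())
```

The design choice worth noticing is what the agent carries: two numbers and a list of node names, instead of every sensor reading from every site.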

Why it matters:

Privacy and speed are the two biggest challenges in 2026. This "moving code" logic is a clever solution among the 16 agent architectures explained in this article.

16. Robotic Agents (Embodied AI)

Robotic agents are AI systems that have a physical body. This architecture is unique because the agent must deal with "Physics": gravity, friction, and mechanical wear and tear. Their "brain" is directly connected to "actuators" (motors and pistons). In 2026, the focus for these agents is "Sim-to-Real" transfer, where an agent learns how to walk or grab objects in a virtual simulation and then transfers that knowledge to its physical body.

- Sensorimotor Integration: These agents must constantly sync their "thoughts" with their physical movements, processing thousands of data points per second from touch sensors, gyroscopes, and cameras to ensure they don't lose their balance or crush a delicate object they are trying to pick up.

- Physical Constraint Awareness: Unlike a chatbot, a robotic agent knows that its arm can only reach so far and that it has a limited amount of battery life, requiring a specialized type of "body-centric" reasoning that is entirely different from pure digital AI architectures.

- Sim-to-Real Transfer Learning: Developers use massive digital "universes" to train these agents in millions of different physical scenarios in a few hours, allowing the robot to "wake up" in the real world already knowing how to navigate a kitchen or fix a piece of machinery with little or no additional manual training.

- Actuator Control Precision: The architecture includes specialized "Low-Level Controllers" that translate a high-level command like "pick up the cup" into the exact electrical pulses needed to move twenty different motors in perfect harmony, resulting in fluid and human-like physical motion.

- Human-Robot Interaction (HRI) Safety: Because they occupy the same physical space as humans, these agents are built with "Collision Detection" and "Force Limiting" logic at the core of their architecture, ensuring that they instantly freeze or move away if they detect a human in their path, which minimizes the risk of injury.
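The low-level controller and the safety logic from the list above can be sketched as a single control tick. This is a deliberately simplified 1-D toy, not real robot firmware: the proportional gain, the force limit, the safe distance, and the function names are all assumptions chosen for illustration, and the "physics" is just position moving in proportion to the commanded force.

```python
def clamp(value: float, low: float, high: float) -> float:
    """Keep value inside the closed range [low, high]."""
    return max(low, min(high, value))


def control_step(
    target_pos: float,
    current_pos: float,
    human_distance_m: float,
    *,
    gain: float = 2.0,
    max_force: float = 5.0,
    safe_distance_m: float = 0.5,
) -> float:
    """Return the force command for one control tick."""
    # Safety rule first: freeze if a human is too close.
    if human_distance_m < safe_distance_m:
        return 0.0
    # Proportional control: push harder the further we are from the target.
    error = target_pos - current_pos
    # Force limiting: never exceed the allowed force in either direction.
    return clamp(gain * error, -max_force, max_force)


def simulate(
    target_pos: float,
    steps: int = 50,
    dt: float = 0.05,
    human_distance_m: float = 2.0,
) -> float:
    """Toy simulation: position changes in proportion to the commanded force."""
    pos = 0.0
    for _ in range(steps):
        pos += control_step(target_pos, pos, human_distance_m) * dt
    return pos


if __name__ == "__main__":
    print("clear path:", round(simulate(1.0), 3))
    print("human nearby:", simulate(1.0, human_distance_m=0.2))
```

Putting the safety check before the control math is the point of the sketch: the high-level command ("reach the target") never gets a chance to override the stop rule.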

Why it matters:

This is where AI meets the real world. This physical embodiment is the final and most visible entry in the 16 agent architectures explained in this guide.

Show Off Your Architecture Skills on Fueler

As you experiment with these different AI agent architectures, you need a way to prove your technical depth to potential employers or collaborators. Building a "Reactive Agent" is very different from building a "Utility-Based Multi-Agent System," and companies in 2026 want to see that you understand these nuances. Fueler is the perfect platform to document your "Proof of Work." Instead of just listing "AI" on your resume, you can showcase the specific architectural diagrams, the code snippets, and the reasoning behind why you chose a BDI architecture over a Simple Reflex model for your latest project.

Final Thoughts

Understanding these 16 agent architectures is the difference between being a "prompt engineer" and being an "AI Architect." As we move deeper into 2026, the demand for people who can design these complex, autonomous systems is skyrocketing. Whether you are building a simple bot to manage your emails or a swarm of drones to replant forests, the architecture you choose will be the foundation of your success. Start small, experiment with these patterns, and don't forget to showcase your progress as you master the art of building the next generation of intelligent systems.

FAQs

Which AI agent architecture is best for a beginner to learn?

The Simple Reflex Agent is the best place to start because it teaches you the basic "Input-Logic-Output" cycle without the complexity of memory or planning. Once you master that, moving to a Goal-Based Agent will help you understand how AI can "look ahead" to solve more complex problems.

What is the most common architecture used in industry today?

In 2026, Utility-Based Agents are the most common in commercial software because businesses always want to "optimize" for something, whether it is profit, speed, or customer satisfaction. They provide the best balance of goal-seeking and high-efficiency performance.

Can one agent use multiple architectures at the same time?

Yes, these are called Hybrid Agents. For example, a self-driving car might use a Reactive Architecture for emergency braking (fast) and a Goal-Based Architecture for deciding which turns to take to get to your destination (planning).
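A rough sketch of that self-driving example is shown below, with a reflex rule layered on top of a planner. The road graph, the 5-metre braking threshold, and the function names are hypothetical, and breadth-first search is used only because it is the simplest planner to read; a real vehicle would use something far more sophisticated.

```python
from collections import deque
from typing import Dict, List, Optional


def plan_route(graph: Dict[str, List[str]], start: str, goal: str) -> Optional[List[str]]:
    """Goal-based layer: breadth-first search for the route with the fewest hops."""
    frontier = deque([[start]])
    seen = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in graph.get(path[-1], []):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(path + [nxt])
    return None  # no route exists


def reflex_layer(obstacle_distance_m: float, brake_threshold_m: float = 5.0) -> Optional[str]:
    """Reactive layer: one condition-action rule, no memory, no planning."""
    if obstacle_distance_m < brake_threshold_m:
        return "EMERGENCY_BRAKE"
    return None


def drive_step(
    graph: Dict[str, List[str]],
    position: str,
    destination: str,
    obstacle_distance_m: float,
) -> str:
    """The fast reflex gets the first say; the planner runs only if it stays quiet."""
    reflex = reflex_layer(obstacle_distance_m)
    if reflex is not None:
        return reflex
    route = plan_route(graph, position, destination)
    if route is None:
        return "STOP_NO_ROUTE"
    if len(route) == 1:
        return "ARRIVED"
    return f"GO_TO:{route[1]}"


if __name__ == "__main__":
    roads = {"home": ["A", "B"], "A": ["office"], "B": ["C"], "C": ["office"]}
    print(drive_step(roads, "home", "office", obstacle_distance_m=50.0))
    print(drive_step(roads, "home", "office", obstacle_distance_m=2.0))
```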

Are these architectures specific to certain programming languages?

No, these are "logical patterns." You can build a BDI agent in Python, Java, or even C++. However, some ecosystems have better tooling for certain tasks, like Python's crewAI for Multi-Agent Systems or Soar, a standalone cognitive architecture framework rather than a Python library, for cognitive agents.

How do I decide which architecture to use for my project?

It depends on your "Environment" and your "Task." If the world is predictable and fast, use a Reflex Agent. If the world is messy and requires planning, use a Goal-Based Agent. If you need to make trade-offs between many different goals, a Utility-Based Agent is your best bet.
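To see what "making trade-offs between many different goals" looks like in practice, here is a minimal weighted-sum utility sketch. The shipping options, the scores, and the weights are made-up numbers for illustration only, and a weighted sum is just one simple way to build a utility function.

```python
from typing import Dict


def utility(option: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of an option's scores (each score is between 0 and 1)."""
    return sum(weight * option[name] for name, weight in weights.items())


def choose(options: Dict[str, Dict[str, float]], weights: Dict[str, float]) -> str:
    """Pick the option with the highest utility under the given weights."""
    return max(options, key=lambda name: utility(options[name], weights))


# Hypothetical scores: higher is always better, so cost is expressed as "cost_saving".
OPTIONS = {
    "express": {"speed": 0.9, "cost_saving": 0.2, "satisfaction": 0.8},
    "standard": {"speed": 0.5, "cost_saving": 0.8, "satisfaction": 0.6},
}

if __name__ == "__main__":
    speed_first = {"speed": 0.6, "cost_saving": 0.1, "satisfaction": 0.3}
    cost_first = {"speed": 0.1, "cost_saving": 0.7, "satisfaction": 0.2}
    print("speed-focused business picks:", choose(OPTIONS, speed_first))
    print("cost-focused business picks:", choose(OPTIONS, cost_first))
```

The options never change between the two runs; only the weights do, which is exactly how a utility-based agent lets a business tune what it is optimizing for.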

What is Fueler Portfolio?

Fueler is a career portfolio platform that helps companies find the best talent for their organization based on their proof of work. You can create your portfolio on Fueler. Thousands of freelancers around the world use Fueler to create their professional-looking portfolios and become financially independent.

Sign up for free on Fueler or get in touch to learn more.